Huggingface saving tokenizer

I am trying to save the tokenizer in Huggingface so that I can load it later from a container where I don't have access to the internet.

```python
from transformers import AutoTokenizer

BASE_MODEL = "distilbert-base-multilingual-cased"

tokenizer = AutoTokenizer.from_pretrained(BASE_MODEL)
tokenizer.save_vocabulary("./models/tokenizer/")

tokenizer2 = AutoTokenizer.from_pretrained("./models/tokenizer/")
```

However, the last line gives the error:

OSError: Can't load config for './models/tokenizer3/'. Make sure that:

- './models/tokenizer3/' is a correct model identifier listed on 'https://huggingface.co/models'

- or './models/tokenizer3/' is the correct path to a directory containing a config.json file

Transformers version: 3.1.0

Unfortunately, "How to load the saved tokenizer from pretrained model in Pytorch" didn't help.

EDIT 1

Thanks to @ashwin's answer below, I tried save_pretrained, and I get the following error:

OSError: Can't load config for './models/tokenizer/'. Make sure that:

- './models/tokenizer/' is a correct model identifier listed on 'https://huggingface.co/models'

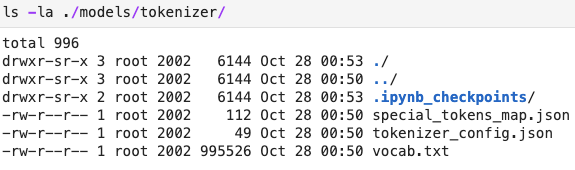

- or './models/tokenizer/' is the correct path to a directory containing a config.json file令牌程序文件夹的内容如下:

I tried renaming tokenizer_config.json to config.json, and then I got the error:

ValueError: Unrecognized model in ./models/tokenizer/. Should have a `model_type` key in its config.json, or contain one of the following strings in its name: retribert, t5, mobilebert, distilbert, albert, camembert, xlm-roberta, pegasus, marian, mbart, bart, reformer, longformer, roberta, flaubert, bert, openai-gpt, gpt2, transfo-xl, xlnet, xlm, ctrl, electra, encoder-decoder

3 Answers

Stack Overflow user

Posted on 2020-10-27 10:41:26

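save_vocabulary() saves only the tokenizer's vocabulary file (the token list; for this WordPiece-based DistilBERT tokenizer, vocab.txt).

To save the entire tokenizer, you should use save_pretrained().

Thus: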

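```python
import os

from transformers import AutoTokenizer, DistilBertTokenizer

BASE_MODEL = "distilbert-base-multilingual-cased"

tokenizer = AutoTokenizer.from_pretrained(BASE_MODEL)
os.makedirs("./models/tokenizer/", exist_ok=True)  # harmless if it already exists
tokenizer.save_pretrained("./models/tokenizer/")

tokenizer2 = DistilBertTokenizer.from_pretrained("./models/tokenizer/")
```

EDIT: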

For some unknown reason, instead of

```python
tokenizer2 = AutoTokenizer.from_pretrained("./models/tokenizer/")
```

using

```python
tokenizer2 = DistilBertTokenizer.from_pretrained("./models/tokenizer/")
```

works.
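A minimal offline sketch of the difference described in this answer, assuming the transformers 3.x/4.x slow-tokenizer API. To avoid downloading distilbert-base-multilingual-cased, it builds a DistilBertTokenizer from a hypothetical seven-token vocabulary (TOY_VOCAB is not part of any real model), and build_toy_tokenizer and save_both_ways are illustrative helper names. The same tokenizer is saved once with save_vocabulary() and once with save_pretrained(), and the second copy is reloaded with the concrete class.

```python
import os
import tempfile

from transformers import DistilBertTokenizer

# Hypothetical toy vocabulary: it only lets this sketch run offline and is
# not the vocabulary of distilbert-base-multilingual-cased.
TOY_VOCAB = ["[PAD]", "[UNK]", "[CLS]", "[SEP]", "[MASK]", "hello", "world"]


def build_toy_tokenizer(workdir: str) -> DistilBertTokenizer:
    """Build a DistilBertTokenizer from a local vocabulary file (no network)."""
    vocab_path = os.path.join(workdir, "toy_vocab.txt")
    with open(vocab_path, "w", encoding="utf-8") as f:
        f.write("\n".join(TOY_VOCAB) + "\n")
    return DistilBertTokenizer(vocab_file=vocab_path)


def save_both_ways(tokenizer: DistilBertTokenizer, root: str):
    """Save once with save_vocabulary() and once with save_pretrained()."""
    vocab_only_dir = os.path.join(root, "vocab_only")
    full_dir = os.path.join(root, "full")
    # Create the target directories up front; some versions expect them to exist.
    os.makedirs(vocab_only_dir, exist_ok=True)
    os.makedirs(full_dir, exist_ok=True)
    tokenizer.save_vocabulary(vocab_only_dir)  # writes the vocabulary file only
    tokenizer.save_pretrained(full_dir)        # vocabulary plus tokenizer config files
    return sorted(os.listdir(vocab_only_dir)), sorted(os.listdir(full_dir)), full_dir


with tempfile.TemporaryDirectory() as tmp:
    tok = build_toy_tokenizer(tmp)
    vocab_files, full_files, saved_dir = save_both_ways(tok, tmp)
    # The concrete class reads the tokenizer files directly and does not
    # need a model config.json in the directory.
    reloaded = DistilBertTokenizer.from_pretrained(saved_dir)
    ids = reloaded.encode("hello world")  # [CLS] hello world [SEP]

print("save_vocabulary():", vocab_files)
print("save_pretrained():", full_files)
print("reloaded ids:", ids)
```

With a real checkpoint, build_toy_tokenizer(...) would be replaced by AutoTokenizer.from_pretrained(BASE_MODEL). The exact set of files written by save_pretrained() can vary between versions, so the sketch only relies on vocab.txt and tokenizer_config.json being present.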

Stack Overflow user

Posted on 2021-04-07 00:18:40

Renaming the "tokenizer_config.json" file (the one created by the save_pretrained() function) to "config.json" solved the same problem in my environment.

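Stack Overflow user

Posted on 2021-05-16 16:13:51

You need to save both the model and the tokenizer in the same directory. HuggingFace is actually looking for the model's config.json file, so renaming tokenizer_config.json will not solve the issue.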
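To see why renaming tokenizer_config.json is not a reliable fix, here is a simplified, hypothetical re-creation in plain Python of the lookup implied by the two error messages in the question (transformers 3.1.0). It is not the library's code: resolve_model_type, KNOWN_NAME_STRINGS and demo are illustrative names, and the name list is only a subset of the one printed in the ValueError. A directory without config.json raises the OSError; a config.json without a model_type key falls back to searching the path for a known model name and raises the ValueError if none is found.

```python
import json
import os
import tempfile

# Subset of the model-type strings printed in the question's ValueError, kept
# in the same relative order (more specific names such as "distilbert" first).
KNOWN_NAME_STRINGS = ["distilbert", "albert", "roberta", "bert"]


def resolve_model_type(directory: str) -> str:
    """Simplified, hypothetical sketch of the config lookup; not library code."""
    config_path = os.path.join(directory, "config.json")
    if not os.path.isfile(config_path):
        # Corresponds to the OSError: no config.json in the directory.
        raise OSError(f"Can't load config for '{directory}'.")
    with open(config_path, encoding="utf-8") as f:
        config = json.load(f)
    if "model_type" in config:
        return config["model_type"]
    # No model_type key: fall back to matching a known name in the path string.
    for name in KNOWN_NAME_STRINGS:
        if name in directory:
            return name
    # Corresponds to the ValueError seen after renaming tokenizer_config.json.
    raise ValueError(f"Unrecognized model in {directory}.")


def demo() -> list:
    """Replay the three situations from this thread with the question's path."""
    results = []
    old_cwd = os.getcwd()
    with tempfile.TemporaryDirectory() as root:
        os.chdir(root)  # use a relative path like "./models/tokenizer/"
        try:
            tok_dir = os.path.join(".", "models", "tokenizer")
            os.makedirs(tok_dir)
            cfg = os.path.join(tok_dir, "config.json")
            # 1. Only tokenizer files, no config.json.
            try:
                resolve_model_type(tok_dir)
            except OSError:
                results.append("OSError")
            # 2. tokenizer_config.json renamed to config.json (no model_type key).
            with open(cfg, "w", encoding="utf-8") as f:
                json.dump({"do_lower_case": False}, f)
            try:
                resolve_model_type(tok_dir)
            except ValueError:
                results.append("ValueError")
            # 3. A model config.json that carries model_type.
            with open(cfg, "w", encoding="utf-8") as f:
                json.dump({"model_type": "distilbert"}, f)
            results.append(resolve_model_type(tok_dir))
        finally:
            os.chdir(old_cwd)  # restore cwd before the temp dir is removed
    return results


print(demo())  # → ['OSError', 'ValueError', 'distilbert']
```

Under this sketch, a renamed tokenizer_config.json never supplies model_type, so the outcome depends only on whether the path happens to contain a known model name; a config.json written by the model's own save_pretrained() includes model_type and avoids that ambiguity.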

https://stackoverflow.com/questions/64550503
