在SparkSQL中执行SparkSQL后获取所有行

在SparkSQL中执行SparkSQL后获取所有行

提问于 2020-12-02 18:18:53

我试着在SparkSQL中做group by,这很好,但是大多数行都丢失了。

spark.sql(

"""

| SELECT

| website_session_id,

| MIN(website_pageview_id) as min_pv_id

|

| FROM website_pageviews

| GROUP BY website_session_id

| ORDER BY website_session_id

|

|

|""".stripMargin).show(10,truncate = false)我得到了这样的输出:

+------------------+---------+

|website_session_id|min_pv_id|

+------------------+---------+

|1 |1 |

|10 |15 |

|100 |168 |

|1000 |1910 |

|10000 |20022 |

|100000 |227964 |

|100001 |227966 |

|100002 |227967 |

|100003 |227970 |

|100004 |227973 |

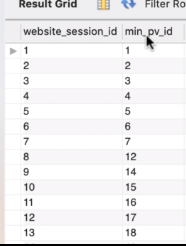

+------------------+---------+MySQL中的相同查询提供了如下所需的结果:

什么是最好的方法,以便在我的查询中获取所有行。

--请注意,,我已经检查了与此相关的其他答案,比如连接以获取所有行等等,但是我想知道是否还有其他方法可以得到类似于MySQL的结果?

回答 2

Stack Overflow用户

回答已采纳

发布于 2020-12-02 18:25:54

看起来它是按字母顺序排列的,在这种情况下,10出现在2之前。您可能需要检查列类型是数字,而不是字符串。这些列有哪些数据类型(printSchema())?

Stack Overflow用户

发布于 2020-12-02 18:27:17

我认为website_session_id是字符串类型的。将其转换为整数类型,并查看您得到了什么:

spark.sql(

"""

| SELECT

| CAST(website_session_id AS int) as website_session_id,

| MIN(website_pageview_id) as min_pv_id

|

| FROM website_pageviews

| GROUP BY website_session_id

| ORDER BY website_session_id

|

|

|""".stripMargin).show(10,truncate = false)页面原文内容由Stack Overflow提供。腾讯云小微IT领域专用引擎提供翻译支持

原文链接:

https://stackoverflow.com/questions/65113867

复制相关文章

相似问题