Product feature optimization with constraints


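Asked on 2020-12-20 17:08:09

I have trained a LightGBM model on a learning-to-rank dataset. The model predicts the relevance score of a sample, so the higher the prediction, the better. Now that the model is trained, I would like to find the values of some features that give me the highest prediction score.

So, let's say I have features u, v, w, x, y, z, and the features I would like to optimize over are x, y, z.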

maximize f(u,v,w,x,y,z) w.r.t features x,y,z where f is a lightgbm model

subject to constraints :

y = Ax + b

z = 4 if y < thresh_a else 4-0.5 if y >= thresh_b else 4-0.3

thresh_m < x <= thresh_n

The numbers are made up, but the constraints are linear.
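One way to read these constraints: y and z are both determined by x, so the search is effectively one-dimensional. The sketch below is only an illustration of that reading, using a plain grid search over x (a swapped-in technique, not what the accepted answer does). The constants and `toy_score`, which stands in for the model's prediction on one row, are hypothetical.

```python
import numpy as np

# Hypothetical constants; the question says its numbers are made up.
A, b = 5.0, 2.0
thresh_a, thresh_b = 5.0, 10.0
thresh_m, thresh_n = 4.0, 6.0


def y_from_x(x):
    """Equality constraint y = A*x + b."""
    return A * x + b


def z_from_y(y):
    """Piecewise rule for z as stated in the question."""
    if y < thresh_a:
        return 4.0
    if y >= thresh_b:
        return 4.0 - 0.5
    return 4.0 - 0.3


def best_x(score, fixed, n_points=201):
    """Grid-search x in (thresh_m, thresh_n]; `score` stands in for the model."""
    # Drop the left endpoint because the lower bound on x is strict.
    grid = np.linspace(thresh_m, thresh_n, n_points)[1:]
    rows = [(*fixed, x, y_from_x(x), z_from_y(y_from_x(x))) for x in grid]
    values = np.array([score(row) for row in rows])
    best = int(np.argmax(values))
    return rows[best], values[best]


def toy_score(row):
    """Hypothetical stand-in for model.predict on one row; peaks at x = 5."""
    u, v, w, x, y, z = row
    return -(x - 5.0) ** 2 + z


row, value = best_x(toy_score, fixed=(0.1, 0.2, 0.3))
print(row, value)
```

Because every candidate row is built from x alone, the equality constraints hold by construction and never have to be passed to an optimizer.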

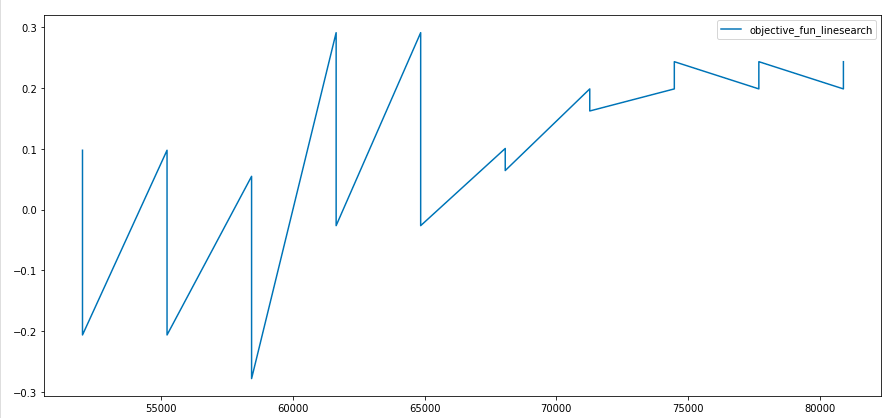

关于x的目标函数如下所示:

所以功能非常尖利,不光滑。我也没有梯度信息,因为f是lightgbm模型。

使用Nathan's answer,我写下了以下类:

    import numpy as np
    from scipy.optimize import minimize


    class ProductOptimization:
        def __init__(self, estimator, features_to_change, row_fixed_values,
                     bnds=None):
            self.estimator = estimator
            self.features_to_change = features_to_change
            self.row_fixed_values = row_fixed_values
            self.bounds = bnds

        def get_sample(self, x):
            # Substitute the candidate values into the fixed row.
            new_values = {k: v for k, v in zip(self.features_to_change, x)}
            return self.row_fixed_values.replace(
                {k: {self.row_fixed_values[k].iloc[0]: v}
                 for k, v in new_values.items()})

        def _call_model(self, x):
            pred = self.estimator.predict(self.get_sample(x))
            return pred[0]

        def constraint1(self, vector):
            x = vector[0]
            y = vector[2]
            return  # some float value

        def constraint2(self, vector):
            x = vector[0]
            y = vector[3]
            return  # some float value

        def optimize_slsqp(self, initial_values):
            con1 = {'type': 'eq', 'fun': self.constraint1}
            con2 = {'type': 'eq', 'fun': self.constraint2}
            cons = [con1, con2]
            result = minimize(fun=self._call_model,
                              x0=np.array(initial_values),
                              method='SLSQP',
                              bounds=self.bounds,
                              constraints=cons)
            return result

The result I get is always close to the initial guess. I think this is because the function is non-smooth and there is no gradient information, which matters for the SLSQP optimizer. Any advice on how I should handle this kind of problem?
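The stalling described above can be reproduced on a toy problem. The following is a minimal sketch, assuming a piecewise-constant `step_objective` as a hypothetical stand-in for a tree model (it is not LightGBM): a finite-difference gradient taken inside a flat region is zero, so a gradient-based method such as SLSQP sees no direction to move in, while a derivative-free coarse scan still finds a better plateau.

```python
import numpy as np
from scipy.optimize import minimize


def step_objective(x):
    """Piecewise-constant stand-in for a tree model (hypothetical, not LightGBM)."""
    return -float(np.floor(np.clip(x[0], 0.0, 10.0)))


start = np.array([2.5])
eps = 1e-8  # roughly the size of a default finite-difference step

# Forward difference: both evaluations land on the same plateau.
grad = (step_objective(start + eps) - step_objective(start)) / eps

slsqp = minimize(step_objective, start, method="SLSQP", bounds=[(0.0, 10.0)])

# A coarse scan uses no derivatives, so it can see the other plateaus.
grid = np.linspace(0.0, 10.0, 101)
scan_best = min(grid, key=lambda g: step_objective([g]))

print("finite-difference gradient:", grad)
print("SLSQP result:", slsqp.x, slsqp.fun)
print("coarse scan :", scan_best, step_objective([scan_best]))
```

The same reasoning applies to any method that estimates gradients numerically on a tree ensemble, which is why derivative-free methods are a natural thing to try here.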

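1 Answer

Stack Overflow user

Accepted answer

Answered on 2020-12-21 10:51:51

It's been a good while since I last wrote some serious code, so I apologize if it's not entirely clear what everything does; please feel free to ask for more explanation.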

The imports:

    from sklearn.ensemble import GradientBoostingRegressor
    import numpy as np
    from scipy.optimize import minimize
    from copy import copy

First I define a new class that allows me to easily redefine values. This class has 5 inputs:

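- value: the "base" value. In your example equation y = Ax + b, it is the b part.
- minimum: the minimum value this type will evaluate to.
- maximum: the maximum value this type will evaluate to.
- multipliers: the first tricky one. It is a list of pairs; in each pair the first item is another InputType object and the second is its multiplier. In your example y = Ax + b you would have [[x, A]]; if the equation were y = Ax + Bz + Cd, it would be [[x, A], [z, B], [d, C]].
- relations: the most tricky one. It is also a list built from other InputType objects, and each entry has four items: the first is the input type; the second defines the kind of boundary (use min for an upper boundary, max for a lower boundary); the third is the value of the boundary; the fourth is the output value connected to it.

Beware: if you define your input values too oddly, I'm sure there will be weird behaviour.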
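The boundary convention in the relations entry is easy to get backwards, so here it is in isolation. This is a minimal sketch with hypothetical helper names (`crosses`, `resolve`) that are not part of the answer's code; the thresholds and outputs are the ones the answer uses later for z.

```python
def crosses(bound_fn, current, threshold):
    """True when `current` is on the side of `threshold` that triggers a relation.

    bound_fn=max triggers for current < threshold (a lower boundary);
    bound_fn=min triggers for current > threshold (an upper boundary).
    """
    return bound_fn(current, threshold) != current


def resolve(current, relations, default):
    """Return the output of the first triggered relation, else `default`."""
    for bound_fn, threshold, output in relations:
        if crosses(bound_fn, current, threshold):
            return output
    return default


# (bound_fn, threshold, output_value), checked in order.
rules = [(max, 5, 4.0), (min, 10, 3.5), (max, 10.1, 3.7)]

print(resolve(3, rules, 0.0))   # 4.0: below the lower boundary 5
print(resolve(27, rules, 0.0))  # 3.5: above the upper boundary 10
print(resolve(7, rules, 0.0))   # 3.7: between 5 and 10
```

Both comparisons are strict, so a value exactly equal to a threshold does not trigger that relation; with these rules, 10 falls through to 3.7 rather than 3.5.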

    class InputType:
        # Immutable defaults avoid sharing one list between instances.
        def __init__(self, value=0, minimum=-1e99, maximum=1e99, multipliers=(), relations=()):
            """
            :param float value: base value
            :param float minimum: value can never be lower than x
            :param float maximum: value can never be higher than y
            :param multipliers: [[InputType, multiplier], [InputType, multiplier]]
            :param relations: [[InputType, min, threshold, output_value], [InputType, max, threshold, output_value]]
            """
            self.val = value
            self.min = minimum
            self.max = maximum
            self.multipliers = multipliers
            self.relations = relations

        def reset_val(self, value):
            self.val = value

        def evaluate(self):
            """
            - relations to other variables are done first if there are none then the rest is evaluated
            - at most self.max
            - at least self.min
            - self.val + i_x * w_x
            i_x is input i, w_x is multiplier (weight) of i
            """
            for term, min_max, value, output_value in self.relations:
                # check for each term if it falls outside of the expected terms
                current = term.evaluate()
                if min_max(current, value) != current:
                    return self.return_value(output_value)
            output_value = self.val + sum(i[0].evaluate() * i[1] for i in self.multipliers)
            return self.return_value(output_value)

        def return_value(self, output_value):
            # Clip to [self.min, self.max].
            return min(self.max, max(self.min, output_value))

With this, you can fix the input types as they are sent from the optimizer, as seen in _call_model:

    class Example:
        def __init__(self, lst_args):
            self.lst_args = lst_args
            self.X = np.random.random((10000, len(lst_args)))
            self.y = self.get_y()
            self.clf = GradientBoostingRegressor()
            self.fit()

        def get_y(self):
            # sum of squares, is minimum at x = [0, 0, 0, 0, 0 ... ]
            # 1-D target, which is the shape scikit-learn expects for y
            return np.array([self._func(i) for i in self.X])

        def _func(self, i):
            return sum(i * i)

        def fit(self):
            self.clf.fit(self.X, self.y)

        def optimize(self):
            x0 = [0.5 for i in self.lst_args]
            initial_simplex = self._get_simplex(x0, 0.1)
            result = minimize(fun=self._call_model,
                              x0=np.array(x0),
                              method='Nelder-Mead',
                              options={'xatol': 0.1,
                                       'initial_simplex': np.array(initial_simplex)})
            return result

        def _get_simplex(self, x0, step):
            # n points shifted down by `step` along one axis each,
            # plus one point shifted up along the last axis: n + 1 in total
            simplex = []
            for i in range(len(x0)):
                point = copy(x0)
                point[i] -= step
                simplex.append(point)
            point2 = copy(x0)
            point2[-1] += step
            simplex.append(point2)
            return simplex

        def _call_model(self, x):
            print(x, type(x))  # debug output on every evaluation
            # push the optimizer's raw values into the inputs, then let each
            # input apply its own bounds, multipliers and relations
            for i, value in enumerate(x):
                self.lst_args[i].reset_val(value)
            input_x = np.array([i.evaluate() for i in self.lst_args])
            prediction = self.clf.predict([input_x])
            return prediction[0]

I can define your problem as shown below (be sure to define the inputs in the same order as the final list, otherwise not all values will get updated correctly in the optimizer!):

    A = 5
    b = 2
    thresh_a = 5
    thresh_b = 10
    thresh_c = 10.1
    thresh_m = 4
    thresh_n = 6

    u = InputType()
    v = InputType()
    w = InputType()
    x = InputType(minimum=thresh_m, maximum=thresh_n)
    y = InputType(value=b, multipliers=[[x, A]])
    z = InputType(relations=[[y, max, thresh_a, 4], [y, min, thresh_b, 3.5], [y, max, thresh_c, 3.7]])

    example = Example([u, v, w, x, y, z])

Call the results like this:

    result = example.optimize()
    for i, value in enumerate(result.x):
        example.lst_args[i].reset_val(value)
    print(f"final values are at: {[i.evaluate() for i in example.lst_args]}: {result.fun}")

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/65382539
