Google \ StreamingDetectIntent does not process audio after matching the first intent

Asked on 2021-09-17 13:53:21

Environment details

- OS: Windows 10, 11; Debian 9 (stretch)

- Node.js version: 12.18.3, 12.22.1

- npm version: 7.19.0, 7.15.0

- @google-cloud/dialogflow-cx version: 2.13.0

Problem

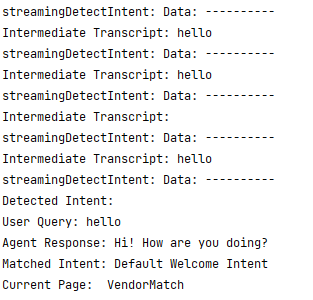

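StreamingDetectIntent stops processing audio after the first intent is matched. I can see the transcription, and the first intent is matched correctly. After that match, audio keeps streaming, but I receive no further transcripts and the on('data') callback never fires again. In short, nothing happens after the first intent is matched.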

One more observation: if I end detectStream and then re-initialize it, it works as expected.
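The workaround described above (end the stream, then create a new one) can be sketched roughly as follows. This is only an illustration of that workaround, not a confirmed fix or an official pattern: `createTurnManager`, `createStream`, and `onResult` are hypothetical names introduced here, and with the real client `createStream` would presumably be something like `() => client.streamingDetectIntent()`.

```javascript
// Sketch: open a fresh stream for every conversational turn.
// `createStream` and `onResult` are hypothetical, caller-supplied callbacks.
// With the real client, createStream could be () => client.streamingDetectIntent().
function createTurnManager(createStream, initialRequest, onResult) {
  let current = null;
  let stopped = false;

  function startTurn() {
    const stream = createStream();
    current = stream;
    stream.on('error', console.error);
    stream.on('data', data => {
      // Interim transcripts mean the turn is still in progress.
      if (data.recognitionResult) return;
      if (!data.detectIntentResponse) return;
      onResult(data.detectIntentResponse);
      // The turn is over: end this stream and open a new one for the next utterance.
      stream.end();
      if (!stopped && stream === current) startTurn();
    });
    // The first message on each stream carries the session and audio config, no audio.
    stream.write(initialRequest);
  }

  startTurn();

  return {
    // Forward one audio chunk to whichever stream is currently open.
    writeAudio(chunk) {
      if (!stopped) current.write({queryInput: {audio: {audio: chunk}}});
    },
    // End the current stream and stop opening new ones.
    stop() {
      stopped = true;
      current.end();
    },
  };
}
```

With this shape, the microphone or file stream would call `writeAudio(chunk)` for each chunk instead of being piped directly into a single long-lived detectStream, so ending one turn's stream does not tear down the audio source.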

Steps to reproduce

I tried both `const {SessionsClient} = require("@google-cloud/dialogflow-cx");` and `const {SessionsClient} = require("@google-cloud/dialogflow-cx").v3;`

    // Create a stream for the streaming request.
    const detectStream = client
      .streamingDetectIntent()
      .on('error', console.error)
      .on('end', () => {
        // The 'end' event carries no payload, so there is nothing to serialize.
        console.log('streamingDetectIntent: -----End-----');
      })
      .on('data', data => {
        console.log('streamingDetectIntent: Data: ----------');
        if (data.recognitionResult) {
          // Interim speech recognition result.
          console.log(`Intermediate Transcript: ${data.recognitionResult.transcript}`);
        } else {
          // Skip messages that carry neither a transcript nor a final response.
          if (!data.detectIntentResponse) return;
          console.log('Detected Intent:');
          const result = data.detectIntentResponse.queryResult;
          console.log(`User Query: ${result.transcript}`);
          for (const message of result.responseMessages) {
            if (message.text) {
              console.log(`Agent Response: ${message.text.text}`);
            }
          }
          if (result.match.intent) {
            console.log(`Matched Intent: ${result.match.intent.displayName}`);
          }
          console.log(`Current Page: ${result.currentPage.displayName}`);
        }
      });

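    // The first request carries only the session and the audio configuration.
    const initialStreamRequest = {
      session: sessionPath,
      queryInput: {
        audio: {
          config: {
            audioEncoding: encoding,
            sampleRateHertz: sampleRateHertz,
            singleUtterance: true,
          },
        },
        languageCode: languageCode,
      },
    };

    detectStream.write(initialStreamRequest);

I tried streaming audio both from a file (.wav) and from a microphone, but the behavior is the same in both cases.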

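    // Assumed definition of pump (a promisified stream.pipeline); the question does not show it.
    const {Transform, pipeline} = require('stream');
    const {promisify} = require('util');
    const pump = promisify(pipeline);

    // Must run inside an async function.
    await pump(
      recordingStream, // microphone stream, or fs.createReadStream(audioFileName)
      // Format the audio stream into the request format.
      new Transform({
        objectMode: true,
        transform: (obj, _, next) => {
          next(null, {queryInput: {audio: {audio: obj}}});
        },
      }),
      detectStream
    );

I also consulted this implementation and this RPC-based documentation, but found no reason why this should not work.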
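For reference, the message order used in the snippets above (one request with only the session and audio config, followed by requests that carry only audio bytes) can be written as a small generator. This is just a sketch of what the question's code already does; `buildStreamingRequests` is a hypothetical helper and not part of @google-cloud/dialogflow-cx.

```javascript
// Sketch of the message order used in the question:
// one config-only request first, then one audio-only request per chunk.
// `buildStreamingRequests` is a hypothetical helper, not a library function.
function* buildStreamingRequests(sessionPath, audioConfig, languageCode, audioChunks) {
  // First message: session and audio configuration, no audio bytes.
  yield {
    session: sessionPath,
    queryInput: {
      audio: {config: audioConfig},
      languageCode,
    },
  };
  // Subsequent messages: audio bytes only.
  for (const chunk of audioChunks) {
    yield {queryInput: {audio: {audio: chunk}}};
  }
}

// Possible usage with the stream from the question:
// for (const request of buildStreamingRequests(sessionPath, config, languageCode, chunks)) {
//   detectStream.write(request);
// }
```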

Thanks!

1 Answer

Stack Overflow user

Accepted answer

Posted on 2021-09-20 12:29:50

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/69224606
