Is there a way to reduce memory usage in my TensorFlow CNN model?

Asked on 2022-11-14 07:57:23

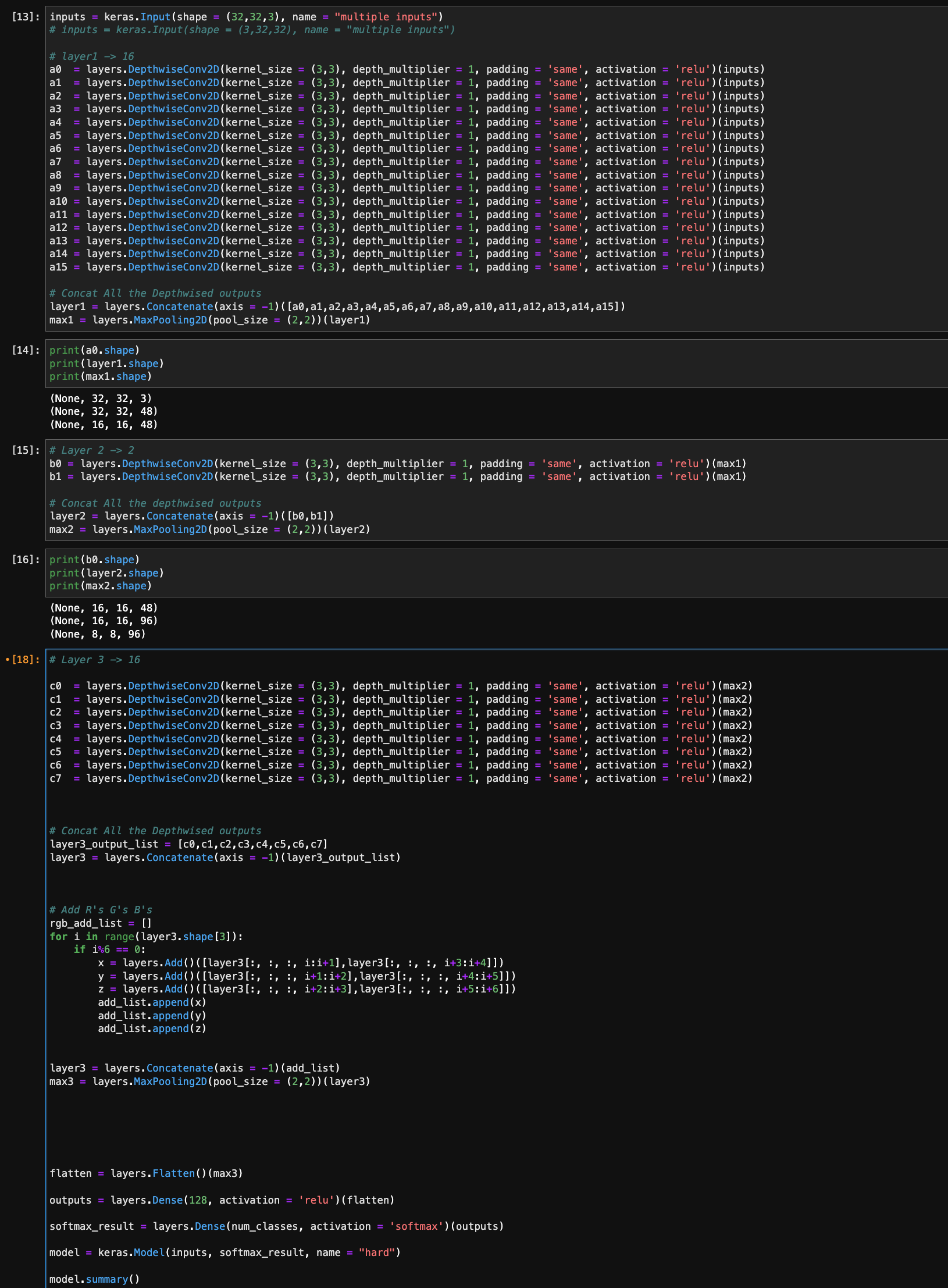

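Hi, I'm currently building a new model and I'm struggling with memory. I am summing the outputs of the previous layers, but this takes a lot of computation, I think because it keeps allocating new memory.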

print(max2.shape) = (None, 8, 8, 96)

...

c6 = layers.DepthwiseConv2D(kernel_size = (3,3), depth_multiplier = 1, padding = 'same', activation = 'relu')(max2)
c7 = layers.DepthwiseConv2D(kernel_size = (3,3), depth_multiplier = 1, padding = 'same', activation = 'relu')(max2)

# Concat All the Depthwised outputs
layer3_output_list = [c0,c1,c2,c3,c4,c5,c6,c7]
layer3 = layers.Concatenate(axis = -1)(layer3_output_list)

# Add R's G's B's
add_list = []
for i in range(layer3.shape[3]):
    if i%6 == 0:
        x = layers.Add()([layer3[:, :, :, i:i+1],layer3[:, :, :, i+3:i+4]])
        y = layers.Add()([layer3[:, :, :, i+1:i+2],layer3[:, :, :, i+4:i+5]])
        z = layers.Add()([layer3[:, :, :, i+2:i+3],layer3[:, :, :, i+5:i+6]])
        add_list.append(x)
        add_list.append(y)
        add_list.append(z)
layer3 = layers.Concatenate(axis = -1)(add_list)

print(layer3.shape) = (None, 8, 8, 768)

max3 = layers.MaxPooling2D(pool_size = (2,2))(layer3)
flatten = layers.Flatten()(max3)

Is there a way to reduce the memory usage? Do I have to write a custom layer for this?

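Also, to explain the for loop: using the result layer3, I want to sum certain channels with each other (Add(layer3, layer3)) and concatenate the resulting channels.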

Currently I am summing channels (i, i+3), (i+1, i+4), and (i+2, i+5), but I think I will change how I sum them later.
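To make the pairing concrete, here is a small pure-Python sketch that lists which channel indices the loop above adds together, in the order they are appended. The function name `summed_channel_pairs` and its parameters are hypothetical, introduced only for illustration.

```python
def summed_channel_pairs(num_channels, group=6, offset=3):
    """Return the (a, b) channel index pairs in the order the loop appends them."""
    pairs = []
    # The loop only acts when i % 6 == 0, i.e. once per block of `group` channels.
    for i in range(0, num_channels, group):
        # Within a block, channel i+k is added to channel i+k+offset (x, y, z).
        for k in range(offset):
            pairs.append((i + k, i + k + offset))
    return pairs


pairs = summed_channel_pairs(768)
print(pairs[:6])   # [(0, 3), (1, 4), (2, 5), (6, 9), (7, 10), (8, 11)]
print(len(pairs))  # 384
```

With 768 input channels this gives 384 pairs, so the loop builds 384 separate Add layers fed by 768 one-channel slices. That graph size is a plausible source of the overhead described, although it has not been measured here.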

2 Answers

Stack Overflow user

Posted on 2022-11-16 05:14:51

I created a new layer that performs the channel addition:

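import tensorflow as tf

class ChannelAdd(tf.keras.layers.Layer):
    def __init__(self, offset=3, **kwargs):
        super(ChannelAdd, self).__init__(**kwargs)
        self.offset = offset

    def call(self, inputs):
        # Channel c of s_ch holds inputs[c] + inputs[c + offset] (wrapping around at the end)
        s_ch = inputs + tf.roll(inputs, shift=-self.offset, axis=-1)
        # Keep only the first three sums of every block of 6 channels
        x = s_ch[:,:,:,::6]
        y = s_ch[:,:,:,1::6]
        z = s_ch[:,:,:,2::6]
        # Interleave x, y, z so the channel order is x0, y0, z0, x1, y1, z1, ...
        concat = tf.concat([x[...,tf.newaxis], y[...,tf.newaxis], z[...,tf.newaxis]], axis=-1)
        concat = tf.reshape(concat, [tf.shape(inputs)[0],tf.shape(inputs)[1],tf.shape(inputs)[2],-1])
        return concat

Model: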

from tensorflow import keras

layer3 = keras.Input(shape=(8,8,768))
xyz = ChannelAdd()(layer3)
model = keras.Model(inputs=layer3, outputs=xyz)

Testing the model:

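Create a special input array that is easy to test, in which every channel holds its own channel number: channel 0 is all 0s, channel 1 is all 1s, and so on.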

a = tf.zeros(shape=(5,8,8,1))
inputs = tf.concat([a,a+1,a+2], axis=-1)
for i in range(255):
    inputs = tf.concat([inputs, a+3*(i+1),a+3*(i+1)+1,a+3*(i+1)+2], axis=-1)

Output:

outputs = model(inputs)
outputs.shape
#[5, 8, 8, 384]

outputs[:,:,:,:6]
#check first 6 channels
array([[[[ 3., 5., 7., 15., 17., 19.],
         [ 3., 5., 7., 15., 17., 19.],
         [ 3., 5., 7., 15., 17., 19.],
         ...,

Stack Overflow user

Posted on 2022-11-17 10:16:18

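As far as I understand the problem, what you are doing is adding matching channels together (R to R, then G to G, and so on). My code does almost the same thing as yours, but with a different strategy: first we split the input into groups of 3 channels (R, G, B), then we add those groups so that the channels line up (R->R, G->G, B->B). The result is nearly the same as your code, but without the for loop.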

Suppose I have an input x:

x = tf.random.normal((1,80,80,768))

# First, split the input into 256 groups of 3 channels, following the RGB sequence
splitted_image = tf.split(x, 256, axis=-1)

# Check the shape of one group
print(splitted_image[0].shape)
TensorShape([1, 80, 80, 3])

# Now add the groups pairwise so that the channels map R->R, G->G, B->B
add_ = tf.keras.layers.Add()([tf.cast(splitted_image[::2], dtype=tf.float32), tf.cast(splitted_image[1::2], dtype=tf.float32)])

# The shape of the output tensor is now
print(add_.shape)
TensorShape([128, 1, 80, 80, 3])

# Split along the last axis (size 3) and concatenate the pieces along axis 0
concatenated_output = tf.concat(tf.split(add_, 3, axis=-1), axis=0)

# The shape of concatenated_output is
print(concatenated_output.shape)
TensorShape([384, 1, 80, 80, 1])

# Rearrange the axes using tf.transpose
tf.transpose(concatenated_output, perm=[1,2,3,0,4])

# Comparing the first output channel of your method with the first channel of mine gives the same result
tf.transpose(concatenated_output, perm=[1,2,3,0,4])[0,:,:,0,0] == layer3[0,:,:,0]
<tf.Tensor: shape=(80, 80), dtype=bool, numpy=
array([[ True, True, True, ..., True, True, True],
       [ True, True, True, ..., True, True, True],
       [ True, True, True, ..., True, True, True],
       ...,
       [ True, True, True, ..., True, True, True],
       [ True, True, True, ..., True, True, True],
       [ True, True, True, ..., True, True, True]])>

Implementation using Keras layers:

# For your model, in one go
split_layer = tf.keras.layers.Lambda(lambda x : tf.split(x, 256, axis=-1))(layer3)
add_layer = tf.keras.layers.Add()([tf.cast(split_layer[::2], dtype=tf.float32),
                                   tf.cast(split_layer[1::2], dtype=tf.float32)])
add_layer = tf.keras.layers.Lambda(lambda x : tf.split(x, 3 , axis=-1))(add_layer)
layer3 = tf.keras.layers.concatenate(add_layer, axis=0)
layer3 = tf.keras.layers.Lambda(lambda x : tf.transpose(x , perm=[1,2,3,0,4])[:,:,:,:,0])(layer3)

Output:

<KerasTensor: shape=(None, 80, 80, 384) dtype=float32 (created by layer 'lambda_1')>

For 2 iterations, your method takes 23 secs and mine takes 1.7 secs.
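The same pairwise sum can also be written as a single reshape followed by a reduction. The sketch below uses NumPy so it runs without building a model; the names `pairwise_channel_sum` and `loop_reference` are hypothetical. It assumes the pairing from the question, (i, i+3), (i+1, i+4), (i+2, i+5) within each block of 6 channels, and it is not a benchmarked replacement. Note that the split-and-concat version above orders the output channels as 128k+g (all first-of-triple sums, then all second, then all third), whereas the question's loop orders them as 3g+k; the reshape below keeps the loop's order.

```python
import numpy as np


def pairwise_channel_sum(x, group=6):
    """Add channel c to channel c + group // 2 inside every block of `group` channels."""
    half = group // 2
    *lead, channels = x.shape
    assert channels % group == 0, "channel count must be a multiple of `group`"
    # (..., C) -> (..., C // group, 2, half): axis -2 separates the two halves of a block
    blocks = x.reshape(*lead, channels // group, 2, half)
    # Adding the two halves gives (..., C // group, half)
    summed = blocks.sum(axis=-2)
    # Flatten back to (..., C // 2); the order is x0, y0, z0, x1, y1, z1, ...
    return summed.reshape(*lead, channels // 2)


def loop_reference(x, group=6):
    """Straightforward loop with the same pairing as the question, for comparison."""
    half = group // 2
    parts = []
    for i in range(0, x.shape[-1], group):
        for k in range(half):
            parts.append(x[..., i + k] + x[..., i + k + half])
    return np.stack(parts, axis=-1)


# Test input where every channel holds its own channel number
x = np.zeros((5, 8, 8, 768), dtype=np.float32) + np.arange(768, dtype=np.float32)
out = pairwise_channel_sum(x)
print(out.shape)                              # (5, 8, 8, 384)
print(out[0, 0, 0, :6])                       # [ 3.  5.  7. 15. 17. 19.]
print(np.array_equal(out, loop_reference(x)))  # True
```

In TensorFlow the same two steps map onto `tf.reshape` and `tf.reduce_sum(..., axis=-2)`. Inside a Keras layer the batch dimension is usually unknown, so the reshape target would need `tf.shape(inputs)` or a `-1` entry rather than static sizes.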

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/74428469
