打印步骤打印错误,sess.run(w),sess.run(b)

打印步骤打印错误,sess.run(w),sess.run(b)

提问于 2022-10-08 03:33:23

https://gist.github.com/tomonari-masada/ed2fbc94a9f6252036eea507b7119045

我试图在JupyterLab笔记本中运行上面的代码,但是打印错误:打印步骤、sess.run(w)、sess.run(b)。

请提供帮助和建议。

谢谢

回答 1

Stack Overflow用户

发布于 2022-10-08 06:12:38

这在许多方面都是可能的。考虑优化问题。(二)。会话变量。

使用模型拟合或评估方法,他们创建了本地步骤和全局步骤,但简单地使用局部变量优化,您可以使用全局步骤或记录作为历史记录,此时,步骤培训可能不会指出真正的真相,因为您执行单个函数,然后全局步骤覆盖规则。

示例1:优化器问题。

import os

from os.path import exists

import tensorflow as tf

import tensorflow_io as tfio

import matplotlib.pyplot as plt

import numpy as np

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Variables

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

learning_rate = 0.001

global_step = 0

tf.compat.v1.disable_eager_execution()

history = [ ]

history_Y = [ ]

scale = 1.0

sigma = 1.0

min_size = 1.0

# logdir = 'F:\\models\\log'

# savedir = 'F:\\models\\save'

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Class / Function

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

class MyDenseLayer(tf.keras.layers.Layer):

def __init__(self, num_outputs, num_add):

super(MyDenseLayer, self).__init__()

self.num_outputs = num_outputs

self.num_add = num_add

def build(self, input_shape):

self.kernel = self.add_weight("kernel",

shape=[int(input_shape[-1]),

self.num_outputs])

def call(self, inputs):

temp = tf.add( inputs, self.num_add )

temp = tf.matmul(temp, self.kernel)

return temp

#####################################################################################################

class SeqmentationOptimization(tf.keras.layers.Layer):

def __init__(self):

super(SeqmentationOptimization, self).__init__()

scale_init = tf.keras.initializers.RandomUniform(minval=10, maxval=1000, seed=None)

sigma_init = tf.keras.initializers.RandomUniform(minval=0.001, maxval=1, seed=None)

min_size_init = tf.keras.initializers.RandomUniform(minval=10, maxval=1000, seed=None)

self.scale = self.add_weight(shape=[1],

initializer = scale_init,

trainable=True)

self.sigma = self.add_weight(shape=[1],

initializer = sigma_init,

trainable=True)

self.min_size = self.add_weight(shape=[1],

initializer = min_size_init,

trainable=True)

def call(self, inputs):

objects = Segmentation(self.scale , self.sigma , self.min_size ).objects

return

class Segmentation( ):

def __init__( self, scale , sigma , min_size ):

print( 'start __init__: ' )

self.scale = scale

self.sigma = sigma

self.min_size = min_size

scale = tf.compat.v1.get_variable('scale', dtype = tf.float32, initializer = tf.random.normal((1, 10, 1)))

sigma = tf.compat.v1.get_variable('sigma', dtype = tf.float32, initializer = tf.random.normal((1, 10, 1)))

min_size = tf.compat.v1.get_variable('min_size', dtype = tf.float32, initializer = tf.random.normal((1, 10, 1)))

Z = tf.nn.l2_loss( ( scale - sigma ) +( scale - min_size ) , name="loss")

loss = tf.reduce_mean(input_tensor=tf.square(Z))

optimizer = tf.compat.v1.train.ProximalAdagradOptimizer(

learning_rate,

initial_accumulator_value=0.1,

l1_regularization_strength=0.2,

l2_regularization_strength=0.1,

use_locking=False,

name='ProximalAdagrad'

)

training_op = optimizer.minimize(loss)

self.loss = loss

self.scale = scale

self.sigma = sigma

self.min_size = min_size

self.training_op = training_op

return

def create_loss_fn( self ):

print( 'start create_loss_fn: ' )

return self.loss, self.scale, self.sigma, self.min_size, self.training_op

X = np.reshape([ 500, -400, 400, -300, 300, -200, 200, -100, 100, 1 ], (1, 10, 1))

Y = np.reshape([ -400, 400, -300, 300, -200, 200, -100, 100, -50, 50 ], (1, 10, 1))

Z = np.reshape([ -100, 200, -300, 300, -400, 400, -500, 500, -50, 50 ], (1, 10, 1))

loss_segmentation = Segmentation( scale , sigma , min_size )

loss, scale, sigma, min_size, training_op = loss_segmentation.create_loss_fn( )

with tf.compat.v1.Session() as sess:

sess.run(tf.compat.v1.global_variables_initializer())

for i in range(1000):

global_step = global_step + 1

train_loss, temp = sess.run([loss, training_op], feed_dict={scale:X, sigma:Y, min_size:Z})

history.append(train_loss)

history_Y.append( history[0] - train_loss )

print( 'steps: ' + str(i) )

sess.close()

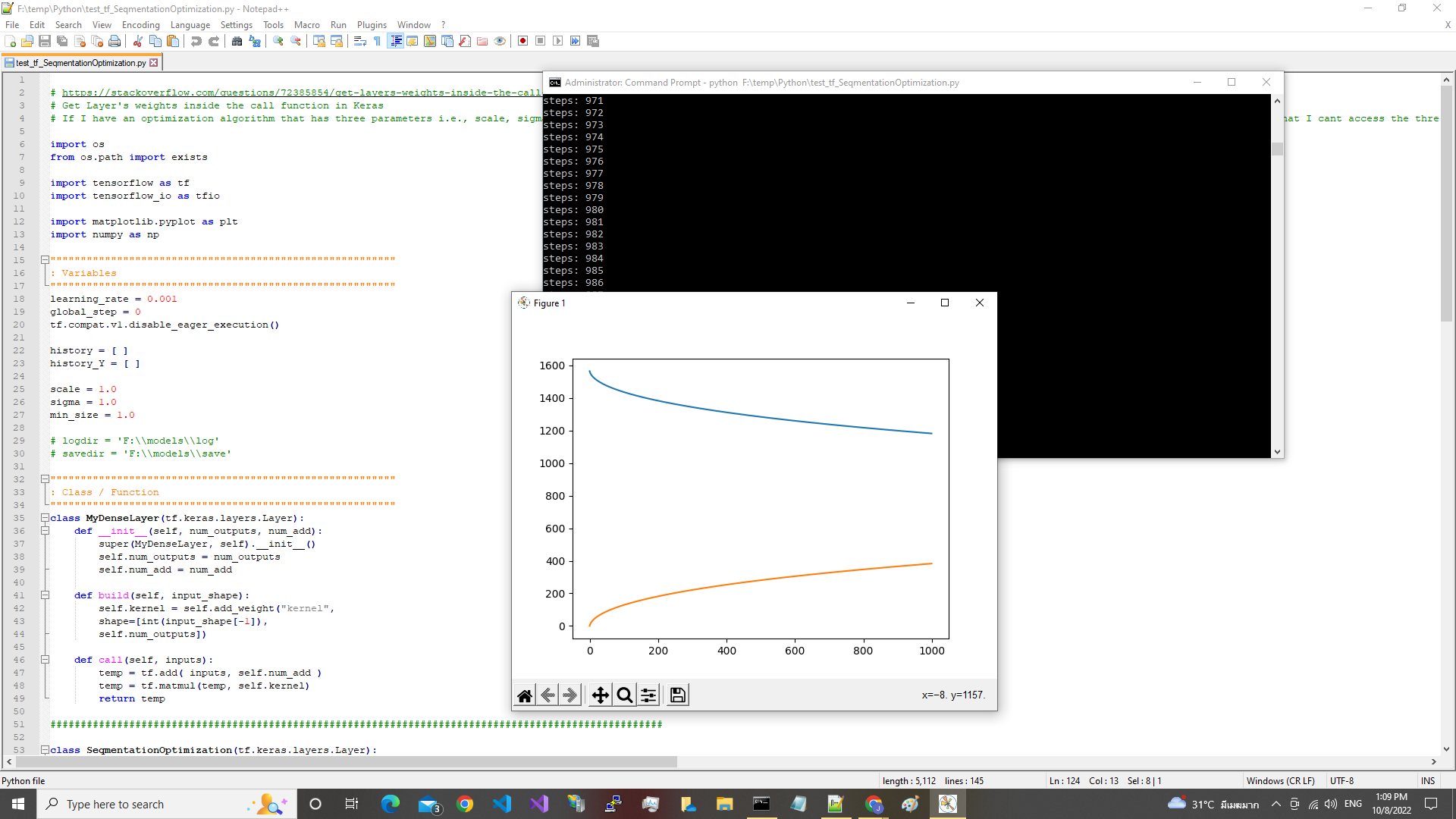

plt.plot(np.asarray(history))

plt.plot(np.asarray(history_Y))

plt.show()

plt.close()

input('...')示例2:优化变量

import tensorflow as tf

import numpy as np

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Variables

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

learning_rate = 0.001

global_step = 0

tf.compat.v1.disable_eager_execution()

start = 3

limit = 33

delta = 3.0

inputs = tf.range(start, limit, delta)

inputs = tf.expand_dims(inputs, axis=0)

inputs = tf.expand_dims(inputs, axis=0)

lstm = tf.keras.layers.LSTM(4)

output = lstm(inputs)

print(output.shape)

print(output)

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

: Training

"""""""""""""""""""""""""""""""""""""""""""""""""""""""""

optimizer = tf.compat.v1.train.ProximalAdagradOptimizer(

learning_rate,

initial_accumulator_value=0.1,

l1_regularization_strength=0.2,

l2_regularization_strength=0.1,

use_locking=False,

name='ProximalAdagrad'

)

var1 = tf.Variable(10.0)

var2 = tf.Variable(10.0)

X_var = tf.compat.v1.get_variable('X', dtype = tf.float32, initializer = tf.random.normal((1, 10, 1)))

y_var = tf.compat.v1.get_variable('Y', dtype = tf.float32, initializer = tf.random.normal((1, 10, 1)))

Z = tf.nn.l2_loss((var1 - X_var) ** 2 + (var2 - y_var) ** 2, name="loss")

cosine_loss = tf.keras.losses.CosineSimilarity(axis=1)

loss = tf.reduce_mean(input_tensor=tf.square(Z))

training_op = optimizer.minimize(cosine_loss(X_var, y_var))

X = np.reshape([ 500, -400, 400, -300, 300, -200, 200, -100, 100, 1 ], (1, 10, 1))

Y = np.reshape([ -400, 400, -300, 300, -200, 200, -100, 100, -50, 50 ], (1, 10, 1))

with tf.compat.v1.Session() as sess:

sess.run(tf.compat.v1.global_variables_initializer())

for i in range(1000):

global_step = global_step + 1

train_loss, temp = sess.run([loss, training_op], feed_dict={X_var:X, y_var:Y})

print( 'train_loss: ' + str( train_loss ) )

with tf.compat.v1.variable_scope("dekdee", reuse=tf.compat.v1.AUTO_REUSE):

print( X_var.eval() )

sess.close()

input('...')

页面原文内容由Stack Overflow提供。腾讯云小微IT领域专用引擎提供翻译支持

原文链接:

https://stackoverflow.com/questions/73994175

复制相关文章

相似问题