How can I make kornia's HomographyWarper behave like OpenCV's warpPerspective?

Asked 2022-09-27 15:23:29

As the title says, I want to use kornia's HomographyWarper so that it produces the same output as OpenCV's warpPerspective.

import cv2

import numpy as np

import torch

from kornia.geometry.transform import HomographyWarper

from kornia.geometry.conversions import normalize_pixel_coordinates

image_cv = cv2.imread("./COCO_train2014_000000000009.jpg")

image_cv = image_cv[0:256, 0:256]

image = torch.tensor(image_cv).permute(2, 0, 1)

image = image.to('cuda:0')

image_reshaped = image.type(torch.float32).view(1, *image.shape)

height, width, _ = image_cv.shape

homography_0_1 = torch.tensor([[ 7.8783e-01, 3.6705e-02, 2.5139e+02],
                               [ 1.6186e-02, 1.0893e+00, -2.7614e+01],
                               [-4.3304e-04, 7.6681e-04, 1.0000e+00]], device='cuda:0',
                              dtype=torch.float64)

homography_0_2 = torch.tensor([[ 7.8938e-01, 3.5727e-02, 1.5221e+02],
                               [ 1.8347e-02, 1.0921e+00, -2.8547e+01],
                               [-4.3172e-04, 7.7596e-04, 1.0000e+00]], device='cuda:0',
                              dtype=torch.float64)

transform_h1_h2 = torch.linalg.inv(homography_0_1).matmul(
    homography_0_2).type(torch.float32).view(1, 3, 3)

homography_warper_1_2 = HomographyWarper(height, width, padding_mode='zeros', normalized_coordinates=True)

warped_image_torch = homography_warper_1_2(image_reshaped, transform_h1_h2)

warped_image_1_2_cv = cv2.warpPerspective(
    image_cv,
    transform_h1_h2.cpu().numpy().squeeze(),
    dsize=(width, height),
    borderMode=cv2.BORDER_REFLECT101,
)

cv2.namedWindow("original image")

cv2.imshow("original image", image_cv)

cv2.imshow("OpenCV warp", warped_image_1_2_cv)

cv2.imshow("Kornia warp", warped_image_torch.squeeze().permute(1, 2, 0).cpu().numpy())

cv2.waitKey(0)

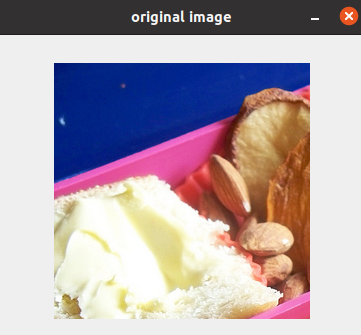

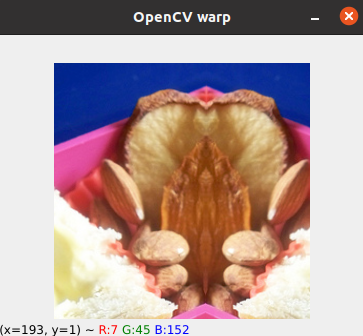

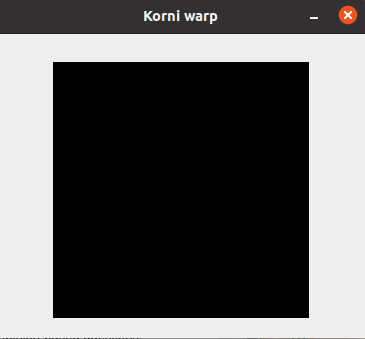

cv2.destroyAllWindows()通过上面的代码,我得到了以下输出:

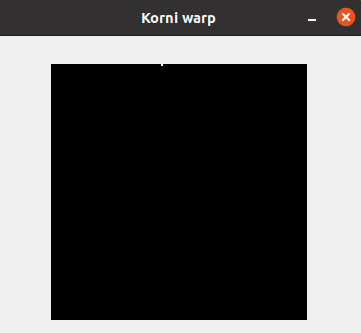

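With normalized_coordinates=False, I get the following output:

Clearly, the homography is being applied differently in the two cases. I would like to know what the difference is.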

1 Answer

Stack Overflow user

Accepted answer

Posted 2022-09-27 22:48:02

You need to make two changes:

- Use the same padding mode.

In your example, cv2 uses cv2.BORDER_REFLECT101 while kornia uses zeros, so change padding_mode='zeros' to padding_mode='reflection' when calling kornia.

- Specify normalized_homography=False.
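To see why the padding modes have to match, the sketch below reproduces in plain Python the index pattern that OpenCV documents for BORDER_REFLECT_101 (`gfedcb|abcdefgh|gfedcba`). The helper name `reflect101` is hypothetical and is not part of OpenCV or kornia; it only shows which source pixel an out-of-range lookup lands on, whereas zero padding would return 0 at the same positions. Whether kornia's `padding_mode='reflection'` matches this pattern pixel for pixel may depend on the `align_corners` setting, so small differences near the border would not be surprising.

```python
def reflect101(i: int, n: int) -> int:
    """Map any integer index onto [0, n-1] by reflecting about the edge
    pixels without repeating them (the BORDER_REFLECT_101 pattern)."""
    if n == 1:
        return 0
    period = 2 * (n - 1)
    i = i % period  # Python's % is non-negative for a positive modulus
    return i if i < n else period - i


row = list("abcdefgh")

# Sample six positions to the left and seven to the right of the row.
padded = "".join(row[reflect101(i, len(row))] for i in range(-6, len(row) + 7))
print(padded)
```

With zero padding, every character outside `abcdefgh` would instead be a blank (black) pixel, which is one visible source of mismatch between the two warps in the question.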

So the modified version is:

from kornia.geometry.transform.imgwarp import homography_warp

warped_image_torch = homography_warp(image_reshaped, transform_h1_h2, dsize=(height, width), padding_mode="reflection", normalized_homography=False)

Or simply:

from kornia.geometry.transform.imgwarp import warp_perspective

warped_image_torch = warp_perspective(image_reshaped, transform_h1_h2, dsize=(height, width), padding_mode="reflection")

Result (cv2/kornia):

HomographyWarper internally calls the homography_warp function (https://github.com/kornia/kornia/blob/f696d2fb7313474bbaf5e73d8b5a56077248b508/kornia/geometry/transform/homography_warper.py#L96), but HomographyWarper does not expose the normalized_homography parameter, whereas homography_warp does.
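The role of `normalized_homography` can be illustrated without kornia. The sketch below assumes the common convention that pixel coordinates x in [0, w-1] and y in [0, h-1] are mapped linearly to [-1, 1]; kornia's exact internal convention should be checked against its source. The helper names (`normalizer`, `normalizer_inv`, `matmul3`, `apply_h`) are hypothetical. It shows that a pixel-space homography H and its normalized counterpart N·H·N⁻¹ are different matrices that describe the same mapping, which is why handing a pixel-space matrix to an API that expects a normalized one produces a different warp.

```python
def matmul3(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def normalizer(h, w):
    """Matrix N taking pixel (x, y) to normalized coordinates in [-1, 1]."""
    sx, sy = 2.0 / (w - 1), 2.0 / (h - 1)
    return [[sx, 0.0, -1.0], [0.0, sy, -1.0], [0.0, 0.0, 1.0]]


def normalizer_inv(h, w):
    """Inverse of N: normalized coordinates back to pixel coordinates."""
    sx, sy = (w - 1) / 2.0, (h - 1) / 2.0
    return [[sx, 0.0, sx], [0.0, sy, sy], [0.0, 0.0, 1.0]]


def apply_h(m, x, y):
    """Apply a 3x3 homography to a 2D point, including the perspective divide."""
    u = m[0][0] * x + m[0][1] * y + m[0][2]
    v = m[1][0] * x + m[1][1] * y + m[1][2]
    s = m[2][0] * x + m[2][1] * y + m[2][2]
    return u / s, v / s


h, w = 256, 256
# Pixel-space homography (values taken from homography_0_1 in the question).
H_pix = [[7.8783e-01, 3.6705e-02, 2.5139e+02],
         [1.6186e-02, 1.0893e+00, -2.7614e+01],
         [-4.3304e-04, 7.6681e-04, 1.0]]

N, N_inv = normalizer(h, w), normalizer_inv(h, w)
H_norm = matmul3(matmul3(N, H_pix), N_inv)

# Route 1: warp in pixel space, then normalize the result.
via_pixels = apply_h(N, *apply_h(H_pix, 10.0, 20.0))
# Route 2: normalize the point first, then warp with the normalized matrix.
via_normalized = apply_h(H_norm, *apply_h(N, 10.0, 20.0))

print(via_pixels, via_normalized)
print(H_pix[0][2], H_norm[0][2])  # the translation terms differ in scale
```

Both routes land on the same normalized point, while the matrix entries themselves differ. Under this assumption, a matrix estimated in pixel coordinates (as in the question) would need `normalized_homography=False`, or `warp_perspective`, to be interpreted as intended.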

Full example:

import cv2

import numpy as np

import torch

from kornia.geometry.transform.imgwarp import warp_perspective, homography_warp

image_cv = cv2.imread("./000000000009.jpg")

image_cv = image_cv[0:256, 0:256]

image = torch.tensor(image_cv).permute(2, 0, 1)

image = image.to("cuda:0")

image_reshaped = image.type(torch.float32).view(1, *image.shape)

height, width, _ = image_cv.shape

homography_0_1 = torch.tensor(
    [
        [7.8783e-01, 3.6705e-02, 2.5139e02],
        [1.6186e-02, 1.0893e00, -2.7614e01],
        [-4.3304e-04, 7.6681e-04, 1.0000e00],
    ],
    device="cuda:0",
    dtype=torch.float64,
)

homography_0_2 = torch.tensor(
    [
        [7.8938e-01, 3.5727e-02, 1.5221e02],
        [1.8347e-02, 1.0921e00, -2.8547e01],
        [-4.3172e-04, 7.7596e-04, 1.0000e00],
    ],
    device="cuda:0",
    dtype=torch.float64,
)

transform_h1_h2 = (
    torch.linalg.inv(homography_0_1)
    .matmul(homography_0_2)
    .type(torch.float32)
    .view(1, 3, 3)
)

# warped_image_torch = homography_warp(image_reshaped, transform_h1_h2, dsize=(height, width), padding_mode="reflection", normalized_homography=False)

warped_image_torch = warp_perspective(image_reshaped, transform_h1_h2, dsize=(height, width), padding_mode="reflection")

warped_image_1_2_cv = cv2.warpPerspective(
    image_cv,
    transform_h1_h2.cpu().numpy().squeeze(),
    dsize=(width, height),
    borderMode=cv2.BORDER_REFLECT101,
)

warped_kornia = warped_image_torch.cpu().numpy().squeeze().transpose(1, 2, 0).astype(np.uint8)

cv2.imwrite("kornia_warp.png", np.hstack((warped_image_1_2_cv, warped_kornia)))

Original content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/73870065
