通过跳过子集小于或大于X的分区或多或小于Y子集的分区,加快集分区的生成

关于分区集的生成,有一个很好的答案:

(红宝石的另一个类似的答案是:Calculate set partitions with specific subset sizes,它也有一张照片)

问题是,当N增加时,它会很快变慢,可以从12或13开始注意到。

背景:

我需要设置分区,以便将一组图像放入肖像画或风景画中,以尽可能减少空白。

我尝试过像回答这里这样的bin打包算法,How is 2D bin packing achieved programmatically?

但是它会产生糟糕的解决方案(我用截图发表了一篇关于原因的评论)。其他垃圾箱包装解决方案过于复杂。

我发现的一个很好的解决方案是缩放图像集,使它们具有相同的高度,将它们排在一排,并在一个景观或肖像布局上组装几行。这里的描述:

我筛选出以下候选人:

- 放大或缩小图像太多,因为我希望每个图像都有几乎相等的表面分配

。

- 浪费了太多的空间

上下文结束

这项技术适用于10到11张图像,但在12岁及以后,它太慢了。

more-itertools的set_partitions()也能工作,但速度问题也是一样的。

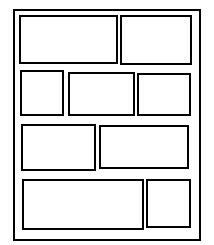

许多分区的子集大小为1,或者大子集太少。正如您在图像中所看到的,我不需要单块(大小为1的子集),它需要具有最小子集数的分区(它实际上不是N的平方根,这取决于它是横向还是纵向)。

我的问题是:

是否可以加速设置分区生成并直接跳过:

- 集分区,其子集大小小于X

和/或

具有小于Y子集的

- 集分区

最终:

- 集分区,其子集大小大于X

或者最终

具有多个Y子集的

- 集分区

这些X和Y可能有所不同,因为我想为N的不同值生成那些集分区。

编辑:我还发现了这个:https://codereview.stackexchange.com/questions/1526/finding-all-k-subset-partitions

这似乎有点快。

回答 3

Stack Overflow用户

发布于 2022-08-24 16:11:05

只需使用递归。

注意,为提高效率,每次返回的数组都进行了修改。如果你想用它们做任何有趣的事情,你可能想自己复制。

def split_partition (elements, min_size, max_size, partition=None, rest=None, i = 0):

if partition is None:

partition = []

if rest is None:

rest = []

# The first element always goes in.

if i == 0:

partition.append(elements[i])

yield from split_partition(elements, min_size, max_size, partition, rest, i+1)

elif len(partition) + len(elements) - i < min_size:

pass # Too few solutions.

elif max_size == len(partition):

for j in range(i, len(elements)):

rest.append(elements[j])

yield (partition, rest) # Just the one solution.

for j in range(i, len(elements)):

rest.pop()

elif i == len(elements):

yield (partition, rest) # Just the one solution.

else:

partition.append(elements[i])

yield from split_partition(elements, min_size, max_size, partition, rest, i+1)

rest.append(partition.pop())

yield from split_partition(elements, min_size, max_size, partition, rest, i+1)

rest.pop()

def desired_partitions (elements, min_size, max_size, min_count, max_count):

if len(elements) < min_size * min_count:

pass # No solutions possible.

elif max_size * max_count < len(elements):

pass # No solutions possible.

else:

for partition, rest in split_partition(elements, min_size, max_size):

if 0 == len(rest):

yield [partition]

else:

for split in desired_partitions(rest, min_size, max_size, min_count - 1, max_count - 1):

split.append(partition)

yield split

split.pop()

for split in desired_partitions([1, 2, 3, 4, 5, 6, 7, 8, 9, 10], 3, 5, 2, 3):

print(split)Stack Overflow用户

发布于 2022-08-28 21:04:15

这里有一个更快的方法。它仍然使用递归来计算分区的大小。但是对于生成分区的繁重工作,它避免了递归调用、不必要的检查和过多的数据复制。在我的测试中,它的速度大约是3倍。

不过,我不确定3的因子对组合爆炸有多大的帮助。

def desired_partition_sizes (element_count, min_size, max_size, min_count, max_count):

if max_size * max_count < element_count:

pass # No solutions.

elif element_count < min_size * min_count:

pass # No solutions.

elif max_count == 1 and 0 < element_count:

if min_size <= element_count:

yield [element_count]

elif 0 == element_count and 0 == min_count:

yield []

else:

for i in range(min_size, max_size+1):

for solution in desired_partition_sizes(

element_count-i, min_size, max_size,

max(min_count-1, 0), max_count-1):

solution.append(i)

yield solution

# The first partition contains the first element and has the first size

# The second partition contains the first element not in the first

# partition and has the second size.

# The third partition contains the first element not in the first 2

# partitions and has the third size.

# etc

def partitions_fitting_desired_sizes (elements, sizes):

partitions = [[] for _ in sizes]

assigned = []

while True:

# Attempt to assign.

i = len(assigned)

j = 0

while i < len(elements):

while sizes[j] <= len(partitions[j]):

j += 1

partitions[j].append(elements[i])

assigned.append(j)

i += 1

yield partitions

# Unassign the last element.

partitions[ assigned.pop() ].pop()

# Unassign until an element can be moved to a later partition.

while 0 < len(assigned):

k = assigned.pop()

partitions[k].pop()

# Remember, the k'th starts with first not in others.

found = False

if 0 < len(partitions[k]):

for j in range(k+1, len(sizes)):

if len(partitions[j]) < sizes[j]:

found = True

partitions[j].append(elements[len(assigned)])

assigned.append(j)

break # Inner loop.

if found: # EXIT HERE (we moved one

break

if 0 == len(assigned):

break # All done.

def desired_partitions (elements, min_size, max_size, min_count, max_count):

for sizes in desired_partition_sizes(len(elements), min_size, max_size, min_count, max_count):

yield from partitions_fitting_desired_sizes(elements, sizes)

for partitions in desired_partitions([1, 2, 3, 4, 5, 6, 7, 8, 9, 10], 3, 5, 2, 3):

print(partitions)Stack Overflow用户

发布于 2022-08-30 23:11:15

这是我对基本分区问题here的答案的改编。这里的想法是,如果所有子集的最小大小应该是2,则较小集合的递归分区应该包括大小为1的集合,因为随着递归的展开,这些子集的大小可以提高到大小。可以原谅的缺失元素的数量是仍需添加的元素数。

因此,我们可以避免重复,也可以在每一个层次上修剪,这样就可以减少病例数的急剧增长(当然,这仍然是指数增长)。我会说它跑得很快。如果你做任何时间的比较,请报告你发现了什么。

def partition_filtered(collection, minsize=1, forgive=0):

"""

Generate partitions that contain at least `minsize` elements per set;

allow `forgive` missing elements, which can get added in subsequent steps

"""

if len(collection) == 1:

yield [ collection ]

return

first = collection[0]

for smaller in partition_filtered(collection[1:], minsize, forgive=forgive+1):

# insert `first` in each of the subpartition's subsets

for n, subset in enumerate(smaller):

candidate = smaller[:n] + [[ first ] + subset] + smaller[n+1:]

if conforms(candidate, minsize, forgive):

yield candidate

# put `first` in its own subset

candidate = [ [ first ] ] + smaller

if conforms(candidate, minsize, forgive):

yield candidate

def conforms(candidate, minsize, forgive):

"""

Check if partition `candidate` is at most `forgive` additions from making

all its elements conform to having minimum size `minsize`

"""

deficit = 0

for p in candidate:

need = minsize - len(p)

if need > 0:

deficit += need

# Is the deficit small enough?

return (deficit <= forgive)

something = list(range(1,13)) # [1, ... 12]

for n, p in enumerate(partition_filtered(something, minsize=2), 1):

print(n, sorted(p))

# Alternately, save the list and check it for correctness

# clean_partitions = list(partition_filtered(something, minsize=2))https://stackoverflow.com/questions/73473074

复制相似问题