Efficiently updating a canvas with RGB or grayscale data (but not RGBA)

I have a <canvas> that I update every 100 ms with bitmap image data coming from an HTTP request:

var ctx = canvas.getContext("2d");

setInterval(() => {

fetch('/get_image_data').then(r => r.arrayBuffer()).then(arr => {

var byteArray = new Uint8ClampedArray(arr);

var imgData = new ImageData(byteArray, 500, 500);

ctx.putImageData(imgData, 0, 0);

});

}, 100);

This works when /get_image_data returns RGBA data. In my case, since alpha is always 100%, I don't send the alpha channel over the network. Questions:

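- How can this be done efficiently when the request delivers RGB binary data?

- And also when the request delivers grayscale binary data?

(Can we avoid a for loop? Would such a loop be slow in JavaScript for megabytes of data, 10 times per second?)

In the grayscale => RGBA case: each input value ..., a, ... should be replaced by ..., a, a, a, 255, ... in the output array.

Here is a pure JS solution: ~10 ms for a 1000×1000 grayscale => RGBA array conversion.

Here is an attempt at a WASM solution.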

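4 Answers

Stack Overflow user

Posted on 2022-08-02 16:46:31

Converting an ArrayBuffer from RGB to RGBA is conceptually straightforward: just splice in an opaque alpha channel byte (255) after every RGB triplet. (And grayscale to RGBA is just as simple: for every gray byte, copy it 3 times, then insert a 255.)

The (slightly) more challenging part of this problem is offloading the work to another thread, using wasm or a worker.

Because you expressed familiarity with JavaScript, I'll provide an example of how it can be done in a worker using a couple of utility modules. The code I'll show uses TypeScript syntax.

On the types used in the example: they are very weak (lots of any). They're present only to provide structural clarity about the data structures involved in the example. In strongly typed worker application code, the types would need to be rewritten for the specifics of the application in each environment (worker and host), because all types involved in message passing are only contractual anyway.

Task-oriented worker code

The problem in your question is task-oriented (for each specific sequence of binary RGB data, you want its RGBA counterpart). Inconveniently in this case, the Worker API is message-oriented rather than task-oriented, meaning that we are only given an interface for listening and reacting to every single message, regardless of its cause or context. There is no built-in way to associate a specific pair of messages sent to and from a worker. So the first step is to create a task-oriented abstraction on top of that API: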

task-worker.ts

export type Task<Type extends string = string, Value = any> = {

type: Type;

value: Value;

};

export type TaskMessageData<T extends Task = Task> = T & { id: string };

export type TaskMessageEvent<T extends Task = Task> =

MessageEvent<TaskMessageData<T>>;

export type TransferOptions = Pick<StructuredSerializeOptions, 'transfer'>;

export class TaskWorker {

worker: Worker;

constructor (moduleSpecifier: string, options?: Omit<WorkerOptions, 'type'>) {

this.worker = new Worker(moduleSpecifier, {...options ?? {}, type: 'module'});

this.worker.addEventListener('message', (

{data: {id, value}}: TaskMessageEvent,

) => void this.worker.dispatchEvent(new CustomEvent(id, {detail: value})));

}

process <Result = any, T extends Task = Task>(

{transfer, type, value}: T & TransferOptions,

): Promise<Result> {

return new Promise<Result>(resolve => {

const id = globalThis.crypto.randomUUID();

this.worker.addEventListener(

id,

(ev) => resolve((ev as unknown as CustomEvent<Result>).detail),

{once: true},

);

this.worker.postMessage(

{id, type, value},

transfer ? {transfer} : undefined,

);

});

}

}

export type OrPromise<T> = T | Promise<T>;

export type TaskFnResult<T = any> = { value: T } & TransferOptions;

export type TaskFn<Value = any, Result = any> =

(value: Value) => OrPromise<TaskFnResult<Result>>;

const taskFnMap: Partial<Record<string, TaskFn>> = {};

export function registerTask (type: string, fn: TaskFn): void {

taskFnMap[type] = fn;

}

export async function handleTaskMessage (

{data: {id, type, value: taskValue}}: TaskMessageEvent,

): Promise<void> {

const fn = taskFnMap[type];

if (typeof fn !== 'function') {

throw new Error(`No task registered for the type "${type}"`);

}

const {transfer, value} = await fn(taskValue);

globalThis.postMessage(

{id, value},

transfer ? {transfer} : undefined,

);

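}

I won't go through this code in detail: it's mostly about picking and moving properties between objects so that you can avoid all of that boilerplate in your application code. Notably, it also abstracts away the need to create a unique ID for every task instance. I will talk about the three exports: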

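- Class TaskWorker: for use in the host. It is an abstraction over instantiating the worker module and exposes the worker on its worker property. It also has a process method which accepts task information as an object argument and returns a promise of the result of processing the task. The task object argument has three properties:

  - `type`: the type of task to be performed (more on this below). This is simply a key that points to a task processing function in the worker.

  - `value`: the payload value that will be acted on by the associated task function

  - `transfer`: an optional array of [transferable objects](https://developer.mozilla.org/en-US/docs/Glossary/Transferable_objects) (I'll bring this up again later)

- Function registerTask: for use in the worker. It stores a task function under its associated type name in a dictionary, so that the worker can use the function to process a payload when a task of that type is received.

- Function handleTaskMessage: for use in the worker. This is simple, but important: it must be assigned to self.onmessage in your worker module script.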
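The ID-based correlation that process relies on can be sketched without a real Worker. This is only an illustration under stated assumptions: createTaskClient, handleReply and the send callback are hypothetical names (send stands in for worker.postMessage), and a counter replaces crypto.randomUUID() to keep the sketch dependency-free.

```javascript
// Hypothetical helper, not part of task-worker.ts: pairs each outgoing
// message with its reply by storing the promise resolver under a unique id.
function createTaskClient(send) {
  const pending = new Map(); // id -> resolve callback of the waiting promise
  let nextId = 0;

  return {
    pending,

    // Sends a task and returns a promise for the matching reply.
    process(type, value) {
      return new Promise((resolve) => {
        const id = String(nextId++);
        pending.set(id, resolve);
        send({ id, type, value });
      });
    },

    // Call this with the data of every message received from the worker.
    // Returns false when the id does not belong to a pending task.
    handleReply({ id, value }) {
      const resolve = pending.get(id);
      if (!resolve) return false;
      pending.delete(id);
      resolve(value);
      return true;
    },
  };
}
```

Because every reply carries the id of the request that caused it, replies may arrive in any order and still resolve the correct promise.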

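Efficient RGB (or grayscale) to RGBA conversion

The second utility module has the logic for splicing the alpha bytes into the RGB data, and there's also a function for converting from grayscale to RGBA: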

rgba-conversion.ts

/**

* The bytes in the input array buffer must conform to the following pattern:

*

* ```

* [

* r, g, b,

* r, g, b,

* // ...

* ]

* ```

*

* Note that the byte length of the buffer **MUST** be a multiple of 3

* (`arrayBuffer.byteLength % 3 === 0`)

*

* @param buffer A buffer representing a byte sequence of RGB data elements

* @returns RGBA buffer

*/

export function rgbaFromRgb (buffer: ArrayBuffer): ArrayBuffer {

const rgb = new Uint8ClampedArray(buffer);

const pixelCount = Math.floor(rgb.length / 3);

const rgba = new Uint8ClampedArray(pixelCount * 4);

for (let iPixel = 0; iPixel < pixelCount; iPixel += 1) {

const iRgb = iPixel * 3;

const iRgba = iPixel * 4;

// @ts-expect-error

for (let i = 0; i < 3; i += 1) rgba[iRgba + i] = rgb[iRgb + i];

rgba[iRgba + 3] = 255;

}

return rgba.buffer;

}

/**

* @param buffer A buffer representing a byte sequence of grayscale elements

* @returns RGBA buffer

*/

export function rgbaFromGrayscale (buffer: ArrayBuffer): ArrayBuffer {

const gray = new Uint8ClampedArray(buffer);

const pixelCount = gray.length;

const rgba = new Uint8ClampedArray(pixelCount * 4);

for (let iPixel = 0; iPixel < pixelCount; iPixel += 1) {

const iRgba = iPixel * 4;

// @ts-expect-error

for (let i = 0; i < 3; i += 1) rgba[iRgba + i] = gray[iPixel];

rgba[iRgba + 3] = 255;

}

return rgba.buffer;

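}

I think the iterative math code is self-explanatory here (but if any of the APIs used here or in other parts of the answer are unfamiliar, MDN has explanatory documentation). I think it's noteworthy that both the input and output values (ArrayBuffer) are transferable objects, which means that they can essentially be moved, rather than copied, between the host and worker contexts for better memory and speed efficiency.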
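The "moved, not copied" behavior can be observed directly. The following is a minimal sketch that assumes an environment where the global structuredClone function supports the transfer option (current browsers and recent Node.js versions); it uses structuredClone only as a stand-in for postMessage, which applies the same transfer semantics.

```javascript
// A 1-pixel RGBA buffer (opaque red).
const rgba = new Uint8ClampedArray([255, 0, 0, 255]).buffer;

// Transferring hands the underlying memory to the clone...
const moved = structuredClone(rgba, { transfer: [rgba] });

// ...and detaches the original, which now reports a length of zero.
const sourceLengthAfter = rgba.byteLength;
const movedLength = moved.byteLength;
const movedBytes = Array.from(new Uint8ClampedArray(moved));
```

This is also why a buffer must not be reused on the sending side after it has been listed in transfer.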

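Also, thanks to @ for providing information that was used to improve the efficiency of this approach over the technique used in an earlier revision of this answer.

Creating the worker

Because of the abstractions above, the actual worker code is quite small: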

worker.ts

import {

rgbaFromGrayscale,

rgbaFromRgb,

} from './rgba-conversion.js';

import {handleTaskMessage, registerTask} from './task-worker.js';

registerTask('rgb-rgba', (rgbBuffer: ArrayBuffer) => {

const rgbaBuffer = rgbaFromRgb(rgbBuffer);

return {value: rgbaBuffer, transfer: [rgbaBuffer]};

});

registerTask('grayscale-rgba', (grayscaleBuffer: ArrayBuffer) => {

const rgbaBuffer = rgbaFromGrayscale(grayscaleBuffer);

return {value: rgbaBuffer, transfer: [rgbaBuffer]};

});

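self.onmessage = handleTaskMessage;

All that's needed in each task function is to move the buffer result to the value property of the returned object and to signal that its underlying memory can be transferred to the host context.

Example application code

I don't think anything here will surprise you: the only boilerplate is mocking fetch to return an example RGB buffer, since the server referenced in your question isn't available to this code: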

main.ts

import {TaskWorker} from './task-worker.js';

const tw = new TaskWorker('./worker.js');

const buf = new Uint8ClampedArray([

/* red */255, 0, 0, /* green */0, 255, 0, /* blue */0, 0, 255,

/* cyan */0, 255, 255, /* magenta */255, 0, 255, /* yellow */255, 255, 0,

/* white */255, 255, 255, /* grey */128, 128, 128, /* black */0, 0, 0,

]).buffer;

const fetch = async () => ({arrayBuffer: async () => buf});

async function main () {

const canvas = document.createElement('canvas');

canvas.setAttribute('height', '3');

canvas.setAttribute('width', '3');

// This is just to sharply upscale the 3x3 px demo data so that

// it's easier to see the squares:

canvas.style.setProperty('image-rendering', 'pixelated');

canvas.style.setProperty('height', '300px');

canvas.style.setProperty('width', '300px');

document.body

.appendChild(document.createElement('div'))

.appendChild(canvas);

const context = canvas.getContext('2d', {alpha: false})!;

const width = 3;

// This is the part that would happen in your interval-delayed loop:

const response = await fetch();

const rgbBuffer = await response.arrayBuffer();

const rgbaBuffer = await tw.process<ArrayBuffer>({

type: 'rgb-rgba',

value: rgbBuffer,

transfer: [rgbBuffer],

});

// And if the fetched resource were grayscale data, the syntax would be

// essentially the same, except that you'd use the type name associated with

// the grayscale task that was registered in the worker:

// const grayscaleBuffer = await response.arrayBuffer();

// const rgbaBuffer = await tw.process<ArrayBuffer>({

// type: 'grayscale-rgba',

// value: grayscaleBuffer,

// transfer: [grayscaleBuffer],

// });

const imageData = new ImageData(new Uint8ClampedArray(rgbaBuffer), width);

context.putImageData(imageData, 0, 0);

}

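main();

Those TypeScript modules just need to be compiled, and the main script can then be run as a module script in your HTML.

I can't make performance claims without access to your server data, so I'll leave that to you. If there's anything I overlooked in the explanation (or anything that's still unclear), feel free to ask in a comment.

Stack Overflow user

Posted on 2022-08-05 12:23:21

Typed array views

You can use typed arrays to create a view of the pixel data.

So, for example, if you have a byte array const foo = new Uint8Array(size), you can create a view of it as a 32-bit word array using const foo32 = new Uint32Array(foo.buffer) (the byte length must be a multiple of 4).

foo32 is the same data, but JS sees it as 32-bit words rather than bytes. Creating it is a zero-copy operation with almost no overhead.

Thus you can move 4 bytes in one operation.

Unfortunately, you still need to index and format the byte data from one of the arrays (as grayscale or RGB).

However, there are still worthwhile performance gains from using typed array views.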
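To make the zero-copy claim concrete, here is a small sketch showing that two views over the same ArrayBuffer observe each other's writes. The variable names are illustrative only.

```javascript
// One 8-byte buffer (two RGBA pixels) seen through two views.
const bytes = new Uint8Array(8);
const words = new Uint32Array(bytes.buffer); // no pixel data is copied here

// A write through the byte view is visible through the word view...
bytes.set([1, 2, 3, 4]);
const firstWordChanged = words[0] !== 0;

// ...and a single 32-bit write fills all four bytes of the second pixel.
words[1] = 0xffffffff;
const secondPixel = Array.from(bytes.subarray(4));

const sharesMemory = bytes.buffer === words.buffer;
```

The numeric value of words[0] depends on the platform's byte order, which is why the sketch only checks that it changed.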

Moving grayscale pixels

Example of moving gray bytes:

// src array as Uint8Array one byte per pixel

// dest is Uint8Array 4 bytes RGBA per pixel

function moveGray(src, dest, width, height) {

var i;

const destW = new Uint32Array(dest.buffer);

const alpha = 0xFF000000; // alpha is the high byte. Bits 24-31

for (i = 0; i < width * height; i++) {

const g = src[i];

destW[i] = alpha + (g << 16) + (g << 8) + g;

}

}

is faster than

function moveBytes(src, dest, width, height) {

var i,j = 0;

for (i = 0; i < width * height * 4; ) {

dest[i++] = src[j];

dest[i++] = src[j];

dest[i++] = src[j++];

dest[i++] = 255;

}

}

where src and dest are Uint8Arrays pointing at the source gray bytes and the destination RGBA bytes.
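One caveat about the 32-bit write: placing alpha in bits 24-31 yields R, G, B, A byte order only on little-endian platforms, which covers practically all current browsers' hardware but is not guaranteed by the language. A hedged sketch of a byte-order-aware variant follows; grayToRgba and isLittleEndian are hypothetical names, not part of the answer's code, and dest is assumed to be a freshly allocated Uint8Array (so its byteOffset is a multiple of 4).

```javascript
// Detect the platform byte order once: on little-endian machines the
// lowest-order byte of a 32-bit word is stored first.
const isLittleEndian = new Uint8Array(new Uint32Array([1]).buffer)[0] === 1;

// Hypothetical helper: expands 1-byte gray pixels into 4-byte RGBA pixels,
// writing one 32-bit word per pixel.
function grayToRgba(src, dest) {
  const destW = new Uint32Array(dest.buffer, dest.byteOffset, src.length);
  for (let i = 0; i < src.length; i++) {
    const g = src[i];
    destW[i] = isLittleEndian
      ? (0xff000000 | (g << 16) | (g << 8) | g) >>> 0 // memory order: g, g, g, 255
      : ((g << 24) | (g << 16) | (g << 8) | 0xff) >>> 0; // same memory order on big-endian
  }
  return dest;
}
```

Usage: grayToRgba(new Uint8Array([0, 128, 255]), new Uint8Array(12)) fills dest with three opaque gray pixels.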

Moving RGB pixels

To move RGB to RGBA, you can use

// src array as Uint8Array 3 bytes per pixel as red, green, blue

// dest is Uint8Array 4 bytes RGBA per pixel

function moveRGB(src, dest, width, height) {

var i, j = 0;

const destW = new Uint32Array(dest.buffer);

const alpha = 0xFF000000; // alpha is the high byte. Bits 24-31

for (i = 0; i < width * height; i++) {

destW[i] = alpha + src[j++] + (src[j++] << 8) + (src[j++] << 16);

}

}

which is about 30% faster than moving the bytes one at a time, as follows:

// src array as Uint8Array 3 bytes per pixel as red, green, blue

function moveBytes(src, dest, width, height) {

var i, j = 0;

for (i = 0; i < width * height * 4; ) {

dest[i++] = src[j++];

dest[i++] = src[j++];

dest[i++] = src[j++];

dest[i++] = 255;

}

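}

Stack Overflow user

Posted on 2022-08-11 15:00:38

Regarding your main concerns:

- "How to avoid using a for loop...?"

- "Can we do better with WASM or other techniques?"

- "I need to do this maybe 10 or 15 or 30 times per second"

I suggest you try using the GPU to process the pixels in this task.

You can switch from the CPU canvas.getContext("2d") to the GPU by using canvas.getContext("webgl").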

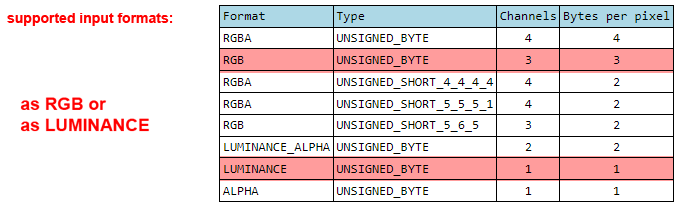

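Setting your <canvas> into WebGL (GPU) mode means it can now accept pixel data in more formats, including values in formats like RGB or even LUMINANCE (where a single grey input value is automatically written across the R, G and B channels of the GPU canvas).

You can read more here: WebGL introduction to "Data Textures"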
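When uploading tightly packed RGB or LUMINANCE bytes, the row alignment matters: WebGL's gl.UNPACK_ALIGNMENT defaults to 4, so rows whose byte length is not a multiple of 4 are expected to be padded unless the alignment is set to 1. The sketch below estimates how many bytes an upload needs; rowStride and minUploadBytes are hypothetical helper names, and the formula reflects my understanding of the unpack rules (every row except the last is padded to the alignment) rather than a quotation from the specification.

```javascript
// Bytes occupied by one row once it is padded up to the unpack alignment.
function rowStride(width, bytesPerPixel, alignment) {
  const unpadded = width * bytesPerPixel;
  return Math.ceil(unpadded / alignment) * alignment;
}

// Smallest array length (in bytes) that should satisfy an upload of
// width x height pixels: padded rows, except for the final one.
function minUploadBytes(width, height, bytesPerPixel, alignment) {
  if (width === 0 || height === 0) return 0;
  return rowStride(width, bytesPerPixel, alignment) * (height - 1)
    + width * bytesPerPixel;
}
```

For the 1000x1000 case in this question the rows are already multiples of 4 bytes in both modes, but for something like a 3x3 RGB image the difference is visible: 9 bytes per row when the alignment is 1, versus a 12-byte stride when it is 4.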

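WebGL is not fun to set up. It is a long piece of code, but worth it for the "almost at light speed" performance it gives back.

Below is example code, modified from another answer of mine (which was itself modified from a JSFiddle that I learned from back when I was a GPU beginner).

Example code: creates a 1000x1000 texture and re-fills it with RGB/grey pixels at a rate of "N" FPS.

Variables:

- pix_FPS: sets the FPS rate (will be used as 1000/FPS).

- pix_Mode: sets the type of the input pixels, either "grey" or "rgb".

Test it out...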

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta http-equiv="X-UA-Compatible" content="IE=edge">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>WebGL</title>

<style> body {background-color: white; } </style>

</head>

<body>

<br>

<button id="btn_draw" onclick="draw_Pixels()"> Draw Pixels </button>

<br><br>

<canvas id="myCanvas" width="1000" height="1000"></canvas>

<!-- ########## Shader code ###### -->

<!-- ### Shader code here -->

<!-- Fragment shader program -->

<script id="shader-fs" type="x-shader/x-fragment">

//<!-- //## code for pixel effects goes here if needed -->

//# these two vars will access

varying mediump vec2 vDirection;

uniform sampler2D uSampler;

void main(void)

{

//# reading thru current image's pixel colors (no FOR-loops needed like in JS 2D Canvas)

gl_FragColor = texture2D(uSampler, vec2(vDirection.x * 0.5 + 0.5, vDirection.y * 0.5 + 0.5));

///////////////////////////////////////////////////////

//# Example of basic colour effect on INPUT pixels ///////

/*

gl_FragColor.r = ( gl_FragColor.r * 0.95 );

gl_FragColor.g = ( gl_FragColor.g * 0.3333 );

gl_FragColor.b = ( gl_FragColor.b * 0.92 );

*/

}

</script>

<!-- Vertex shader program -->

<script id="shader-vs" type="x-shader/x-vertex">

attribute mediump vec2 aVertexPosition;

varying mediump vec2 vDirection;

void main( void )

{

gl_Position = vec4(aVertexPosition, 1.0, 1.0) * 2.0;

vDirection = aVertexPosition;

}

</script>

<!-- ### END Shader code... -->

<script>

//# WebGL setup

//# Pixel setup for transferring to GPU

//# pixel mode and the handling GPU formats...

//# set image width and height (also changes Canvas width/height)

var pix_Width = 1000;

var pix_Height = 1000;

var pix_data = new Uint8Array( pix_Width * pix_Height );

var pix_FPS = 30; //# MAX is 60-FPS (or 60-Hertz)

var pix_Mode = "grey" //# can be "grey" or "rgb"

var pix_Format;

var pix_internalFormat;

const pix_border = 0;

const glcanvas = document.getElementById('myCanvas');

const gl = ( ( glcanvas.getContext("webgl") ) || ( glcanvas.getContext("experimental-webgl") ) );

//# check if WebGL is available..

if (gl && gl instanceof WebGLRenderingContext) { console.log( "WebGL is available"); }

//# use regular 2D Canvas functions if this happens...

else { console.log( "WebGL is NOT available" ); alert( "WebGL is NOT available" ); }

//# change Canvas width/height to match input image size

//glcanvas.style.width = pix_Width+"px"; glcanvas.style.height = pix_Height+"px";

glcanvas.width = pix_Width; glcanvas.height = pix_Height;

//# create and attach the shader program to the webGL context

var attributes, uniforms, program;

function attachShader( params )

{

var fragmentShader = getShaderByName(params.fragmentShaderName);

var vertexShader = getShaderByName(params.vertexShaderName);

program = gl.createProgram();

gl.attachShader(program, vertexShader);

gl.attachShader(program, fragmentShader);

gl.linkProgram(program);

if (!gl.getProgramParameter(program, gl.LINK_STATUS))

{ alert("Unable to initialize the shader program: " + gl.getProgramInfoLog(program)); }

gl.useProgram(program);

// get the location of attributes and uniforms

attributes = {};

for (var i = 0; i < params.attributes.length; i++)

{

var attributeName = params.attributes[i];

attributes[attributeName] = gl.getAttribLocation(program, attributeName);

gl.enableVertexAttribArray(attributes[attributeName]);

}

uniforms = {};

for (i = 0; i < params.uniforms.length; i++)

{

var uniformName = params.uniforms[i];

uniforms[uniformName] = gl.getUniformLocation(program, uniformName);

// Uniform locations are not vertex attributes, so there is nothing to enable here.

}

}

function getShaderByName( id )

{

var shaderScript = document.getElementById(id);

var theSource = "";

var currentChild = shaderScript.firstChild;

while(currentChild)

{

if (currentChild.nodeType === 3) { theSource += currentChild.textContent; }

currentChild = currentChild.nextSibling;

}

var result;

if (shaderScript.type === "x-shader/x-fragment")

{ result = gl.createShader(gl.FRAGMENT_SHADER); }

else { result = gl.createShader(gl.VERTEX_SHADER); }

gl.shaderSource(result, theSource);

gl.compileShader(result);

if (!gl.getShaderParameter(result, gl.COMPILE_STATUS))

{

alert("An error occurred compiling the shaders: " + gl.getShaderInfoLog(result));

return null;

}

return result;

}

//# attach shader

attachShader({

fragmentShaderName: 'shader-fs',

vertexShaderName: 'shader-vs',

attributes: ['aVertexPosition'],

uniforms: ['someVal', 'uSampler'],

});

// some webGL initialization

gl.clearColor(0.0, 0.0, 0.0, 0.0);

gl.clearDepth(1.0);

gl.disable(gl.DEPTH_TEST);

positionsBuffer = gl.createBuffer();

gl.bindBuffer(gl.ARRAY_BUFFER, positionsBuffer);

var positions = [

-1.0, -1.0,

1.0, -1.0,

1.0, 1.0,

-1.0, 1.0,

];

gl.bufferData(gl.ARRAY_BUFFER, new Float32Array(positions), gl.STATIC_DRAW);

var vertexColors = [0xff00ff88,0xffffffff];

var cBuffer = gl.createBuffer();

verticesIndexBuffer = gl.createBuffer();

gl.bindBuffer(gl.ELEMENT_ARRAY_BUFFER, verticesIndexBuffer);

var vertexIndices = [ 0, 1, 2, 0, 2, 3, ];

gl.bufferData(

gl.ELEMENT_ARRAY_BUFFER,

new Uint16Array(vertexIndices), gl.STATIC_DRAW

);

texture = gl.createTexture();

gl.bindTexture(gl.TEXTURE_2D, texture);

//# set FILTERING (where needed, used when resizing input data to fit canvas)

//# must be LINEAR to avoid subtle pixelation (double-check this... test other options like NEAREST)

//# for bi-linear filtering

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MAG_FILTER, gl.LINEAR);

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.LINEAR);

/*

// for non-filtered pixels

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.NEAREST);

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MAG_FILTER, gl.NEAREST);

*/

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_S, gl.CLAMP_TO_EDGE);

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_T, gl.CLAMP_TO_EDGE);

gl.bindTexture(gl.TEXTURE_2D, null);

// update the texture from the pixel array

function updateTexture()

{

gl.bindTexture(gl.TEXTURE_2D, texture);

gl.pixelStorei(gl.UNPACK_FLIP_Y_WEBGL, true);

gl.pixelStorei(gl.UNPACK_ALIGNMENT, 1); //1 == read one byte or 4 == read integers, etc

//# for RGB vs LUM

pix_Mode = "grey"; //pix_Mode = "rgb";

if ( pix_Mode == "grey") { pix_Format = gl.LUMINANCE; pix_internalFormat = gl.LUMINANCE; }

if ( pix_Mode == "rgb") { pix_Format = gl.RGB; pix_internalFormat = gl.RGB; }

//# update pixel Array with custom data

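var pix_bytesPerPixel = (pix_Mode == "rgb") ? 3 : 1; //# RGB needs 3 bytes per pixel, grey needs 1

pix_data = new Uint8Array(pix_Width * pix_Height * pix_bytesPerPixel).map(() => Math.round(Math.random() * 255));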

//# next line fails in Safari if input video is NOT from same domain/server as this html code

gl.texImage2D(gl.TEXTURE_2D, 0, pix_internalFormat, pix_Width, pix_Height, pix_border, pix_Format, gl.UNSIGNED_BYTE, pix_data);

gl.bindTexture(gl.TEXTURE_2D, null);

};

</script>

<script>

//# Vars for video frame grabbing when system/browser provides a new frame

var requestAnimationFrame = (window.requestAnimationFrame || window.mozRequestAnimationFrame ||

window.webkitRequestAnimationFrame || window.msRequestAnimationFrame);

var cancelAnimationFrame = (window.cancelAnimationFrame || window.mozCancelAnimationFrame);

///////////////////////////////////////////////

function draw_Pixels( )

{

//# initialise GPU variables for usage

//# begin updating pixel data as texture

let testing = "true";

if( testing == "true" )

{

updateTexture(); //# update pixels with current video frame's pixels...

gl.useProgram(program); //# apply our program

gl.bindBuffer(gl.ARRAY_BUFFER, positionsBuffer);

gl.vertexAttribPointer(attributes['aVertexPosition'], 2, gl.FLOAT, false, 0, 0);

//# Specify the texture to map onto the faces.

gl.activeTexture(gl.TEXTURE0);

gl.bindTexture(gl.TEXTURE_2D, texture);

//gl.uniform1i(uniforms['uSampler'], 0);

//# Draw GPU

gl.bindBuffer(gl.ELEMENT_ARRAY_BUFFER, verticesIndexBuffer);

gl.drawElements(gl.TRIANGLES, 6, gl.UNSIGNED_SHORT, 0);

}

//# re-capture the next frame... basically make the function loop itself

//requestAnimationFrame( draw_Pixels );

//# wrap in a function so the next frame is requested only after the delay has elapsed

setTimeout( () => requestAnimationFrame( draw_Pixels ), (1000 / pix_FPS) );

}

// ...the end. ////////////////////////////////////

</script>

</body>

</html>

https://stackoverflow.com/questions/73191734
