Trying to import all pages via web scraping, but only getting 4 rows

Asked 2022-07-25 17:07:43

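I am new to web scraping. I want to scrape a website, https://www.bproperty.com/.

Here I am trying to get all the data from every page for a particular area. (You will get the idea after visiting this link: https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/.) At the bottom of that page you can see the pagination. So I put all the URLs in a list, loop over them, scrape the data, and write it to a CSV file. In the terminal window all the data shows up fine, but when I write it to the CSV I only see 4 rows.

Here is my code: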

from bs4 import BeautifulSoup

import requests

from csv import writer

urls = [

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/"

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-2/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-3/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-4/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-5/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-6/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-7/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-8/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-9/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-10/",

"https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/page-11/"

]

for u in urls:

page = requests.get(u)

soup = BeautifulSoup(page.content, 'html.parser')

lists = soup.find_all('article', class_="ca2f5674")

with open("bproperty-gulshan.csv", 'w', encoding="utf8", newline='') as f:

wrt = writer(f)

header = ["Title", "Location", "Price", "type", "Beds", "Baths", "Length"]

wrt.writerow(header)

for list in lists:

price = list.find('span', class_="f343d9ce").text.replace("/n", "")

location = list.find('div', class_="_7afabd84").text.replace("/n", "")

type = list.find('div', class_="_9a4e3964").text.replace("/n", "")

title = list.find('h2', class_="_7f17f34f").text.replace("/n", "")

beds = list.find('span', class_="b6a29bc0").text.replace("/n", "")

baths = list.find('span', class_="b6a29bc0").text.replace("/n", "")

length = list.find('span', class_="b6a29bc0").text.replace("/n", "")

info = [title, location, price, type, beds, baths, length]

wrt.writerow(info)

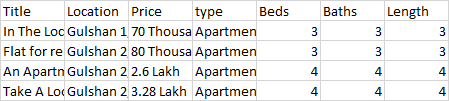

print(info)这是我的CSV

So what I actually want is the data from all the pages with a single script. Is there a way to do that, or any solution to this problem?

1 Answer

Stack Overflow user

Accepted answer

Answered 2022-07-25 17:18:58

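list and type are built-in names in Python. Don't use them as variable names.

Using them as variable names shadows the built-ins "type" and "list" in the enclosing scope. Doing so does not raise a SyntaxError, but it is not considered good practice, so I would avoid it.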
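To make the shadowing concrete, here is a minimal sketch (the function name `call_shadowed_builtin` is made up for this illustration). Inside the function, `list` refers to the local variable, so calling it fails at runtime even though the code parses without complaint.

```python
def call_shadowed_builtin():
    list = [1, 2, 3]  # this local name now hides the built-in list
    try:
        return list("abc")  # calls the local [1, 2, 3], not the built-in
    except TypeError as exc:
        return f"TypeError: {exc}"


print(call_shadowed_builtin())
print(list("abc"))  # outside the function the built-in is untouched
```

Renaming the variables to `list_` and `type_`, as the code in this answer does, keeps both built-ins usable.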

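Also, since you open and close the file inside the same loop, use append mode. Mode 'w' truncates the file every time it is opened, so each page overwrites the previous one and only the rows from the last page remain.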
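The difference between the two modes can be seen in isolation with a small sketch that involves no scraping at all. The helper `rows_after_loop` and the placeholder rows are assumptions for this illustration; it reopens one temporary CSV file once per "page" and then reads back what is left on disk.

```python
import csv
import os
import tempfile


def rows_after_loop(mode, pages=(["page 1"], ["page 2"], ["page 3"])):
    """Reopen the same CSV once per page with `mode`; return the rows left on disk."""
    path = os.path.join(tempfile.mkdtemp(), "demo.csv")
    for row in pages:
        # The file is opened and closed on every iteration, as in the question.
        with open(path, mode, encoding="utf8", newline="") as f:
            csv.writer(f).writerow(row)
    with open(path, encoding="utf8", newline="") as f:
        return list(csv.reader(f))


print(rows_after_loop("w"))  # [['page 3']]
print(rows_after_loop("a"))  # [['page 1'], ['page 2'], ['page 3']]
```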

The following code should work:

for u in urls:

page = requests.get(u)

soup = BeautifulSoup(page.content, 'html.parser')

lists = soup.find_all('article', class_="ca2f5674")

with open("bproperty-gulshan.csv", 'a', encoding="utf8", newline='') as f:

wrt = writer(f)

header = ["Title", "Location", "Price", "type", "Beds", "Baths", "Length"]

wrt.writerow(header)

for list_ in lists:

price = list_.find('span', class_="f343d9ce").text.replace("/n", "")

location = list_.find('div', class_="_7afabd84").text.replace("/n", "")

type_ = list_.find('div', class_="_9a4e3964").text.replace("/n", "")

title = list_.find('h2', class_="_7f17f34f").text.replace("/n", "")

beds = list_.find('span', class_="b6a29bc0").text.replace("/n", "")

baths = list_.find('span', class_="b6a29bc0").text.replace("/n", "")

length = list_.find('span', class_="b6a29bc0").text.replace("/n", "")

info = [title, location, price, type_, beds, baths, length]

wrt.writerow(info)

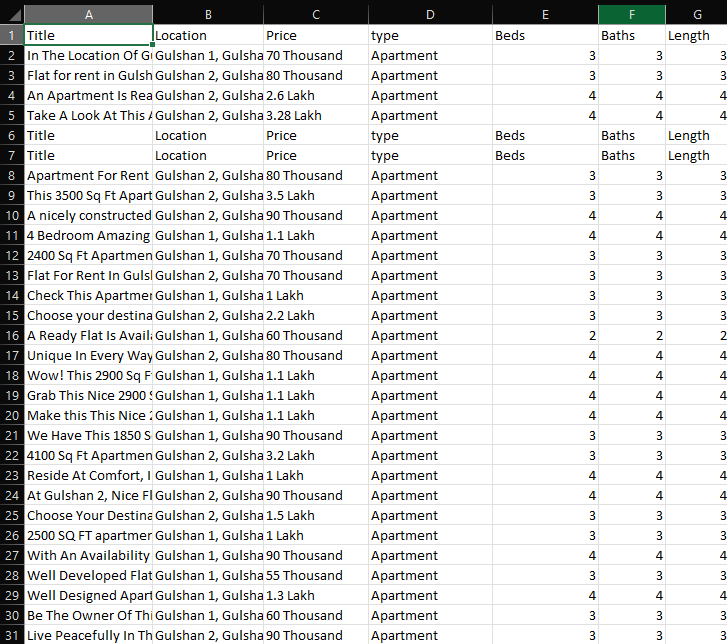

print(info)这给了我们

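Keep in mind that the header is written every time the file is opened, so it is better to open the file handle only once and close it after all processing in the loop is done.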
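One way to follow that advice is sketched below. It is not part of the original answer: the helpers `page_urls` and `write_listings` and the constants `BASE_URL` and `HEADER` are hypothetical names, and the scraping itself is left out so the file handling can be read on its own. The file is opened once in 'w' mode, the header is written once, and the rows of every page are written inside the same `with` block. The sketch also builds the page URLs programmatically, which sidesteps a pitfall in the question's hand-written list: there is no comma after the first URL, so Python joins the first two string literals into a single URL.

```python
import csv

BASE_URL = "https://www.bproperty.com/en/dhaka/apartments-for-rent-in-gulshan/"
HEADER = ["Title", "Location", "Price", "type", "Beds", "Baths", "Length"]


def page_urls(base_url, last_page):
    """Return the bare URL for page 1 followed by page-2/, page-3/, ..."""
    return [base_url] + [f"{base_url}page-{n}/" for n in range(2, last_page + 1)]


def write_listings(path, header, pages):
    """Open the CSV once, write the header once, then write every page's rows.

    `pages` is any iterable that yields one list of rows per scraped page.
    Returns the number of data rows written.
    """
    count = 0
    with open(path, "w", encoding="utf8", newline="") as f:
        wrt = csv.writer(f)
        wrt.writerow(header)  # exactly one header line in the whole file
        for rows in pages:
            wrt.writerows(rows)
            count += len(rows)
    return count
```

In the scraper, `pages` could be a generator that requests each URL from `page_urls(BASE_URL, 11)`, parses it with BeautifulSoup as in the code above, and yields the list of `info` rows for that page.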

The original content of this page is provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/73112936
