CoreDNS times out (20 minutes) when creating a cluster with Terraform


Asked 2022-07-10 14:32:50

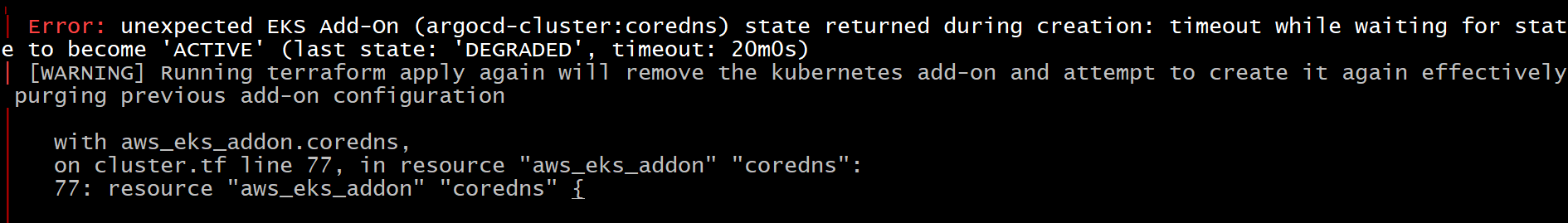

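I am trying to create an EKS cluster with a Fargate profile on AWS. However, the run fails after the CoreDNS add-on has spent 20 minutes in creation. I just want a cluster that I can deploy pods into. I get a timeout error with the following configuration: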

module "eks" {
  source  = "terraform-aws-modules/eks/aws"
  version = "~> 18.0"

  cluster_name    = var.CLUSTER_NAME
  cluster_version = "1.22"

  cluster_endpoint_private_access = true
  cluster_endpoint_public_access  = true

  cluster_enabled_log_types = ["api", "audit", "authenticator", "controllerManager", "scheduler"]

  cluster_addons = {
    kube-proxy = {}
    vpc-cni = {
      resolve_conflicts = "OVERWRITE"
    }
  }

  cluster_encryption_config = [{
    provider_key_arn = aws_kms_key.eks.arn
    resources        = ["secrets"]
  }]

  vpc_id     = module.vpc.vpc_id
  subnet_ids = module.vpc.private_subnets

  # Fargate Profile(s)
  fargate_profiles = {
    coredns-fargate-profile = {
      name = "coredns"
      selectors = [
        {
          namespace = "kube-system"
        },
        {
          namespace = "default"
        },
        {
          namespace = var.ARGOCD_NAME_SPACE
        },
      ]
      pod_execution_role_arn = aws_iam_role.pod-execution-role.arn
      service_account_name   = local.k8s_service_account_name
      subnets                = module.vpc.private_subnets
    }
  }
}

resource "aws_eks_addon" "coredns" {
  addon_name        = "coredns"
  addon_version     = "v1.8.7-eksbuild.1"
  cluster_name      = var.CLUSTER_NAME
  resolve_conflicts = "OVERWRITE"
  depends_on        = [module.eks]
}

1 Answer

Stack Overflow user

Accepted answer

Answered 2022-07-10 15:10:31

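This happens because CoreDNS is not patched to run on Fargate by default, yet you have requested that all kube-system workloads run on Fargate. By default the CoreDNS deployment carries the annotation `eks.amazonaws.com/compute-type: ec2`, so with no EC2 nodes in the cluster its pods stay Pending and the add-on never becomes active. Currently, terraform-aws-eks does not automatically patch CoreDNS to run on Fargate the way eksctl does.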

Try removing the kube-system namespace from the Fargate profile and adding a node group:

...

  vpc_id     = module.vpc.vpc_id
  subnet_ids = module.vpc.private_subnets

  eks_managed_node_groups = {
    default = {
      ami_type       = "AL2_x86_64"
      instance_types = ["t3.medium"]
      min_size       = 1
      max_size       = 1
      desired_size   = 1
    }
  }

...

After provisioning completes, run the patch command, and then run coredns on Fargate.
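The answer refers to a patch command without showing it. The following is a minimal sketch of one way to do both remaining steps from Terraform, not the answerer's own method. It assumes the `aws` CLI and `kubectl` are installed on the machine that runs Terraform, that the `hashicorp/null` provider is available, and that the CoreDNS deployment still carries the `eks.amazonaws.com/compute-type` annotation (if it does not, the `remove` operation fails). The resource names `coredns_only` and `patch_coredns`, the profile name `coredns-only`, and the `./kubeconfig-coredns` path are hypothetical; the other references come from the question's configuration.

```hcl
# Hypothetical Fargate profile that selects only the CoreDNS pods,
# which carry the label k8s-app=kube-dns in the kube-system namespace.
resource "aws_eks_fargate_profile" "coredns_only" {
  cluster_name           = var.CLUSTER_NAME
  fargate_profile_name   = "coredns-only" # assumed name, chosen to avoid clashing with "coredns"
  pod_execution_role_arn = aws_iam_role.pod-execution-role.arn
  subnet_ids             = module.vpc.private_subnets

  selector {
    namespace = "kube-system"
    labels = {
      "k8s-app" = "kube-dns"
    }
  }

  depends_on = [module.eks]
}

# Hypothetical wrapper around the patch command: it removes the
# compute-type annotation from the CoreDNS deployment and restarts it,
# so the new pods can be scheduled through the profile above.
resource "null_resource" "patch_coredns" {
  depends_on = [
    aws_eks_addon.coredns,
    aws_eks_fargate_profile.coredns_only,
  ]

  provisioner "local-exec" {
    command = <<-EOT
      set -e
      # Write a dedicated kubeconfig so the default one is left untouched.
      aws eks update-kubeconfig --name ${var.CLUSTER_NAME} --kubeconfig ./kubeconfig-coredns
      kubectl --kubeconfig ./kubeconfig-coredns patch deployment coredns \
        --namespace kube-system \
        --type json \
        --patch '[{"op": "remove", "path": "/spec/template/metadata/annotations/eks.amazonaws.com~1compute-type"}]'
      kubectl --kubeconfig ./kubeconfig-coredns rollout restart deployment coredns --namespace kube-system
    EOT
  }
}
```

The two `kubectl` commands can equally be run by hand once `terraform apply` has finished. Wrapping them in a `null_resource` only keeps the step in the same run, and the provisioner executes once at creation rather than on every apply.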

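The original content of this page is provided by Stack Overflow. Translation support is provided by the Tencent Cloud Xiaowei IT-domain engine.

Original link:

https://stackoverflow.com/questions/72929259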
