Google云转录API

我想在双向通话中计算每一位发言者的通话时间,包括说话人的标记、转录、说话人持续时间的时间戳以及对此的信心。

例如:我有一个客户服务支持的mp3文件和两个扬声器计数。我想知道说话人带着说话人标签的时间,抄写的时间和信心。

我面临的问题是结束时间和对抄写的信心。我有信心,因为0在转录和结束时间是不适合的实际结束时间。

音频链接:DKP2unNxQzMIYlNW/view?usp=共享

**strong text**

#!pip install --upgrade google-cloud-speech

from google.cloud import speech_v1p1beta1 as speech

import datetime

tag=1

speaker=""

transcript = ''

client = speech.SpeechClient.from_service_account_file('#cloud_credentials')

audio = speech.types.RecognitionAudio(uri=gs_uri)

config = speech.types.RecognitionConfig(

encoding=speech.RecognitionConfig.AudioEncoding.LINEAR16,

sample_rate_hertz=16000,

language_code='en-US',

enable_speaker_diarization=True,

enable_automatic_punctuation=True,

enable_word_time_offsets=True,

diarization_speaker_count=2,

use_enhanced=True,

model='phone_call',

profanity_filter=False,

enable_word_confidence=True)

print('Waiting for operation to complete…')

operation = client.long_running_recognize(config=config, audio=audio)

response = operation.result(timeout=100000)

with open('output_file.txt', "w") as text_file:

for result in response.results:

alternative = result.alternatives[0]

confidence = result.alternatives[0].confidence

current_speaker_tag=-1

transcript = ""

time = 0

for word in alternative.words:

if word.speaker_tag != current_speaker_tag:

if (transcript != ""):

print(u"Speaker {} - {} - {} - {}".format(current_speaker_tag, str(datetime.timedelta(seconds=time)), transcript, confidence), file=text_file)

transcript = ""

current_speaker_tag = word.speaker_tag

time = word.start_time.seconds

transcript = transcript + " " + word.word

if transcript != "":

print(u"Speaker {} - {} - {} - {}".format(current_speaker_tag, str(datetime.timedelta(seconds=time)), transcript, confidence), file=text_file)

print(u"Speech to text operation is completed, output file is created: {}".format('output_file.txt'))

回答 1

Stack Overflow用户

发布于 2022-06-15 05:40:13

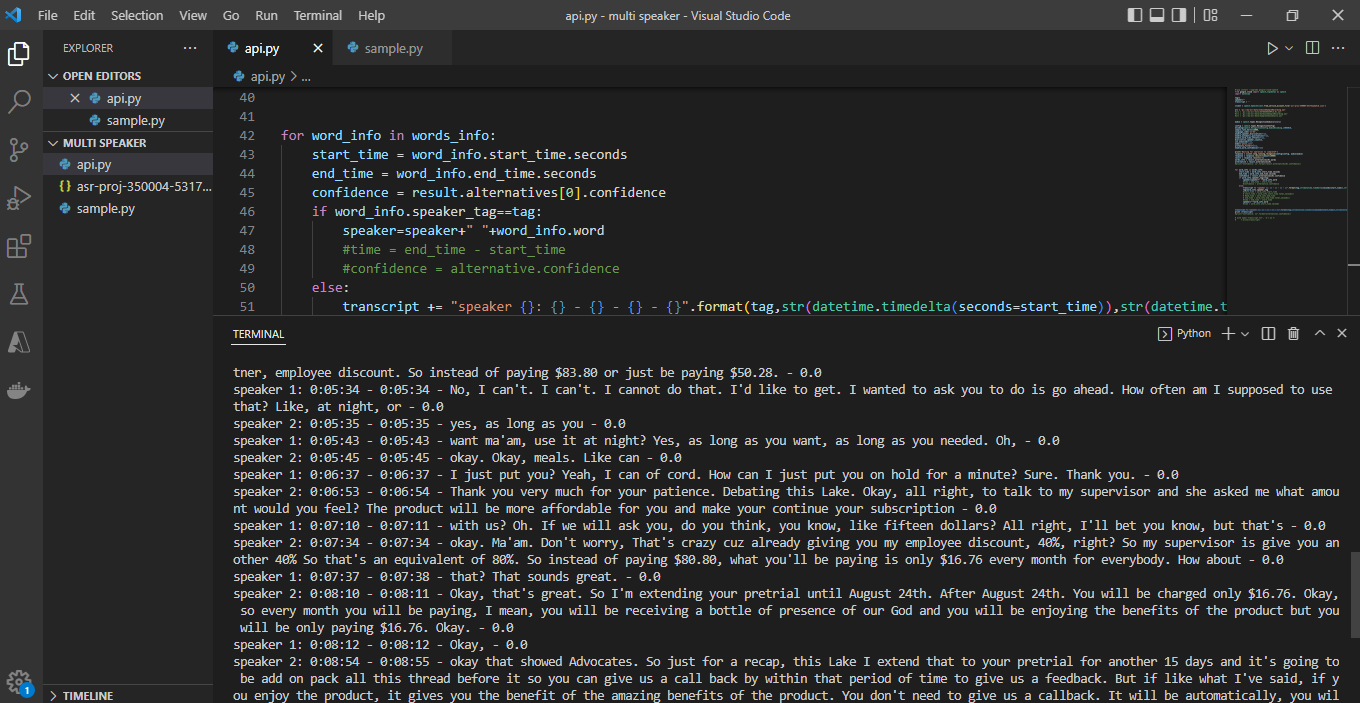

问题中的代码和屏幕截图各不相同。然而,从截图中可以理解,您正在使用speech to text speaker diarization方法创建单个演讲者的演讲。

在这里,您无法计算每个说话人的不同自信,因为response包含每个文本和单个单词的confidence值。一份记录可以包含也可以不包含多个说话者的话,取决于音频。

同样,根据文档,response在最后一个结果列表中包含所有带有speaker_tag的words。从医生那里

每个结果中的文字记录是分开的,每个结果都是顺序的。然而,备选案文中的清单一词包括迄今为止所有结果中的所有词语。因此,要获得带有扬声器标记的所有单词,只需从最后结果中获取单词列表即可。

对于最后一个结果列表,置信度为0。您可以在控制台或任何文件中写入响应并自己调试。

# Detects speech in the audio file

operation = client.long_running_recognize(config=config, audio=audio)

response = operation.result(timeout=10000)

# check the whole response

with open('output_file.txt', "w") as text_file:

print(response,file=text_file)或者你也可以打印个人成绩单和自信,以便更好地理解.eg:

#confidence for each transcript

for result in response.results:

alternative = result.alternatives[0]

print("Transcript: {}".format(alternative.transcript))

print("Confidence: {}".format(alternative.confidence))对于每个演讲者的持续时间问题,您计算的是每个单词的开始时间和结束时间,而不是单个发言者的起始时间和结束时间。这个想法应该是这样的:-

- 获取演讲者的第一个单词的起始时间作为持续时间开始时间。

- 总是将每个单词的结束时间设置为持续结束时间,因为我们不知道下一个单词是否有不同的发言人。

- 注意说话人的变化,如果说话人是相同的,那么只需在修改后的抄本中添加单词,否则也会做同样的事情,并重新设置新发言人的开始时间。例:

tag=1

speaker=""

transcript = ''

start_time=""

end_time=""

for word_info in words_info:

end_time = word_info.end_time.seconds #tracking the end time of speech

if start_time=='' :

start_time = word_info.start_time.seconds #setting the value only for first time

if word_info.speaker_tag==tag:

speaker=speaker+" "+word_info.word

else:

transcript += "speaker {}: {}-{} - {}".format(tag,str(datetime.timedelta(seconds=start_time)),str(datetime.timedelta(seconds=end_time)),speaker) + '\n'

tag=word_info.speaker_tag

speaker=""+word_info.word

start_time = word_info.start_time.seconds #resetting the starttime as we found a new speaker

transcript += "speaker {}: {}-{} - {}".format(tag,str(datetime.timedelta(seconds=start_time)),str(datetime.timedelta(seconds=end_time)),speaker) + '\n'我已经删除了修改成绩单中的信心部分,因为它将始终是0。还请记住,Speaker diarization仍处于beta开发阶段,您可能无法得到所需的确切输出。

https://stackoverflow.com/questions/72429536

复制相似问题