How to load complex data using PySpark


Asked on 2022-05-26 09:08:14

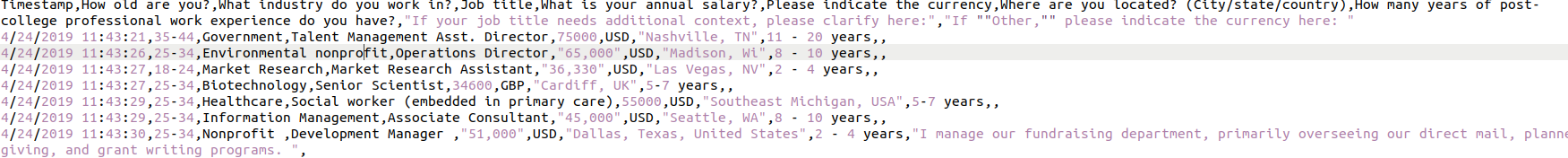

我有一个CSV dataset,如下所示:

此外,PFB数据以文本形式存在:

```
Timestamp,How old are you?,What industry do you work in?,Job title,What is your annual salary?,Please indicate the currency,Where are you located? (City/state/country),How many years of post-college professional work experience do you have?,"If your job title needs additional context, please clarify here:","If ""Other,"" please indicate the currency here: "
4/24/2019 11:43:21,35-44,Government,Talent Management Asst. Director,75000,USD,"Nashville, TN",11 - 20 years,,
4/24/2019 11:43:26,25-34,Environmental nonprofit,Operations Director,"65,000",USD,"Madison, Wi",8 - 10 years,,
4/24/2019 11:43:27,18-24,Market Research,Market Research Assistant,"36,330",USD,"Las Vegas, NV",2 - 4 years,,
4/24/2019 11:43:27,25-34,Biotechnology,Senior Scientist,34600,GBP,"Cardiff, UK",5-7 years,,
4/24/2019 11:43:29,25-34,Healthcare,Social worker (embedded in primary care),55000,USD,"Southeast Michigan, USA",5-7 years,,
4/24/2019 11:43:29,25-34,Information Management,Associate Consultant,"45,000",USD,"Seattle, WA",8 - 10 years,,
4/24/2019 11:43:30,25-34,Nonprofit ,Development Manager ,"51,000",USD,"Dallas, Texas, United States",2 - 4 years,"I manage our fundraising department, primarily overseeing our direct mail, planned giving, and grant writing programs. ",
4/24/2019 11:43:30,25-34,Higher Education,Student Records Coordinator,"54,371",USD,Philadelphia,8 - 10 years,equivalent to Assistant Registrar,
4/25/2019 8:35:51,25-34,Marketing,Associate Product Manager,"43,000",USD,"Cincinnati, OH, USA",5-7 years,"I started as the Marketing Coordinator, and was given the ""Associate Product Manager"" title as a promotion. My duties remained mostly the same and include graphic design work, marketing, and product management.",
```

Now, I tried the following code to load the data:

df = spark.read.option("header", "true").option("multiline", "true").option(

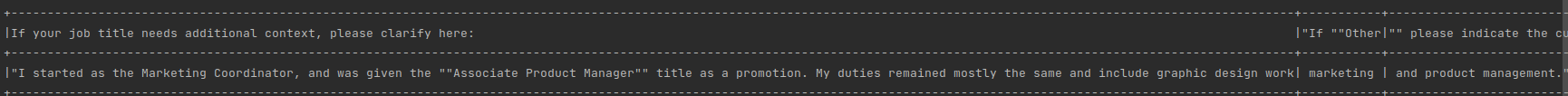

"delimiter", ",").csv("path")它给出了最后一个记录的输出,该记录对列进行了划分,并且输出也不是预期的那样:

The last column, `"If ""Other,"" please indicate the currency here: "`, should be null, and the whole string should be contained in the preceding column, `"If your job title needs additional context, please clarify here:"`.

I also tried `.option('quote','/"').option('escape','/"')`, but that did not work either.

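However, when I load the same file with Pandas, it is parsed correctly. I am surprised that Pandas can work out where each new column starts. I could perhaps define a string schema for all the columns and load the data back into a Spark DataFrame, but because I am on an older Spark version, that approach does not run in a distributed way, so I am looking for a way to handle this efficiently.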

Any help is much appreciated.
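Pandas most likely parses the file correctly because `pandas.read_csv` defaults to `doublequote=True`: inside a quoted field, two consecutive quote characters (`""`) stand for one literal quote instead of closing the field. Python's standard `csv` module uses the same default, so the stdlib-only sketch below (an illustration, not Spark and not the asker's code) parses the header and the problematic last row from the question and shows that they split into the expected 10 columns, with the long comment in the ninth column and an empty tenth column.

```python
import csv
import io

# Header and last data row copied verbatim from the question's sample file.
RAW = (
    "Timestamp,How old are you?,What industry do you work in?,Job title,"
    "What is your annual salary?,Please indicate the currency,"
    "Where are you located? (City/state/country),"
    "How many years of post-college professional work experience do you have?,"
    '"If your job title needs additional context, please clarify here:",'
    '"If ""Other,"" please indicate the currency here: "\n'
    '4/25/2019 8:35:51,25-34,Marketing,Associate Product Manager,"43,000",USD,'
    '"Cincinnati, OH, USA",5-7 years,"I started as the Marketing Coordinator, '
    'and was given the ""Associate Product Manager"" title as a promotion. '
    "My duties remained mostly the same and include graphic design work, "
    'marketing, and product management.",\n'
)

# csv.reader defaults to doublequote=True: inside a quoted field, "" is read
# as one literal " and does not end the field.
header, row = csv.reader(io.StringIO(RAW))

print(len(header), len(row))  # 10 10
print(header[9])              # If "Other," please indicate the currency here:
print(row[8])                 # the whole comment, including "Associate Product Manager"
print(repr(row[9]))           # ''
```

The trailing comma after the quoted comment yields an empty tenth field, which is the layout the question expects. A Spark reader configured with the same doubled-quote convention (`escape` set to `"`) should produce the same split.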

1 answer

Stack Overflow user

Accepted answer

Posted on 2022-05-26 11:28:37

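The main problem is the consecutive double quotes in the CSV file. You have to escape the extra double quotes in the CSV file, as shown below: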

```
4/24/2019 11:43:30,25-34,Higher Education,Student Records Coordinator,"54,371",USD,Philadelphia,8 - 10 years,equivalent to Assistant Registrar,
4/25/2019 8:35:51,25-34,Marketing,Associate Product Manager,"43,000",USD,"Cincinnati, OH, USA",5-7 years,"I started as the Marketing Coordinator, and was given the \"\"Associate Product Manager\"\" title as a promotion. My duties remained mostly the same and include graphic design work, marketing, and product management.",
```

After that, it produces the expected result:

```
df2 = spark.read.option("header",True).csv("sample1.csv")
df2.show(10,truncate=False)
```

*Output*

```
|4/25/2019 8:35:51 |25-34 |Marketing |Associate Product Manager |43,000 |USD |Cincinnati, OH, USA |5-7 years |I started as the Marketing Coordinator, and was given the ""Associate Product Manager"" title as a promotion. My duties remained mostly the same and include graphic design work, marketing, and product management.|null |null |
```

Alternatively, you can use the code below:

```
df2 = spark.read.option("header",True).option("multiline","true").option("escape","\"").csv("sample1.csv")
```

The original page content is provided by Stack Overflow. Translation support is provided by Tencent Cloud Xiaowei's IT-domain engine.

Original link:

https://stackoverflow.com/questions/72389385
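The accepted answer's first approach edits the file by hand so that Spark's default escape character (a backslash) applies. If changing the reader options is not possible, that preprocessing step could be automated. The sketch below is an assumption, not the answer's code: the helper name `rewrite_with_backslash_escapes` is hypothetical, it relies on Python's standard `csv` module, and it writes each embedded quote as a single `\"` rather than the doubled `\"\"` shown in the answer, so Spark with its default `escape` should return `"Associate Product Manager"` instead of `""Associate Product Manager""`.

```python
import csv
import io


def rewrite_with_backslash_escapes(text: str) -> str:
    # Hypothetical helper (not from the answer). Parse RFC 4180 CSV text, in
    # which "" inside a quoted field means one literal quote, and write it back
    # with every embedded quote escaped as \" instead.
    reader = csv.reader(io.StringIO(text))
    out = io.StringIO()
    writer = csv.writer(
        out,
        doublequote=False,   # do not emit "" for an embedded quote...
        escapechar="\\",     # ...emit \" instead (Spark's default escape char)
        lineterminator="\n",
    )
    writer.writerows(reader)
    return out.getvalue()


# Last data row from the question, exactly as it appears in the file.
SAMPLE = (
    '4/25/2019 8:35:51,25-34,Marketing,Associate Product Manager,"43,000",USD,'
    '"Cincinnati, OH, USA",5-7 years,"I started as the Marketing Coordinator, '
    'and was given the ""Associate Product Manager"" title as a promotion. '
    "My duties remained mostly the same and include graphic design work, "
    'marketing, and product management.",\n'
)

converted = rewrite_with_backslash_escapes(SAMPLE)
print(converted, end="")
```

For a real file, open the input and output with `newline=""` (as the `csv` module documentation recommends) and point `spark.read.csv` at the rewritten file. This reads the whole text into memory on one machine, so it only suits files of modest size. It has only been reasoned through for the sample row above; a field containing a quote but no comma is written unquoted, and how Spark treats an escape inside an unquoted field is not checked here.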
