使用两个PySpark列通过GroupBy创建JSON字符串

使用两个PySpark列通过GroupBy创建JSON字符串

提问于 2022-04-25 11:25:13

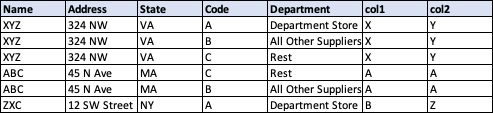

我有一个火花数据,如下所示。我想要创建一个列'new_col‘,它按除'Code’和'Department‘以外的所有列分组,并根据'Code’和'Department‘列分配一个JSON结构。

需要首先对数据进行排序。除了“代码”和“部门”列外,行1-3和4-5是重复的.因此,我希望为前3行创建new_col作为{“代码”:"A“、”部门“:”百货公司“}、{”代码“:"B”、“部门”:“所有其他供应商”}、{“代码”:"C“、”部门“:"Rest"}

我的输入数据:

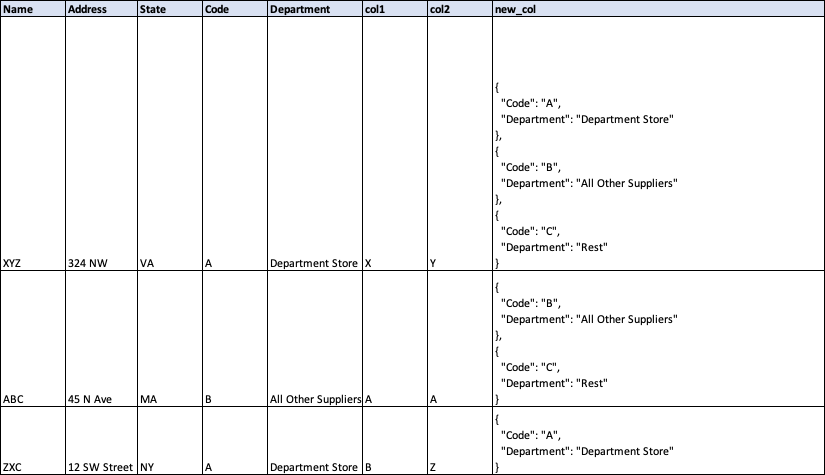

预期输出火花数据:

回答 1

Stack Overflow用户

回答已采纳

发布于 2022-04-25 12:03:50

像这样的事情应该可以做到:

from pyspark.sql import functions as F, Window as W

df = spark.createDataFrame(

[('XYZ', '324 NW', 'VA', 'A', 'Department Store', 'X', 'Y'),

('XYZ', '324 NW', 'VA', 'B', 'All Other Suppliers', 'X', 'Y'),

('XYZ', '324 NW', 'VA', 'C', 'Rest', 'X', 'Y'),

('ABC', '45 N Ave', 'MA', 'C', 'Rest', 'A', 'A'),

('ABC', '45 N Ave', 'MA', 'B', 'All Other Suppliers', 'A', 'A'),

('ZXC', '12 SW Street', 'NY', 'A', 'Department Store', 'B', 'Z')],

['Name', 'Address', 'State', 'Code', 'Department', 'col1', 'col2']

)

cols = [c for c in df.columns if c not in ['Code', 'Department']]

w1 = W.partitionBy(cols).orderBy('Code')

w2 = W.partitionBy(cols).orderBy(F.desc('Code'))

df = (df

.withColumn('_rn', F.row_number().over(w1))

.withColumn('new_col', F.collect_list(F.to_json(F.struct(['Code', 'Department']))).over(w2))

.withColumn("new_col", F.array_join("new_col", ","))

.filter('_rn=1')

.drop('_rn')

)df.show(truncate=False)

# +----+------------+-----+----+-------------------+----+----+-----------------------------------------------------------------------------------------------------------------------------+

# |Name|Address |State|Code|Department |col1|col2|new_col |

# +----+------------+-----+----+-------------------+----+----+-----------------------------------------------------------------------------------------------------------------------------+

# |ABC |45 N Ave |MA |B |All Other Suppliers|A |A |{"Code":"C","Department":"Rest"},{"Code":"B","Department":"All Other Suppliers"} |

# |XYZ |324 NW |VA |A |Department Store |X |Y |{"Code":"C","Department":"Rest"},{"Code":"B","Department":"All Other Suppliers"},{"Code":"A","Department":"Department Store"}|

# |ZXC |12 SW Street|NY |A |Department Store |B |Z |{"Code":"A","Department":"Department Store"} |

# +----+------------+-----+----+-------------------+----+----+-----------------------------------------------------------------------------------------------------------------------------+页面原文内容由Stack Overflow提供。腾讯云小微IT领域专用引擎提供翻译支持

原文链接:

https://stackoverflow.com/questions/71998770

复制相关文章

相似问题