How to export scraped data using FEEDS/feed exports in Scrapy

Asked 2021-12-30 23:59:43

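I am new to web scraping and to Python.

Version: Scrapy 2.5.1; OS: Windows; IDE: PyCharm

I am trying to use the FEEDS option in Scrapy to automatically export the data scraped from a website, so that I can open it in Excel.

I tried the following solution from Stack Overflow, but it did not work. I am not sure what I am doing wrong. Am I missing something?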

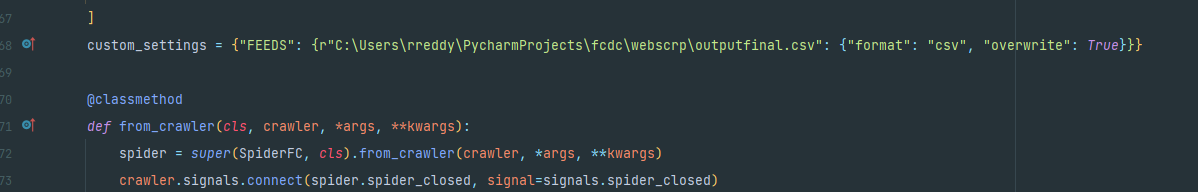

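I also tried adding the same setting to my settings.py file after commenting out custom_settings in the spider class, following the example in the documentation: https://docs.scrapy.org/en/latest/topics/feed-exports.html?highlight=feed#feeds

For now, I have met my requirement by using the spider_closed signal to write the data to CSV: all scraped item data is stored in an array named result.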

    import csv
    from urllib.parse import urljoin

    import scrapy
    from scrapy import signals


    class SpiderFC(scrapy.Spider):
        name = "FC"
        start_urls = [
            url,
        ]
        custom_setting = {"FEEDS": {r"C:\Users\rreddy\PycharmProjects\fcdc\webscrp\outputfinal.csv": {"format": "csv", "overwrite": True}}}

        @classmethod
        def from_crawler(cls, crawler, *args, **kwargs):
            spider = super(SpiderFC, cls).from_crawler(crawler, *args, **kwargs)
            crawler.signals.connect(spider.spider_closed, signal=signals.spider_closed)
            return spider

        def __init__(self, name=None):
            super().__init__(name)
            self.count = None

        def parse(self, response, **kwargs):
            # each item scraped from the parent page has a link to the page holding the actual data,
            # so I follow each link and scrape the data there
            yield response.follow(notice_href_follow, callback=self.parse_item,
                                  meta={'item': item, 'index': index, 'next_page': next_page})

        def parse_item(self, response):
            # logic for items to scrape goes here
            # they are saved to a temp list and appended to the result array, then the temp list is cleared
            result.append(it)  # result data is used at the end to write to csv
            item.clear()
            if next_page:
                yield next(self.follow_next(response, next_page))

        def follow_next(self, response, next_page):
            next_page_url = urljoin(url, next_page[0])
            yield response.follow(next_page_url, callback=self.parse)

The spider_closed signal handler:

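    def spider_closed(self, spider):
        with open(output_path, mode="a", newline='') as f:
            writer = csv.writer(f)
            for v in result:
                writer.writerow([v["city"]])

Once all the data has been scraped and all requests have completed, the spider_closed signal writes the data to a CSV file. I would like to avoid this extra logic and use Scrapy's built-in exporter instead, but I am having trouble getting the data exported.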

1 Answer

Stack Overflow user

Accepted answer

Answered 2021-12-31 02:30:29

Check your path. If you are on Windows, provide the full path in custom_settings, as shown below:

    custom_settings = {
        "FEEDS": {r"C:\Users\Name\Path\To\outputfinal.csv": {"format": "csv", "overwrite": True}}
    }

If you are on Linux or macOS, provide the path like this:

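    custom_settings = {
        "FEEDS": {r"/Path/to/folder/fcdc/webscrp/outputfinal.csv": {"format": "csv", "overwrite": True}}
    }

Alternatively, provide a relative path as shown below. This creates the folder structure fcdc/webscrp/outputfinal.csv under the directory from which the spider is run.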

    custom_settings = {
        "FEEDS": {r"./fcdc/webscrp/outputfinal.csv": {"format": "csv", "overwrite": True}}
    }

Original question on Stack Overflow:

https://stackoverflow.com/questions/70537837
