SIFT matching in computer vision

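I need to determine the location of the yogurts in a supermarket. The source photo looks like this:

I have a template:

I extract the template's keypoints using SIFT: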

import glob

import cv2 as cv
import matplotlib.pyplot as plt
import numpy as np
from skimage.measure import ransac
from skimage.transform import AffineTransform

img1 = cv.imread('train.jpg')  # queryImage

# Initiate SIFT detector
sift = cv.SIFT_create()
kp1, des1 = sift.detectAndCompute(img1, None)

path = glob.glob("template.jpg")
cv_img = []
l = 0

for img in path:
    img2 = cv.imread(img)  # trainImage

    # find the keypoints and descriptors with SIFT
    kp2, des2 = sift.detectAndCompute(img2, None)

    # FLANN parameters
    FLANN_INDEX_KDTREE = 1
    index_params = dict(algorithm=FLANN_INDEX_KDTREE, trees=5)
    search_params = dict(checks=50)  # or pass empty dictionary
    flann = cv.FlannBasedMatcher(index_params, search_params)
    matches = flann.knnMatch(des1, des2, k=2)

    # keep the template image that produced the most matches
    if l < len(matches):
        l = len(matches)
        image = img2
        match = matches

h_query, w_query, _ = img2.shape

# Need to draw only good matches, so create a mask
matchesMask = [[0, 0] for i in range(len(match))]
good_matches = []
good_matches_indices = {}

# ratio test as per Lowe's paper
for i, (m, n) in enumerate(match):
    if m.distance < 0.7 * n.distance:
        matchesMask[i] = [1, 0]
        good_matches.append(m)
        good_matches_indices[len(good_matches) - 1] = i

bboxes = []
src_pts = np.float32([kp1[m.queryIdx].pt for m in good_matches]).reshape(-1, 2)
dst_pts = np.float32([kp2[m.trainIdx].pt for m in good_matches]).reshape(-1, 2)

# initialize_ransac is a helper whose definition is not shown in the question
model, inliers = initialize_ransac(src_pts, dst_pts)
n_inliers = np.sum(inliers)
matched_indices = [good_matches_indices[idx] for idx in inliers.nonzero()[0]]
print(len(matched_indices))

# Fit a single affine transform to all good matches with RANSAC
model, inliers = ransac(
    (src_pts, dst_pts),
    AffineTransform, min_samples=4,
    residual_threshold=4, max_trials=20000
)
n_inliers = np.sum(inliers)
print(n_inliers)
matched_indices = [good_matches_indices[idx] for idx in inliers.nonzero()[0]]
print(matched_indices)

# Map the corners of img2 back into img1; the points are in (x, y) order,
# so the far corner is (width, height)
q_coordinates = np.array([(0, 0), (w_query, h_query)])
coords = model.inverse(q_coordinates)
print(coords)
# bboxes_list.append((i, coords))

M, mask = cv.findHomography(src_pts, dst_pts, cv.RANSAC, 2)

draw_params = dict(matchColor=(0, 255, 0),
                   singlePointColor=(255, 0, 0),
                   matchesMask=matchesMask,
                   flags=cv.DrawMatchesFlags_DEFAULT)
img3 = cv.drawMatchesKnn(img1, kp1, image, kp2, match, None, **draw_params)
plt.imshow(img3)
plt.show()

The SIFT result looks like this:

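The question is: what is the best way to get the corner points of a rectangle around each yogurt? I tried RANSAC, but that approach does not work in this case.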

4 Answers

Stack Overflow user

Posted on 2022-04-15 21:36:06

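I propose an approach based on the one discussed in this paper. I have modified the method somewhat because the use case is not exactly the same, but they do use SIFT feature matching to locate multiple objects in video frames. They use PCA to reduce the running time, but that may not be necessary for still images.

Sorry, I cannot write the code for this, as it would take a lot of time, but I think this should work for finding all occurrences of the template object.

The modified method is as follows:

Divide the template image into regions: left, center and right along the horizontal axis, and top and bottom along the vertical axis.

Now, when you match features between the template and the source image, you will get matches from keypoints in some of these regions at multiple locations in the source image. You can use these keypoints to identify which region of the template is present at which location in the source image. If regions overlap, i.e. keypoints from different regions match keypoints that are close together in the source image, that indicates a wrong match.

Label each set of matched keypoints within a neighborhood on the source image as left, center, right, top or bottom, depending on which region of the template image the majority of their matches come from.

Starting from each left region on the source image, move to the right. If we find a center region followed by a right region, the area of the source image between the regions labeled left and right can be marked as the location of one template object.

When moving right from a left region, objects may overlap, which can produce a left region followed by another left region. The area between the two left regions can be marked as one template object.

To refine the locations further, each area of the source image marked as one template object can be cropped and re-matched against the template image.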
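The left-to-right scan described in the steps above could be sketched roughly as follows. This is only a minimal illustration under stated assumptions, not the method from the paper: `horizontal_region` and `pair_left_right` are hypothetical helper names, the template is split into three equal vertical strips, and each group of neighbouring keypoints is assumed to have already been reduced to one x position in the source image plus its majority label. For brevity the sketch does not insist on a center group between a left and a right group.

```python
def horizontal_region(x, template_width):
    """Return 'left', 'center' or 'right' for an x coordinate in the template.

    Assumption: the template is split into three equal vertical strips.
    """
    third = template_width / 3.0
    if x < third:
        return "left"
    if x < 2 * third:
        return "center"
    return "right"


def pair_left_right(groups):
    """Turn labelled keypoint groups into horizontal object spans.

    groups: list of (x_in_source, label) tuples for one shelf row, where
    label is the majority template region of that group.
    Returns a list of (x_start, x_end) spans, one per detected object.
    """
    spans = []
    start = None  # x position of the currently open 'left' group, if any
    for x, label in sorted(groups):
        if label == "left":
            if start is not None:
                # left followed by another left: overlapping objects,
                # so close the first object at the second left group
                spans.append((start, x))
            start = x
        elif label == "right" and start is not None:
            spans.append((start, x))
            start = None
    return spans


groups = [(10, "left"), (30, "center"), (50, "right"),
          (60, "left"), (90, "left"), (120, "right")]
print(pair_left_right(groups))
```

Each span could then be cropped from the source image and re-matched against the template, as the last step suggests.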

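Stack Overflow user

Posted on 2022-04-15 07:42:18

Try working spatially: for each keypoint in img2, put a bounding box around it and pass only the points inside that box to your RANSAC homography estimation, then check which fit is best.

You can also use overlapping windows and discard similar resulting homographies afterwards.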
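As a rough sketch of the overlapping-window variant, the snippet below only groups matched points by window; it does not estimate anything itself. The names `sliding_windows` and `matches_per_window`, the window size, and the step are all assumptions made for illustration. Each returned index list could then be used to select the corresponding source and destination points for a per-window RANSAC fit, for example with `cv.findHomography(..., cv.RANSAC, ...)` as in the question.

```python
def sliding_windows(width, height, win, step):
    """Yield (x0, y0, x1, y1) square windows; they overlap when step < win."""
    for y0 in range(0, max(height - win, 0) + 1, step):
        for x0 in range(0, max(width - win, 0) + 1, step):
            yield (x0, y0, x0 + win, y0 + win)


def matches_per_window(points, width, height, win, step, min_matches=4):
    """Map each window to the indices of the matched points inside it.

    points: list of (x, y) positions of matched keypoints in the large image.
    Windows with fewer than min_matches points are dropped, because a
    homography needs at least four correspondences.
    """
    result = {}
    for (x0, y0, x1, y1) in sliding_windows(width, height, win, step):
        idx = [i for i, (x, y) in enumerate(points)
               if x0 <= x < x1 and y0 <= y < y1]
        if len(idx) >= min_matches:
            result[(x0, y0, x1, y1)] = idx
    return result


pts = [(10, 10), (20, 15), (30, 30), (40, 45), (150, 150)]
windows = matches_per_window(pts, width=200, height=200, win=100, step=50)
print(windows)
```

Because neighbouring windows share points, the same object will usually be found more than once; comparing the resulting transforms (or their projected boxes) and dropping near-duplicates is the "discard similar homographies" step.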

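Stack Overflow user

Posted on 2022-04-17 11:32:23

Here is what you can do.

Base image = full picture of the shelf

Template image = image of a single product

- Get SIFT features from both images (the base image and the template image).

- Do feature matching.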

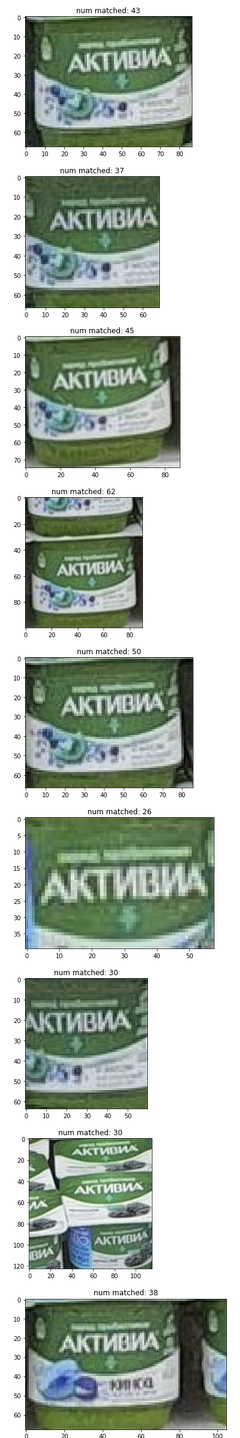

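- Get all the matched points in the base image (see figure).

- Create clusters based on the size of the template image (the threshold here is 50 px).

- Find the bounding boxes of the clusters.

- Crop each bounding box and check its matches against the template image.

- Accept all clusters that have at least a minimum percentage of matches (here, at least 10% of the keypoints).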

import cv2
import matplotlib.pyplot as plt
import numpy as np
from tqdm import tqdm


def plot_pts(img, pts):
    """Draw every point in pts on a copy of img and show it."""
    img_plot = img.copy()
    for i in range(len(pts)):
        img_plot = cv2.circle(img_plot, (int(pts[i][0]), int(pts[i][1])), radius=7, color=(255, 0, 0), thickness=-1)
    plt.figure(figsize=(20, 10))
    plt.imshow(img_plot)


def plot_bbox(img, bbox_list):
    """Draw every bounding box (four corner points) on a copy of img and show it."""
    img_plot = img.copy()
    for i in range(len(bbox_list)):
        # cv2.rectangle expects integer tuples for the two opposite corners
        start_pt = tuple(int(v) for v in bbox_list[i][0])
        end_pt = tuple(int(v) for v in bbox_list[i][2])
        img_plot = cv2.rectangle(img_plot, pt1=start_pt, pt2=end_pt, color=(255, 0, 0), thickness=2)
    plt.figure(figsize=(20, 10))
    plt.imshow(img_plot)


def get_distance(pt1, pt2):
    """Euclidean distance between two (x, y) points."""
    x1, y1 = pt1
    x2, y2 = pt2
    return np.sqrt(np.square(x1 - x2) + np.square(y1 - y2))


def check_centroid(pt, centroid):
    """Return True if pt is closer to centroid than max_distance (a global set further down)."""
    x, y = pt
    cx, cy = centroid
    distance = get_distance(pt1=(x, y), pt2=(cx, cy))
    return distance < max_distance


def update_centroid(pt, centroids_list):
    """Add pt to the first cluster whose centroid is close enough, else start a new cluster."""
    new_centroids_list = centroids_list.copy()
    flag_new_centroid = True
    for j, c in enumerate(centroids_list):
        temp_centroid = np.mean(c, axis=0)
        if_close = check_centroid(pt, temp_centroid)
        if if_close:
            new_centroids_list[j].append(pt)
            flag_new_centroid = False
            break
    if flag_new_centroid:
        new_centroids_list.append([pt])
    new_centroids_list = recheck_centroid(new_centroids_list)
    return new_centroids_list


def recheck_centroid(centroids_list):
    """Remove duplicate points from every cluster."""
    new_centroids_list = [list(set(c)) for c in centroids_list]
    return new_centroids_list


def get_bbox(pts):
    """Axis-aligned bounding box of pts as four corners, starting at (min x, min y)."""
    minn_x, minn_y = np.min(pts, axis=0)
    maxx_x, maxx_y = np.max(pts, axis=0)
    return [[minn_x, minn_y], [maxx_x, minn_y], [maxx_x, maxx_y], [minn_x, maxx_y]]

class RotateAndTransform:
    def __init__(self, path_img_ref):
        self.path_img_ref = path_img_ref
        self.ref_img = self._read_ref_image()

        # sift
        self.sift = cv2.SIFT_create()

        # feature matching
        self.bf = cv2.BFMatcher()

        # FLANN parameters
        FLANN_INDEX_KDTREE = 1
        index_params = dict(algorithm=FLANN_INDEX_KDTREE, trees=5)
        search_params = dict(checks=50)  # or pass empty dictionary
        self.flann = cv2.FlannBasedMatcher(index_params, search_params)

    def _read_ref_image(self):
        ref_img = cv2.imread(self.path_img_ref, cv2.IMREAD_COLOR)
        ref_img = cv2.cvtColor(ref_img, cv2.COLOR_BGR2RGB)
        return ref_img

    def read_src_image(self, path_img_src):
        self.path_img_src = path_img_src
        # read images
        # ref_img = cv2.imread(self.path_img_ref, cv2.IMREAD_COLOR)
        src_img = cv2.imread(path_img_src, cv2.IMREAD_COLOR)
        src_img = cv2.cvtColor(src_img, cv2.COLOR_BGR2RGB)
        return src_img

    def convert_bw(self, img):
        img_bw = cv2.cvtColor(img, cv2.COLOR_RGB2GRAY)
        return img_bw

    def get_keypoints_descriptors(self, img_bw):
        keypoints, descriptors = self.sift.detectAndCompute(img_bw, None)
        return keypoints, descriptors

    def get_matches(self, src_descriptors, ref_descriptors, threshold=0.6):
        # ref descriptors are the query set, src descriptors the train set
        matches = self.bf.knnMatch(ref_descriptors, src_descriptors, k=2)
        flann_matches = self.flann.knnMatch(ref_descriptors, src_descriptors, k=2)

        good_matches = []
        good_flann_matches = []

        # Apply ratio test for Brute Force
        for m, n in matches:
            if m.distance < threshold * n.distance:
                good_matches.append([m])
        print(f'Number of BF Match: {len(matches)}, Number of good BF Match: {len(good_matches)}')

        # Apply ratio test for FLANN
        for m, n in flann_matches:
            if m.distance < threshold * n.distance:
                good_flann_matches.append([m])
        # matches = sorted(matches, key = lambda x:x.distance)
        print(f'Number of FLANN Match: {len(flann_matches)}, Number of good Flann Match: {len(good_flann_matches)}')

        return good_matches, good_flann_matches

    def get_src_dst_pts(self, good_flann_matches, ref_keypoints, src_keypoints):
        pts_src = []
        pts_ref = []
        n = len(good_flann_matches)
        for i in range(n):
            ref_index = good_flann_matches[i][0].queryIdx
            src_index = good_flann_matches[i][0].trainIdx
            pts_src.append(src_keypoints[src_index].pt)
            pts_ref.append(ref_keypoints[ref_index].pt)
        return np.array(pts_src), np.array(pts_ref)

def extend_bbox(bbox, increment=0.1):
    """Push each corner outwards by `increment` times its own coordinate value."""
    bbox_new = bbox.copy()
    bbox_new[0] = [bbox_new[0][0] - int(bbox_new[0][0] * increment), bbox_new[0][1] - int(bbox_new[0][1] * increment)]
    bbox_new[1] = [bbox_new[1][0] + int(bbox_new[1][0] * increment), bbox_new[1][1] - int(bbox_new[1][1] * increment)]
    bbox_new[2] = [bbox_new[2][0] + int(bbox_new[2][0] * increment), bbox_new[2][1] + int(bbox_new[2][1] * increment)]
    bbox_new[3] = [bbox_new[3][0] - int(bbox_new[3][0] * increment), bbox_new[3][1] + int(bbox_new[3][1] * increment)]
    return bbox_new


def crop_bbox(img, bbox):
    """Crop img to bbox; corners are (x, y), while images are indexed [row, column]."""
    x0, y0 = bbox[0]
    w, h = bbox[1][0] - bbox[0][0], bbox[2][1] - bbox[0][1]
    return img[y0: y0 + h, x0: x0 + w, :]

# path_img_base and path_img_ref are the paths of the two input images; set them beforehand
rnt = RotateAndTransform(path_img_ref)
ref_img = rnt.ref_img  # RGB copy, so crops convert and display correctly
ref_img_bw = rnt.convert_bw(img=rnt.ref_img)
ref_keypoints, ref_descriptors = rnt.get_keypoints_descriptors(ref_img_bw)

base_img = rnt.read_src_image(path_img_src=path_img_base)
base_img_bw = rnt.convert_bw(img=base_img)
base_keypoints, base_descriptors = rnt.get_keypoints_descriptors(base_img_bw)

good_matches, good_flann_matches = rnt.get_matches(src_descriptors=base_descriptors, ref_descriptors=ref_descriptors, threshold=0.6)

# The clustered points are taken from ref_keypoints, so the clusters and crops below live in ref_img
ref_points = []
for gm in good_flann_matches:
    x, y = ref_keypoints[gm[0].queryIdx].pt
    x, y = int(x), int(y)
    ref_points.append((x, y))

max_distance = 50

# Greedy clustering: each point joins the first cluster whose centroid is within max_distance
centroids = [[ref_points[0]]]
for i in tqdm(range(len(ref_points))):
    pt = ref_points[i]
    centroids = update_centroid(pt, centroids)

bbox = [get_bbox(c) for c in centroids]
centroids = [np.mean(c, axis=0) for c in centroids]
print(f'Number of Points: {len(good_flann_matches)}, centroids: {len(centroids)}')

# Crop every cluster and match the crop again
data = []
for i in range(len(bbox)):
    temp_crop_img = crop_bbox(ref_img, extend_bbox(bbox[i], 0.01))
    if temp_crop_img.size == 0:
        continue  # skip degenerate clusters that produce an empty crop
    temp_crop_img_bw = rnt.convert_bw(img=temp_crop_img)
    temp_crop_keypoints, temp_crop_descriptors = rnt.get_keypoints_descriptors(temp_crop_img_bw)
    if temp_crop_descriptors is None:
        continue  # SIFT found no keypoints in this crop
    good_matches, good_flann_matches = rnt.get_matches(src_descriptors=base_descriptors, ref_descriptors=temp_crop_descriptors, threshold=0.6)
    temp_data = {'image': temp_crop_img,
                 'num_matched': len(good_flann_matches),
                 'total_keypoints': len(base_keypoints),
                 }
    data.append(temp_data)

# Keep only the crops with enough good matches
filter_data = [{'num_matched': i['num_matched'], 'image': i['image']} for i in data if i['num_matched'] > 25]

for i in range(len(filter_data)):
    temp_num_match = filter_data[i]['num_matched']
    plt.figure()
    plt.title(f'num matched: {temp_num_match}')
    plt.imshow(filter_data[i]['image'])
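The step list in this answer accepts clusters by a minimum percentage of matches, while the script filters with a fixed count (`num_matched > 25`). A percentage-based filter might look like the sketch below. It is one possible reading only: `filter_by_match_fraction` is a hypothetical helper, and it assumes the percentage is taken relative to the `total_keypoints` value stored in each entry of `data`, which the answer does not state explicitly.

```python
def filter_by_match_fraction(data, min_fraction=0.10):
    """Keep entries whose good matches are at least min_fraction of total_keypoints.

    data: list of dicts with 'num_matched' and 'total_keypoints' keys,
    shaped like the temp_data entries built in the script above.
    """
    kept = []
    for entry in data:
        total = entry["total_keypoints"]
        # guard against division by zero when no keypoints were detected
        if total > 0 and entry["num_matched"] / total >= min_fraction:
            kept.append(entry)
    return kept


example = [
    {"num_matched": 5, "total_keypoints": 200},
    {"num_matched": 30, "total_keypoints": 200},
    {"num_matched": 0, "total_keypoints": 0},
]
print(filter_by_match_fraction(example))
```

A relative threshold adapts to templates with very different keypoint counts, whereas a fixed count is simpler to tune for a single product.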

https://stackoverflow.com/questions/70357428
