How to Get Main-Force Capital Flow Data from Tonghuashun (同花顺)

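Over the past couple of days, a few readers have asked me how to get Tonghuashun's main-force capital flow (主力资金动向) data. I spent the days wandering around enjoying the local scenery and wrote this up in the evenings.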

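Anyone familiar with reverse-engineering a website's JavaScript will know this isn't particularly hard. With a bit of JS reverse-engineering knowledge you can get it done quickly. The key is cracking Tonghuashun's hexin encryption, which means generating a valid `hexin-v` request header.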
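The `hexin-v` value travels as an ordinary request header, so the only hard part is producing it. The stdlib sketch below shows the pattern. It assumes the token has to be regenerated for each request, which is what the full code below does for every page.

`build_request` and `fake_token` are hypothetical names. `fake_token` only stands in for the `v()` function in AKShare's `ths.js` and does not produce a valid token.

```python
import itertools
import urllib.request
from collections.abc import Callable

HYZJL_URL = "http://data.10jqka.com.cn/funds/hyzjl/"


def build_request(url: str, token_fn: Callable[[], str]) -> urllib.request.Request:
    """Prepare (but do not send) a request carrying a fresh hexin-v header."""
    headers = {
        "hexin-v": token_fn(),  # regenerated on every call
        "Referer": HYZJL_URL,
        "X-Requested-With": "XMLHttpRequest",
    }
    return urllib.request.Request(url, headers=headers)


# Hypothetical stand-in for v() in ths.js; it does NOT produce a valid token.
_counter = itertools.count(1)


def fake_token() -> str:
    return f"demo-token-{next(_counter)}"


req1 = build_request(HYZJL_URL, fake_token)
req2 = build_request(HYZJL_URL, fake_token)
# urllib normalizes header names with str.capitalize(), hence "Hexin-v"
print(req1.get_header("Hexin-v"), req2.get_header("Hexin-v"))
```

Keeping token generation behind a callable lets you swap the stand-in for a real `ths.js` call without touching the request code.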

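For main-force capital flow, I used to recommend AKShare's individual-stock fund-flow functions. Since East Money (东方财富) tightened its anti-scraping measures, though, I rarely use them. I list them here anyway for anyone who wants to give them a try: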

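- `stock_individual_fund_flow`: individual-stock fund flow
- `stock_individual_fund_flow_rank`: individual-stock fund-flow ranking
- `stock_market_fund_flow`: market-wide fund flow
- `stock_sector_fund_flow_rank`: sector fund-flow ranking
- `stock_sector_fund_flow_summary`: fund flow of the individual stocks in a given industry
- `stock_sector_fund_flow_hist`: historical industry fund flow
- `stock_concept_fund_flow_hist`: historical concept fund flow
- `stock_main_fund_flow`: main-force net-inflow ranking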
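Because these functions scrape East Money, throttled requests may fail intermittently. The sketch below is a hypothetical `call_with_retry` wrapper that retries any callable with a growing pause. It assumes the failures are transient; retrying will not get past a hard block.

```python
import time
from collections.abc import Callable
from typing import Any


def call_with_retry(func: Callable[..., Any], *args: Any, retries: int = 3,
                    delay: float = 2.0, **kwargs: Any) -> Any:
    """Call func(*args, **kwargs), making at most `retries` attempts in total."""
    for attempt in range(1, retries + 1):
        try:
            return func(*args, **kwargs)
        except Exception as exc:  # scraping errors surface as many exception types
            if attempt == retries:
                raise  # out of attempts: re-raise the last error
            wait = delay * attempt  # linear backoff: delay, 2*delay, ...
            print(f"attempt {attempt} failed ({exc!r}), retrying in {wait:.1f}s")
            time.sleep(wait)
    raise ValueError("retries must be at least 1")
```

For example, `call_with_retry(ak.stock_individual_fund_flow, stock="600094", market="sh")` after `import akshare as ak`. Check the parameter names against your installed AKShare version.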

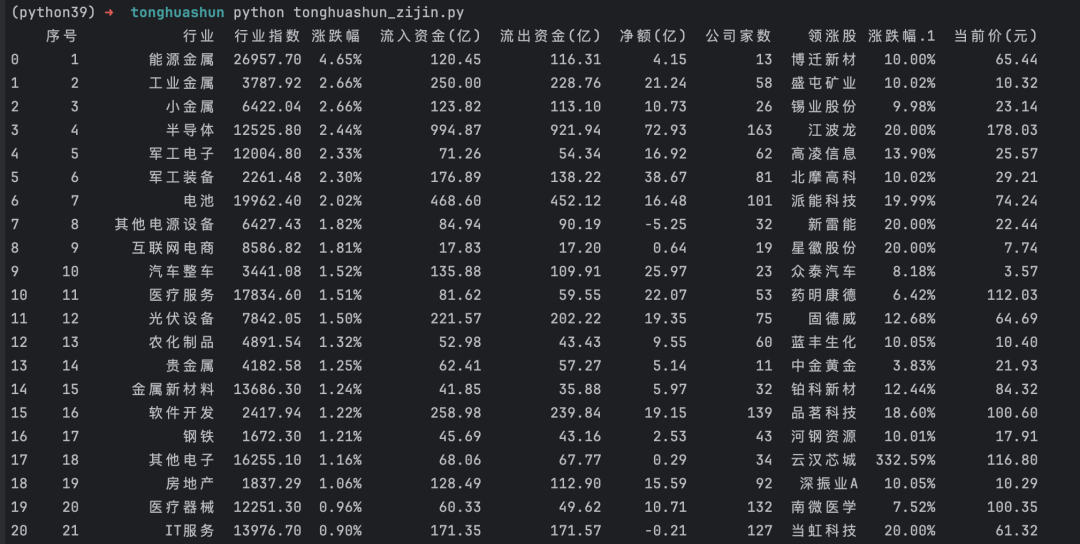

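Below is the complete code. Use it as a reference for the approach and adapt it to your own needs. Note: if the formatting contains stray special characters, open this page in a regular browser and copy from there. I hope this helps.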

```python
from io import StringIO

import pandas as pd
import py_mini_racer
import requests
from bs4 import BeautifulSoup

from akshare.datasets import get_ths_js
from akshare.utils.tqdm import get_tqdm

# Setting up pandas display options (align CJK text, show every row and column)
pd.set_option("display.unicode.ambiguous_as_wide", True)
pd.set_option("display.unicode.east_asian_width", True)
pd.set_option("display.max_rows", None)
pd.set_option("display.max_columns", None)
pd.set_option("display.expand_frame_repr", False)
pd.set_option("display.max_colwidth", 100)

# hyzjl is the industry (行业) fund-flow board, sorted by the tradezdf field, descending
FIRST_URL = "http://data.10jqka.com.cn/funds/hyzjl/field/tradezdf/order/desc/ajax/1/free/1/"
# Paged variant: the page number goes into the /page/{}/ segment
PAGE_URL = "http://data.10jqka.com.cn/funds/hyzjl/field/tradezdf/order/desc/page/{}/ajax/1/free/1/"


def _get_file_content_ths(file: str = "ths.js") -> str:
    """
    Read the content of a JS file bundled with akshare

    :param file: JS file name
    :type file: str
    :return: file content
    :rtype: str
    """
    setting_file_path = get_ths_js(file)
    with open(setting_file_path, encoding="utf-8") as f:
        file_data = f.read()
    return file_data


def _build_headers() -> dict:
    """Build request headers carrying a freshly generated hexin-v token."""
    # Run ths.js in an embedded V8 engine; its v() function returns the token
    js_code = py_mini_racer.MiniRacer()
    js_code.eval(_get_file_content_ths("ths.js"))
    v_code = js_code.call("v")
    return {
        "Accept": "text/html, */*; q=0.01",
        "Accept-Encoding": "gzip, deflate",
        "Accept-Language": "zh-CN,zh;q=0.9,en;q=0.8",
        "Cache-Control": "no-cache",
        "Connection": "keep-alive",
        "hexin-v": v_code,
        "Host": "data.10jqka.com.cn",
        "Pragma": "no-cache",
        "Referer": "http://data.10jqka.com.cn/funds/hyzjl/",
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/90.0.4430.85 Safari/537.36",
        "X-Requested-With": "XMLHttpRequest",
    }


def getZijindongxiang() -> pd.DataFrame:
    # First request: read the total page count from <span class="page_info">
    r = requests.get(FIRST_URL, headers=_build_headers(), timeout=15)
    soup = BeautifulSoup(r.text, features="lxml")
    page_info = soup.find(name="span", attrs={"class": "page_info"})
    if page_info is None:
        raise RuntimeError("page_info not found; the request may have been blocked")
    page_num = int(page_info.text.split("/")[1])  # text has the form "current/total"

    frames = []
    tqdm = get_tqdm()
    for page in tqdm(range(1, page_num + 1), leave=False):
        # Fresh hexin-v token for every page; each response holds one HTML table
        r = requests.get(PAGE_URL.format(page), headers=_build_headers(), timeout=15)
        frames.append(pd.read_html(StringIO(r.text))[0])
    return pd.concat(frames, ignore_index=True)


if __name__ == "__main__":
    print(getZijindongxiang())
```
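The paging loop depends on two details. First, the total page count is read from `page_info` text of the form `current/total`. Second, the page number is substituted into the URL template with `str.format`.

`str.format` silently ignores arguments that have no matching `{}` placeholder. A template without one therefore yields the same URL for every page, with no error raised.

The stdlib sketch below makes both steps explicit. `total_pages` and `page_urls` are hypothetical helpers. The `/page/{}/` URL layout is an assumption about how the site paginates.

```python
PAGE_URL = (
    "http://data.10jqka.com.cn/funds/hyzjl/field/tradezdf/order/desc/"
    "page/{}/ajax/1/free/1/"
)


def total_pages(page_info: str) -> int:
    """Return the total from page_info text of the form "current/total"."""
    _current, total = page_info.strip().split("/")
    return int(total)


def page_urls(template: str, page_info: str) -> list[str]:
    """Build one URL per page, refusing templates with no {} placeholder."""
    if "{}" not in template:
        # str.format would silently ignore the page number here
        raise ValueError("URL template needs a {} placeholder for the page number")
    return [template.format(p) for p in range(1, total_pages(page_info) + 1)]


print("no-placeholder".format(2))  # extra arguments are ignored
for url in page_urls(PAGE_URL, "1/3"):
    print(url)
```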

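This article is part of the Tencent Cloud self-media sync program and was shared from a WeChat official account.

Originally published: 2025-10-04. In case of infringement, contact cloudcommunity@tencent.com to have it removed.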
