[Optimization] Periodic Backup of GrayLog Configuration with a Shell Script

yuanfan2012

Published on 2026-04-21 20:53:34

1. Problems addressed by this optimization

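This version optimizes the script used in the previous article in order to solve the following problems:

- 1. The /opt directory needs to be backed up.

PrometheusAlert, the webhook service, and several commonly used integration scripts all live in this directory.

- 2. The /etc/graylog/server/ directory needs to be backed up as well.

- 3. Graylog may be deployed at a remote site where bandwidth to the local NAS server is limited. To avoid saturating the dedicated line, /opt is backed up with sshpass + rsync so that only incremental differences are synchronized, rather than retransmitting the whole /opt directory on every run.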
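The incremental sync described in point 3 can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the article's script: the function name `sync_dir` and the `DRY_RUN` switch are hypothetical, and `sshpass -e` (which reads the password from the `SSHPASS` environment variable) is used here instead of passing the password on the command line. The host, user, port, and path are the placeholder values from the script in section 2.

```shell
#!/bin/bash
# Sketch: incremental sync of a directory to a remote host with rsync over SSH.
# Assumptions (not from the original script): sync_dir, DRY_RUN, sshpass -e.

nas_ip="192.168.31.100"
nas_username="nasadmin"
nas_ssh_port="22"
nas_target_dir="/volume1/FileServer/GraylogBackup/192.168.31.74"

# sync_dir SRC: copy SRC to the NAS. rsync skips files whose size and
# modification time already match the remote copy, so repeated runs
# only transfer what has changed.
# With DRY_RUN=1 the command is printed instead of executed.
sync_dir() {
    local src="$1"
    local cmd=(sshpass -e rsync -az -e "ssh -p ${nas_ssh_port}"
               "$src" "${nas_username}@${nas_ip}:${nas_target_dir}")
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "${cmd[*]}"
    else
        "${cmd[@]}"
    fi
}
```

Calling `DRY_RUN=1 sync_dir /opt` only prints the command that would run. For a real transfer, export `SSHPASS` first and call `sync_dir /opt`. Because the source path has no trailing slash, rsync creates an `opt` directory inside the target directory.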

2. The optimized script

/opt/graylog_mongodb_backup.sh

#!/bin/bash

# Path of LOCK_FILE (used throughout this script as the log file)

LOCK_FILE=/var/log/mongodb_backup_record.log

# DingTalk robot webhook URL

WEBHOOK_URL="https://oapi.dingtalk.com/robot/send?access_token=XXXXXXXXXX"

# Current date and time, used in the backup file name

current_datetime=$(date +"%Y-%m-%d_%H_%M_%S")

#current_date=$(date +"%Y-%m-%d")

# Backup directory and file name

backup_dir="/home/graylog_mongodb_backup"

backup_file="graylog_mongodb_backup$current_datetime"

# MongoDB connection parameters

#mongodb_host="localhost"

#mongodb_user="graylog"

#mongodb_password=""

mongodb_database="graylog"

# NAS IP address and target path

nas_ip="192.168.31.100"

nas_username="nasadmin"

nas_target_dir="/volume1/FileServer/GraylogBackup/192.168.31.74"

nas_ssh_port="22"

nas_ssh_Password="XXXXXXXXX"

# Create the backup directory

mkdir -p "$backup_dir"

# Back up the MongoDB database

#mongodump -h "$mongodb_host" -u "$mongodb_user" -p "$mongodb_password" -d "$mongodb_database" -o "$backup_dir" >> ${LOCK_FILE} 2>&1

mongodump -d "$mongodb_database" -o "$backup_dir" >> ${LOCK_FILE} 2>&1

# Check whether mongodump succeeded

if [ $? -eq 0 ]; then

echo `date +"%Y-%m-%d %H:%M:%S"` >> ${LOCK_FILE} 2>&1

echo "MongoDB backup completed successfully." >> ${LOCK_FILE} 2>&1

else

echo `date +"%Y-%m-%d %H:%M:%S"` >> ${LOCK_FILE} 2>&1

echo "Error occurred while performing MongoDB backup." >> ${LOCK_FILE} 2>&1

exit 1

fi

# Pack the backup files into a tar.gz archive

cd "$backup_dir"

tar -zcvf /tmp/"$backup_file.tar.gz" graylog >> ${LOCK_FILE} 2>&1

# Check whether the archive was created successfully

if [ $? -eq 0 ]; then

echo `date +"%Y-%m-%d %H:%M:%S"` >> ${LOCK_FILE} 2>&1

echo "Backup files compressed successfully." >> ${LOCK_FILE} 2>&1

else

echo `date +"%Y-%m-%d %H:%M:%S"` >> ${LOCK_FILE} 2>&1

echo "Error occurred while compressing backup files." >> ${LOCK_FILE} 2>&1

exit 1

fi

# Upload the backup files to the NAS

current_time=$(date +"%Y-%m-%d %H:%M:%S")

# rsync over SSH transfers only the differences; -e sets the SSH port

sshpass -p "$nas_ssh_Password" rsync -avzP -e "ssh -p $nas_ssh_port" /opt "$nas_username@$nas_ip:$nas_target_dir" >> ${LOCK_FILE} 2>&1

sshpass -p "$nas_ssh_Password" rsync -avzP -e "ssh -p $nas_ssh_port" /etc/graylog/server "$nas_username@$nas_ip:$nas_target_dir" >> ${LOCK_FILE} 2>&1

# The MongoDB archive is a new file on every run, so it is copied with scp

sshpass -p "$nas_ssh_Password" scp -P "$nas_ssh_port" /tmp/"$backup_file.tar.gz" "$nas_username@$nas_ip:$nas_target_dir" >> ${LOCK_FILE} 2>&1

# Check whether the upload succeeded

if [ $? -eq 0 ]; then

echo `date +"%Y-%m-%d %H:%M:%S"` >> ${LOCK_FILE} 2>&1

echo "Backup files uploaded to NAS successfully." >> ${LOCK_FILE} 2>&1

echo "Backup files uploaded; sending DingTalk notification." >> ${LOCK_FILE} 2>&1

notify_message="[Notice]: The MongoDB database backup of Graylog server<font color=#FF0000> IP:($(hostname -I))</font> has been uploaded to NAS <font color=#FF0000>IP:($nas_ip) </font>.\n\n[Upload time]:<font color=#FF0000> $current_time </font>\n\n[Upload path and file name]:<font color=#FF0000>$nas_target_dir/$backup_file.tar.gz</font>"

echo "$notify_message" >> ${LOCK_FILE} 2>&1

curl -s -H "Content-Type: application/json" -d "{\"msgtype\":\"markdown\",\"markdown\":{\"title\":\"Notice\",\"text\":\"$notify_message\"}}" "$WEBHOOK_URL" >> ${LOCK_FILE} 2>&1

# Remove the temporary backup files and directory

rm -rf "$backup_dir" >> ${LOCK_FILE} 2>&1

rm /tmp/"$backup_file.tar.gz" >> ${LOCK_FILE} 2>&1

else

echo `date +"%Y-%m-%d %H:%M:%S"` >> ${LOCK_FILE} 2>&1

echo "Error occurred while uploading backup files to NAS." >> ${LOCK_FILE} 2>&1

# Remove the temporary backup directory

rm -rf "$backup_dir" >> ${LOCK_FILE} 2>&1

exit 1

fi
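One thing worth noting about the script above: the `$?` test after the upload step only reflects the last command (`scp`), so a failed `rsync` of /opt or /etc/graylog/server would go unnoticed. A possible way to track every step is sketched below. The helper names `log` and `run_step`, the `LOG_FILE` default, and the stand-in commands `true`/`false` are all assumptions for illustration; in a real script the stand-ins would be the actual rsync and scp calls.

```shell
#!/bin/bash
# Sketch: timestamped logging plus per-step status tracking.
# log and run_step are hypothetical helpers, not part of the original script.

LOG_FILE="${LOG_FILE:-/tmp/backup_demo.log}"

# log MESSAGE: append a timestamped line to the log file.
log() {
    printf '%s %s\n' "$(date +"%Y-%m-%d %H:%M:%S")" "$*" >> "$LOG_FILE"
}

failed=0

# run_step DESCRIPTION COMMAND [ARGS...]: run a command, append its output
# to the log, record the outcome, and remember whether any step has failed.
run_step() {
    local desc="$1"
    shift
    if "$@" >> "$LOG_FILE" 2>&1; then
        log "OK: $desc"
    else
        log "FAILED: $desc"
        failed=1
    fi
}

# Stand-in commands; the second one fails on purpose.
run_step "sync /opt" true
run_step "sync /etc/graylog/server" false
run_step "upload archive" true

if [ "$failed" -eq 0 ]; then
    log "All uploads succeeded."
else
    log "At least one upload failed."
fi
```

With this pattern the success notification would only be sent when `failed` is still 0 after all three transfers.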

Notes

- 1. Install the sshpass package in advance with yum install sshpass.

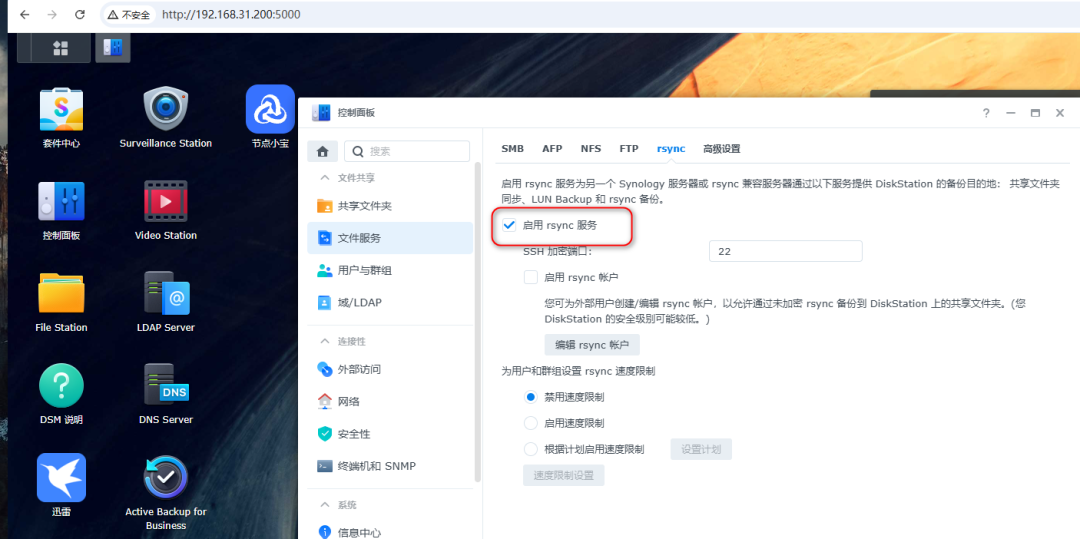

- 2. The rsync service must be enabled on the NAS.

- 3. Then configure the crontab scheduled task.

crontab -l

# Run the backup every day at 3:00

0 3 * * * /opt/graylog_mongodb_backup.sh
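The five leading fields of a crontab entry are minute, hour, day of month, month, and day of week, so the entry above fires at 03:00 every day. As a small illustration, the snippet below splits that entry into its fields; the variable names are chosen for this example only.

```shell
#!/bin/bash
# Sketch: split a crontab entry into its five time fields and the command.
entry='0 3 * * * /opt/graylog_mongodb_backup.sh'

# read performs word splitting only (no file name expansion), so the
# literal * fields are preserved; the last variable receives the rest.
read -r minute hour day_of_month month day_of_week command <<< "$entry"

printf 'minute=%s hour=%s day_of_month=%s month=%s day_of_week=%s\n' \
    "$minute" "$hour" "$day_of_month" "$month" "$day_of_week"
printf 'command=%s\n' "$command"
```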

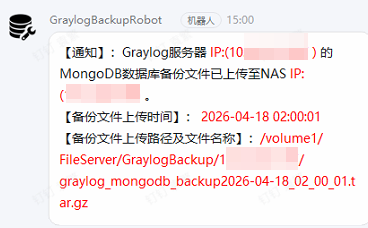
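Since the script depends on several external tools (note 1 mentions sshpass in particular), a pre-flight check can catch a missing package before the first scheduled run. The following is a minimal sketch; `missing_commands` is a hypothetical helper name, and the list of tools is taken from the commands the script calls.

```shell
#!/bin/bash
# Sketch: verify that the external tools the backup script calls are installed.
# missing_commands is a hypothetical helper name.

# missing_commands CMD...: print every command that cannot be found in PATH.
missing_commands() {
    local cmd
    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
    done
}

missing=$(missing_commands mongodump tar sshpass rsync scp curl)
if [ -n "$missing" ]; then
    # Unquoted on purpose so the names are joined onto one line.
    echo "Missing commands:" $missing
else
    echo "All required commands are available."
fi
```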

4. The final result is shown in the screenshot

Originally published: 2026-04-18.

This article is shared from the WalkingCloud WeChat official account.
