CUDA is the incumbent, but is it any good? - Democratizing AI, Part 4

User 9732312

Published 2026-03-18 20:49:14

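Judging whether CUDA is "good" is far less simple than it looks. Are we talking about raw performance? Its feature set? Or its broader influence on AI development? Whether CUDA is "good" depends on who is asking and what they need. This article evaluates CUDA along several dimensions, from the viewpoint of people who use the GenAI ecosystem every day:

- For AI engineers who build on top of CUDA: it is an indispensable tool, but one that comes with version-compatibility problems, unpredictable driver behavior, and the shackles of deep platform dependence.

- For AI engineers who write GPU code for NVIDIA hardware: CUDA offers powerful optimization capabilities, at the price of enduring the "painful discipline" that top performance requires.

- For users who want their AI workloads to run on GPUs from multiple vendors: CUDA is less a solution than an obstacle.

- For NVIDIA itself: the company that built its business empire around CUDA keeps strengthening its moat through enormous profits and its dominance of AI compute.

So, is CUDA actually "good"? Let's take the perspectives one at a time and find out!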

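AI engineers: the two-sided experience of dancing with CUDA

Today, many engineers build applications on AI frameworks such as LlamaIndex, LangChain, and AutoGen without digging into the details of the underlying hardware. For them, CUDA is a powerful ally. Its maturity and industry dominance bring clear advantages: most AI frameworks work with NVIDIA hardware out of the box, and the whole industry's focus on a single platform makes collaboration more efficient.

However, CUDA's dominance also comes with persistent challenges: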

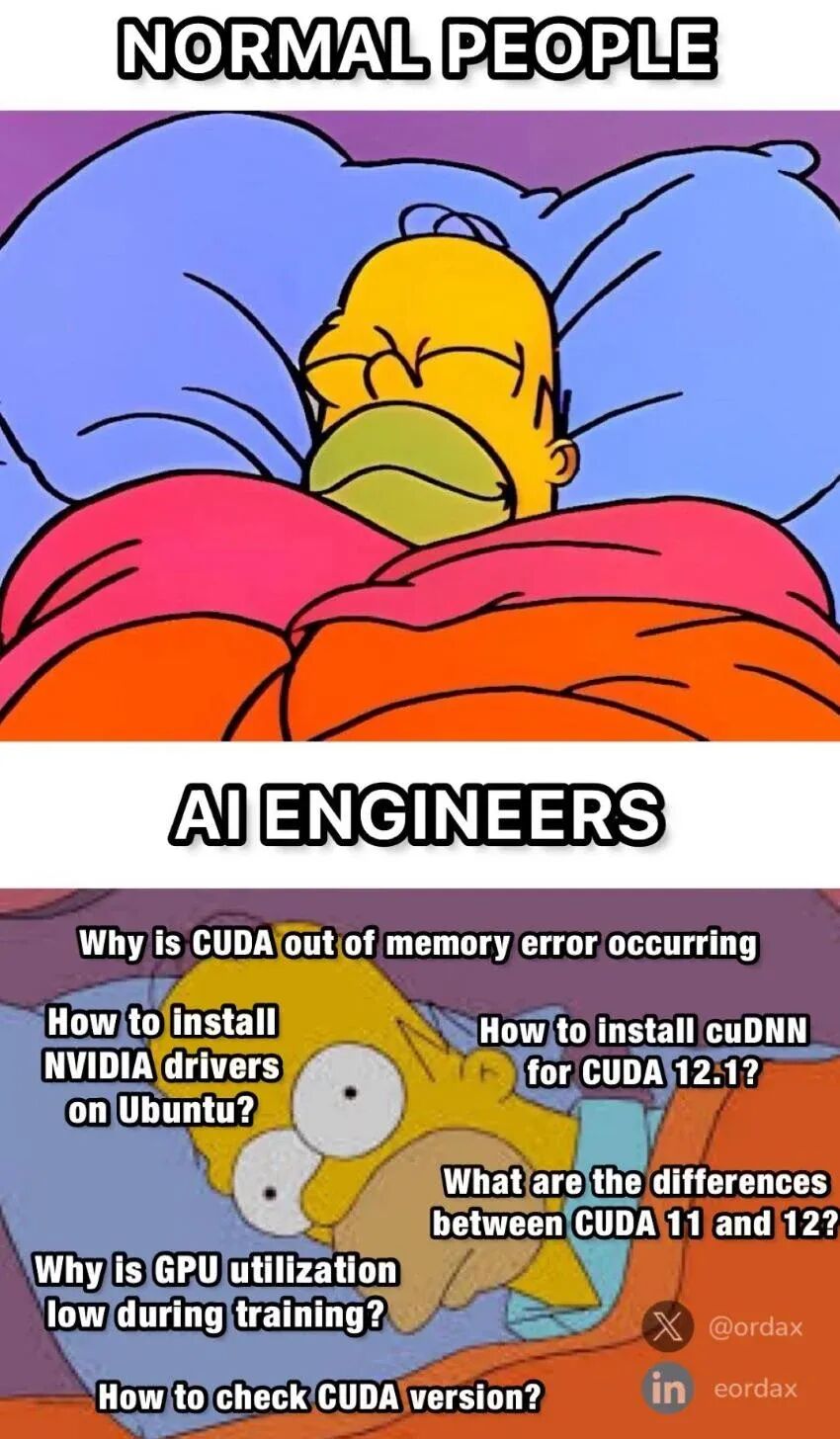

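- The pain of version management: Compatibility problems between CUDA versions can be a nightmare, as mocked by a meme that circulates widely among developers (credit: x.com/ordax). This is not just a joke; it is hard-won experience for many engineers. AI practitioners must constantly keep the CUDA toolkit, the NVIDIA driver, and their AI framework compatible, and a version mismatch can cause build failures or runtime errors. For example: "I failed to build the system with the latest NVIDIA PyTorch docker image. The reason is PyTorch installed by pip is built with CUDA 11.7 while the container uses CUDA 12.1." (github.com) Or: "Navigating Nvidia GPU drivers and CUDA development software can be challenging. Upgrading CUDA versions or updating the Linux system may lead to issues such as GPU driver corruption." (dev.to) Such problems are common, and fixing them often takes deep expertise and time-consuming debugging. NVIDIA's reliance on opaque tools and convoluted setup processes deters newcomers and slows down innovation.

- NVIDIA treats the symptoms, not the cause: Faced with these problems, NVIDIA has historically chosen to "build upward" to address individual needs rather than fix the CUDA layer itself. One example is the recently introduced NIM (NVIDIA Inference Microservices), a suite of containerized microservices meant to simplify model deployment. Although this may improve specific scenarios, NIM also abstracts away the underlying operations, which deepens ecosystem lock-in and reduces developers' control over, and room to innovate in, low-level optimization (CUDA's core value).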

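Low-level developers: the hard road to peak performance

For AI model developers and performance engineers who work close to the hardware, CUDA's trade-offs are entirely different...

AI model developers and performance engineers: chasing peak performance in CUDA's shackles

For researchers and engineers pushing the limits of AI models, CUDA is both an indispensable tool and a suffocating constraint. For them, CUDA is not just an API; it is the foundation of every line of performance-critical code. These engineers work at the lowest levels of optimization: writing custom CUDA kernels, tuning memory access patterns, and squeezing every last bit of performance out of NVIDIA hardware. The scale and cost of GenAI leave them no choice. But does CUDA empower their innovation, or does it stifle what they could do?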

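CUDA's aging crisis: born in 2007, struggling to carry the weight of GenAI

Despite years of industry dominance, CUDA is showing its age. It was born in 2007, long before deep learning, let alone GenAI. GPUs have changed enormously since then: Tensor Cores, sparsity, and similar features have become central to AI acceleration. CUDA's early contribution was to simplify GPU programming, but it has not kept up with the modern GPU features that Transformers and GenAI require. Engineers have to "dance in shackles" within its limits to meet the performance needs of their workloads.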

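The potential of modern GPUs: beyond CUDA's reach

- Cutting-edge techniques have to detour through PTX: Innovations such as FlashAttention-3 (example code) and DeepSeek's work require developers to bypass CUDA and drop straight down to PTX, NVIDIA's low-level assembly language. PTX is only partially documented and keeps changing across hardware generations, so it is effectively a black box for developers. Worse, PTX is tied to NVIDIA even more tightly than CUDA and is less usable. Yet teams chasing peak performance have no choice; they are forced to bear the pain of the detour.

- Tensor Cores, essential for performance but hidden behind magic: Most of the compute in today's AI models comes from Tensor Cores, not traditional CUDA cores. Programming Tensor Cores directly is far from easy, however. Although NVIDIA provides abstraction layers (such as cuBLAS and CUTLASS), getting the most out of a GPU still takes arcane knowledge, repeated trial and error, and even reverse engineering of undocumented behavior. Tensor Cores change with every new GPU generation, but the documentation lags behind, leaving engineers with limited resources to fully unlock the hardware's potential.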

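AI's language split: a Python shell with C++ bones

Modern AI development is dominated by Python, while CUDA programming requires C++. Engineers who optimize models and performance in PyTorch have to jump back and forth between two languages with very different mindsets. This slows iteration, creates needless friction, and forces developers to spend attention on low-level performance details instead of improving the model. In addition, CUDA's reliance on C++ templates leads to long compile times and cryptic error messages.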

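Escaping the NVIDIA monopoly?

The challenges above apply only to developers willing to tie themselves to NVIDIA hardware. If you want cross-vendor compatibility...

Engineers and researchers who want cross-platform compatibility: the struggle to break free of NVIDIA lock-in

Not every developer is willing to tie their software to NVIDIA hardware, but the practical challenges are obvious: CUDA does not run on other vendors' hardware (for example, the "supercomputer" in our pockets), and no alternative yet matches CUDA's performance and capabilities on NVIDIA devices. This forces developers to write their AI code repeatedly for multiple platforms.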

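The harsh reality of cross-platform practice

- Lessons from history: Early versions of TensorFlow and PyTorch tried to support OpenCL backends, but these lagged far behind the CUDA versions in both features and speed, so users ended up "locked" into the NVIDIA ecosystem anyway.

- The cost dilemma: Maintaining multiple code paths (CUDA for NVIDIA, something else for other platforms) is expensive. AI technology moves fast, and only large organizations have the resources to sustain this kind of effort.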

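The vicious cycle CUDA creates

With the largest user base and the most powerful hardware, NVIDIA pushes developers to target CUDA first and hope that other platforms catch up later. This further entrenches CUDA as the "default platform" for AI development and forms a self-reinforcing loop: the more people use CUDA, the stronger the ecosystem grows; the stronger the ecosystem grows, the more people are forced to use CUDA.

👉 Where is the way out? In the next article we take a close look at technical alternatives such as OpenCL, TritonLang, and MLIR compilers, and show why they have not yet shaken CUDA's dominance.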

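Is CUDA a blessing or a curse for NVIDIA itself?

The obvious answer is that it is a blessing: CUDA's moat has produced a winner-takes-all situation. As of 2023, NVIDIA held about 98% of the data-center GPU market, giving it a firm grip on AI compute. As discussed earlier, CUDA is the bridge between NVIDIA's past and future products, driving adoption of new architectures such as Blackwell and consolidating its leadership in AI computing.

However, the legendary hardware architect Jim Keller (who worked on the design of x86 and Zen architectures) makes a pointed argument: "CUDA's a swamp, not a moat", comparing it to the x86 architecture that bogged Intel down.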

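Why has CUDA become a constraint on NVIDIA?

- CUDA's usability comes back to bite NVIDIA: Jensen Huang has said that NVIDIA's software engineers far outnumber its hardware team, with a large share of them working on CUDA. But CUDA's usability and scalability shortcomings slow the pace of innovation, forcing NVIDIA to keep expanding its teams to "fight fires".

- CUDA's bloat slows hardware iteration: CUDA cannot deliver performance portability across NVIDIA's own hardware generations, and its huge library ecosystem is a double-edged sword. When launching a new GPU such as Blackwell, NVIDIA faces a dilemma: rework CUDA, or ship the new architecture with limited performance. This explains why each new generation falls short of peak performance at first. Expanding the CUDA ecosystem is costly and slow.

- The innovator's dilemma: Backward compatibility, one of CUDA's early selling points, has now turned into technical debt that limits NVIDIA's own ability to innovate quickly. Maintaining compatibility with older GPUs matters a great deal to developers, but it forces NVIDIA to prioritize stability over revolutionary change. Long-term compatibility maintenance consumes time and resources and may limit future flexibility.

Even though NVIDIA has promised developers continuity, Blackwell still had to break with Hopper PTX to reach its performance targets: some Hopper PTX instructions do not work on Blackwell. Developers who bypassed CUDA and went straight to PTX therefore need to rewrite their code for the next generation of hardware.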

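The monopolist's dialectic

Despite these challenges, NVIDIA's strong execution in software and its early strategic bets still pave the way for future growth. With the rise of GenAI and the expansion of the CUDA ecosystem, NVIDIA sits firmly on the AI compute throne and has quickly become one of the most valuable companies in the world.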

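Where are the alternatives to CUDA?

In short, CUDA remains a double-edged sword: people in different parts of its ecosystem judge it in opposite ways. Its enormous success built NVIDIA's dominance, but its complexity, technical debt, and vendor lock-in leave deep concerns for developers and for the future of AI compute.

As AI hardware evolves rapidly, one core question comes to the surface: where are the alternatives to CUDA, and why has nobody broken through yet? In Part 5 we examine the main alternative technologies (such as OpenCL, TritonLang, and MLIR compilers) and show the technical constraints and strategic weaknesses that keep them from crossing the CUDA moat. 🚀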

Answering the question of whether CUDA is “good” is much trickier than it sounds. Are we talking about its raw performance? Its feature set? Perhaps its broader implications in the world of AI development? Whether CUDA is “good” depends on who you ask and what they need. In this post, we’ll evaluate CUDA from the perspective of the people who use it day-in and day-out—those who work in the GenAI ecosystem:

- For AI engineers who build on top of CUDA, it’s an essential tool, but one that comes with versioning headaches, opaque driver behavior, and deep platform dependence.

- For AI engineers who write GPU code for NVIDIA hardware, CUDA offers powerful optimization but only by accepting the pain necessary to achieve top performance.

- For those who want their AI workloads to run on GPUs from multiple vendors, CUDA is more an obstacle than a solution.

- Then there’s NVIDIA itself—the company that has built its fortune around CUDA, driving massive profits and reinforcing their dominance over AI compute.

So, is CUDA “good?” Let’s dive into each perspective to find out!

AI Engineers

Many engineers today are building applications on top of AI frameworks—agentic libraries like LlamaIndex, LangChain, and AutoGen—without needing to dive deep into the underlying hardware details. For these engineers, CUDA is a powerful ally. Its maturity and dominance in the industry bring significant advantages: most AI libraries are designed to work seamlessly with NVIDIA hardware, and the collective focus on a single platform fosters industry-wide collaboration.

However, CUDA’s dominance comes with its own set of persistent challenges. One of the biggest hurdles is the complexity of managing different CUDA versions, which can be a nightmare. This frustration is the subject of numerous memes:

Credit: x.com/ordax

This isn’t just a meme—it’s a real, lived experience for many engineers. These AI practitioners constantly need to ensure compatibility between the CUDA toolkit, NVIDIA drivers, and AI frameworks. Mismatches can cause frustrating build failures or runtime errors, as countless developers have experienced firsthand:

"I failed to build the system with the latest NVIDIA PyTorch docker image. The reason is PyTorch installed by pip is built with CUDA 11.7 while the container uses CUDA 12.1." (github.com)

or:

"Navigating Nvidia GPU drivers and CUDA development software can be challenging. Upgrading CUDA versions or updating the Linux system may lead to issues such as GPU driver corruption." (dev.to)

Sadly, such headaches are not uncommon. Fixing them often requires deep expertise and time-consuming troubleshooting. NVIDIA's reliance on opaque tools and convoluted setup processes deters newcomers and slows down innovation.
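The mismatch in the GitHub report above can be caught with a simple comparison before anything is built. What follows is a minimal sketch, not an official NVIDIA or PyTorch check: `parse_cuda_version` and `check_cuda_match` are hypothetical helper names, and the rule it applies (a different major version is a mismatch, a different minor version is only a warning) is a simplifying assumption. Real compatibility also depends on the installed driver. In a PyTorch environment, the build-time version is exposed as `torch.version.cuda`; the sketch takes plain strings so that it runs without a GPU.

```python
from typing import Tuple


def parse_cuda_version(version: str) -> Tuple[int, int]:
    """Parse a CUDA version string such as '12.1' into (12, 1)."""
    parts = version.strip().split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0
    return major, minor


def check_cuda_match(framework_built_with: str, environment_provides: str) -> str:
    """Compare the CUDA version a framework was built with against the
    version the environment provides.

    Assumption for this sketch: differing major versions are a mismatch,
    differing minor versions are only flagged as a difference.
    """
    built = parse_cuda_version(framework_built_with)
    provided = parse_cuda_version(environment_provides)
    if built[0] != provided[0]:
        return "mismatch"
    if built[1] != provided[1]:
        return "minor-version difference"
    return "ok"


# The situation from the GitHub report: pip-installed PyTorch built with
# CUDA 11.7, container shipping CUDA 12.1.
print(check_cuda_match("11.7", "12.1"))  # prints "mismatch"
```

A check like this only reports the problem. Fixing it still means picking a framework build, toolkit, and driver that agree with each other.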

In response to these challenges, NVIDIA has historically moved up the stack to build individual point solutions rather than fix the fundamental problem: the CUDA layer itself. For example, it recently introduced NIM (NVIDIA Inference Microservices), a suite of containerized microservices aimed at simplifying AI model deployment. While this might streamline one use case, NIM also abstracts away underlying operations, increasing lock-in and limiting access to the low-level optimization and innovation key to CUDA's value proposition.

While AI engineers building on top of CUDA face challenges with compatibility and deployment, those working closer to the metal—AI model developers and performance engineers—grapple with an entirely different set of trade-offs.

AI Model Developers and Performance Engineers

For researchers and engineers pushing the limits of AI models, CUDA is simultaneously an essential tool and a frustrating limitation. For them, CUDA isn’t an API; it’s the foundation for every performance-critical operation they write. These are engineers working at the lowest levels of optimization, writing custom CUDA kernels, tuning memory access patterns, and squeezing every last bit of performance from NVIDIA hardware. The scale and cost of GenAI demand it. But does CUDA empower them, or does it limit their ability to innovate?

Despite its dominance, CUDA is showing its age. It was designed in 2007, long before deep learning—let alone GenAI. Since then, GPUs have evolved dramatically, with Tensor Cores and sparsity features becoming central to AI acceleration. CUDA’s early contribution was to make GPU programming easy, but it hasn’t evolved with modern GPU features necessary for transformers and GenAI performance. This forces engineers to work around its limitations just to get the performance their workloads demand.

CUDA doesn’t do everything modern GPUs can do

Cutting-edge techniques like FlashAttention-3 (example code) and DeepSeek’s innovations require developers to drop below CUDA into PTX—NVIDIA’s lower-level assembly language. PTX is only partially documented, constantly shifting between hardware generations, and effectively a black box for developers.

More problematic, PTX is even more locked to NVIDIA than CUDA, and its usability is even worse. However, for teams chasing cutting-edge performance, there’s no alternative—they’re forced to bypass CUDA and endure significant pain.

Tensor Cores: Required for performance, but hidden behind black magic

Today, the bulk of an AI model’s FLOPs come from “Tensor Cores”, not traditional CUDA cores. However, programming Tensor Cores directly is no small feat. While NVIDIA provides some abstractions (like cuBLAS and CUTLASS), getting the most out of GPUs still requires arcane knowledge, trial-and-error testing, and often, reverse engineering undocumented behavior. With each new GPU generation, Tensor Cores change, yet the documentation is dated. This leaves engineers with limited resources to fully unlock the hardware’s potential.

AI is Python, but CUDA is C++

Another major limitation is that writing CUDA fundamentally requires using C++, while modern AI development is overwhelmingly done in Python. Engineers working on AI models and performance in PyTorch don’t want to switch back and forth between Python and C++: the two languages have very different mindsets. This mismatch slows down iteration, creates unnecessary friction, and forces AI engineers to think about low-level performance details when they should be focusing on model improvements. Additionally, CUDA's reliance on C++ templates leads to painfully slow compile times and often incomprehensible error messages.
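To put a rough number on the earlier claim that most of a model's FLOPs land on matrix-multiply hardware, here is a back-of-the-envelope sketch. It compares one dense layer's matrix multiply (the kind of work Tensor Cores accelerate) with a single elementwise pass over its output. The layer sizes are hypothetical, and counting one operation per output element for the elementwise step is an assumption made for illustration.

```python
def linear_layer_flops(tokens: int, d_in: int, d_out: int) -> int:
    """FLOPs for a (tokens x d_in) @ (d_in x d_out) matrix multiply:
    one multiply and one add per accumulated term."""
    return 2 * tokens * d_in * d_out


def elementwise_flops(tokens: int, d_out: int, ops_per_element: int = 1) -> int:
    """FLOPs for an elementwise pass (for example an activation) over the output."""
    return tokens * d_out * ops_per_element


# Hypothetical sizes, chosen only for illustration.
tokens, d_in, d_out = 2048, 4096, 4096

matmul = linear_layer_flops(tokens, d_in, d_out)
pointwise = elementwise_flops(tokens, d_out)

# The ratio simplifies to 2 * d_in, so it grows with the layer width.
print(matmul // pointwise)  # prints 8192
```

Under these assumptions the matrix multiply outweighs the elementwise work by a factor of 2 * d_in, which is why the speed of the matrix-multiply path dominates end-to-end performance.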

Engineers and Researchers Building Portable Software

Not everyone is happy to build software locked to NVIDIA’s hardware, and the challenges are clear. CUDA doesn’t run on hardware from other vendors (like the supercomputer in our pockets), and no alternatives provide the full performance and capabilities CUDA provides on NVIDIA hardware. This forces developers to write their AI code multiple times, for multiple platforms.

In practice, many cross-platform AI efforts struggle. Early versions of TensorFlow and PyTorch had OpenCL backends, but they lagged far behind the CUDA backend in both features and speed, leading most users to stick with NVIDIA. Maintaining multiple code paths—CUDA for NVIDIA, something else for other platforms—is costly, and as AI rapidly progresses, only large organizations have resources for such efforts.
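The cost of multiple code paths is easy to see even in a toy setting. Below is a minimal sketch of a per-backend kernel registry, assuming a hypothetical `register` decorator and `vector_add` operation. The "cuda" and "cpu" entries are pure-Python stand-ins so the example runs anywhere; in a real project each would be a separately written, tuned, and tested implementation.

```python
from typing import Callable, Dict, List

Vector = List[float]

# One entry per (operation, backend) pair that someone has to write and maintain.
KERNELS: Dict[str, Callable[[Vector, Vector], Vector]] = {}


def register(backend: str):
    """Register an implementation of vector_add for one backend (hypothetical helper)."""
    def decorator(fn: Callable[[Vector, Vector], Vector]):
        KERNELS[backend] = fn
        return fn
    return decorator


@register("cuda")
def add_cuda(a: Vector, b: Vector) -> Vector:
    # Stand-in for launching a hand-tuned CUDA kernel.
    return [x + y for x, y in zip(a, b)]


@register("cpu")
def add_cpu(a: Vector, b: Vector) -> Vector:
    # Portable reference path.
    return [x + y for x, y in zip(a, b)]


def vector_add(a: Vector, b: Vector, backend: str) -> Vector:
    """Dispatch to the backend-specific implementation, if one exists."""
    kernel = KERNELS.get(backend)
    if kernel is None:
        raise NotImplementedError(f"no vector_add kernel for backend {backend!r}")
    return kernel(a, b)


print(vector_add([1.0, 2.0], [3.0, 4.0], backend="cuda"))  # prints [4.0, 6.0]
```

Every new operation multiplies by the number of backends, and any backend nobody has written a kernel for (here, anything other than "cuda" or "cpu") simply fails. That is the position the early OpenCL backends ended up in.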

The bifurcation CUDA causes creates a self-reinforcing cycle: since NVIDIA has the largest user base and the most powerful hardware, most developers target CUDA first, and hope that others will eventually catch up. This further solidifies CUDA’s dominance as the default platform for AI.

👉 We’ll explore alternatives like OpenCL, TritonLang, and MLIR compilers in our next post, and come to understand why these options haven’t made a dent in CUDA's dominance.

Is CUDA Good for NVIDIA Itself?

Of course, the answer is yes: the “CUDA moat” enables a winner-takes-most scenario. By 2023, NVIDIA held ~98% of the data-center GPU market share, cementing its dominance in the AI space. As we've discussed in previous posts, CUDA serves as the bridge between NVIDIA’s past and future products, driving the adoption of new architectures like Blackwell and maintaining NVIDIA's leadership in AI compute.

However, legendary hardware experts like Jim Keller argue that “CUDA’s a swamp, not a moat,” making analogies to the x86 architecture that bogged Intel down.

How could CUDA be a problem for NVIDIA? There are several challenges.

CUDA's usability impacts NVIDIA the most

Jensen Huang famously claims that NVIDIA employs more software engineers than hardware engineers, with a significant portion dedicated to writing CUDA. But the usability and scalability challenges within CUDA slow down innovation, forcing NVIDIA to aggressively hire engineers to fire-fight these issues.

CUDA’s heft slows new hardware rollout

CUDA doesn’t provide performance portability across NVIDIA’s own hardware generations, and the sheer scale of its libraries is a double-edged sword. When launching a new GPU generation like Blackwell, NVIDIA faces a choice: rewrite CUDA or release hardware that doesn’t fully unleash the new architecture’s performance. This explains why performance is suboptimal at the launch of each new generation. Such expansion of CUDA’s surface area is costly and time-consuming.

The Innovator’s Dilemma

NVIDIA’s commitment to backward compatibility, one of CUDA’s early selling points, has now become “technical debt” that hinders its own ability to innovate rapidly. While maintaining support for older generations of GPUs is essential for its developer base, it forces NVIDIA to prioritize stability over revolutionary changes. This long-term support costs time and resources, and could limit its flexibility moving forward.

Though NVIDIA has promised developers continuity, Blackwell couldn't achieve its performance goals without breaking compatibility with Hopper PTX—now some Hopper PTX operations don’t work on Blackwell. This means advanced developers who have bypassed CUDA in favor of PTX may find themselves rewriting their code for the next-generation hardware.
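The Hopper-to-Blackwell break follows from how PTX targets are versioned. The sketch below is a simplified model of the forward-compatibility rule as described in NVIDIA's CUDA documentation. It assumes that PTX for a baseline target such as `compute_90` can be JIT-compiled for devices of the same or a newer compute capability, while an architecture-specific target such as `compute_90a` runs only on that exact compute capability. The helper names are hypothetical, and compute capabilities are written as integers (90 for 9.0, 100 for 10.0).

```python
import re
from typing import Tuple


def parse_target(target: str) -> Tuple[int, bool]:
    """Split a PTX target such as 'compute_90a' into (90, True).

    The boolean marks an architecture-specific ('a' suffix) target.
    """
    match = re.fullmatch(r"compute_(\d+)(a?)", target)
    if match is None:
        raise ValueError(f"unrecognized PTX target: {target!r}")
    return int(match.group(1)), match.group(2) == "a"


def ptx_can_run(target: str, device_cc: int) -> bool:
    """Simplified rule: baseline PTX is forward compatible,
    architecture-specific PTX requires an exact match."""
    version, arch_specific = parse_target(target)
    if arch_specific:
        return device_cc == version
    return device_cc >= version


# Baseline Hopper PTX on a compute capability 10.0 (Blackwell) device.
print(ptx_can_run("compute_90", 100))   # prints True
# Architecture-specific Hopper PTX on the same device.
print(ptx_can_run("compute_90a", 100))  # prints False
```

Under this model, it is the code that reached for architecture-specific features to get peak Hopper performance that has to be rewritten for the next generation.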

Despite these challenges, NVIDIA’s strong execution in software and its early strategic decisions have positioned them well for future growth. With the rise of GenAI and a growing ecosystem built on CUDA, NVIDIA is poised to remain at the forefront of AI compute and has rapidly grown into one of the most valuable companies in the world.

Where Are the Alternatives to CUDA?

In conclusion, CUDA remains both a blessing and a burden, depending on which side of the ecosystem you’re on. Its massive success drove NVIDIA’s dominance, but its complexity, technical debt, and vendor lock-in present significant challenges for developers and the future of AI compute.

With AI hardware evolving rapidly, a natural question emerges: Where are the alternatives to CUDA? Why hasn’t another approach solved these issues already? In Part 5, we’ll explore the most prominent alternatives, examining the technical and strategic problems that prevent them from breaking through the CUDA moat. 🚀

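This article is part of the Tencent Cloud self-media syndication program and was shared from a WeChat official account.

Originally published: 2025-06-15. If there is any infringement, contact cloudcommunity@tencent.com for removal.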
