Democratizing AI Compute, Part 2: What exactly is “CUDA”?

User 9732312

Published 2026-03-18 20:47:34

Chris Lattner, founder of Modular and creator of LLVM, Clang, Swift, and MLIR

It seems like everyone has started talking about CUDA in the last year: It’s the backbone of deep learning, the reason novel hardware struggles to compete, and the core of NVIDIA’s moat and soaring market cap. With DeepSeek, we got a startling revelation: its breakthrough was made possible by “bypassing” CUDA, going directly to the PTX layer… but what does this actually mean? It feels like everyone wants to break past the lock-in, but we have to understand what we’re up against before we can formulate a plan.

This is Part 2 of Modular’s “Democratizing AI Compute” series. For more, see:

Part 1: DeepSeek’s Impact on AI

Part 2: What exactly is “CUDA”? (this article)

Part 3: How did CUDA succeed?

Part 4: CUDA is the incumbent, but is it any good?

Part 5: What about CUDA C++ alternatives like OpenCL?

Part 6: What about AI compilers (TVM and XLA)?

Part 7: What about Triton and Python eDSLs?

CUDA’s dominance in AI is undeniable—but most people don’t fully understand what CUDA actually is. Some think it’s a programming language. Others call it a framework. Many assume it’s just “that thing NVIDIA uses to make GPUs faster.” None of these are entirely wrong—and many brilliant people are trying to explain this—but none capture the full scope of “The CUDA Platform.”

CUDA is not just one thing. It’s a huge, layered Platform—a collection of technologies, software libraries, and low-level optimizations that together form a massive parallel computing ecosystem. It includes:

- A low-level parallel programming model that allows developers to harness the raw power of GPUs with a C++-like syntax.

- A complex set of libraries and frameworks: middleware that powers crucial vertical use cases like AI (e.g., cuDNN for PyTorch and TensorFlow).

- A suite of high-level solutions like TensorRT-LLM and Triton, which enable AI workloads (e.g., LLM serving) without requiring deep CUDA expertise.

…and that’s just scratching the surface.

In this article, we’ll break down the key layers of the CUDA Platform, explore its historical evolution, and explain why it’s so integral to AI computing today. This sets the stage for the next part in our series, where we’ll dive into why CUDA has been so successful. Hint: it has a lot more to do with market incentives than with the technology itself.

Let’s dive in.

The Road to CUDA: From Graphics to General-Purpose Compute

Before GPUs became the powerhouses of AI and scientific computing, they were graphics processors—specialized processors for rendering images. Early GPUs hardwired image rendering into silicon, meaning that every step of rendering (transformations, lighting, rasterization) was fixed. While efficient for graphics, these chips were inflexible—they couldn’t be repurposed for other types of computation.

Everything changed in 2001 when NVIDIA introduced the GeForce3, the first GPU with programmable shaders. This was a seismic shift in computing:

🎨 Before: Fixed-function GPUs could only apply pre-defined effects.

🖥️ After: Developers could write their own shader programs, unlocking programmable graphics pipelines.

This advancement came with Shader Model 1.0, allowing developers to write small, GPU-executed programs for vertex and pixel processing. NVIDIA saw where the future was heading: instead of just improving graphics performance, GPUs could become programmable parallel compute engines.

At the same time, it didn’t take long for researchers to ask:

“🤔 If GPUs can run small programs for graphics, could we use them for non-graphics tasks?”

One of the first serious attempts at this was the BrookGPU project at Stanford. Brook introduced a programming model that let CPUs offload compute tasks to the GPU—a key idea that set the stage for CUDA.

NVIDIA’s response was strategic and transformative. Instead of treating compute as a side experiment, NVIDIA made it a first-class priority, embedding CUDA deeply into its hardware, software, and developer ecosystem.

The CUDA Parallel Programming Model

In 2006, NVIDIA launched CUDA (“Compute Unified Device Architecture”), the first general-purpose programming platform for GPUs. The CUDA programming model is made up of two different things: the “CUDA programming language” and the “NVIDIA Driver”.

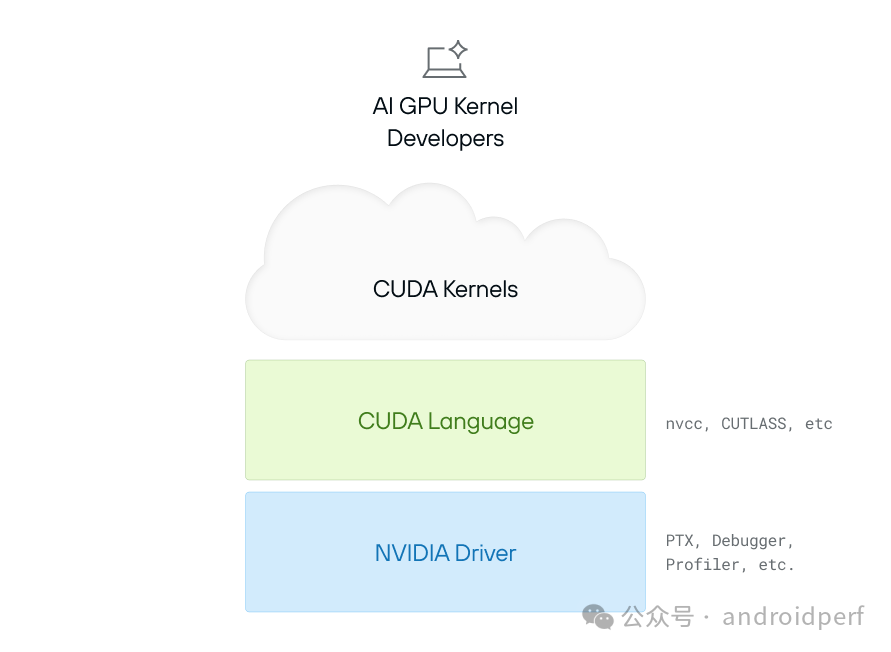

CUDA is a Layered Stack Requiring Deep Integration from Driver to Kernel

The CUDA language is derived from C++, with enhancements to directly expose low-level features of the GPU—e.g. its ideas of “GPU threads” and memory. A programmer can use this language to define a “CUDA Kernel”—an independent calculation that runs on the GPU. A very simple example is:

    __global__ void addVectors(float *a, float *b, float *c, int n) {
        int idx = threadIdx.x + blockIdx.x * blockDim.x;
        if (idx < n) {
            c[idx] = a[idx] + b[idx];
        }
    }

CUDA kernels allow programmers to define a custom computation that accesses local resources (like memory) and uses the GPU as a very fast parallel compute unit. This language is translated (“compiled”) down to “PTX”, an assembly language that is the lowest-level supported interface to NVIDIA GPUs.

But how does a program actually execute code on a GPU? That’s where the NVIDIA Driver comes in. It acts as the bridge between the CPU and the GPU, handling memory allocation, data transfers, and kernel execution. A simple example is:

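    // Assumes host arrays A, B, C of N floats already exist.
    size_t size = N * sizeof(float);
    float *d_A, *d_B, *d_C;

    // Allocate device (GPU) memory.
    cudaMalloc(&d_A, size);
    cudaMalloc(&d_B, size);
    cudaMalloc(&d_C, size);

    // Copy the inputs from host memory to device memory.
    cudaMemcpy(d_A, A, size, cudaMemcpyHostToDevice);
    cudaMemcpy(d_B, B, size, cudaMemcpyHostToDevice);

    int threadsPerBlock = 256;
    // Compute the ceiling of N / threadsPerBlock
    int blocksPerGrid = (N + threadsPerBlock - 1) / threadsPerBlock;
    addVectors<<<blocksPerGrid, threadsPerBlock>>>(d_A, d_B, d_C, N);

    // Copy the result back; this call waits for the kernel to finish.
    cudaMemcpy(C, d_C, size, cudaMemcpyDeviceToHost);

    cudaFree(d_A);
    cudaFree(d_B);
    cudaFree(d_C);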
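For readers who want to run this end to end, here is a minimal sketch that joins the kernel and the host calls into one program. The `CUDA_CHECK` macro, the `addOnGpu` wrapper, and the test size of 1000 elements are our own illustrative assumptions, not part of CUDA or of the snippets above. The runtime calls themselves (`cudaMalloc`, `cudaMemcpy`, `cudaGetLastError`, `cudaDeviceSynchronize`, `cudaFree`) are standard. Checking every return code is one way to turn a silent failure into a readable message.

```cuda
#include <cstdio>
#include <cstdlib>
#include <vector>
#include <cuda_runtime.h>

// Hypothetical helper (not part of CUDA or the article): stop with a
// readable message when a CUDA runtime call reports an error.
#define CUDA_CHECK(call)                                          \
  do {                                                            \
    cudaError_t err_ = (call);                                    \
    if (err_ != cudaSuccess) {                                    \
      std::fprintf(stderr, "CUDA error at %s:%d: %s\n", __FILE__, \
                   __LINE__, cudaGetErrorString(err_));           \
      std::exit(EXIT_FAILURE);                                    \
    }                                                             \
  } while (0)

__global__ void addVectors(const float *a, const float *b, float *c, int n) {
  int idx = threadIdx.x + blockIdx.x * blockDim.x;
  if (idx < n) {  // threads past the end of the arrays do nothing
    c[idx] = a[idx] + b[idx];
  }
}

// Adds two equally sized, non-empty host vectors on the GPU.
std::vector<float> addOnGpu(const std::vector<float> &A,
                            const std::vector<float> &B) {
  const int N = static_cast<int>(A.size());
  const size_t size = N * sizeof(float);
  std::vector<float> C(N);

  float *d_A = nullptr, *d_B = nullptr, *d_C = nullptr;
  CUDA_CHECK(cudaMalloc(&d_A, size));
  CUDA_CHECK(cudaMalloc(&d_B, size));
  CUDA_CHECK(cudaMalloc(&d_C, size));
  CUDA_CHECK(cudaMemcpy(d_A, A.data(), size, cudaMemcpyHostToDevice));
  CUDA_CHECK(cudaMemcpy(d_B, B.data(), size, cudaMemcpyHostToDevice));

  const int threadsPerBlock = 256;
  const int blocksPerGrid = (N + threadsPerBlock - 1) / threadsPerBlock;
  addVectors<<<blocksPerGrid, threadsPerBlock>>>(d_A, d_B, d_C, N);
  CUDA_CHECK(cudaGetLastError());       // errors detected at launch
  CUDA_CHECK(cudaDeviceSynchronize());  // errors raised while the kernel ran

  CUDA_CHECK(cudaMemcpy(C.data(), d_C, size, cudaMemcpyDeviceToHost));
  CUDA_CHECK(cudaFree(d_A));
  CUDA_CHECK(cudaFree(d_B));
  CUDA_CHECK(cudaFree(d_C));
  return C;
}

int main() {
  const int N = 1000;  // deliberately not a multiple of 256
  std::vector<float> A(N), B(N);
  for (int i = 0; i < N; ++i) {
    A[i] = static_cast<float>(i);
    B[i] = 2.0f * static_cast<float>(i);
  }
  std::vector<float> C = addOnGpu(A, B);

  int mismatches = 0;
  for (int i = 0; i < N; ++i) {
    if (C[i] != A[i] + B[i]) ++mismatches;
  }
  std::printf("mismatches: %d\n", mismatches);
  return mismatches == 0 ? 0 : 1;
}
```

Assuming the file is saved as `vector_add.cu` (a name chosen here for illustration), it should build with `nvcc vector_add.cu -o vector_add` on a machine with the CUDA toolkit and an NVIDIA GPU.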

Note that all of this is very low level, full of fiddly details (e.g. pointers and “magic numbers”). If you get something wrong, you’re most often informed of this by a difficult-to-understand crash. Furthermore, CUDA exposes a lot of details that are specific to NVIDIA hardware, such as the “number of threads in a warp” (which we won't explore here).
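Without exploring warps in depth, a small sketch can at least make "NVIDIA-specific details" concrete. The program below uses one line of inline PTX to read each thread's position within its warp (the `%laneid` special register) and asks the runtime for the warp size. It assumes a single one-dimensional block of 128 threads on device 0. The names `recordLanes`, `lanesOnGpu`, and `threadsPerWarp` are hypothetical, and error checking is left out for brevity.

```cuda
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

__global__ void recordLanes(unsigned int *lanes, int n) {
  int idx = threadIdx.x + blockIdx.x * blockDim.x;
  if (idx < n) {
    unsigned int lane;
    // Inline PTX: copy the %laneid special register into `lane`.
    asm volatile("mov.u32 %0, %%laneid;" : "=r"(lane));
    lanes[idx] = lane;
  }
}

// Launches one block of n threads and returns the lane each one reported.
std::vector<unsigned int> lanesOnGpu(int n) {
  std::vector<unsigned int> lanes(n);
  unsigned int *d_lanes = nullptr;
  cudaMalloc(&d_lanes, n * sizeof(unsigned int));
  recordLanes<<<1, n>>>(d_lanes, n);
  cudaMemcpy(lanes.data(), d_lanes, n * sizeof(unsigned int),
             cudaMemcpyDeviceToHost);
  cudaFree(d_lanes);
  return lanes;
}

// Asks the runtime how many threads make up a warp on device 0.
int threadsPerWarp() {
  int value = 0;
  cudaDeviceGetAttribute(&value, cudaDevAttrWarpSize, 0);
  return value;
}

int main() {
  const int n = 128;  // one block, well under the per-block thread limit
  const int warp = threadsPerWarp();
  if (warp <= 0) {
    std::fprintf(stderr, "could not query the warp size\n");
    return 1;
  }
  std::vector<unsigned int> lanes = lanesOnGpu(n);

  // In a one-dimensional block, consecutive threads fill each warp in
  // order, so thread i is expected to report lane i % warp.
  int mismatches = 0;
  for (int i = 0; i < n; ++i) {
    if (lanes[i] != static_cast<unsigned int>(i % warp)) ++mismatches;
  }
  std::printf("threads per warp: %d, mismatches: %d\n", warp, mismatches);
  return mismatches == 0 ? 0 : 1;
}
```

The point is not the lane numbers themselves. Even a tiny program ends up reaching for concepts (warps, lanes, PTX registers) that exist only on NVIDIA hardware.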

Despite the challenges, these components enabled an entire generation of hardcore programmers to get access to the huge muscle that a GPU can apply to numeric problems. For example, AlexNet ignited modern deep learning in 2012. It was made possible by custom CUDA kernels for AI operations like convolution, activations, pooling, and normalization, together with the horsepower a GPU can provide.

While the CUDA language and driver are what most people typically think of when they hear “CUDA,” this is far from the whole enchilada—it’s just the filling inside. Over time, the CUDA Platform grew to include much more, and as it did, the meaning of the original acronym fell away from being a useful way to describe CUDA.

High-Level CUDA Libraries: Making GPU Programming More Accessible

The CUDA programming model opened the door to general-purpose GPU computing and is powerful, but it brings two challenges:

- CUDA is difficult to use, and even worse...

- CUDA doesn’t help with performance portability

Most kernels written for generation N will “keep working” on generation N+1, but often the performance is quite bad: far from the peak of what generation N+1 can deliver, even though GPUs are all about performance. This makes CUDA a strong tool for expert engineers, but it presents a steep learning curve for most developers. It also means that significant rewrites are required every time a new generation of GPU comes out (e.g. Blackwell, which is now emerging).

As NVIDIA grew, it wanted GPUs to be useful to people who were domain experts in their own problem spaces, but weren’t themselves GPU experts. NVIDIA’s solution to this problem was to start building rich and complicated closed-source, high-level libraries that abstract away low-level CUDA details. These include:

- cuDNN (2014) – Accelerates deep learning (e.g., convolutions, activation functions).

- cuBLAS – Optimized linear algebra routines.

- cuFFT – Fast Fourier Transforms (FFT) on GPUs.

- … and many others.

With these libraries, developers could tap into CUDA’s power without needing to write custom GPU code, with NVIDIA taking on the burden of rewriting these for every generation of hardware. This was a big investment from NVIDIA, but it worked.
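As a rough illustration of what "no custom GPU code" means, the earlier vector addition can be expressed as a single cuBLAS call. This is a sketch under assumptions: it uses SAXPY (y = alpha * x + y) with alpha = 1 as a stand-in for addition, the `addWithCublas` wrapper is hypothetical, and error handling is reduced to checking the cuBLAS status.

```cuda
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>
#include <cublas_v2.h>

// Computes A + B on the GPU with cuBLAS SAXPY instead of a hand-written
// kernel. Returns an empty vector if the cuBLAS calls fail.
std::vector<float> addWithCublas(const std::vector<float> &A,
                                 const std::vector<float> &B) {
  const int N = static_cast<int>(A.size());
  const size_t size = N * sizeof(float);

  float *d_x = nullptr, *d_y = nullptr;
  cudaMalloc(&d_x, size);
  cudaMalloc(&d_y, size);
  cudaMemcpy(d_x, A.data(), size, cudaMemcpyHostToDevice);
  cudaMemcpy(d_y, B.data(), size, cudaMemcpyHostToDevice);

  cublasHandle_t handle;
  cublasStatus_t status = cublasCreate(&handle);
  if (status == CUBLAS_STATUS_SUCCESS) {
    const float alpha = 1.0f;
    // y <- 1.0 * x + y, stepping through both vectors with stride 1.
    status = cublasSaxpy(handle, N, &alpha, d_x, 1, d_y, 1);
    cublasDestroy(handle);
  }

  std::vector<float> C;
  if (status == CUBLAS_STATUS_SUCCESS) {
    C.resize(N);
    cudaMemcpy(C.data(), d_y, size, cudaMemcpyDeviceToHost);
  } else {
    std::fprintf(stderr, "cuBLAS call failed (status %d)\n",
                 static_cast<int>(status));
  }
  cudaFree(d_x);
  cudaFree(d_y);
  return C;
}

int main() {
  const int N = 1000;
  std::vector<float> A(N), B(N);
  for (int i = 0; i < N; ++i) {
    A[i] = static_cast<float>(i);
    B[i] = 2.0f * static_cast<float>(i);
  }
  std::vector<float> C = addWithCublas(A, B);
  if (C.empty()) return 1;

  int mismatches = 0;
  for (int i = 0; i < N; ++i) {
    if (C[i] != A[i] + B[i]) ++mismatches;
  }
  std::printf("mismatches: %d\n", mismatches);
  return mismatches == 0 ? 0 : 1;
}
```

There is no `__global__` function, no thread indexing, and no grid size here. The kernel and its tuning live inside the library, which is why the program needs to link against it (for example with `-lcublas` when building with `nvcc`).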

The cuDNN library is especially important in this story—it paved the way for Google’s TensorFlow (2015) and Meta’s PyTorch (2016), enabling deep learning frameworks to take off. While there were earlier AI frameworks, these were the first frameworks to truly scale—modern AI frameworks now have thousands of these CUDA kernels and each is very difficult to write. As AI research exploded, NVIDIA aggressively pushed to expand these libraries to cover the important new use-cases.

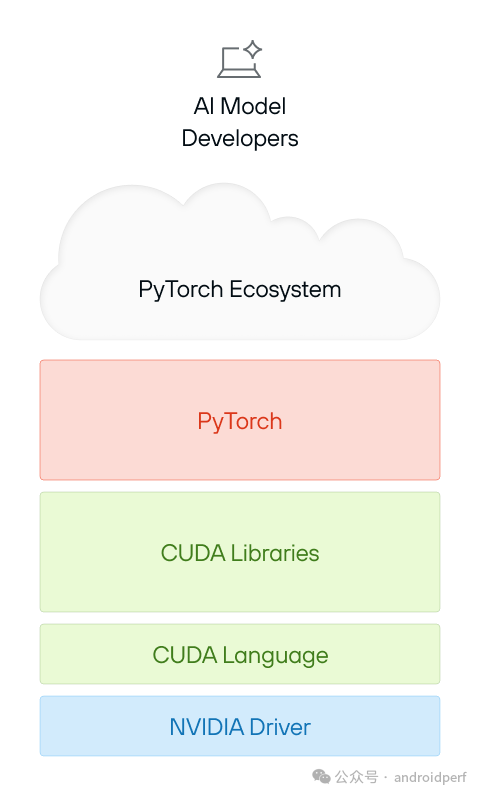

Image depicting a layered stack with AI Model Developers at the top, represented by a laptop icon with a sparkle. Below is a cloud labeled PyTorch Ecosystem, resting above a red block labeled PyTorch. Underneath are three more layers: a green block for CUDA Libraries, another green block for CUDA Language, and a blue block at the bottom labeled NVIDIA Driver. The structure highlights the deep dependency chain required to support PyTorch within the CUDA framework.

PyTorch on CUDA is Built on Multiple Layers of Dependencies

NVIDIA’s investment into these powerful GPU libraries enabled the world to focus on building high-level AI frameworks like PyTorch and developer ecosystems like HuggingFace. Their next step was to make entire solutions that could be used out of the box—without needing to understand the CUDA programming model at all.

Fully vertical solutions to ease the rapid growth of AI and GenAI

The AI boom went far beyond research labs—AI is now everywhere. From image generation to chatbots, from scientific discovery to code assistants, Generative AI (GenAI) has exploded across industries, bringing a flood of new applications and developers into the field.

At the same time, a new wave of AI developers emerged, with very different needs. In the early days, deep learning required highly specialized engineers who understood CUDA, HPC, and low-level GPU programming. Now, a new breed of developer—often called AI engineers—is building and deploying AI models without needing to touch low-level GPU code.

To meet this demand, NVIDIA went beyond just providing libraries—it now offers turnkey solutions that abstract away everything under the hood. Instead of requiring deep CUDA expertise, these frameworks allow AI developers to optimize and deploy models with minimal effort.

- Triton Serving – A high-performance serving system for AI models, allowing teams to efficiently run inference across multiple GPUs and CPUs.

- TensorRT – A deep learning inference optimizer that automatically tunes models to run efficiently on NVIDIA hardware.

- TensorRT-LLM – An even more specialized solution, built for large language model (LLM) inference at scale.

- … plus many (many) other things.

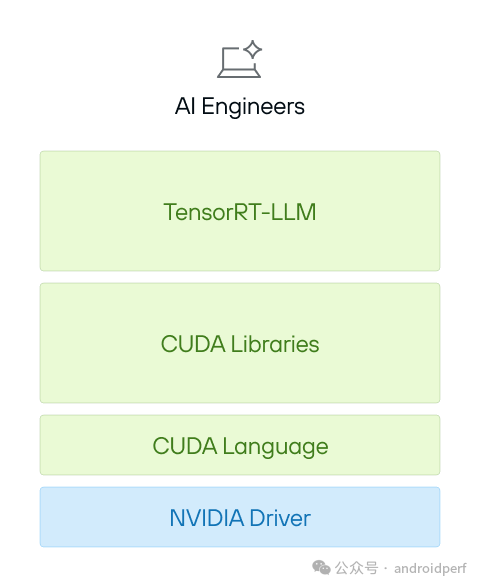

Image showing a vertical stack with AI Engineers at the top, represented by a laptop icon with a sparkle. Below are four layers: a green block labeled TensorRT-LLM, followed by CUDA Libraries, then CUDA Language, and finally a blue block at the bottom labeled NVIDIA Driver. The layered structure highlights the multiple dependencies required for AI development within the CUDA ecosystem.

These tools completely shield AI engineers from CUDA’s low-level complexity, letting them focus on AI models and applications, not hardware details. These systems provide significant leverage, which has enabled the horizontal scale of AI applications.

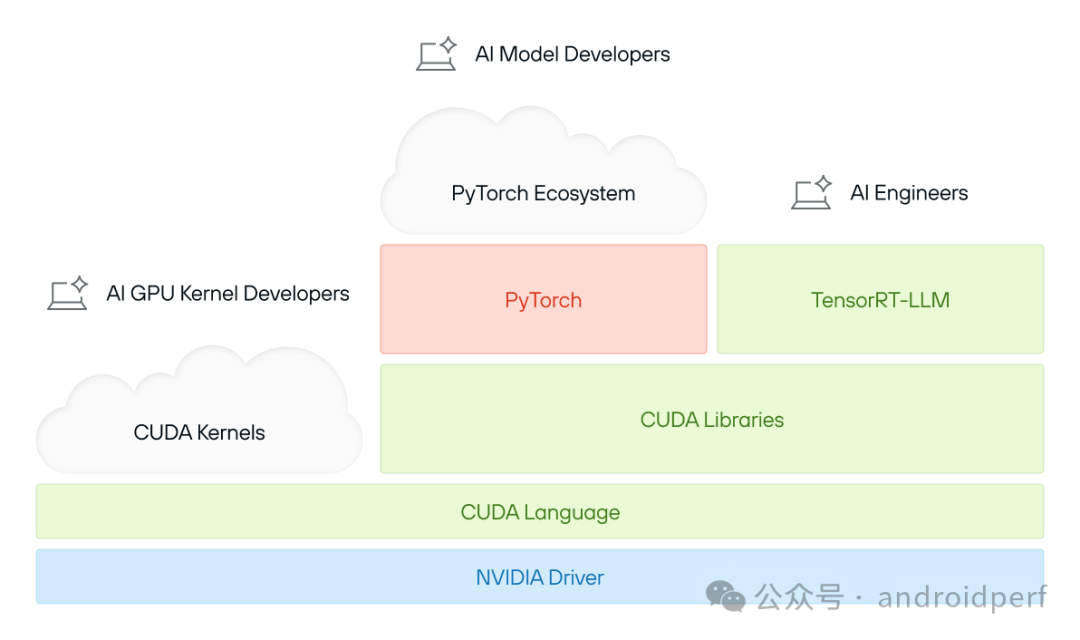

The “CUDA Platform” as a whole

CUDA is often thought of as a programming model, a set of libraries, or even just "that thing NVIDIA GPUs run AI on." But in reality, CUDA is much more than that—it is a unifying brand, a truly vast collection of software, and a highly tuned ecosystem, all deeply integrated with NVIDIA’s hardware. For this reason, the term “CUDA” is ambiguous—we prefer the term “The CUDA Platform” to clarify that we’re talking about something closer in spirit to the Java ecosystem, or even an operating system, than merely a programming language and runtime library.

Image showing a layered stack of the CUDA ecosystem. At the top are icons for AI GPU Kernel Developers, AI Model Developers, and AI Engineers, with clouds for CUDA Kernels and PyTorch Ecosystem. Below are PyTorch, TensorRT-LLM, CUDA Libraries, CUDA Language, and the foundational NVIDIA Driver, highlighting CUDA’s complex dependencies.

At its core, the CUDA Platform consists of:

- A massive codebase – Decades of optimized GPU software, spanning everything from matrix operations to AI inference.

- A vast ecosystem of tools & libraries – From cuDNN for deep learning to TensorRT for inference, CUDA covers an enormous range of workloads.

- Hardware-tuned performance – Every CUDA release is deeply optimized for NVIDIA’s latest GPU architectures, ensuring top-tier efficiency.

- Proprietary and opaque – When developers interact with CUDA’s library APIs, much of what happens under the hood is closed-source and deeply tied to NVIDIA’s ecosystem.

CUDA is a powerful but sprawling set of technologies—an entire software platform that sits at the foundation of modern GPU computing, even going beyond AI specifically.

Now that we know what “CUDA” is, we need to understand how it got to be so successful. Here’s a hint: CUDA’s success isn’t really about performance—it’s about strategy, ecosystem, and momentum. In the next post, we’ll explore what enabled NVIDIA’s CUDA software to shape and entrench the modern AI era.

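This article is part of the Tencent Cloud self-media syndication program and was shared from a WeChat official account.

Originally published: 2025-04-07. For copyright concerns, contact cloudcommunity@tencent.com to request removal.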
