ICLR 2026 | Time Series Paper Roundup [Part 2]: Classification, Anomaly Detection, Generation, Imputation, LLMs and Foundation Models

时空探索之旅

Published 2026-03-10 16:05:13

ICLR 2026 will be held in Rio de Janeiro, Brazil, from April 24 to 28, 2026. ICLR 2026 received more than 19,000 submissions and accepted 5,359, an acceptance rate of 28.18%.

This post summarizes the time series papers at ICLR 2026: 87 papers in total, 48 of which are covered here. If we missed anything, additions are welcome.

According to OpenReview, items 3 and 34 in the list below are Orals and the rest are Posters (if this is wrong, please correct us in the comments).

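Note: Because there are so many papers, this roundup is split into two parts. This is Part 2, which mainly covers time series classification, anomaly detection, generation, imputation, foundation models and large language models, multimodality, representation learning, medical time series, benchmarks, and more.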

Part 1: ICLR 2026 | Time Series Paper Roundup (Part 1): Forecasting, Multimodal, Forecasting × LLM, Foundation Models

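Observation: 7 papers have an average score of at least 6; the other summary statistics are shown in the table below.

| Highest average | Mean | Lowest average |
|---|---|---|
| 6.5 | 5.21 | 3.5 |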
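To make the "Average" figures concrete: each paper's average is the arithmetic mean of its reviewer scores, and the table above reports the highest, mean and lowest of those per-paper averages. The Python sketch below reproduces that arithmetic for five papers whose scores are copied from their entries further down. Because it covers only 5 of the 48 papers, its summary tuple is not expected to match the table; the dictionary and helper names are illustrative, not from the original post.

```python
from statistics import mean

# Reviewer scores copied from five of the entries below (illustrative subset only).
scores = {
    "PYRREGULAR": [6, 6, 6],
    "T1": [6, 6, 6, 4],
    "Time-Gated Multi-Scale Flow Matching": [4, 6, 6, 6, 6],
    "Understanding Transformers for Time Series": [8, 4, 6, 8],
    "Designing Time Series Experiments in A/B Testing": [4, 4, 4, 2],
}

def paper_average(paper_scores):
    """Arithmetic mean of one paper's reviewer scores, rounded to 2 decimals."""
    return round(mean(paper_scores), 2)

def summarize(averages):
    """(highest, mean, lowest) of the per-paper averages, like the table columns."""
    values = list(averages.values())
    return max(values), round(mean(values), 2), min(values)

averages = {name: paper_average(s) for name, s in scores.items()}
for name, avg in averages.items():
    print(f"{avg:.2f}  {name}")
print(summarize(averages))
```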

1. PYRREGULAR: A Unified Framework for Irregular Time Series, with Classification Benchmarks
2. MambaSL: Exploring Single-Layer Mamba for Time Series Classification
3. [Oral] TIMESLIVER : SYMBOLIC-LINEAR DECOMPOSITION FOR EXPLAINABLE TIME SERIES CLASSIFICATION
4. CauKer: Classification Time Series Foundation Models Can Be Pretrained on Synthetic Data
5. Repurposing Foundation Model for Generalizable Medical Time Series Classification
6. Towards Multimodal Time Series Anomaly Detection with Semantic Alignment and Condensed Interaction
7. Low Rank Transformer for Multivariate Time Series Anomaly Detection and Localization
8. Contextual and Seasonal LSTMs for Time Series Anomaly Detection
9. ICDiffAD: Implicit Conditioning Diffusion Model for Time Series Anomaly Detection
10. Adaptive Conformal Anomaly Detection with Time Series Foundation Models for Signal Monitoring
11. When Foundation Models are One-Liners: Limitations and Future Directions for Time Series Anomaly Detection
12. Complexity- and Statistics-Guided Anomaly Detection in Time Series Foundation Models
13. Beyond Accuracy: Are Time Series Foundation Models Well-Calibrated?
14. Understanding the Implicit Biases of Design Choices for Time Series Foundation Models
15. UniCA: Unified Covariate Adaptation for Time Series Foundation Model
16. TimeOmni-1: Incentivizing Complex Reasoning with Time Series in Large Language Models
17. Rating Quality of Diverse Time Series Data by Meta-learning from LLM Judgment
18. Adapt Data to Model: Adaptive Transformation Optimization for Domain-shared Time Series Foundation Models
19. FeDaL: Federated Dataset Learning for General Time Series Foundation Models
20. SciTS: Scientific Time Series Understanding and Generation with LLMs
21. CTBench: Cryptocurrency Time Series Generation Benchmark
22. Latent-to-Data Cascaded Diffusion Models for Unconditional Time Series Generation
23. Functional MRI Time Series Generation via Wavelet-Based Image Transform and Spectral Flow Matching for Brain Disorder Identification
24. Multi-Scale Hypergraph Meets LLMs: Aligning Large Language Models for Time Series Analysis
25. Can we generate portable representations for clinical time series data using LLMs?
26. Understanding Transformers for Time Series: Rank Structure, Flow-of-ranks, and Compressibility
27. Decentralized Attention Fails Centralized Signals: Rethinking Transformers for Medical Time Series
28. SRT: Super-Resolution for Time Series via Disentangled Rectified Flow
29. PINFDiT: Energy-Based Physics-Informed Diffusion Transformers for General-purpose Time Series Tasks
30. GARLIC: Graph Attention-based Relational Learning of Multivariate Time Series in Intensive Care
31. AutoDA-Timeseries: Automated Data Augmentation for Time Series
32. SwiftTS: A Swift Selection Framework for Time Series Pre-trained Models via Multi-task Meta-Learning
33. DeNOTS: Stable Deep Neural ODEs for Time Series
34. [Oral] TCD-Arena: Assessing Robustness of Time Series Causal Discovery Methods Against Assumption Violations
35. Lost in the Non-convex Loss Landscape: How to Fine-tune the Large Time Series Model?
36. HiMAE: Hierarchical Masked Autoencoders Discover Resolution-Specific Structure in Wearable Time Series
37. Designing Time Series Experiments in A/B Testing with Transformer Reinforcement Learning
38. PGRF-Net: A Prototype-Guided Relational Fusion Network for Diagnostic Multivariate Time-Series Anomaly Detection
39. Language in the Flow of Time: Time-Series-Paired Texts Weaved into a Unified Temporal Narrative
40. TSPulse: Tiny Pre-Trained Models with Disentangled Representations for Rapid Time-Series Analysis
41. GTM: A General Time-series Model for Enhanced Representation Learning of Time-Series data
42. PaAno: Patch-Based Representation Learning for Time-Series Anomaly Detection
43. A Study of Posterior Stability in Time-Series Latent Diffusion
44. Structure Learning from Time-Series Data with Lag-Agnostic Structural Prior
45. T1: One-to-One Channel-Head Binding for Multivariate Time-Series Imputation
46. Reasoning on Time-Series for Financial Technical Analysis
47. Time-Gated Multi-Scale Flow Matching for Time-Series Imputation
48. Enhancing Sparse Event Detection in Healthcare Time-Series via Adaptive Gate of Context–Detail Interaction

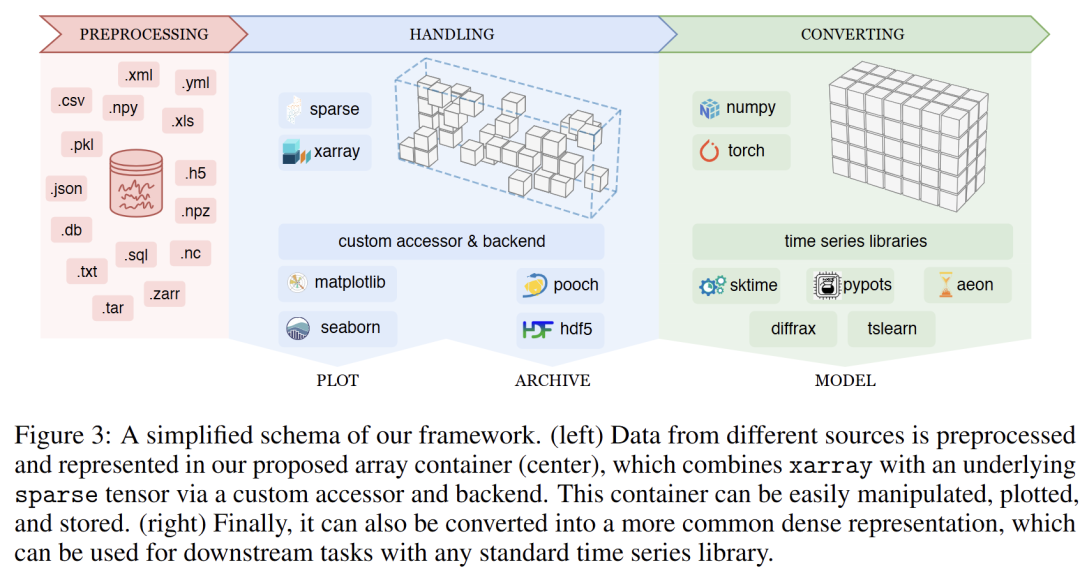

1 PYRREGULAR: A Unified Framework for Irregular Time Series, with Classification Benchmarks

Link: https://openreview.net/forum?id=qetBM8nLkf

Keywords: irregular time series, classification

Authors: Francesco Spinnato, Cristiano Landi

Scores: 6, 6, 6

Confidence: 4, 4, 4

Average: 6.0

TL; DR:This work introduces a unified framework and the first standardized repository for irregular time series classification, enabling consistent evaluation of 12 classifiers across 34 datasets to address fragmented approaches to irregular temporal data.
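Irregular time series differ in length and in sampling times, which breaks classifiers that expect fixed-size arrays. One common workaround, shown here as a minimal stdlib sketch, is to pad every series to the longest length and carry a mask that marks real observations. This is a generic illustration with a hypothetical helper name (`pad_irregular`); it is not PYRREGULAR's actual data format or API.

```python
import math

def pad_irregular(series_list):
    """Pad ragged series of (timestamp, value) pairs to a common length.

    Padded slots get NaN timestamps/values and mask 0, so a downstream model
    can tell real observations (mask 1) apart from padding.
    """
    max_len = max(len(s) for s in series_list)
    times, values, masks = [], [], []
    for s in series_list:
        pad = max_len - len(s)
        times.append([t for t, _ in s] + [math.nan] * pad)
        values.append([v for _, v in s] + [math.nan] * pad)
        masks.append([1] * len(s) + [0] * pad)
    return times, values, masks

# Two toy series observed at different, uneven times.
a = [(0.0, 1.2), (0.7, 1.5), (3.1, 0.9)]
b = [(0.4, 2.0)]
times, values, masks = pad_irregular([a, b])
print(masks)  # [[1, 1, 1], [1, 0, 0]]
```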

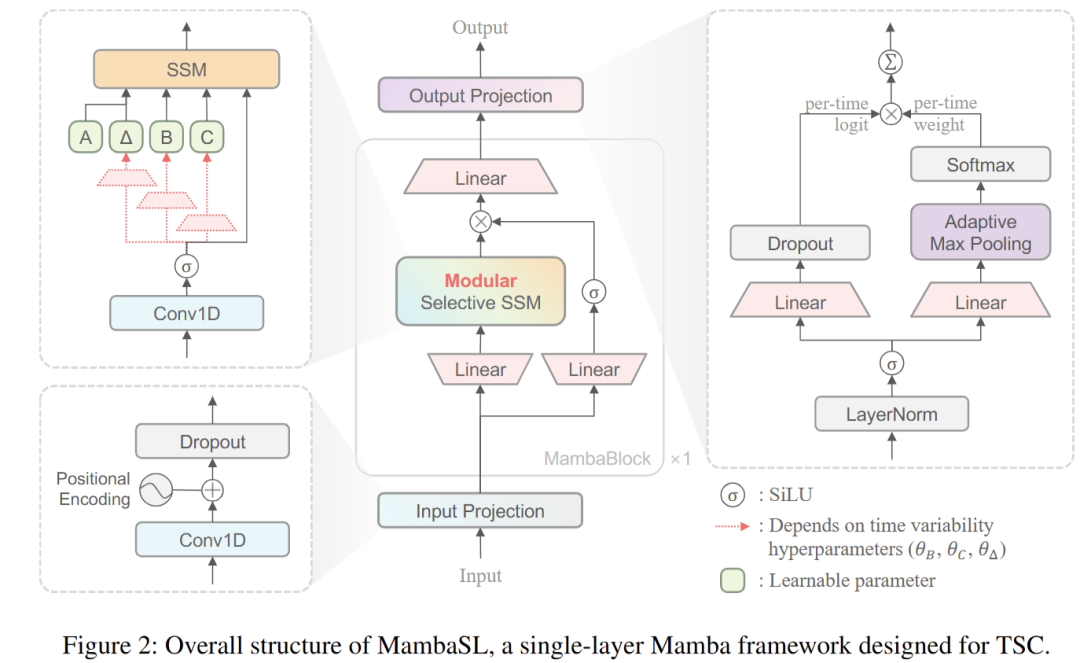

2 MambaSL: Exploring Single-Layer Mamba for Time Series Classification

Link: https://openreview.net/forum?id=YDl4vqQqGP

Keywords: modular selective SSM, multi-head adaptive pooling, skip connection, single-layer Mamba, time series classification

Authors: Yoo-Min Jung, Leekyung Kim

Scores: 4, 6, 8

Confidence: 4, 3, 2

Average: 6.0

TL; DR:We introduce MambaSL, a minimally redesigned single-layer Mamba that achieves state-of-the-art accuracy on the UEA30 benchmark, with reproducible evaluation covering all baselines.

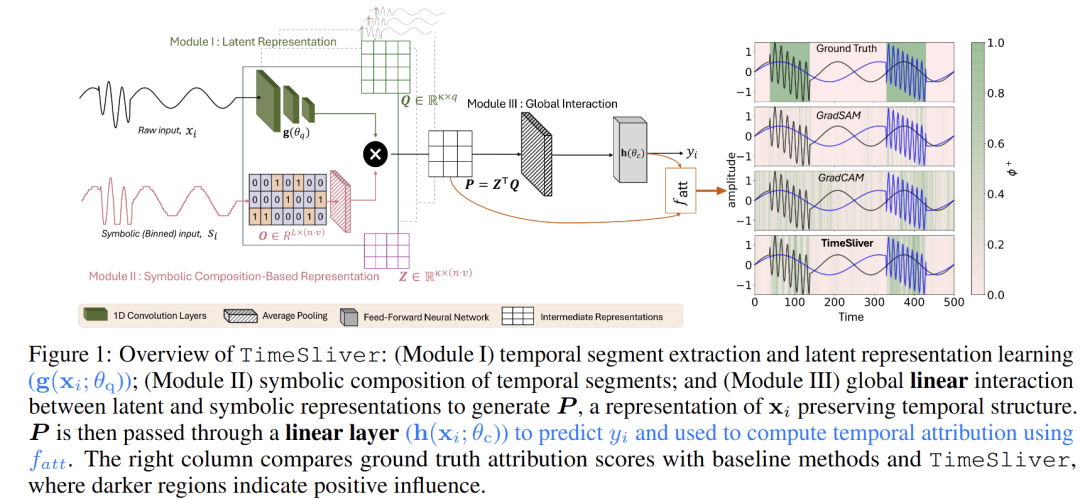

3 TIMESLIVER : SYMBOLIC-LINEAR DECOMPOSITION FOR EXPLAINABLE TIME SERIES CLASSIFICATION

Link: https://openreview.net/forum?id=MDRp9XhGtS

Keywords: Time-series, Interpretability, Temporal Attribution

Authors: Akash Pandey, Payal Mohapatra, Wei Chen, Qi Zhu, Sinan Keten

Scores: 4, 4, 6, 6

Confidence: 3, 4, 3, 3

Average: 5.0

TL; DR:Using a linear composition of symbolic and latent representations of multivariate time series, we provide temporal attribution scores that improve explainability without reducing predictive performance.

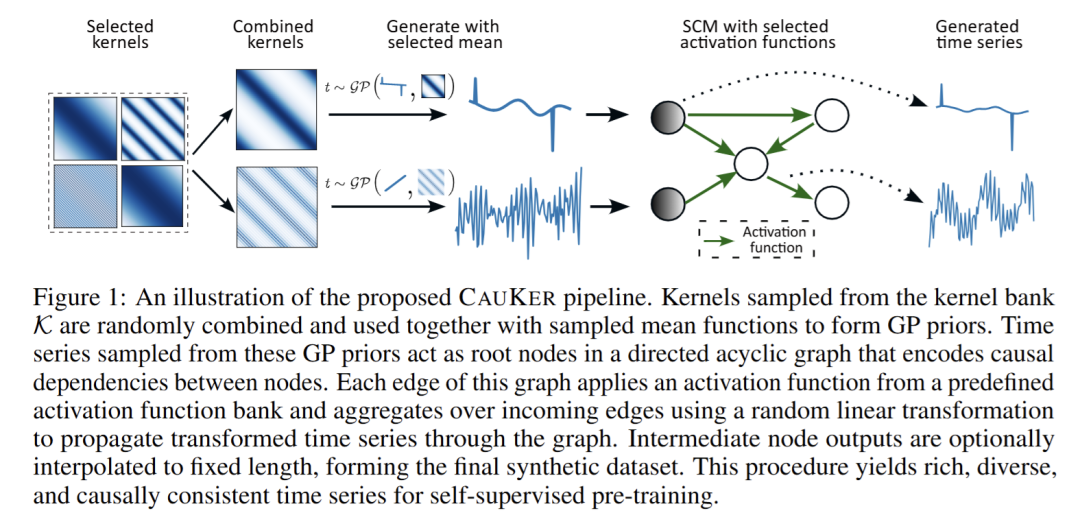

4 [Oral] CauKer: Classification Time Series Foundation Models Can Be Pretrained on Synthetic Data

Link: https://openreview.net/forum?id=xBW2FIfswU

Decision: Oral

Keywords: Time Series Foundation Model, Time Series Classification

Authors: Shifeng Xie, Vasilii Feofanov, Jianfeng Zhang, Themis Palpanas, Ievgen Redko

Scores: 8, 4, 6, 6

Confidence: 4, 4, 5, 4

Average: 6.0

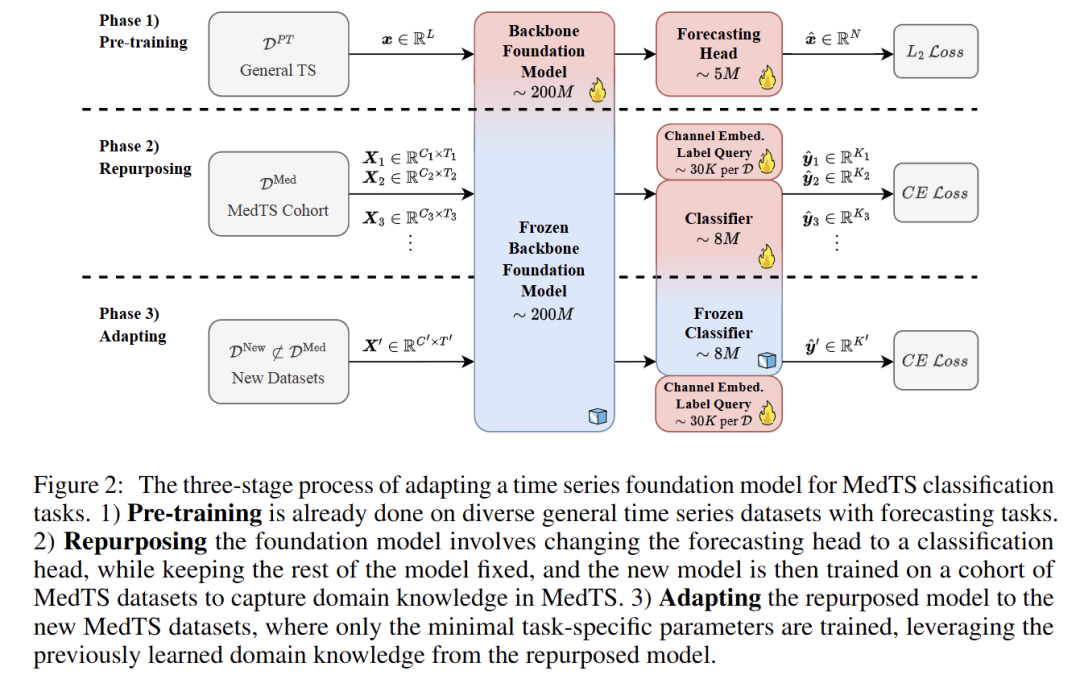

5 Repurposing Foundation Model for Generalizable Medical Time Series Classification

Link: https://openreview.net/forum?id=wNEzRYiyZM

Keywords: Medical Time Series, Classification, Time Series Foundation Model

Authors: Nan Huang, Haishuai Wang, Zihuai He, Marinka Zitnik, Xiang Zhang

Scores: 6, 4, 8, 4

Confidence: 4, 4, 3, 4

Average: 5.5

TL; DR:FORMED repurposes pre-trained time series models for medical classification, achieving 35% F1-score improvement through lightweight adaptation across diverse datasets.

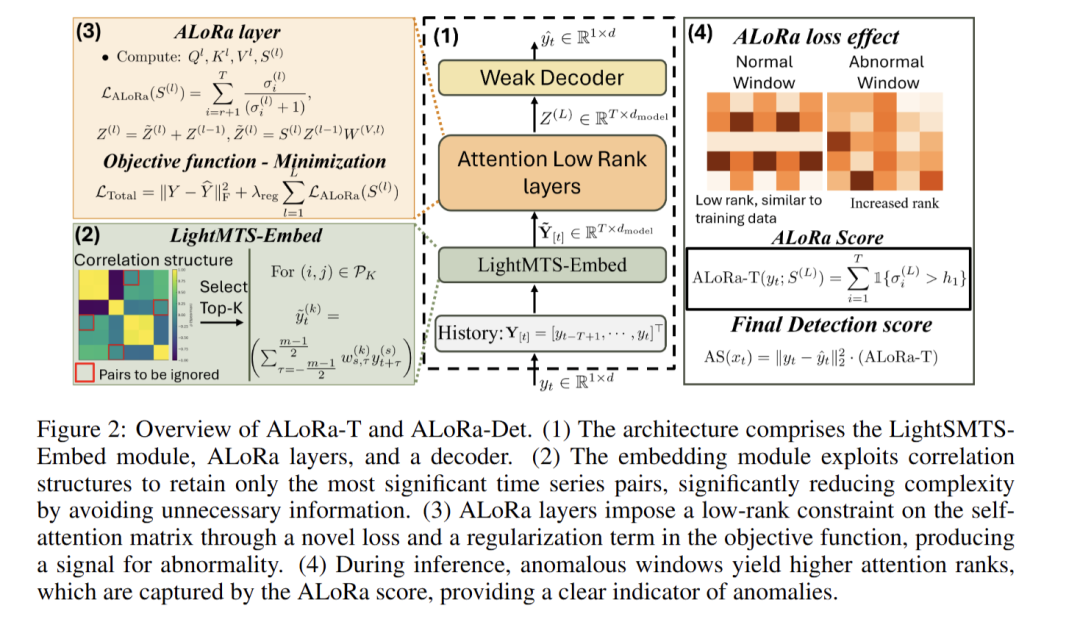

6 Low Rank Transformer for Multivariate Time Series Anomaly Detection and Localization

Link: https://openreview.net/forum?id=ZtPIBpVojC

Keywords: Anomaly detection, Anomaly localization, Multivariate time series, Space-time autoregression, Transformer

Authors: Charalampos Shimillas, Kleanthis Malialis, Konstantinos Fokianos, Marios Polycarpou

Scores: 6, 6, 4, 6

Confidence: 3, 4, 4, 5

Average: 5.5

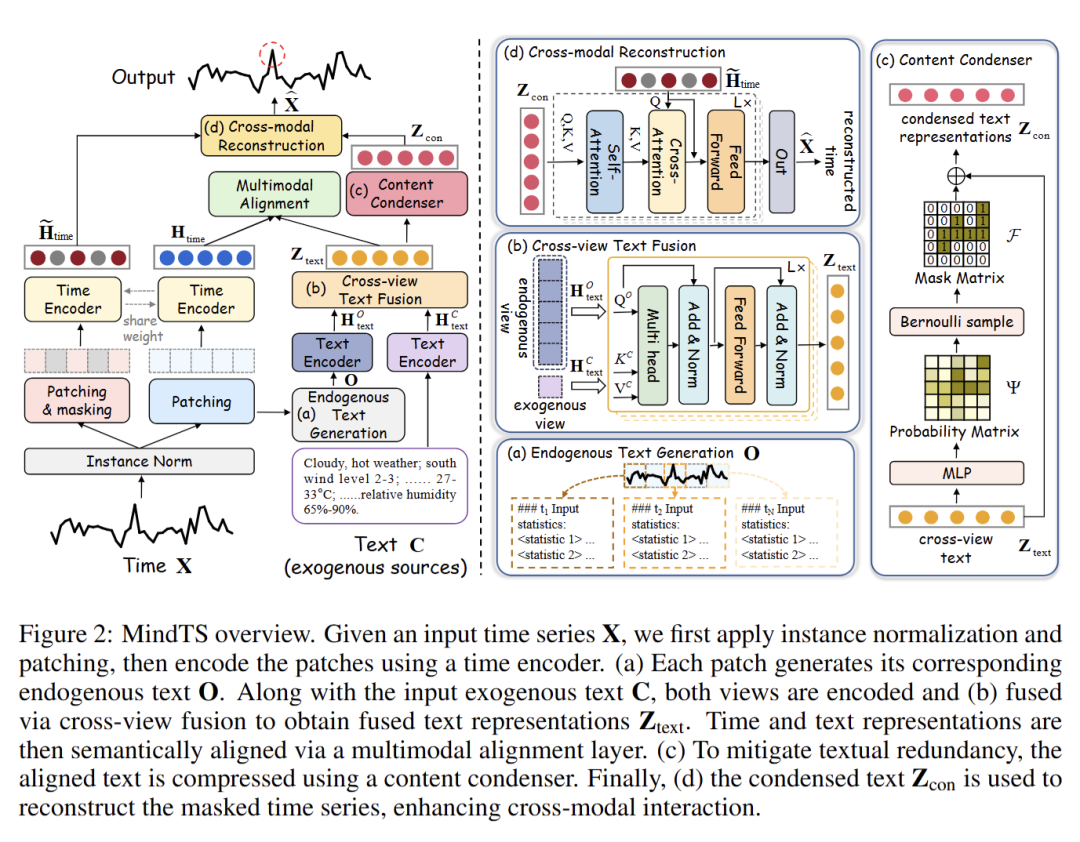

7 Towards Multimodal Time Series Anomaly Detection with Semantic Alignment and Condensed Interaction

Link: https://openreview.net/forum?id=fNFbGqu6Rg

Keywords: multimodal time series, anomaly detection

Authors: Shiyan Hu, Jianxin Jin, Yang Shu, Peng Chen, Bin Yang, Chenjuan Guo

Scores: 6, 6, 4, 6

Confidence: 3, 4, 4, 4

Average: 5.5

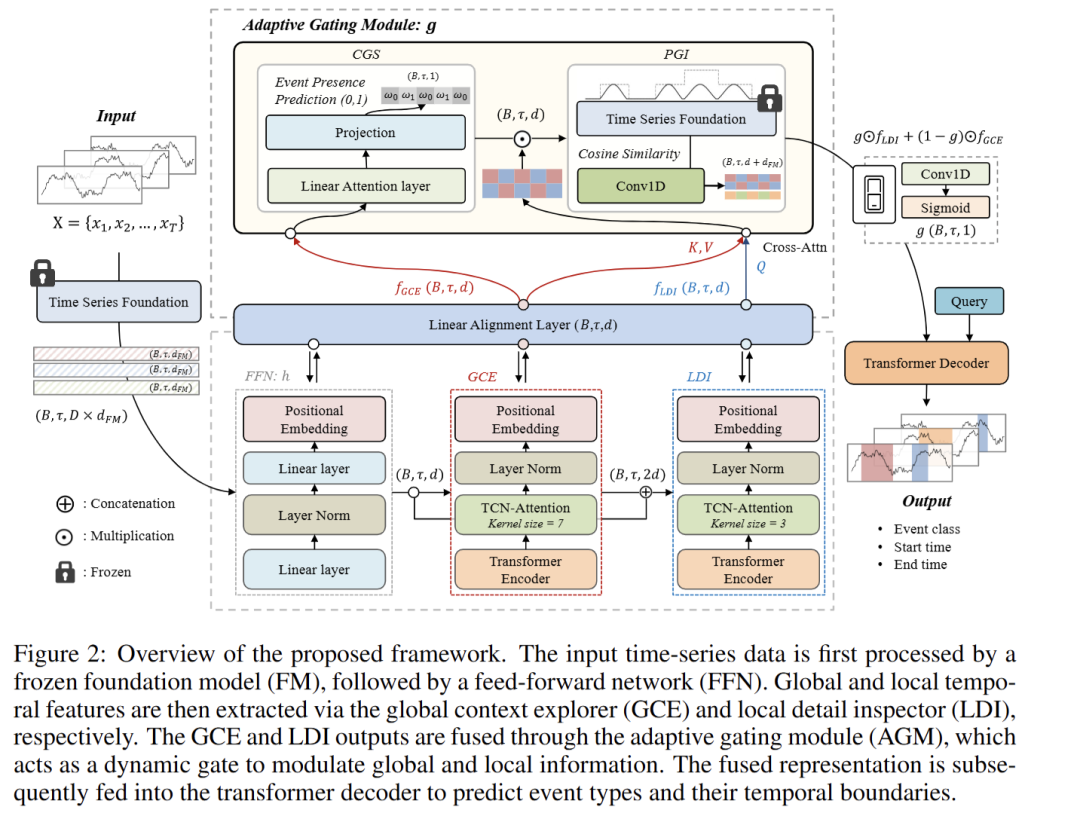

8 Enhancing Sparse Event Detection in Healthcare Time-Series via Adaptive Gate of Context–Detail Interaction

Link: https://openreview.net/forum?id=DulnZ7Dv82

Keywords: Event detection, Time series analysis, Healthcare

Authors: Beomjun Bark, Yun Kwan Kim

Scores: 6, 4, 6, 6

Confidence: 2, 5, 3, 4

Average: 5.5

TL; DR:We propose an adaptive gating framework that improves sparse event detection in healthcare time-series by selectively fusing context and detail features.

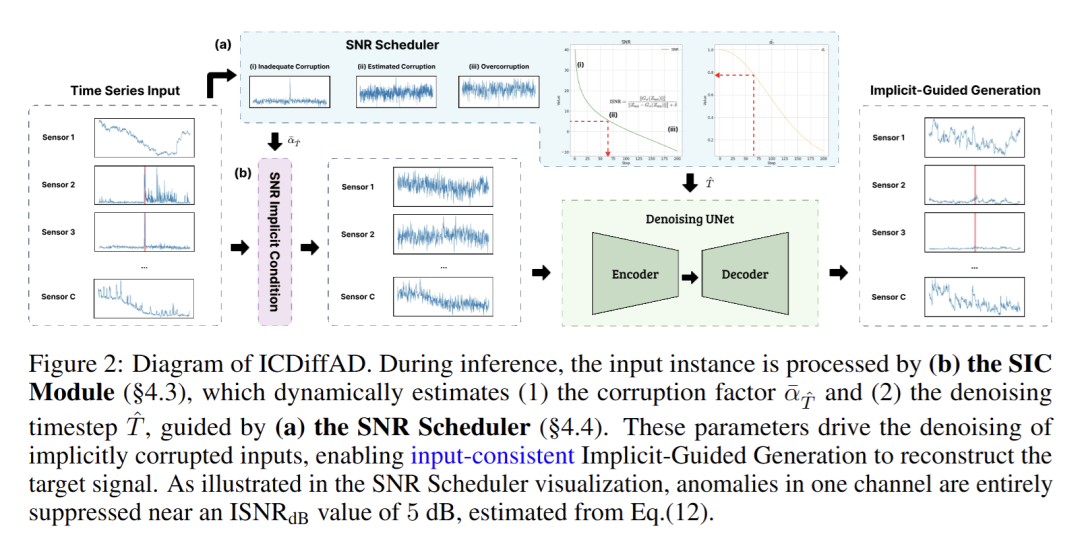

9 ICDiffAD: Implicit Conditioning Diffusion Model for Time Series Anomaly Detection

Link: https://openreview.net/forum?id=HIkuWAikXC

Keywords: Time Series, Anomaly Detection, Diffusion Model, Implicit Conditioning

Authors: Fan Zhang, Sinchee Chin, Jing-Hao Xue, Wenming Yang

Scores: 6, 4, 4, 2

Confidence: 4, 4, 4, 3

Average: 4.0

TL; DR:We propose a fix to current diffusion models in time series anomaly detection, guided by Signal to Noise Ratio both in training and inference, improving current Diffusion Models by 20.2% F1 Scores.
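The "Signal to Noise Ratio" in this TL;DR is a standard quantity in diffusion models. With a noise schedule β_t and ᾱ_t = ∏_{s≤t} (1 − β_s), the noisy sample is x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε, so SNR(t) = ᾱ_t / (1 − ᾱ_t), which shrinks as t grows. The sketch below computes it for a linear schedule from 1e-4 to 0.02 over 1,000 steps (the common DDPM default, assumed here). How ICDiffAD actually uses SNR in training and inference is not described in this post, so the code shows only the textbook definition.

```python
import math

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule (assumed DDPM-style defaults)."""
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]

def snr_schedule(betas):
    """SNR(t) = alpha_bar_t / (1 - alpha_bar_t), alpha_bar_t = prod_{s<=t} (1 - beta_s)."""
    snr, alpha_bar = [], 1.0
    for beta in betas:
        alpha_bar *= 1.0 - beta          # cumulative signal-retention factor
        snr.append(alpha_bar / (1.0 - alpha_bar))
    return snr

snr = snr_schedule(linear_betas())
print(f"log10 SNR at first step: {math.log10(snr[0]):.2f}, "
      f"at last step: {math.log10(snr[-1]):.2f}")
```

Early steps are almost pure signal (very high SNR) and the last steps are almost pure noise, which is why SNR is a natural axis for deciding how much noise to add or remove.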

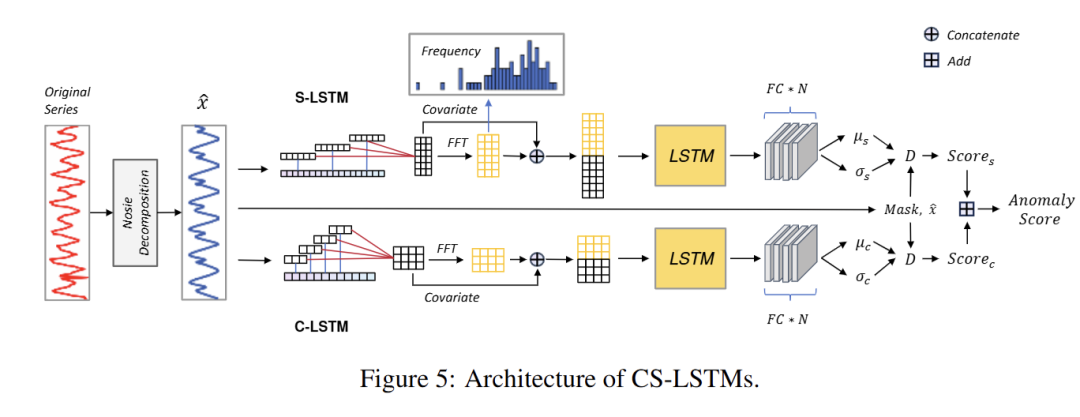

10 Contextual and Seasonal LSTMs for Time Series Anomaly Detection

Link: https://openreview.net/forum?id=2VtveTkmzW

Keywords: time series anomaly detection

Authors: Lingpei Zhang, Qingming Li, Yong Yang, Jiahao Chen, Rui Zeng, Chenyang Lyu, Shouling Ji

Scores: 4, 6, 4

Confidence: 3, 4, 2

Average: 4.67

TL; DR:We present CS-LSTMs that accomplishes anomaly detection for univariate time series with unified framework.

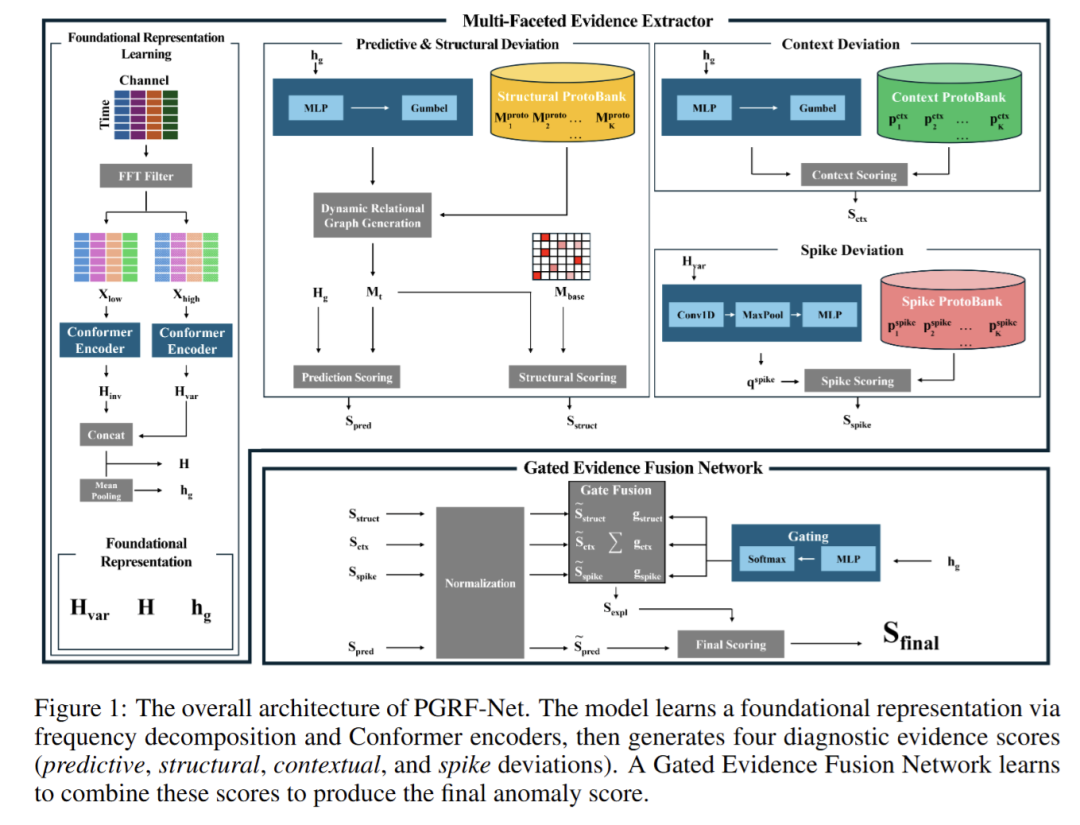

11 PGRF-Net: A Prototype-Guided Relational Fusion Network for Diagnostic Multivariate Time-Series Anomaly Detection

Link: https://openreview.net/forum?id=3hS7EtL4bV

Keywords: Multivariate Timeseries Anomaly Detection, Time-Series Diagnostics, Prototype Learning, Relational Time-Series Modeling

Authors: Jahoon Jeong, Hyunsoo Yoon

Scores: 2, 6, 4, 6

Confidence: 4, 3, 3, 4

Average: 4.5

TL; DR:PGRF-Net: A 2-stage unsupervised MTSAD model. It generates 4 prototype/relational evidence types, adaptively fused for detection. Achieves SOTA performance & provides diagnostic scores to aid root cause analysis.

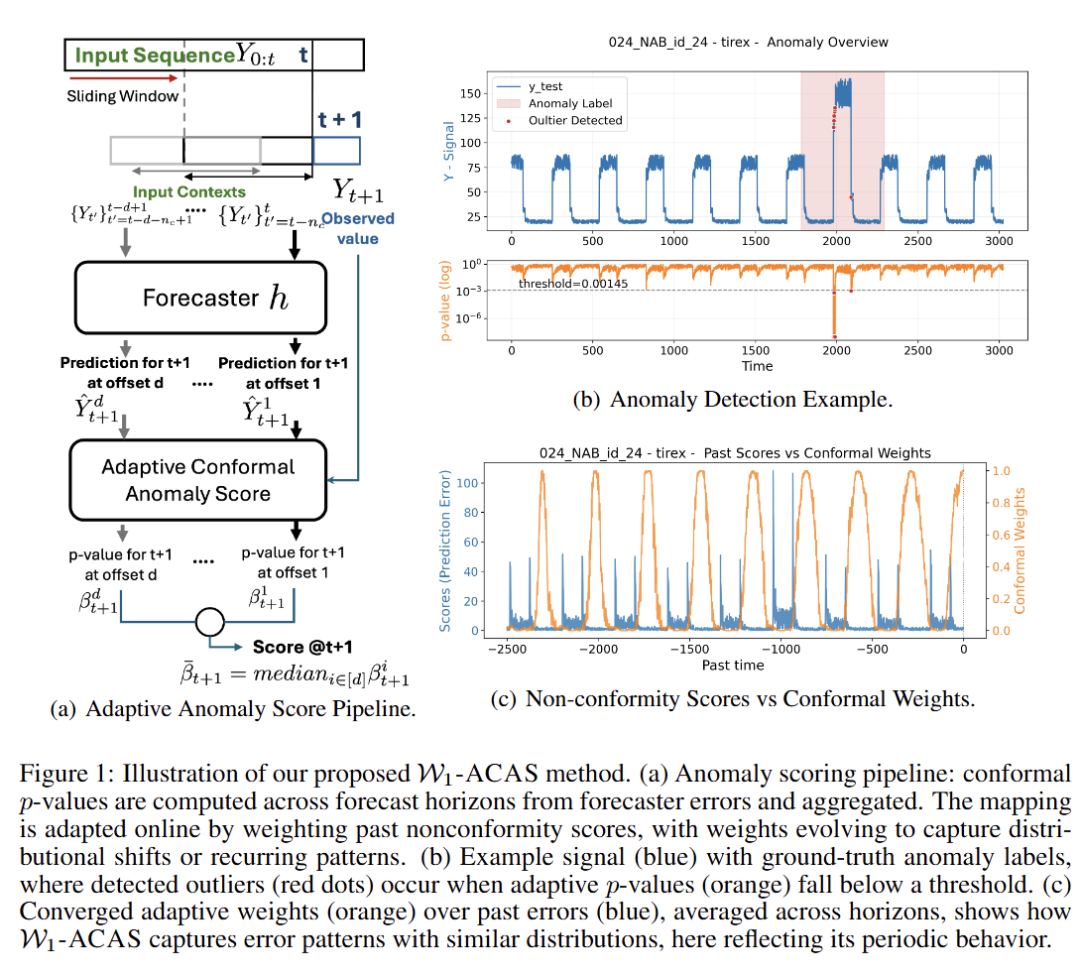

12 Adaptive Conformal Anomaly Detection with Time Series Foundation Models for Signal Monitoring.

Link: https://openreview.net/forum?id=7uFbs68MSI

Keywords: time series anomaly detection, conformal prediction, anomaly detection, monitoring sequential signals

Authors: Natalia Martinez, Fearghal O'Donncha, Wesley Gifford, Nianjun Zhou, Dhaval Patel, Roman Vaculin

Scores: 6, 6, 4

Confidence: 3, 3, 4

Average: 5.33

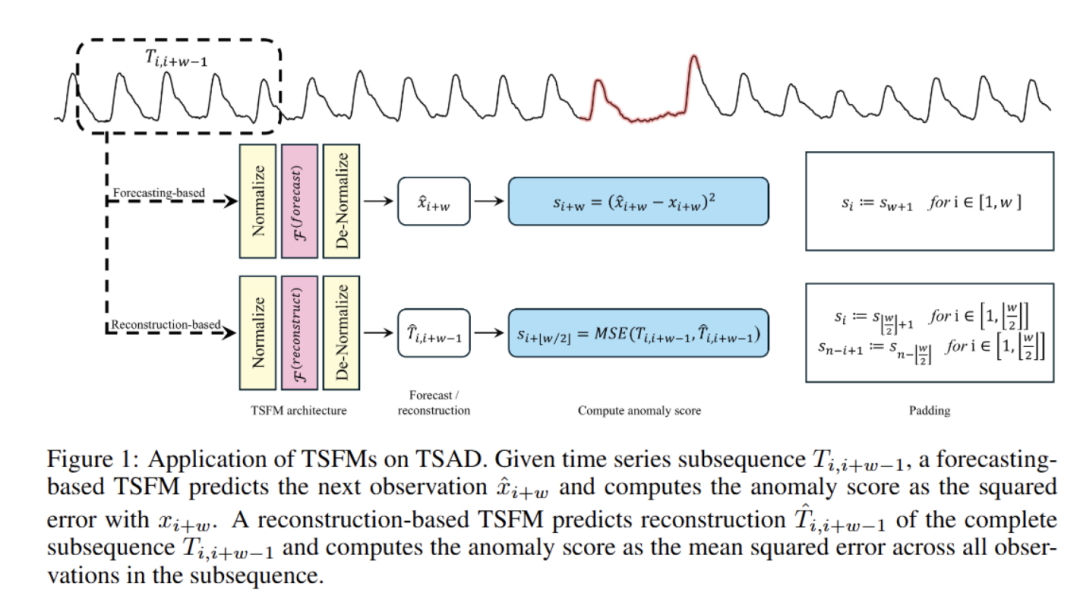

13 When Foundation Models are One-Liners: Limitations and Future Directions for Time Series Anomaly Detection

Link: https://openreview.net/forum?id=H27kvyG4qf

Keywords: Time Series, Foundation Model, Anomaly Detection

Authors: Xiaokun Zhu, Louis Carpentier, Mathias Verbeke

Scores: 4, 6, 6, 4

Confidence: 4, 4, 5, 3

Average: 5.0

TL; DR:We show that the current methodologies of applying time-series foundation models to time-series anomaly detection are flawed, and suggest alternative directions to make foundation models effective.

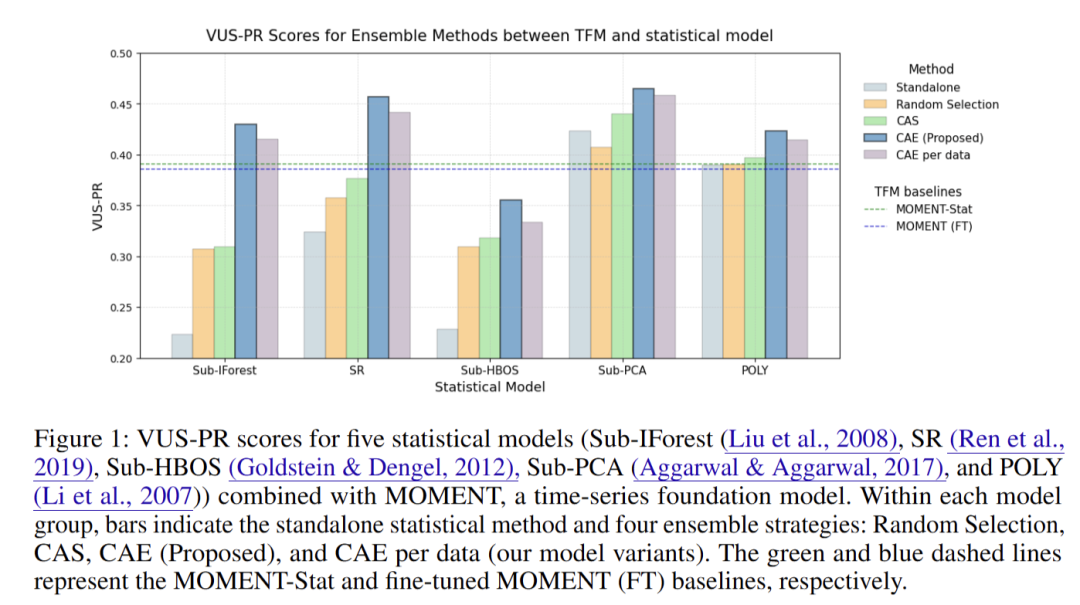

14 Complexity- and Statistics-Guided Anomaly Detection in Time Series Foundation Models

Link: https://openreview.net/forum?id=rBt9aW3Mx7

Keywords: Timeseries anomaly detection, Timeseries foundation model, Reconstruction based anomaly detection

Authors: Jongwon Kim, Young Ko, Samuel Yoon, Yerin Kim, Sung Kim, JAEUNG TAE

Scores: 4, 2, 6, 4

Confidence: 4, 4, 3, 4

Average: 4.0

TL; DR:We propose solutions based on a complexity measure, that captures high-frequency complexity and restores statistical features removed by RevIN, leading to theoretical and empirical improvements in anomaly detection.
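RevIN (reversible instance normalization) subtracts each input window's mean and divides by its standard deviation, then adds those statistics back to the model output. The minimal sketch below (stdlib only, without RevIN's optional learnable affine parameters) shows why this matters for reconstruction-based anomaly detection: a window whose level has jumped by +100 normalizes to the same values as the normal window, so the anomaly is invisible in normalized space unless the removed statistics are brought back. It illustrates the problem the TL;DR names, not the paper's complexity-based fix.

```python
from statistics import fmean, pvariance

def revin_normalize(x, eps=1e-5):
    """Instance-normalize one window; return normalized values and the removed (mean, std)."""
    mu = fmean(x)
    sigma = (pvariance(x) + eps) ** 0.5
    return [(v - mu) / sigma for v in x], (mu, sigma)

def revin_denormalize(y, stats):
    """Reverse step: put the stored mean and std back onto a model output."""
    mu, sigma = stats
    return [v * sigma + mu for v in y]

normal = [1.0, 2.0, 3.0, 2.0]
shifted = [v + 100.0 for v in normal]  # same shape, anomalous level shift

z_normal, _ = revin_normalize(normal)
z_shifted, stats = revin_normalize(shifted)

# The level shift disappears after normalization ...
print(max(abs(a - b) for a, b in zip(z_normal, z_shifted)))
# ... and only reappears once the stored statistics are added back.
print(revin_denormalize(z_shifted, stats))
```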

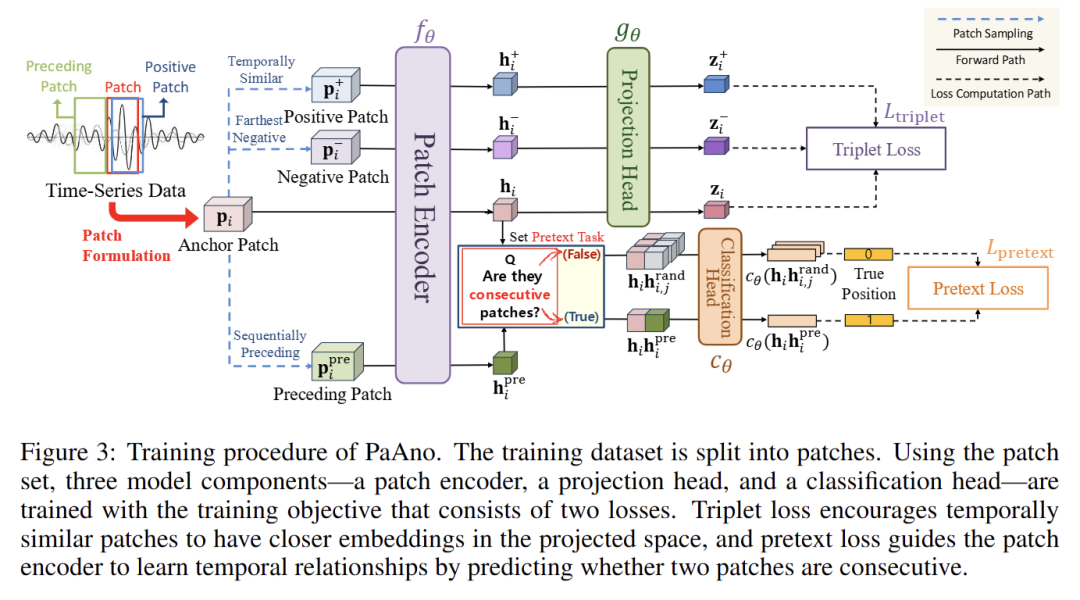

15 PaAno: Patch-Based Representation Learning for Time-Series Anomaly Detection

Link: https://openreview.net/forum?id=NXThkM7Iym

Keywords: Time-Series Anomaly Detection, Representation Learning

Authors: Jinju Park, Seokho Kang

Scores: 4, 4, 6, 6

Confidence: 3, 4, 4, 3

Average: 5.0

TL; DR: PaAno is a lightweight yet effective method for time-series anomaly detection, leveraging patch-based representation learning with a simple 1D-CNN. It outperforms heavyweight methods based on transformers and foundation models.
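Patch-based detectors first cut a series into short, usually overlapping windows and then score each window. The sketch below shows only that skeleton: `make_patches` does the slicing and, as a hypothetical stand-in for PaAno's learned 1D-CNN embeddings, `patch_anomaly_scores` uses raw patches and scores each test patch by its Euclidean distance to the nearest patch drawn from normal training data.

```python
def make_patches(series, patch_len, stride):
    """Slice a 1-D series into fixed-length patches taken every `stride` steps."""
    if patch_len <= 0 or stride <= 0:
        raise ValueError("patch_len and stride must be positive")
    return [series[i:i + patch_len]
            for i in range(0, len(series) - patch_len + 1, stride)]

def euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def patch_anomaly_scores(test_patches, normal_patches):
    """Hypothetical scorer: distance from each test patch to its nearest normal patch."""
    return [min(euclidean(p, r) for r in normal_patches) for p in test_patches]

train = [0.0, 1.0] * 8                            # clean periodic signal
test = [0.0, 1.0, 0.0, 1.0, 5.0, 1.0, 0.0, 1.0]   # spike at index 4
normal_patches = make_patches(train, patch_len=4, stride=1)
scores = patch_anomaly_scores(make_patches(test, 4, 1), normal_patches)
print(scores)  # patches overlapping the spike score well above the clean one
```

Replacing `euclidean` on raw values with a distance between learned embeddings is where a representation-learning method would plug in.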

16 Understanding the Implicit Biases of Design Choices for Time Series Foundation Models

Link: https://openreview.net/forum?id=5jkzTzV5Ao

Keywords: time series, foundation models, inductive bias, frequency, uncertainty, geometry

Authors: Annan Yu, Danielle Maddix, Boran Han, Xiyuan Zhang, Abdul Fatir Ansari, Oleksandr Shchur, Christos Faloutsos, Andrew Gordon Wilson, Michael W Mahoney, Bernie Wang

Scores: 6, 6, 6, 4

Confidence: 3, 4, 3, 4

Average: 5.5

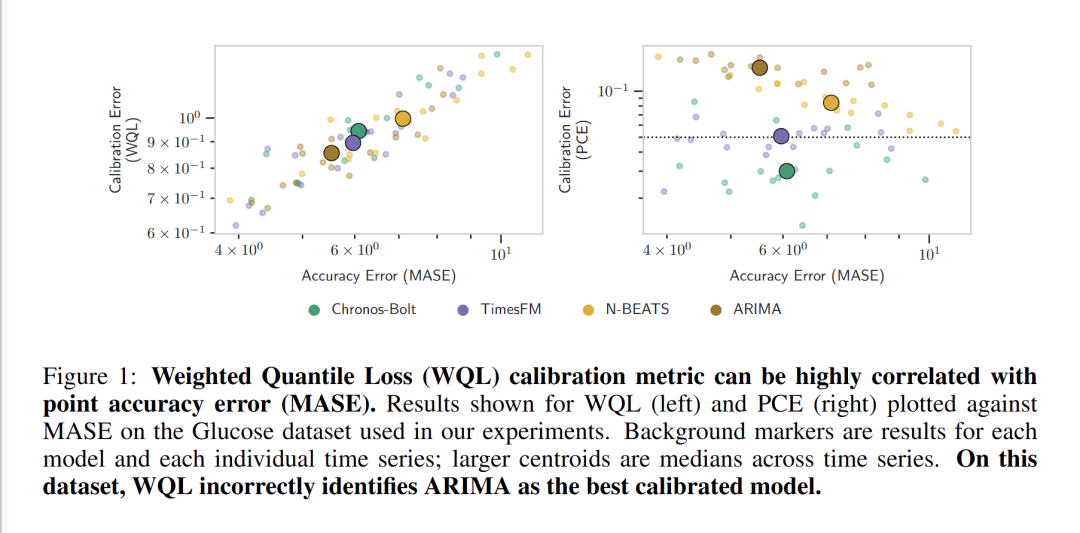

17 Beyond Accuracy: Are Time Series Foundation Models Well-Calibrated?

Link: https://openreview.net/forum?id=nGBN7UjHcy

Keywords: Time Series, Foundation Models, Calibration, Confidence

Authors: Coen Adler, Yuxin Chang, Samar Abdi, Felix Draxler, Padhraic Smyth

Scores: 4, 8, 4, 6

Confidence: 4, 3, 4, 3

Average: 5.5

TL; DR:We evaluate model calibration of time series foundation models and find that they are generally well-calibrated.
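One common way to check the calibration of probabilistic forecasts is to compare nominal and empirical coverage: for a well-calibrated model, roughly a fraction τ of observations should fall at or below the predicted τ-quantile, and an 80% interval should contain about 80% of them. The sketch below computes both on toy numbers. The paper's exact calibration metrics are not given in this post, so treat it as a generic illustration with hypothetical helper names.

```python
def quantile_hit_rates(y_true, quantile_preds):
    """For each level tau, the share of targets at or below the predicted tau-quantile.

    For a well-calibrated forecaster each rate should be close to tau itself.
    """
    n = len(y_true)
    return {tau: sum(y <= q for y, q in zip(y_true, preds)) / n
            for tau, preds in quantile_preds.items()}

def interval_coverage(y_true, lower, upper):
    """Share of targets inside [lower, upper]; compare with the nominal level."""
    inside = sum(lo <= y <= hi for y, lo, hi in zip(y_true, lower, upper))
    return inside / len(y_true)

# Toy example: 10 observations and constant quantile forecasts.
y = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
q = {0.1: [1.5] * 10, 0.9: [9.5] * 10}
print(quantile_hit_rates(y, q))              # {0.1: 0.1, 0.9: 0.9}
print(interval_coverage(y, q[0.1], q[0.9]))  # 0.8 for the nominal 80% interval
```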

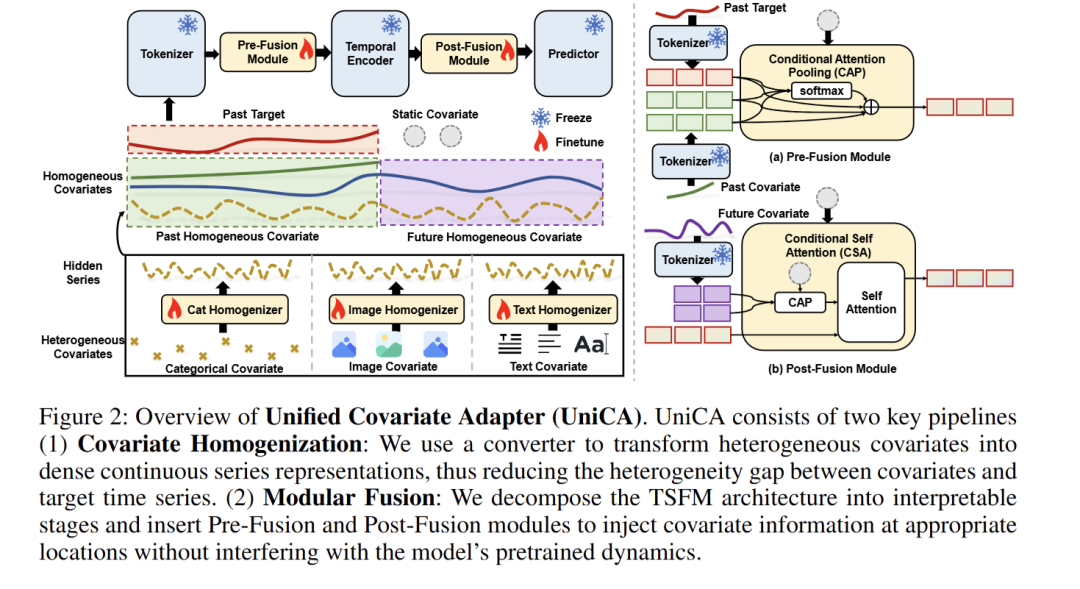

18 UniCA: Unified Covariate Adaptation for Time Series Foundation Model

Link: https://openreview.net/forum?id=I8q4MZb4OP

Keywords: time series foundation model, adaptation, covariate-aware forecasting, heterogeneous covariates

Authors: Lu Han, Yu Liu, Lan Li, Qiwen Deng, Jian Jiang, Yinbo Sun, Zhe Yu, Binfeng Wang, Xingyu Lu, Lintao Ma, Han-Jia Ye, De-Chuan Zhan

Scores: 4, 6, 6, 6

Confidence: 5, 3, 5, 3

Average: 5.5

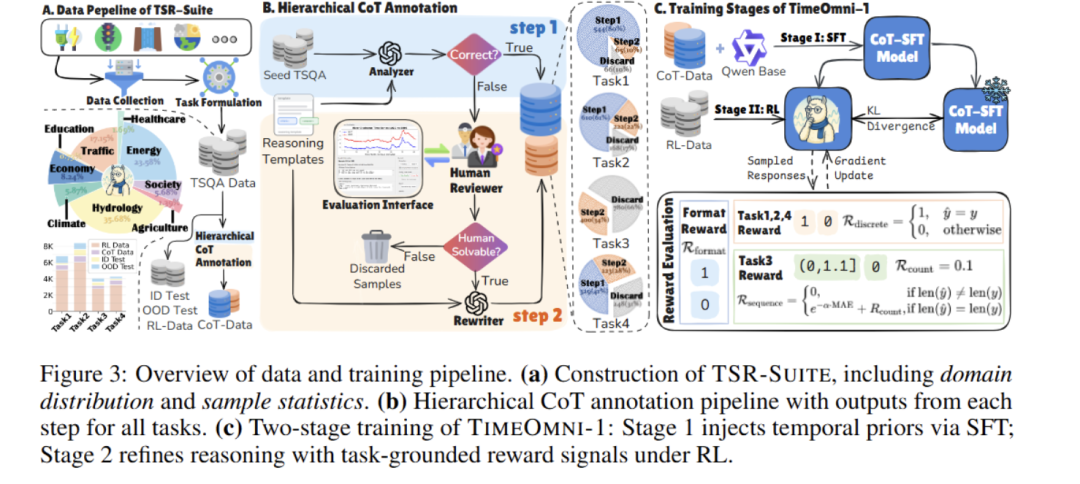

19 TimeOmni-1: Incentivizing Complex Reasoning with Time Series in Large Language Models

Link: https://openreview.net/forum?id=kOIclg7muL

Keywords: Time series reasoning, multimodal time series, time series models, time series

Authors: Tong Guan, Zijie Meng, Dianqi Li, Shiyu Wang, Chao-Han Huck Yang, Qingsong Wen, Zuozhu Liu, Sabato Siniscalchi, Ming Jin, Shirui Pan

Scores: 4, 4, 6, 6

Confidence: 3, 3, 4, 4

Average: 5.0

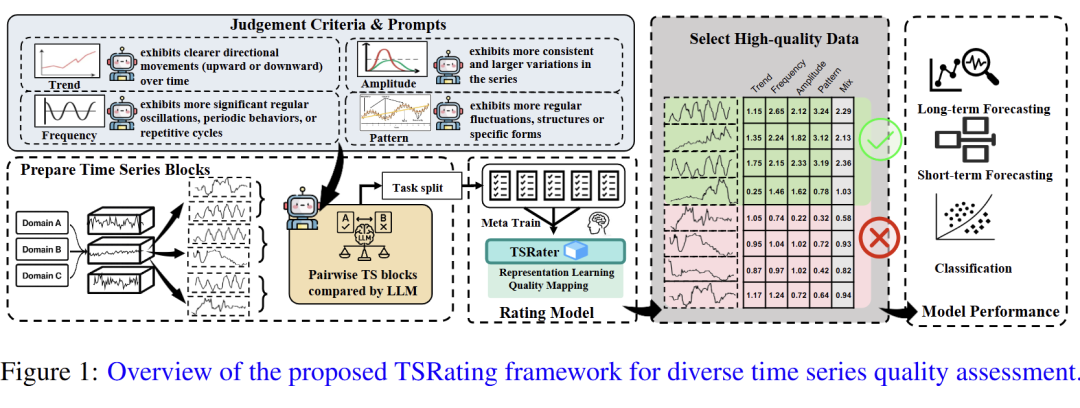

20 Rating Quality of Diverse Time Series Data by Meta-learning from LLM Judgment

Link: https://openreview.net/forum?id=TwrgmA1tw0

Keywords: Data quality assessment, Data selection, Time series data, Large language models

Authors: Shunyu Wu, Dan Li, Wenjie Feng, Haozheng Ye, Jian Lou, See-Kiong Ng

Scores: 4, 4, 6

Confidence: 5, 3, 4

Average: 4.67

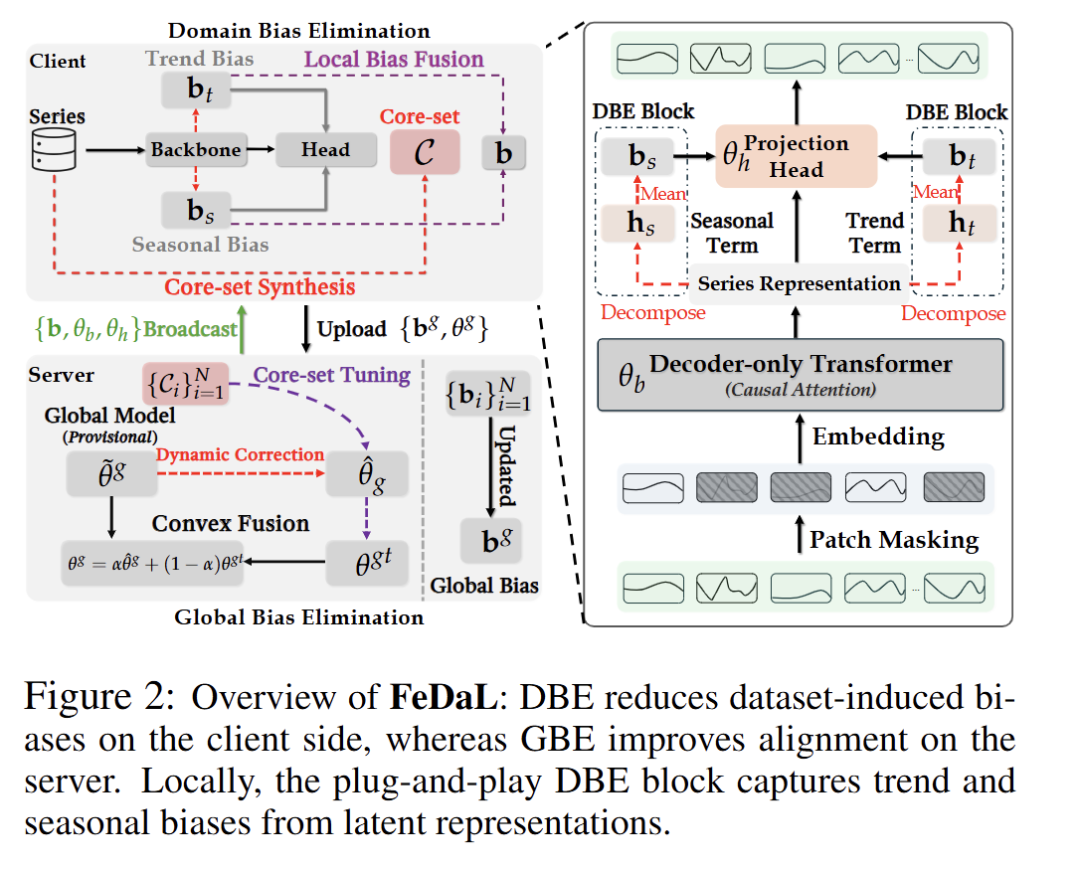

21 FeDaL: Federated Dataset Learning for General Time Series Foundation Models

Link: https://openreview.net/forum?id=HK6t5x5gJq

Keywords: Time Series Analysis, Time Series Foundation Models, Federated Learning

Authors: Shengchao Chen, Guodong Long, Michael Blumenstein, Jing Jiang

Scores: 4, 4, 2, 6

Confidence: 4, 3, 4, 4

Average: 4.0

TL; DR:We propose FeDaL, a federated framework for TSFM pretraining that mitigates dataset-level biases via DBE and GBE, enabling domain-invariant representations transferable to regression and classification tasks.

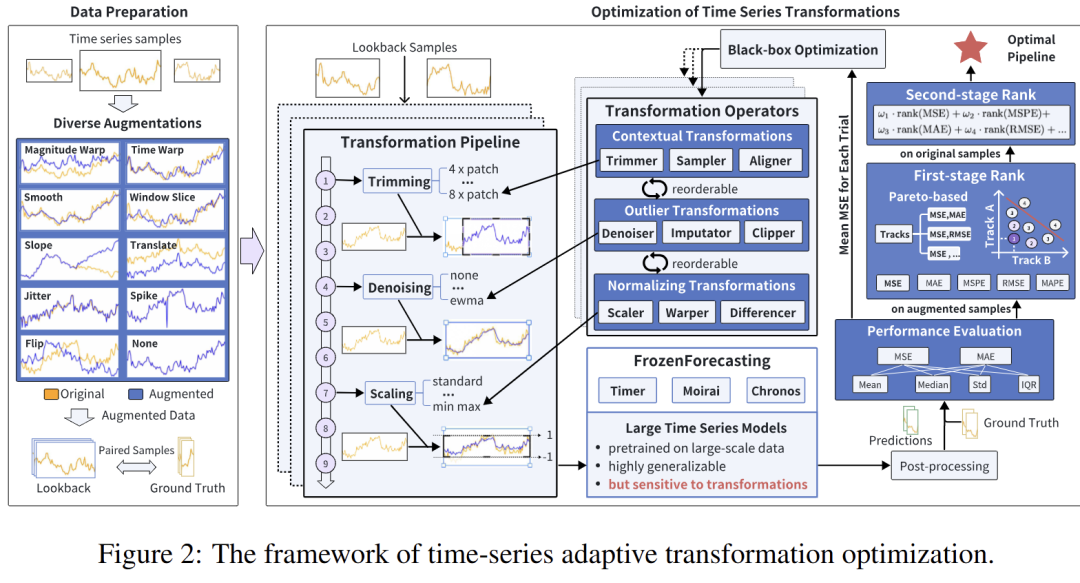

22 Adapt Data to Model: Adaptive Transformation Optimization for Domain-shared Time Series Foundation Models

Link: https://openreview.net/forum?id=uTK1SNgi1N

Keywords: time series foundation models, transformation, adaptation

Authors: Yunzhong Qiu, Zhiyao Cen, Zhongyi Pei, Chen Wang, Jianmin Wang

Scores: 2, 4, 6, 6

Confidence: 4, 4, 4, 4

Average: 4.5

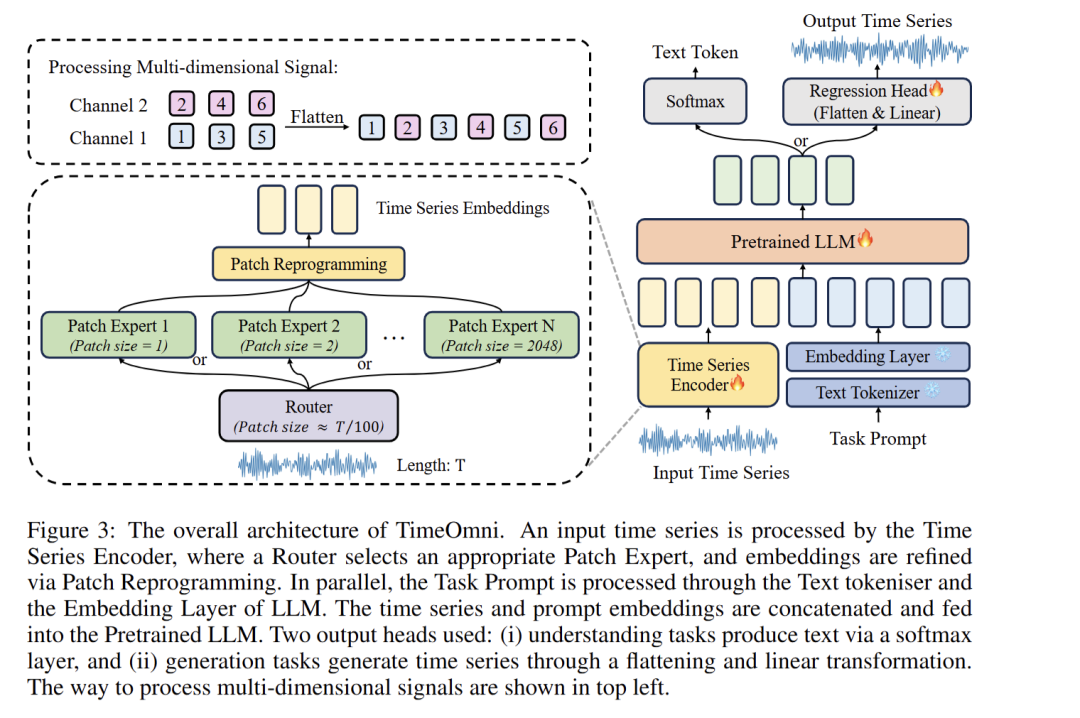

23 SciTS: Scientific Time Series Understanding and Generation with LLMs

Link: https://openreview.net/forum?id=5YXccEP6uc

Keywords: time series, large language model, benchmark

Authors: Wen Wu, Ziyang Zhang, Liwei Liu, Xuenan Xu, Junlin Liu, Ke Fan, Qitan Lv, Jimin Zhuang, Chen Zhang, Zheqi Yuan, Siyuan Hou, Tianyi Lin, Kai Chen, Bowen Zhou, Chao Zhang

Scores: 6, 2, 4, 6

Confidence: 3, 4, 4, 3

Average: 4.5

TL; DR:We introduce SciTS, a comprehensive scientific time-series benchmark, and TimeOmni, an LLM-based framework for time series understanding and generation.

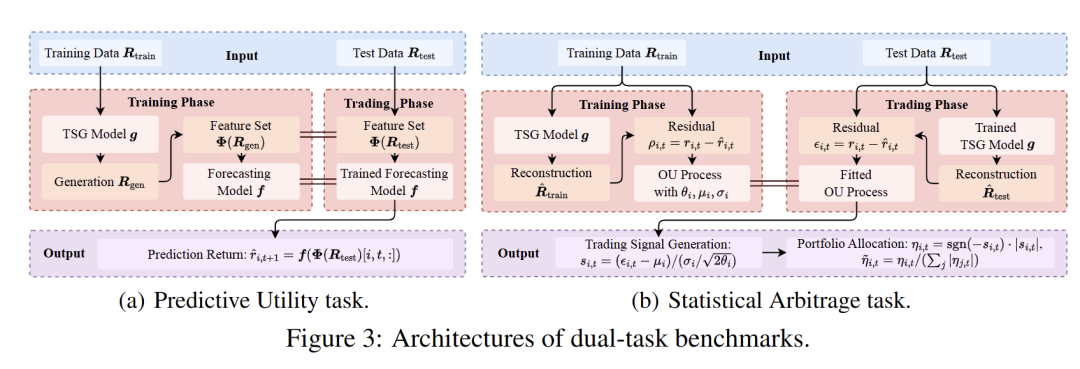

24 CTBench: Cryptocurrency Time Series Generation Benchmark

Link: https://openreview.net/forum?id=RzT2sombPD

Keywords: Time Series Generation, Crypto-centric Benchmark, Cryptocurrency Markets, Financial Evaluation Measure Suite

Authors: Yihao Ang, Qiang Wang, Qiang Huang, Yifan Bao, Xinyu Xi, Anthony Tung, Chen Jin, Zhiyong Huang

Scores: 6, 8, 4

Confidence: 4, 4, 3

Average: 6.0

TL; DR:In this work, we introduce CTBench, the first open time series generation benchmark tailored to cryptocurrency markets.

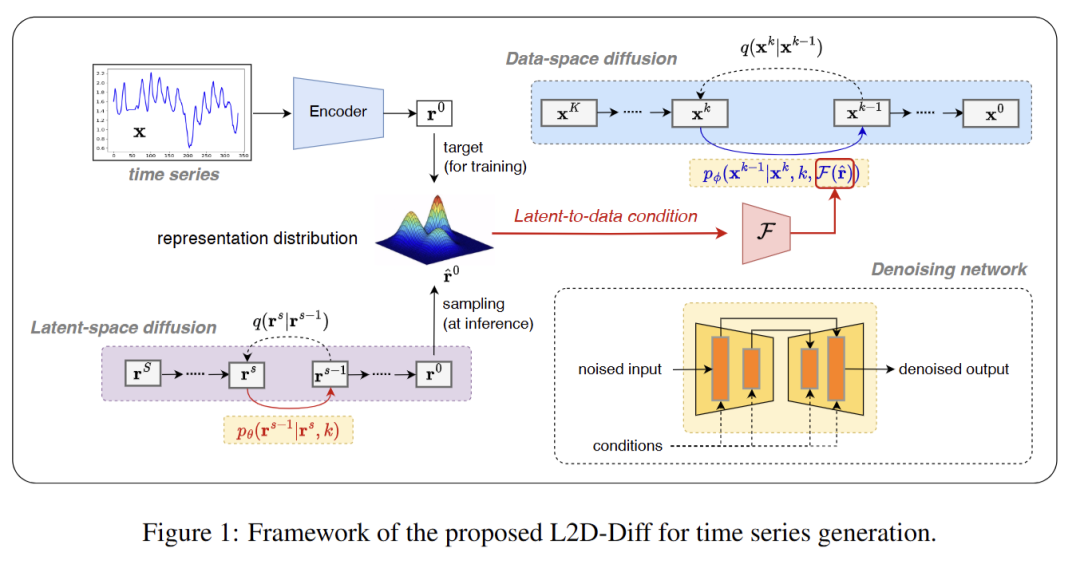

25 Latent-to-Data Cascaded Diffusion Models for Unconditional Time Series Generation

Link: https://openreview.net/forum?id=nAyeE7cAS0

Keywords: time series, unconditional, synthetic

Authors: Lifeng Shen, Kai Syun Hou, Weiyu Chen, James Kwok

Scores: 6, 6, 4, 4

Confidence: 3, 4, 3, 4

Average: 5.0

26 Functional MRI Time Series Generation via Wavelet-Based Image Transform and Spectral Flow Matching for Brain Disorder Identification

Link: https://openreview.net/forum?id=Dgphd9qizu

Keywords: Generative Models, Time Series, Flow Matching

Authors: Hwa Hui Tew, Junn Yong Loo, Leong Yu, Julia Lau, Ding Fan, Hernando Ombao, Raphaël Phan, Chee Tan, Chee-Ming Ting

Scores: 2, 4, 4, 8

Confidence: 3, 4, 4, 5

Average: 4.5

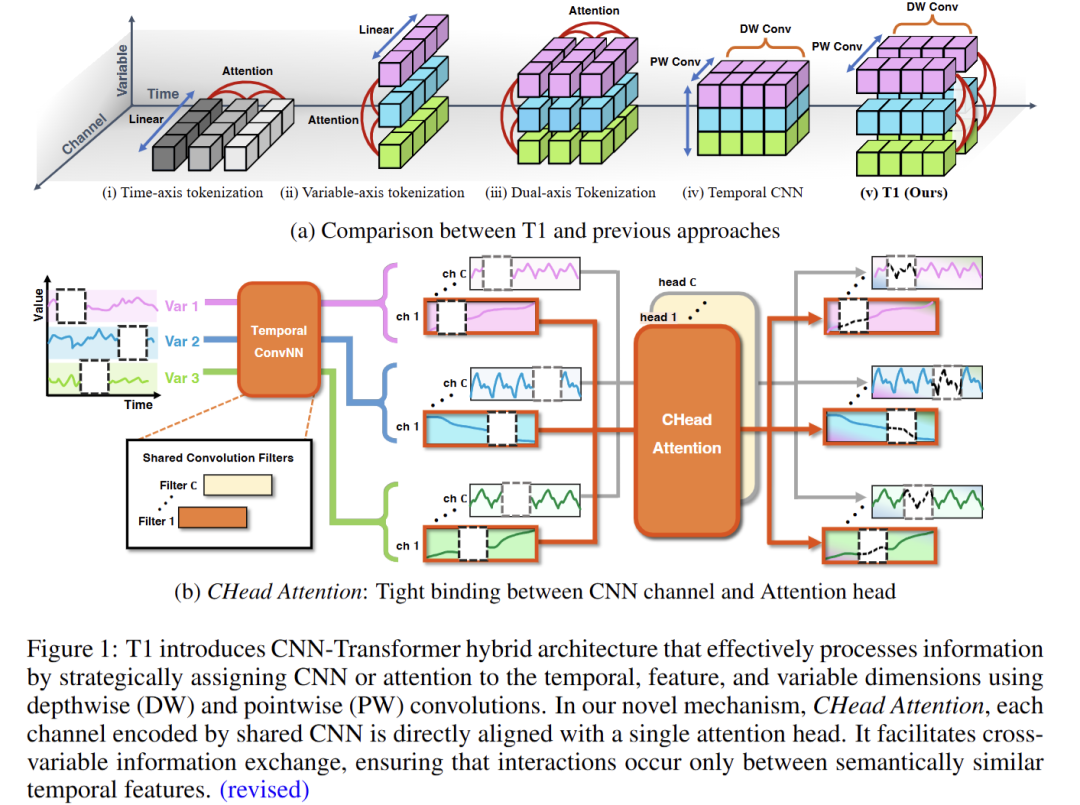

27 T1: One-to-One Channel-Head Binding for Multivariate Time-Series Imputation

Link: https://openreview.net/forum?id=IAnIlFsPEW

Keywords: Time Series, Imputation

Authors: Dongik Park, Hyunwoo Ryu, Suahn Bae, Keondo Park, Hyung-Sin Kim

Scores: 6, 6, 6, 4

Confidence: 3, 3, 4, 3

Average: 5.5

TL; DR:T1 is a CNN-Transformer hybrid that binds channels to attention heads for robust time series imputation, achieving 46% better performance than existing methods, especially under extreme missingness.

28 Time-Gated Multi-Scale Flow Matching for Time-Series Imputation

Link: https://openreview.net/forum?id=txvc61ONbs

Keywords: Time-series imputation, Flow matching, ODE-based generative models, Transformers, Multi-scale modeling

Authors: Hangtian Wang, Mahito Sugiyama

Scores: 4, 6, 6, 6, 6

Confidence: 4, 3, 3, 4, 4

Average: 5.6

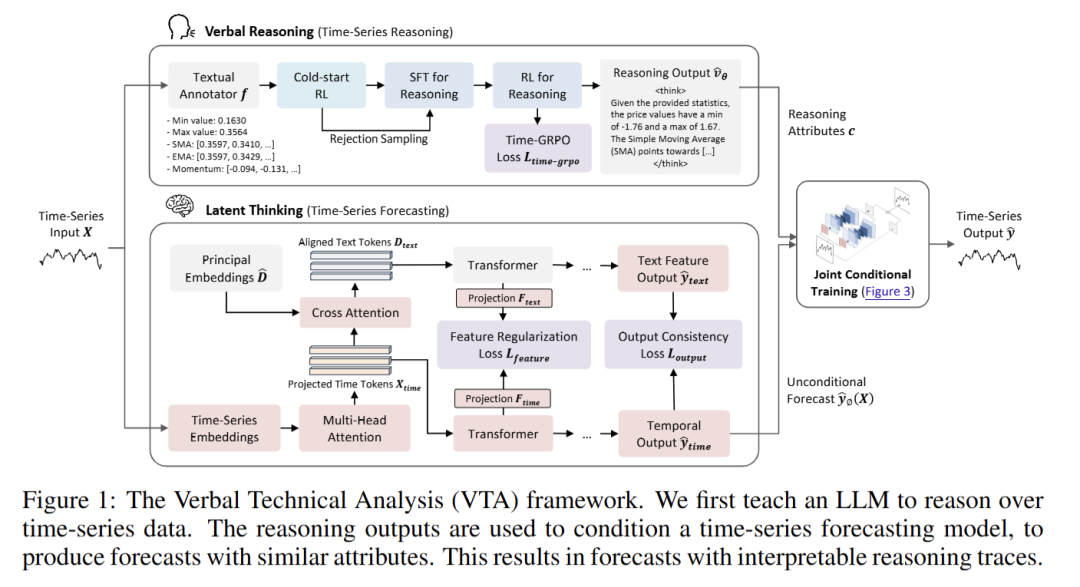

29 Reasoning on Time-Series for Financial Technical Analysis

Link: https://openreview.net/forum?id=PcjIe5xNaY

Keywords: Time-Series, Large Language Models, Stock Prediction

Authors: Kelvin Koa, Jan Chen, Yunshan Ma, Zheng Huanhuan, Tat-Seng Chua

Scores: 6, 4, 4, 4

Confidence: 4, 3, 3, 4

Average: 4.5

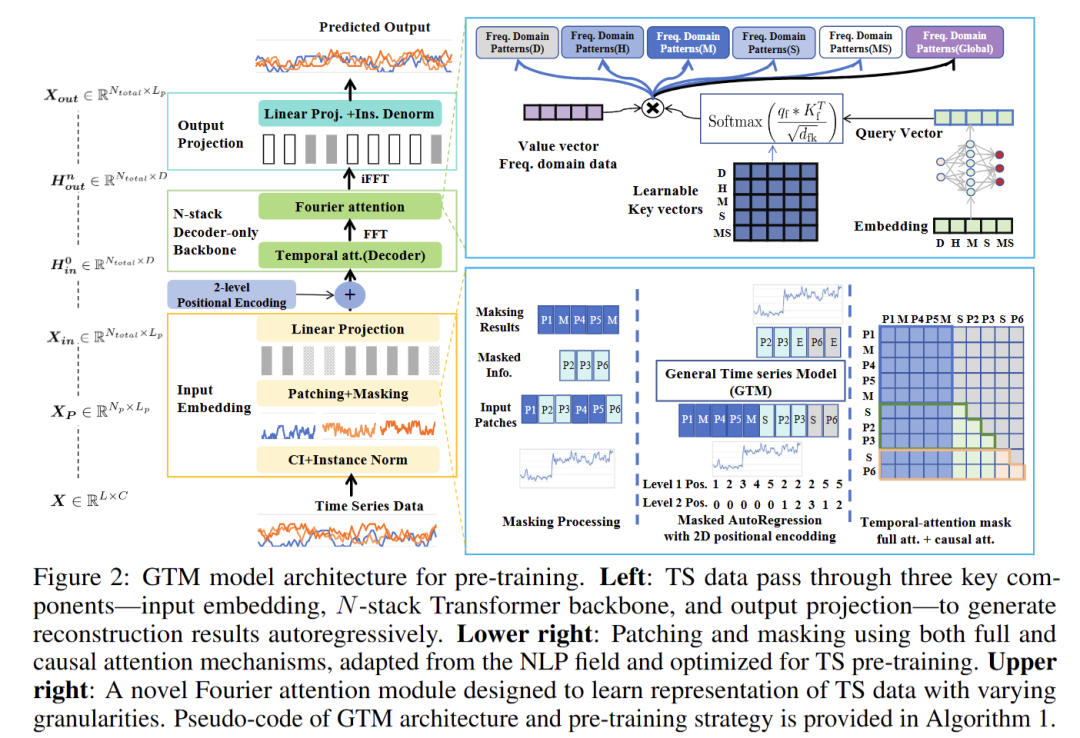

30 GTM: A General Time-series Model for Enhanced Representation Learning of Time-Series data

Link: https://openreview.net/forum?id=PWM6FERWz9

Keywords: Time series, Foundation Model, Representation learning, Pre-training strategy

Authors: Cheng HE, Xu Huang, Gangwei Jiang, Zhaoyi Li, Defu Lian, Hong Xie, Enhong Chen, Xijie Liang, Zhengzengrong, Patrick P. C. Lee

Scores: 4, 4, 8, 6

Confidence: 4, 3, 3, 4

Average: 5.5

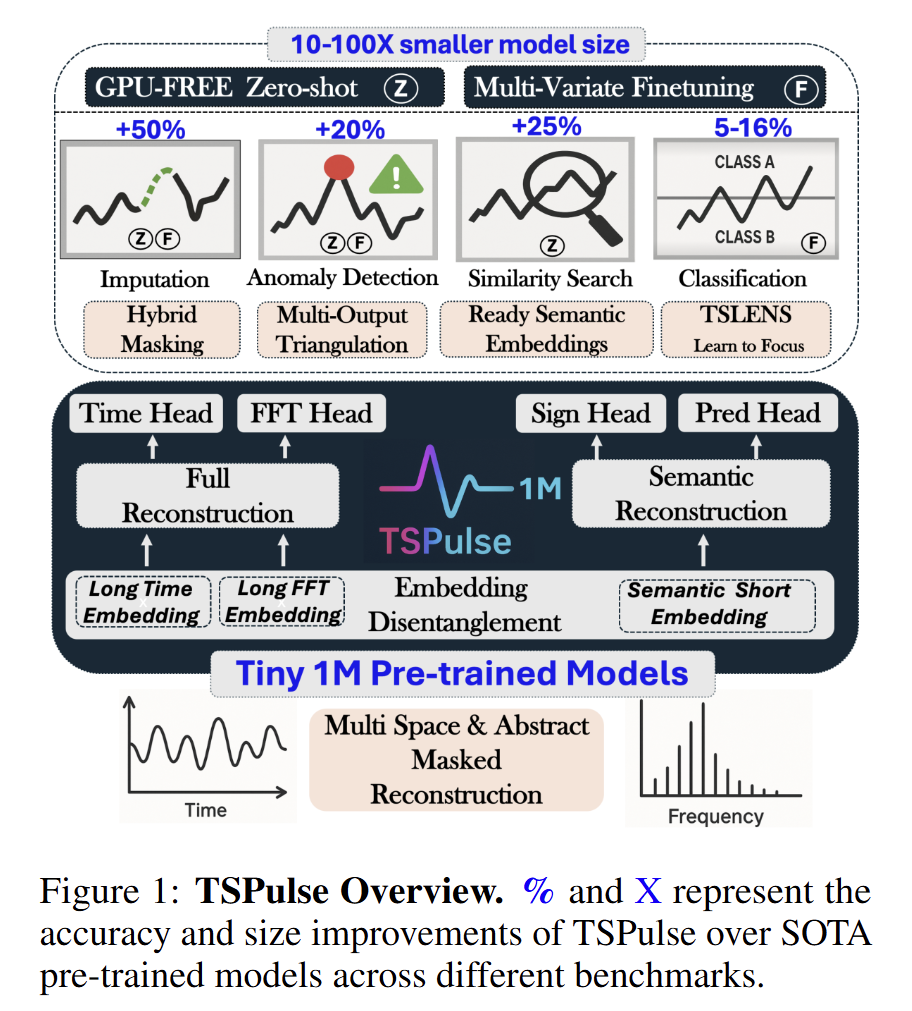

31 TSPulse: Tiny Pre-Trained Models with Disentangled Representations for Rapid Time-Series Analysis

Link: https://openreview.net/forum?id=Kw2mvnzCoc

Keywords: time series foundation models, pretrained models, time series, foundation models, TSFM

Authors: Vijay Ekambaram, Subodh Kumar, Arindam Jati, Sumanta Mukherjee, Tomoya Sakai, Pankaj Dayama, Wesley Gifford, Jayant Kalagnanam

Scores: 2, 4, 6, 6

Confidence: 5, 3, 4, 5

Average: 4.5

TL; DR:Ultra-lightweight time-series pre-trained models (1M parameters) with disentangled embeddings across spaces and abstraction levels, delivering state-of-the-art performance in anomaly detection, classification, imputation, and similarity search.

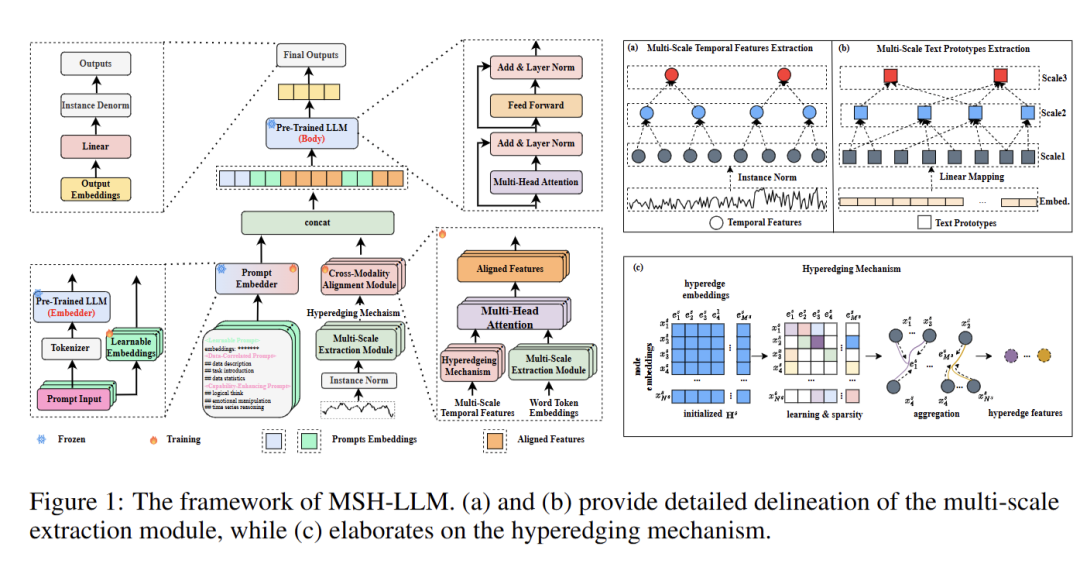

32 Multi-Scale Hypergraph Meets LLMs: Aligning Large Language Models for Time Series Analysis

Link: https://openreview.net/forum?id=SbBX2dCw3y

Keywords: Time series forecasting, large language models, multi-scale modeling, hypergraph neural network, hypergraph learning, transformer

Authors: Zongjiang Shang, Dongliang Cui, Binqing Wu, Ling Chen

Scores: 4, 6, 2, 6

Confidence: 4, 3, 5, 4

Average: 4.5

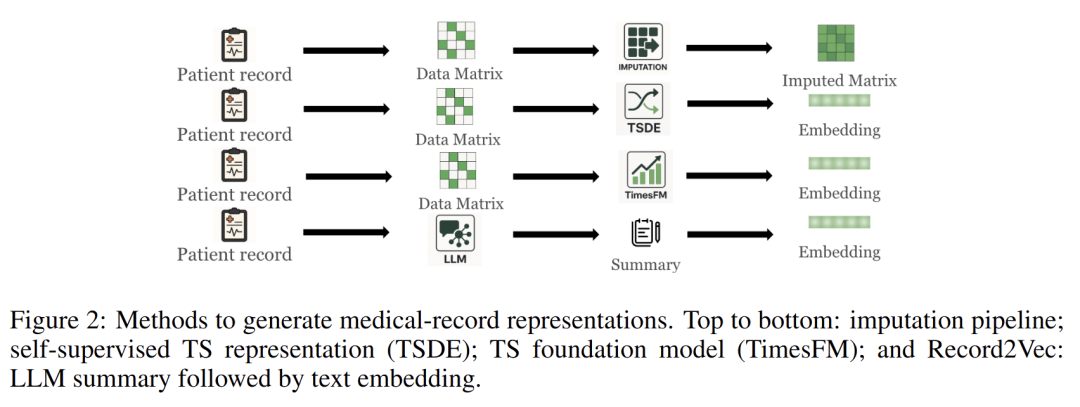

33 Can we generate portable representations for clinical time series data using LLMs?

Link: https://openreview.net/forum?id=pXw0uRTSKT

Keywords: Machine Learning for Healthcare, ICU Time-series, LLMs, Representation Learning

Authors: Zongliang Ji, Yifei Sun, Andre Amaral, Anna Goldenberg, Rahul G. Krishnan

Scores: 4, 8, 4, 8

Confidence: 5, 3, 4, 3

Average: 6.0

TL; DR:Explore the ability of LLMs to generate portable and transferrable representations for ICU time-series

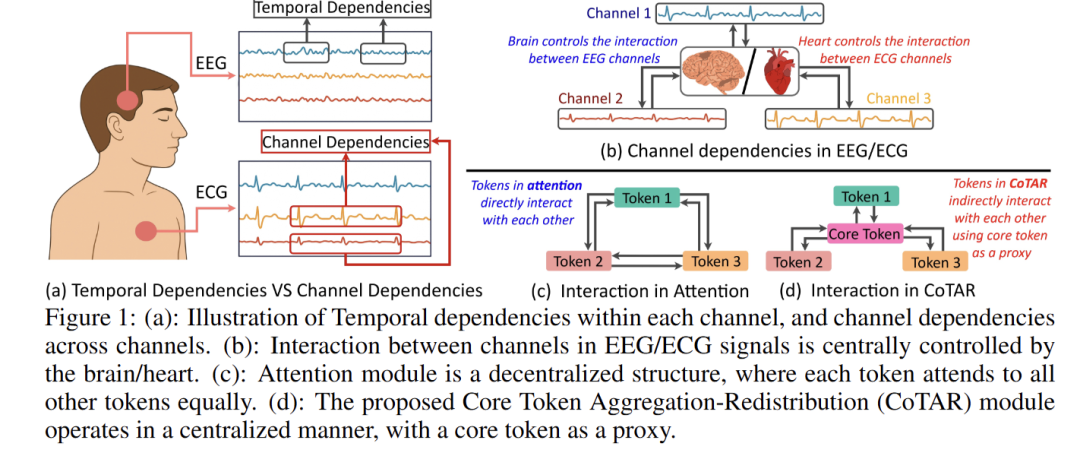

34 [Oral] Decentralized Attention Fails Centralized Signals: Rethinking Transformers for Medical Time Series

Link: https://openreview.net/forum?id=oZJFY2BQt2

Keywords: EEG, ECG, Deep learning, Transformer

Decision: Oral

Authors: Yu Guoqi, Juncheng Wang, Chen Yang, Jing Qin, Angelica Aviles-Rivero, Shujun Wang

Scores: 8, 6, 4

Confidence: 4, 5, 4

Average: 6.0

TL; DR:We propose a centralized module to replace decentralized attention in Transformer for centralized medical time series like EEG and ECG.

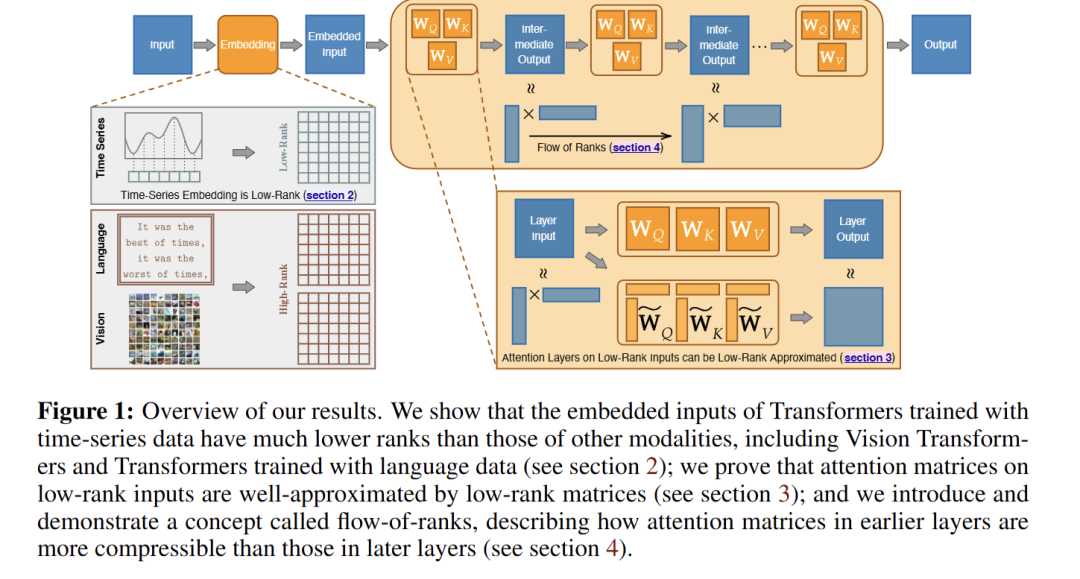

35 Understanding Transformers for Time Series: Rank Structure, Flow-of-ranks, and Compressibility

Link: https://openreview.net/forum?id=axR2KZwaD3

Keywords: time series, foundation models, rank structure, attention, embedding

Authors: Annan Yu, Danielle Maddix, Boran Han, Xiyuan Zhang, Abdul Fatir Ansari, Oleksandr Shchur, Christos Faloutsos, Andrew Gordon Wilson, Michael W Mahoney, Bernie Wang

Scores: 8, 4, 6, 8

Confidence: 2, 2, 3, 3

Average: 6.5

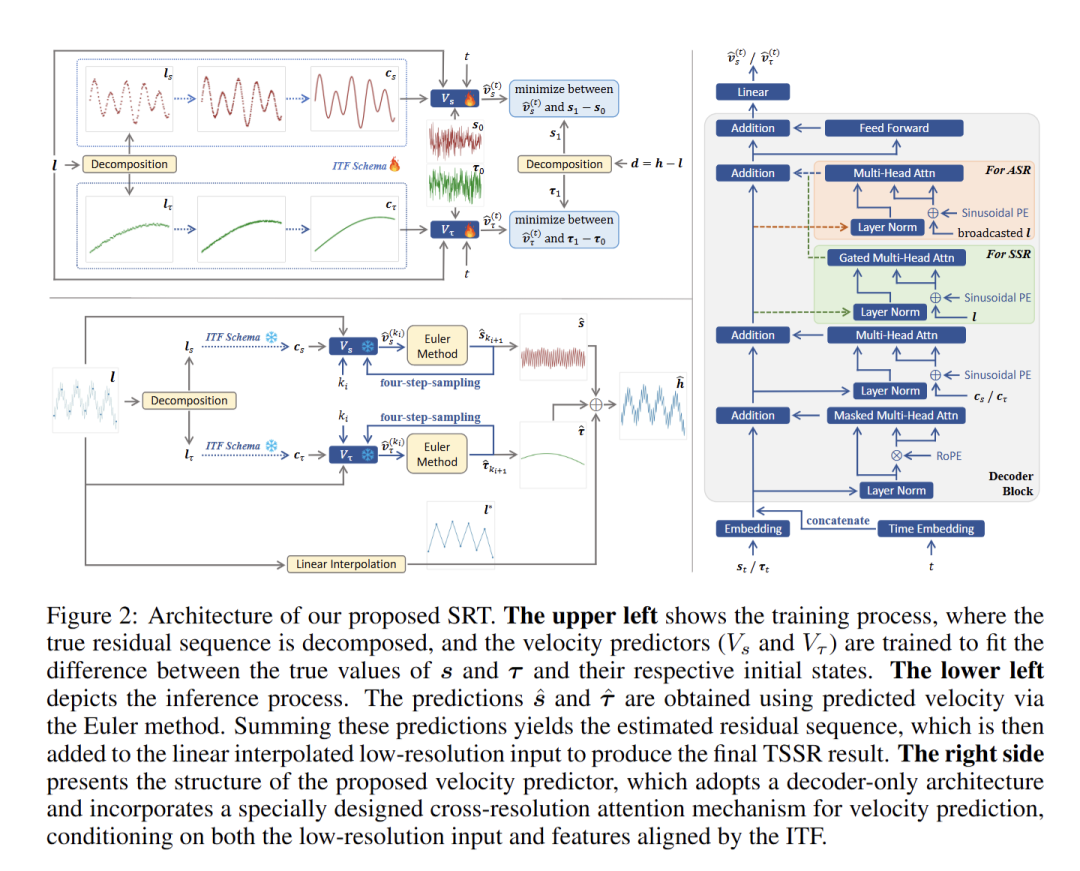

36 SRT: Super-Resolution for Time Series via Disentangled Rectified Flow

Link: https://openreview.net/forum?id=I94Eg6cu7P

Keywords: Time Series Super-Resolution, Rectified Flow, Temporal Disentanglement, Implicit Neural Representations

Authors: Jufang Duan, Shenglong Xiao, Yuren Zhang

Scores: 8, 4, 6, 4

Confidence: 2, 3, 4, 4

Average: 5.5

TL; DR:We propose SRT, a novel disentangled rectified flow framework for time series super-resolution that generates high-resolution details from low-resolution data, achieving state-of-the-art performance across nine benchmarks.

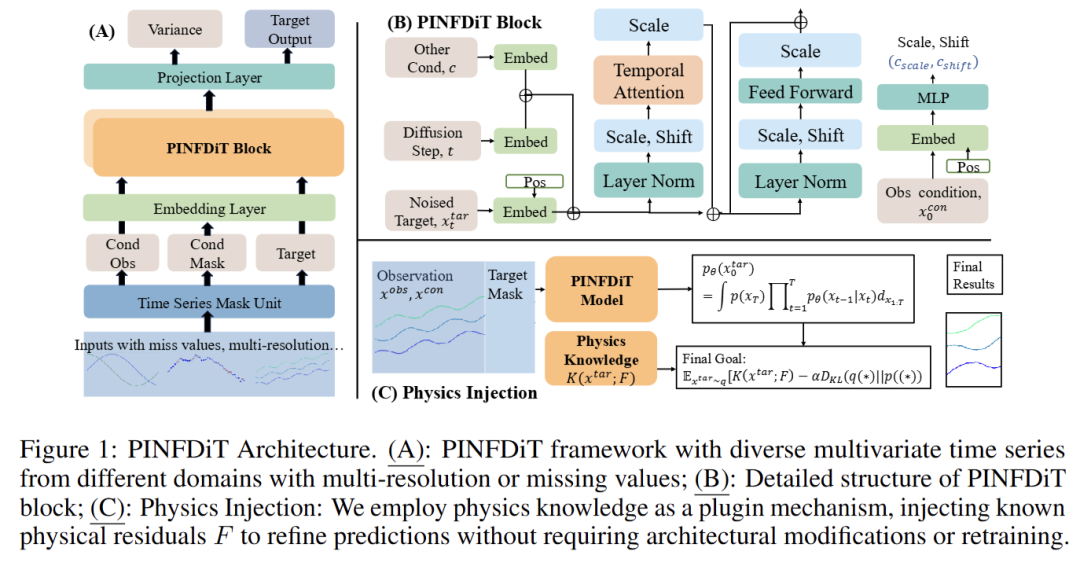

37 PINFDiT: Energy-Based Physics-Informed Diffusion Transformers for General-purpose Time Series Tasks

Link: https://openreview.net/forum?id=EphTlUJ4XN

Keywords: Diffusion, Transformer, Time Series, Physics Informed Machine Learning, Physics-Guided Inference in Time Series Diffusion Transformers

Authors: Defu Cao, Wen Ye, Yizhou Zhang, Sam Griesemer, Yan Liu

Scores: 8, 4, 2, 6

Confidence: 4, 3, 4, 4

Average: 5.0

TL; DR:Physics-Guided Inference in Time Series Diffusion Transformers

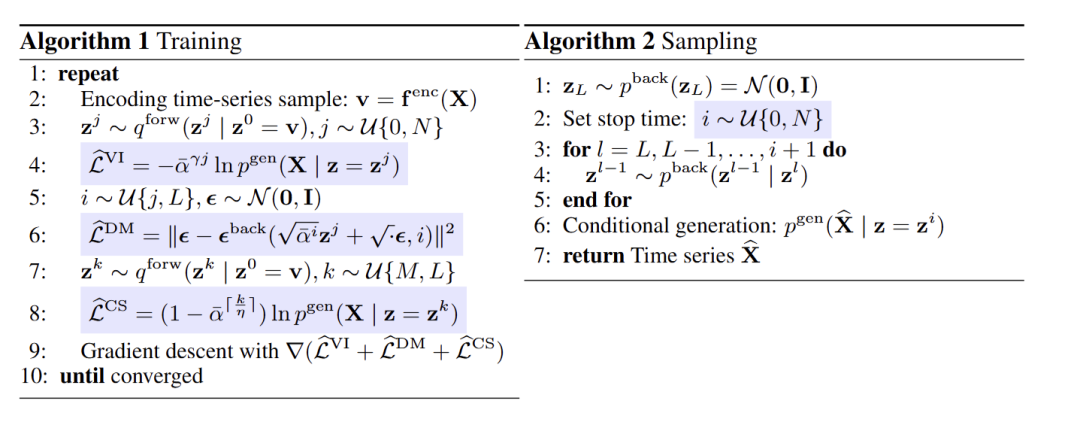

38 A Study of Posterior Stability in Time-Series Latent Diffusion

Link: https://openreview.net/forum?id=UbL2Fo0IvV

Keywords: Latent Diffusion, Time Series, Posterior Collapse

Authors: Yangming Li, Yixin Cheng, Mihaela van der Schaar

Scores: 6, 8, 2, 4

Confidence: 2, 4, 4, 3

Average: 5.0

TL; DR:Conducted a solid analysis of posterior collapse in time-series latent diffusion, and presented a new framework that is free from the problem.

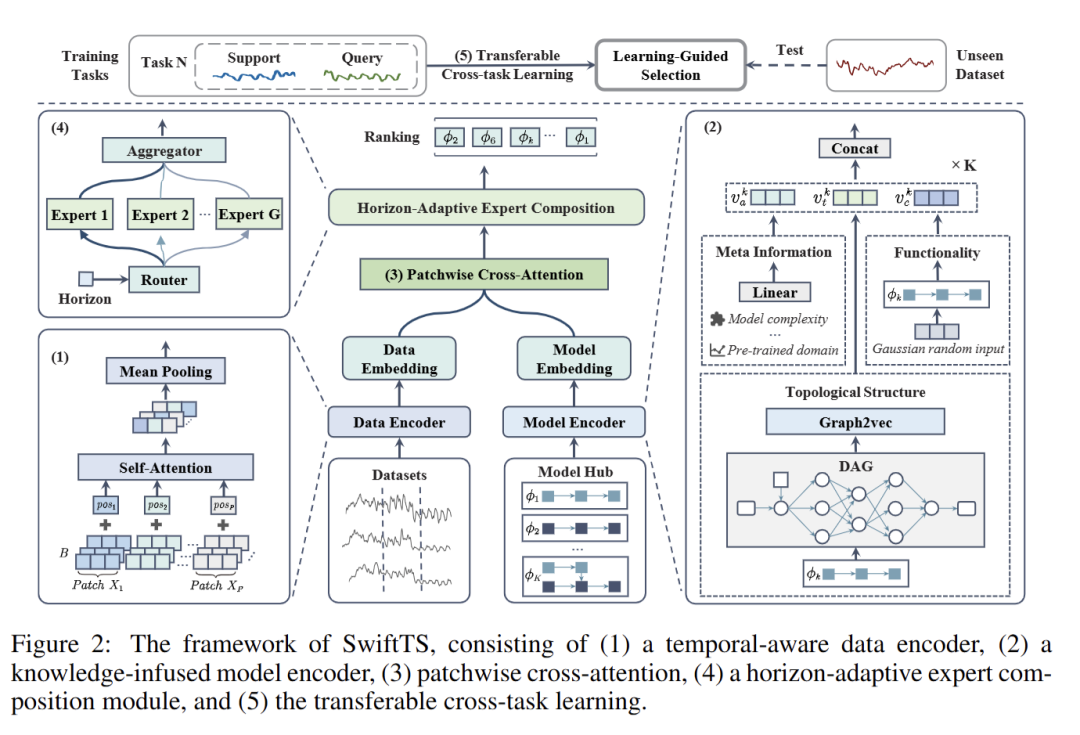

39 SwiftTS: A Swift Selection Framework for Time Series Pre-trained Models via Multi-task Meta-Learning

Link: https://openreview.net/forum?id=XrmXvv75KP

Keywords: time series forecasting, model selection, transfer learning

Authors: Tengxue Zhang, Biao Ouyang, Yang Shu, Xinyang Chen, Guo, Bin Yang

Scores: 6, 6, 2

Confidence: 3, 3, 3

Average: 4.67

TL; DR:We propose a swift selection framework for time series pre-trained models via multi-task meta-learning without fine-tuning.

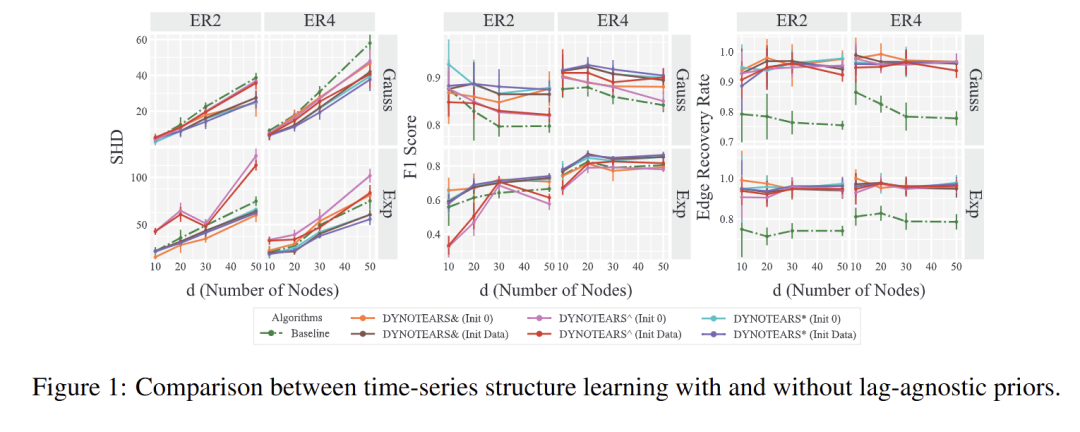

40 Structure Learning from Time-Series Data with Lag-Agnostic Structural Prior

Link: https://openreview.net/forum?id=kdJsB0J4Ic

Keywords: Continuous DAG structure learning, dynamic causal discovery, structure learning from time series data

Authors: Taiyu Ban, Changxin Rong, Xiangyu Wang, Lyuzhou Chen, Yanze Gao, Xin Wang, Huanhuan Chen

Scores: 6, 4, 6, 6

Confidence: 5, 2, 3, 4

Average: 5.5

TL; DR:This paper introduces how to use lag-agnostic prior, commonly available knowledge, to guide the discovery of lag-aware causal interactions from time-series data in the continuous optimization framework.

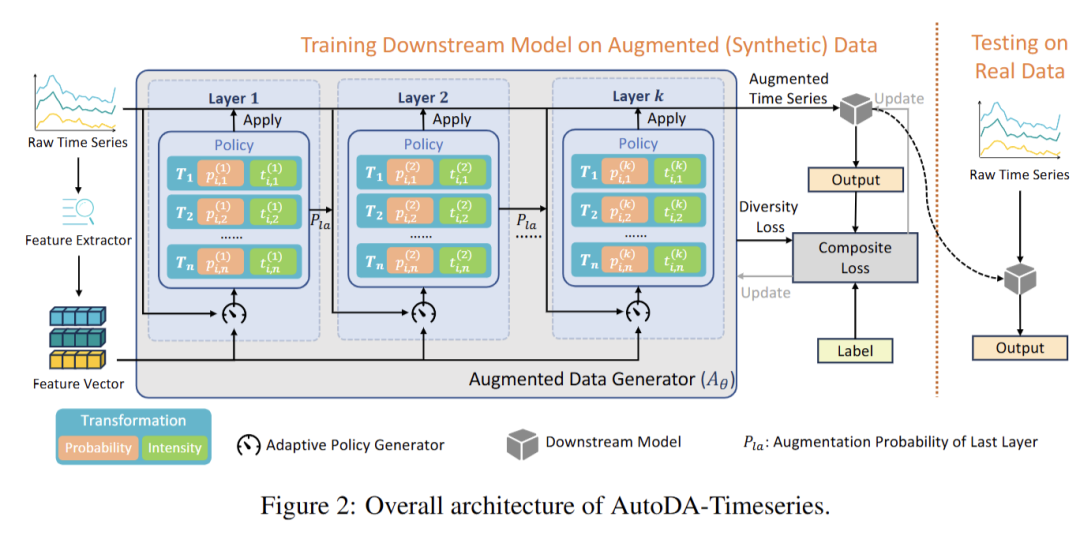

41 AutoDA-Timeseries: Automated Data Augmentation for Time Series

Link: https://openreview.net/forum?id=vTLmHAkoIW

Keywords: time series analysis, automated data augmentation

Authors: Zijun Dou, Zhenhe Yao, Zhe Xie, Xidao Wen, Tong Xiao, Dan Pei

Scores: 6, 4, 4, 6

Confidence: 4, 4, 3, 3

Average: 5.0

TL; DR:We propose AutoDA-Timeseries, the first automated data augmentation framework tailored for time series, which adaptively learns augmentation strategies and consistently improves performance across diverse tasks.
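Automated augmentation methods search over how often and how strongly to apply transformations such as jitter or scaling. The sketch below fixes such a policy (per-operation application probabilities) by hand to show the structure that an automated method would optimize. The operation set, magnitudes and the `apply_policy` helper are assumptions for illustration; AutoDA-Timeseries's actual search or learning procedure is not shown.

```python
import random

def jitter(x, rng, sigma=0.03):
    """Add small Gaussian noise to every point."""
    return [v + rng.gauss(0.0, sigma) for v in x]

def scaling(x, rng, sigma=0.1):
    """Multiply the whole series by one random factor close to 1."""
    factor = rng.gauss(1.0, sigma)
    return [v * factor for v in x]

def time_reverse(x, rng):
    """Reverse the series in time (rng unused; kept for a uniform signature)."""
    return x[::-1]

OPS = {"jitter": jitter, "scaling": scaling, "time_reverse": time_reverse}

def apply_policy(x, policy, rng):
    """Apply each op with the probability given in `policy` (op name -> probability).

    An automated method would tune these probabilities; here they are set by hand.
    """
    out = list(x)
    for name, prob in policy.items():
        if rng.random() < prob:
            out = OPS[name](out, rng)
    return out

rng = random.Random(42)
series = [0.0, 0.5, 1.0, 0.5, 0.0]
augmented = apply_policy(series, {"jitter": 0.8, "scaling": 0.5, "time_reverse": 0.2}, rng)
print([round(v, 3) for v in augmented])
```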

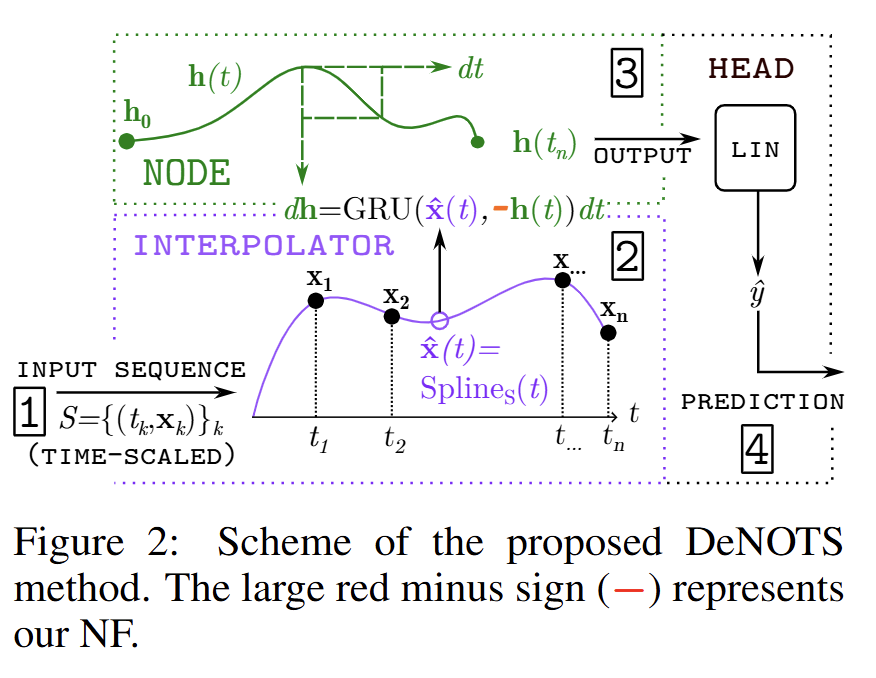

42 DeNOTS: Stable Deep Neural ODEs for Time Series

Link: https://openreview.net/forum?id=SFoDJZ1sSk

Keywords: Neural ODE, Time series, Gaussian Processes

Authors: Ilya Kuleshov, Evgenia Romanenkova, Vladislav Zhuzhel, Galina Boeva, Evgeni Vorsin, Alexey Zaytsev

Scores: 6, 2, 4, 6

Confidence: 3, 3, 4, 3

Average: 4.5

TL; DR:DeNOTS enhances Neural CDE expressiveness for irregular time series by scaling the integration horizon (instead of lowering tolerance) and making it input-to-state stable via Negative Feedback, and provides provable epistemic uncertainty bounds.

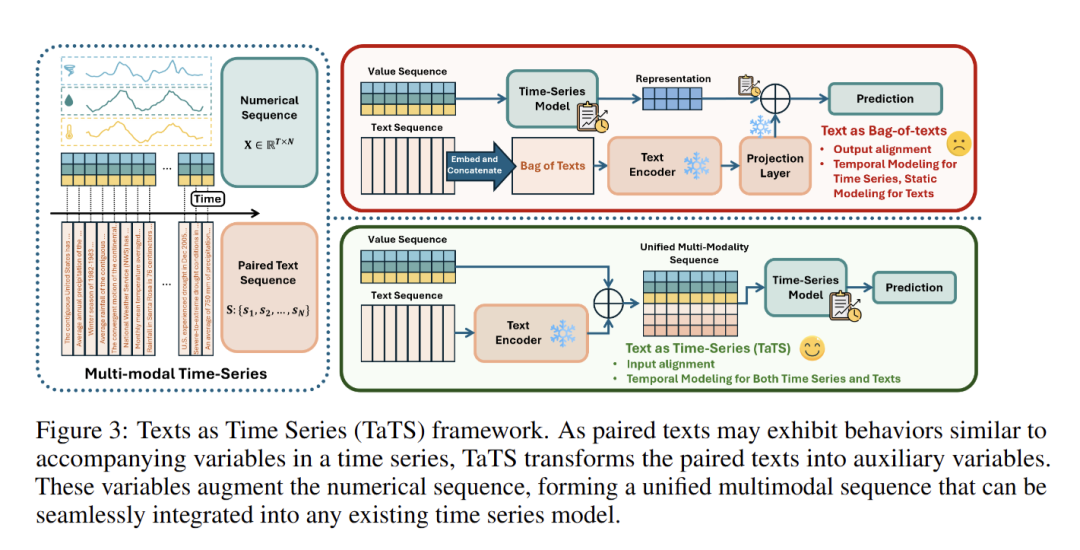

43 Language in the Flow of Time: Time-Series-Paired Texts Weaved into a Unified Temporal Narrative

Link: https://openreview.net/forum?id=a1zBg9cBvt

Keywords: Time Series Modeling, Multimodal Learning, Time Series Forecasting

Authors: Zihao Li, Xiao Lin, Zhining Liu, Jiaru Zou, Ziwei Wu, Lecheng Zheng, Dongqi Fu, Yada Zhu, Hendrik Hamann, Hanghang Tong, Jingrui He

Scores: 4, 6, 6, 6

Confidence: 3, 3, 5, 4

Average: 5.5

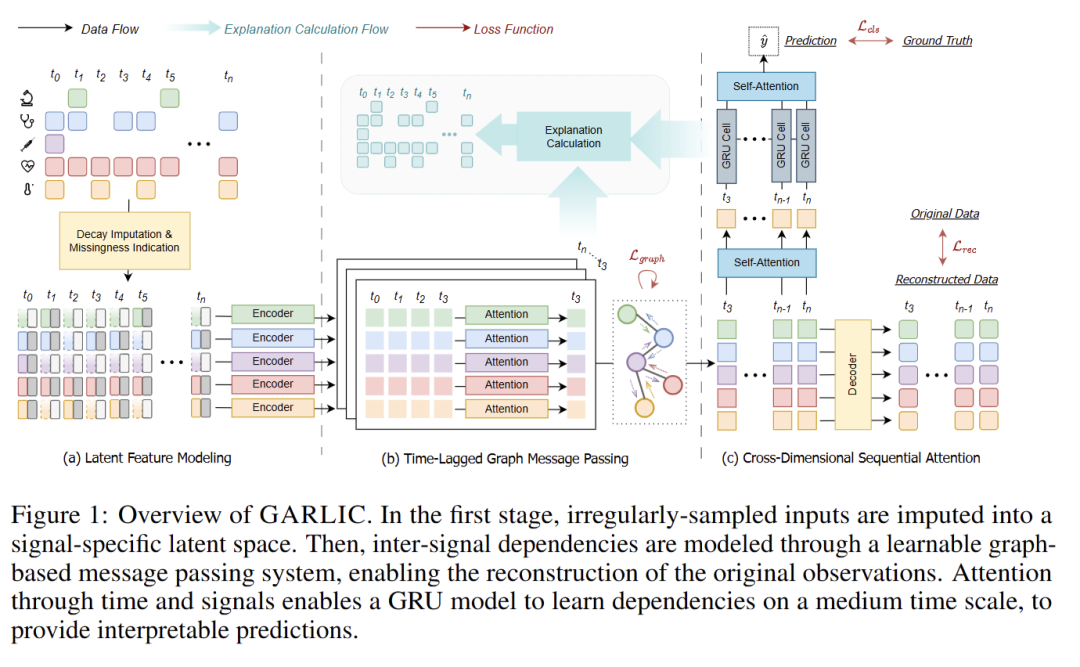

44 GARLIC: Graph Attention-based Relational Learning of Multivariate Time Series in Intensive Care

Link: https://openreview.net/forum?id=4ZAwmIaA9y

Keywords: irregular multivariate time series, graph neural network, deep learning for health, intensive care unit, explainability

Authors: Ruirui Wang, Yanke Li, Manuel Günther, Diego Paez-Granados

Scores: 4, 6, 6, 4

Confidence: 4, 3, 4, 3

Average: 5.0

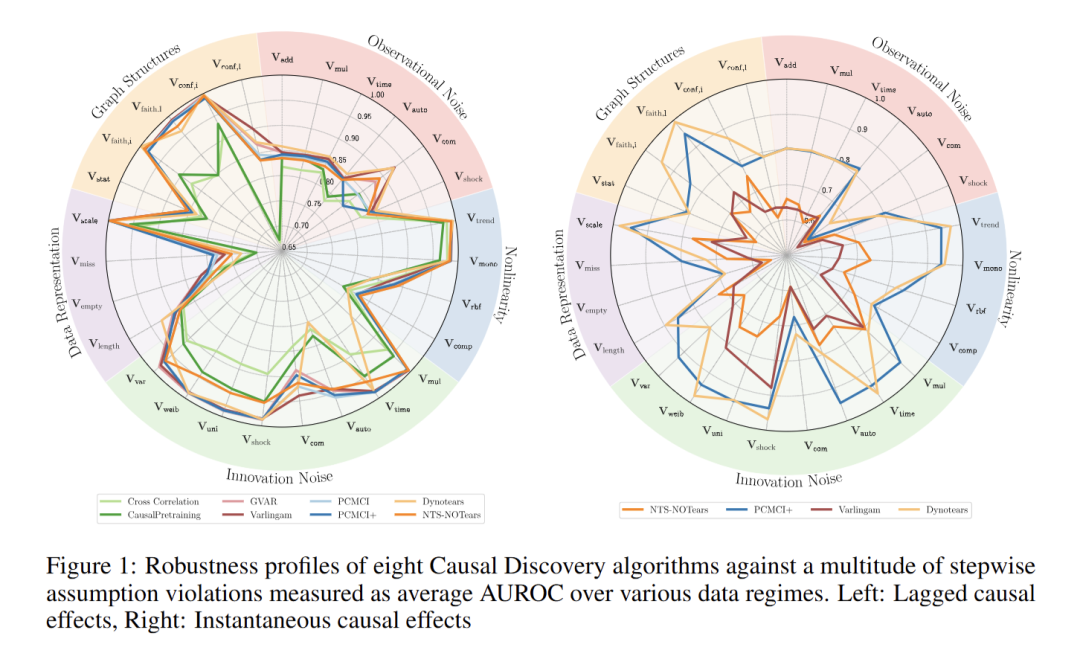

45 TCD-Arena: Assessing Robustness of Time Series Causal Discovery Methods Against Assumption Violations

Link: https://openreview.net/forum?id=MtdrOCLAGY

Keywords: Causal Discovery, Benchmark, Robustness, Time-Series, Causality

Authors: Gideon Stein, Niklas Penzel, Tristan Piater, Joachim Denzler

Scores: 4, 6, 2, 6

Confidence: 5, 4, 3, 4

Average: 4.5

TL; DR:large scale study on the robustness of causal discovery algorithms for time series data against violations of their assumptions.

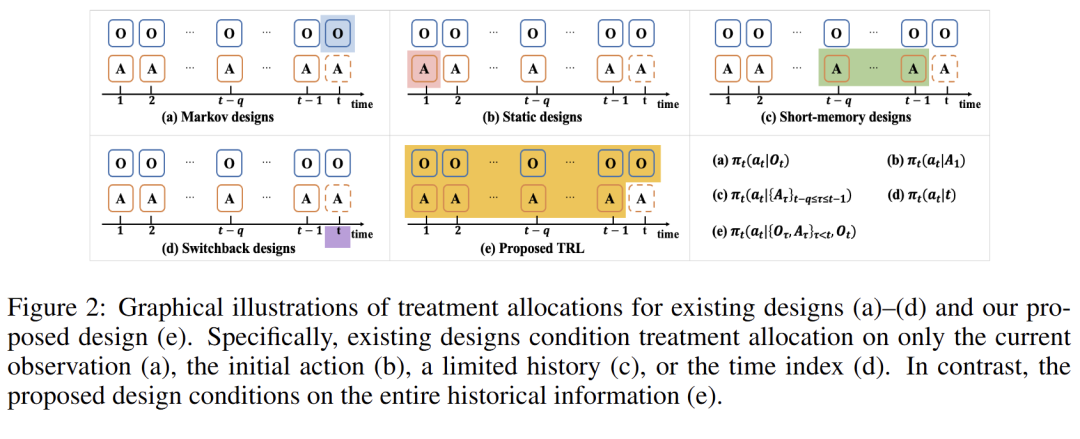

46 Designing Time Series Experiments in A/B Testing with Transformer Reinforcement Learning

Link: https://openreview.net/forum?id=T9PNKPmjGc

Keywords: Experiment Designs, A/B Testing, Reinforcement Learning

Authors: Xiangkun Wu, Qianglin Wen, Yingying Zhang, Hongtu Zhu, Ting Li, Chengchun Shi

Scores: 4, 4, 4, 2

Confidence: 3, 2, 3, 4

Average: 3.5

TL; DR:Transformer reinforcement learning is all you need for designing time series experiments.

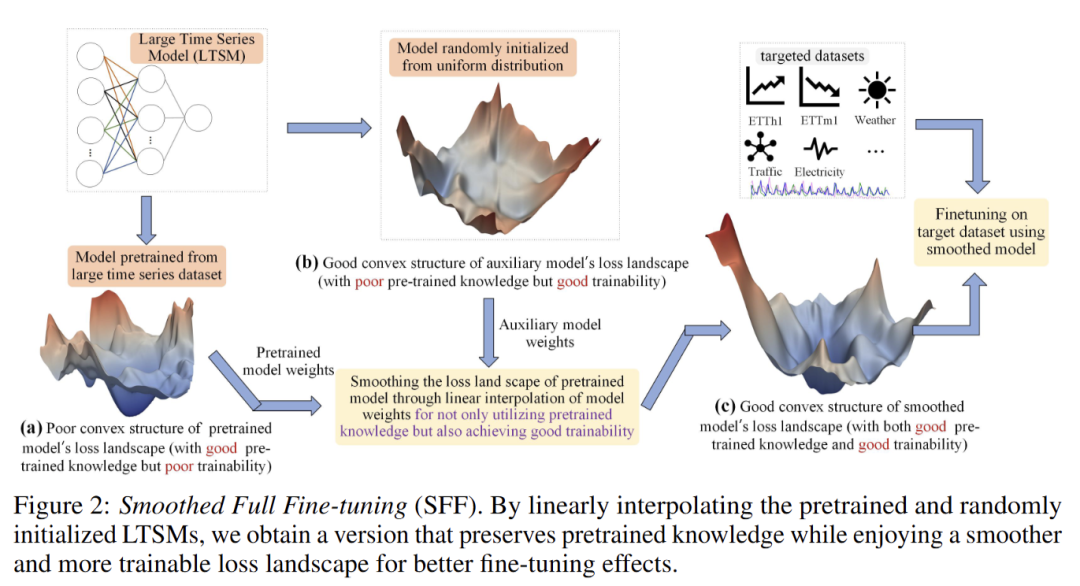

47 Lost in the Non-convex Loss Landscape: How to Fine-tune the Large Time Series Model?

Link: https://openreview.net/forum?id=8o4t5DHaE1

Keywords: Time series analysis, large models, fine-tuning

Authors: Xu Zhang, Peng Wang, Wei Wang

Scores: 2, 8, 4, 4

Confidence: 4, 3, 4, 4

Average: 4.5

TL; DR:We find that large models may suffer from performance limitations during fine-tuning due to overfitting in pre-training. We propose to smooth the loss landscape then fine-tuning to improve the fine-tuning performance.

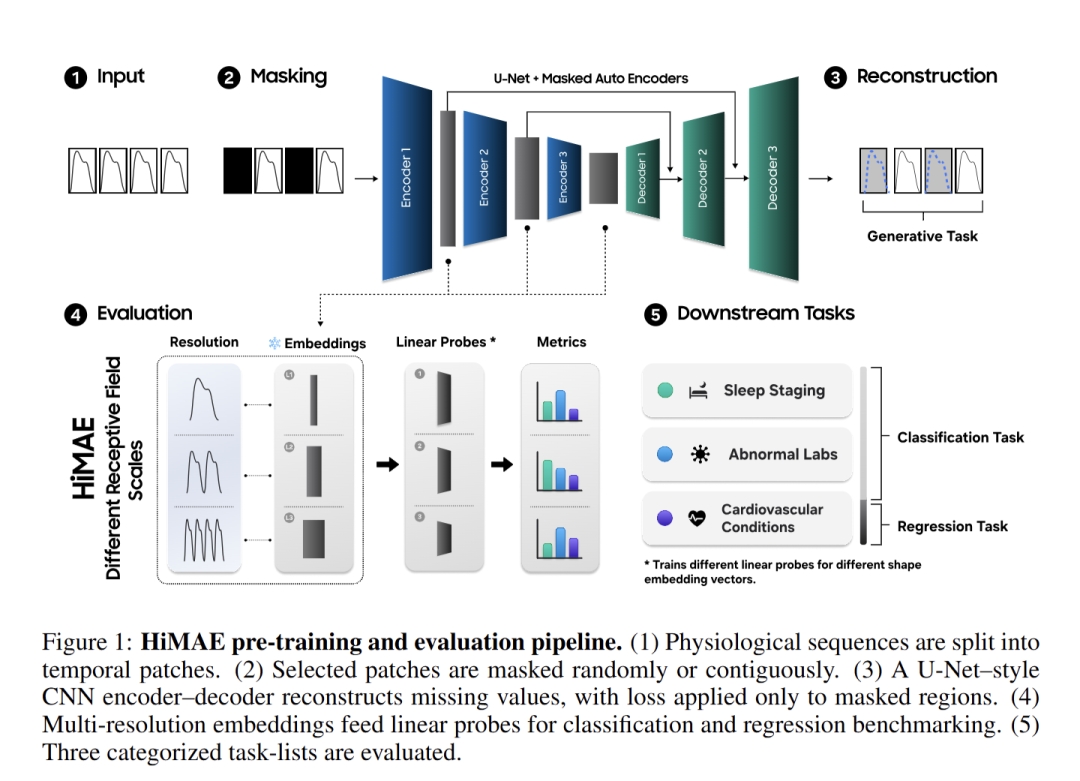

48 HiMAE: Hierarchical Masked Autoencoders Discover Resolution-Specific Structure in Wearable Time Series

Link: https://openreview.net/forum?id=iPAy5VpGQa

Keywords: SSL, Wearables, Interpretability, Inductive Bias

Authors: Simon Lee, Cyrus Tanade, Hao Zhou, Juhyeon Lee, Megha Thukral, Md. Sazzad Hissain Khan, Keum San Chun, Baiying Lu, Migyeong Gwak, Mehrab Bin Morshed, Viswam Nathan, Mahbubur Rahman, Li Zhu, Subramaniam Venkatraman, Sharanya Desai

Scores: 2, 4, 6

Confidence: 3, 5, 4

Average: 4.0

TL; DR:We propose a lightweight SSL objective that competes with much larger transformer Foundation models that also serve as an interpretability tool.
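HiMAE builds on masked autoencoding: hide part of the input, reconstruct it, and compute the loss only where the input was hidden. The sketch below shows that flat, single-resolution core with hypothetical helpers (`mask_patches`, `masked_mse`) and a pretend decoder output; the hierarchical, resolution-specific part that the title refers to is not modeled here.

```python
import random

def mask_patches(patches, mask_ratio, seed=0):
    """Randomly hide a fraction of patches; return visible patches and masked indices."""
    rng = random.Random(seed)
    n_mask = round(mask_ratio * len(patches))
    masked = sorted(rng.sample(range(len(patches)), n_mask))
    hidden = set(masked)
    visible = [p for i, p in enumerate(patches) if i not in hidden]
    return visible, masked

def masked_mse(patches, recon, masked_idx):
    """Mean squared reconstruction error over the masked patches only."""
    errs = [(a - b) ** 2
            for i in masked_idx
            for a, b in zip(patches[i], recon[i])]
    return sum(errs) / len(errs)

patches = [[float(i)] * 4 for i in range(8)]      # 8 toy patches of length 4
visible, masked = mask_patches(patches, mask_ratio=0.5)
recon = [[v + 1.0 for v in p] for p in patches]   # pretend decoder, off by 1 everywhere
print(len(visible), masked, masked_mse(patches, recon, masked))
```

In a real model only `visible` would be fed to the encoder, and the decoder would predict the hidden patches from the encoder's output.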

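Recommended reading

ICLR 2026 | Time Series Paper Roundup (Part 1): Forecasting, Multimodal, Forecasting × LLM, Foundation Models

ICLR 2026 | Pre-Rebuttal Roundup of High-Scoring Time Series Papers

NeurIPS 2025 | Time Series Paper Roundup [Part 1]: Time Series Forecasting

NeurIPS 2025 | Time Series Paper Roundup [Part 2]: Foundation Models, Anomaly Detection, Classification, Generation, Representation Learning

ICML 2025 | Time Series Paper Roundup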

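All articles on this account are original work and took real effort to write. Please credit the source when reposting or citing; for commercial use, contact the author.

Authors are welcome to submit write-ups of their recent papers on spatio-temporal data and time series accepted at top conferences and journals; we will gladly help promote them so we can all learn together. If interested, please contact us via direct message.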

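This article is part of the Tencent Cloud Self-Media Sync Program and was shared from a WeChat official account.

Originally published: 2026-02-12. For infringement claims, contact cloudcommunity@tencent.com to request removal.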
