AAAI 2026 | Summary of Papers on Graph Foundation Models (GFM) & Text-Attributed Graphs (TAG)

时空探索之旅

Published 2026-03-10 14:47:44

AAAI 2026 will be held in Singapore from January 20 to January 27, 2026. The main track received 23,680 submissions, of which 4,167 were accepted, for an acceptance rate of 17.6%.

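To broaden my knowledge a bit, I tried to summarize the high-scoring papers among them on graph foundation models (GFM) and text-attributed graphs (TAG). If I have missed anything, additions are welcome.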

1. Unlocking Multi-Modal Potentials for Link Prediction on Dynamic Text-Attributed Graphs
2. Towards Robust Text-Attributed Federated Graph Learning: Multimodal Threats and Defense
3. THGB: A Comprehensive Benchmark for Text-attributed Heterogeneous Graphs
4. LLM-Enhanced Energy Contrastive Learning for Out-of-Distribution Detection in Text-Attributed Graphs
5. GCL-OT: Graph Contrastive Learning with Optimal Transport for Heterophilic Text-Attributed Graphs
6. Towards Effective, Stealthy, and Persistent Backdoor Attacks Targeting Graph Foundation Models
7. Source-Free Graph Foundation Model Adaptation via Pseudo-Source Reconstruction
8. SA²GFM: Enhancing Robust Graph Foundation Models with Structure-Aware Semantic Augmentation

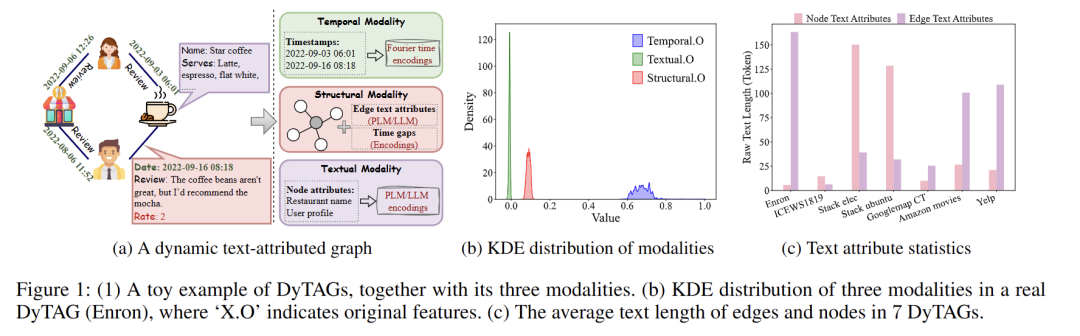

1 Unlocking Multi-Modal Potentials for Link Prediction on Dynamic Text-Attributed Graphs

Link: https://arxiv.org/abs/2502.19651

Authors: Yuanyuan Xu; Wenjie Zhang; Ying Zhang; Xuemin Lin; Xiwei Xu

Keywords: dynamic text-attributed graphs, multimodal, link prediction

2 Towards Robust Text-Attributed Federated Graph Learning: Multimodal Threats and Defense

Authors: Zitong Shi; Guancheng Wan; Wenke Huang; Yuxin Wu; Quan Zhang; Mang Ye

Keywords: federated graph learning, robustness

3 THGB: A Comprehensive Benchmark for Text-attributed Heterogeneous Graphs

Link: https://github.com/xxxinxxx/THGB

Authors: Lixin Zhou; Zemin Liu; Yuan Fang; Dan Niu; Jing Ying

Keywords: heterogeneous graphs, benchmark

4 LLM-Enhanced Energy Contrastive Learning for Out-of-Distribution Detection in Text-Attributed Graphs

Authors: Xiaoxu Ma; Dong Li; Minglai Shao; Xintao Wu; Chen Zhao

Keywords: out-of-distribution detection, LLM enhancement

5 GCL-OT: Graph Contrastive Learning with Optimal Transport for Heterophilic Text-Attributed Graphs

Link: https://arxiv.org/abs/2511.16778

Authors: Yating Ren; Yikun Ban; Huobin Tan

Keywords: optimal transport, contrastive learning, heterophilic graphs

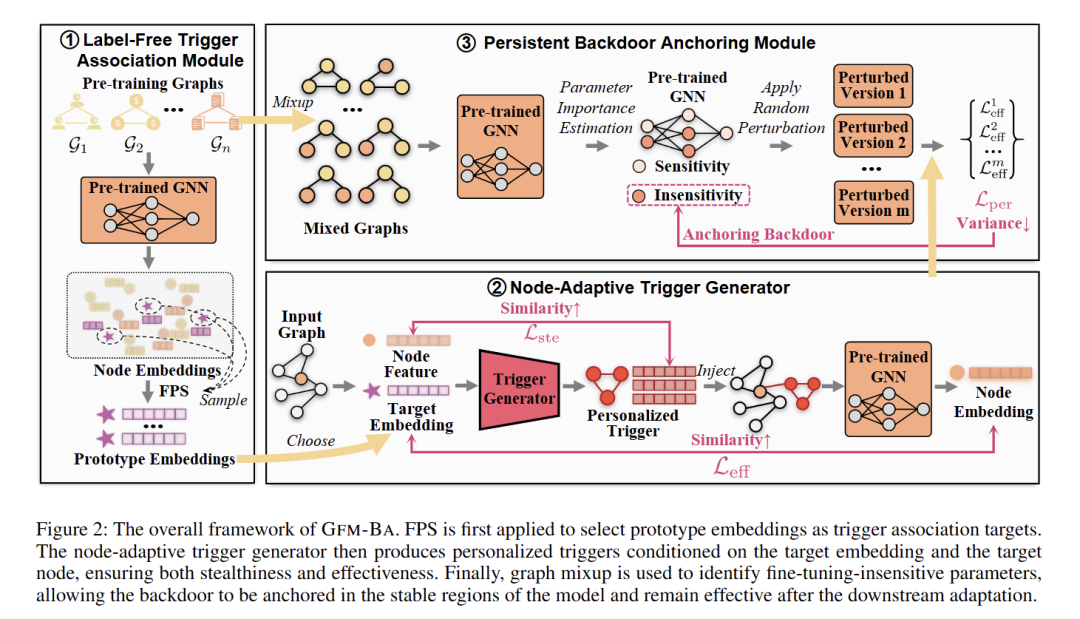

6 Towards Effective, Stealthy, and Persistent Backdoor Attacks Targeting Graph Foundation Models

Link: https://www.arxiv.org/abs/2511.17982

Authors: Jiayi Luo; Qingyun Sun; Lingjuan Lyu; Ziwei Zhang; Haonan Yuan; Xingcheng Fu; Jianxin Li

Keywords: graph foundation models, backdoor attacks

7 Source-Free Graph Foundation Model Adaptation via Pseudo-Source Reconstruction

Authors: Liang Yang; Hui Ning; Jiaming Zhuo; Ziyi Ma; Chuan Wang; Wenning Wu; Zhen Wang

Keywords: graph foundation models, adaptation

8 SA²GFM: Enhancing Robust Graph Foundation Models with Structure-Aware Semantic Augmentation

Authors: Junhua Shi; Qingyun Sun; Haonan Yuan; Xingcheng Fu

Keywords: robust graph foundation models, structure-aware semantic augmentation

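Recommended reading

ICLR 2026 | High-Scoring Papers on Graph Foundation Models (GFM) & Text-Attributed Graphs (TAG), Pre-Rebuttal

AAAI 2026 | Time Series Paper Summary [Part 2] (Classification, Anomaly Detection, Foundation Models, Representation Learning, Generation, etc.)

AAAI 2026 | Time Series Paper Summary [Part 1] (Oral + Forecasting)

AAAI 2025 | Time Series Paper Summary

AAAI 2025 | Spatial-Temporal Data Paper Summary

IJCAI 2025 | Spatial-Temporal Data Paper Summary

IJCAI 2025 | Time Series Paper Summary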

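All articles on this official account are my own original work, and writing them takes real effort. If you repost or quote them, please credit the source. For commercial use, please contact the author.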

If you found this helpful, please share it, mark it as "Wow", and give it a like.

This article is part of the Tencent Cloud self-media syndication program and is shared from a WeChat official account.

Originally published: 2025-12-05. In case of infringement, please contact cloudcommunity@tencent.com for removal.
