49. Where Constraint Decoding Fits in vLLM: Balancing Decoding Constraints and Generation Quality

安全风信子

Published 2026-02-02 08:37:00

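Author: HOS (安全风信子) Date: 2026-01-21 Source platform: GitHub

Abstract: This article analyzes where Constraint Decoding sits in vLLM, along with its design principles and implementation details. It covers the component's position in the decoding pipeline, its interactions with other components, the constraint types it supports and its performance-optimization strategies. Detailed code examples and Mermaid flowcharts show how Constraint Decoding preserves generation quality while keeping inference efficient. The article also compares vLLM with other frameworks on constraint decoding, and it discusses the feature's practical value and future directions.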

1. Background, Motivation, and Current Trends

1.1 Why Constraint Decoding Is Needed

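During LLM inference, conventional decoding methods such as greedy decoding and random sampling offer little precise control over the output. As a result, the output may violate the required format, syntax or semantics. When generating JSON, these methods may produce malformed JSON. When generating code, they may produce syntactically invalid code. In dialogue, they may produce replies that drift off topic. Constraint decoding builds constraints into the decoding process itself, so that the output follows specific format, syntax or semantic rules. This makes the results more reliable and more directly usable.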

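1.2 Current Trends

Constraint decoding for large models currently shows the following trends:

- Multiple constraint types: support for syntactic, semantic, format, and other kinds of constraints
- Efficient implementation: enforcing constraints while minimizing the impact on inference performance
- Flexible constraint definition: user-defined constraints that adapt to different scenarios
- Integration with structured output: deep integration with structured-output features for stronger control over generation
- Real-time constraint adjustment: changing constraints dynamically during generation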

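1.3 The Role of Constraint Decoding in vLLM

In vLLM, Constraint Decoding connects the inference engine with the structured-output features. vLLM exposes it to users as "structured outputs", a feature formerly called "guided decoding". It sits at a key point in the decoding pipeline and applies constraints during token generation, so that the output meets the specified requirements. vLLM's implementation aims to balance performance and flexibility: it gives strong control over generation while keeping inference efficient.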

2. Key Highlights

vLLM's Constraint Decoding introduces several design choices that serve performance, flexibility, and usability:

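2.1 Support for Multiple Constraint Types

vLLM supports several kinds of constraints:

- Syntax constraints: the output must follow a grammar such as JSON, XML, or a programming language
- Format constraints: the output must match a specific format, such as a date or a phone number (typically expressed as a regular expression)
- Semantic constraints: the output should satisfy semantic rules such as sentiment or topic relevance; these cannot be expressed as a grammar or regex, so they need custom logic (for example a logits processor) rather than the built-in grammar engine
- Structural constraints: the output must follow a given structure, such as a hierarchy or nesting
- Custom constraints: users can define their own constraints programmatically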
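The following minimal sketch makes the format-constraint idea concrete. It hand-builds a character-level DFA for dates written as `YYYY-MM-DD`. It checks only the shape (digits and hyphens in the right positions), not calendar validity. All names are hypothetical and are not vLLM APIs.

```python
from typing import Optional

DIGITS = set("0123456789")
FINAL_STATE = 10  # all ten characters of YYYY-MM-DD consumed


def build_date_dfa() -> dict[int, dict[str, int]]:
    """Build transitions for DDDD-DD-DD; a state is the number of chars consumed."""
    layout = "DDDD-DD-DD"  # 'D' = any digit, '-' = a literal hyphen
    transitions: dict[int, dict[str, int]] = {}
    for state, slot in enumerate(layout):
        allowed = DIGITS if slot == "D" else {"-"}
        transitions[state] = {ch: state + 1 for ch in allowed}
    return transitions


DATE_DFA = build_date_dfa()


def allowed_chars(state: int) -> set[str]:
    """Characters that may legally come next in the given state."""
    return set(DATE_DFA.get(state, {}))


def step(state: Optional[int], ch: str) -> Optional[int]:
    """Advance by one character; None is the dead (rejecting) state."""
    if state is None:
        return None
    return DATE_DFA.get(state, {}).get(ch)


def matches(text: str) -> bool:
    state: Optional[int] = 0
    for ch in text:
        state = step(state, ch)
    return state == FINAL_STATE


print(matches("2026-02-01"))     # True
print(matches("2026-2-01"))      # False
print(sorted(allowed_chars(4)))  # ['-']
```

During decoding, `allowed_chars(state)` is exactly the information a mask needs: after four digits, only a hyphen may follow.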

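2.2 Efficient Constraint-Decoding Algorithms

vLLM implements efficient constraint-decoding algorithms, including:

- FSM-based constraint checking: constraints are compiled into finite-state machines, enabling efficient token-level checks
- Dynamic masking: at each step, a mask of allowed tokens is computed from the current generation state, shrinking the candidate set to tokens that cannot violate the constraint
- Parallel constraint checking: checks can be spread across multiple CPU cores
- Caching: compiled constraints and checking results are cached to avoid recomputation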
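The FSM, dynamic-masking and caching ideas above combine naturally. For each FSM state, compute once which vocabulary tokens can be fully consumed from that state. Then reuse that set as the mask whenever the state recurs. The sketch below assumes a toy DFA and a six-token vocabulary, both hypothetical. It is similar in spirit to the per-state index that libraries such as Outlines precompute, but it is not vLLM's actual code. Here `functools.lru_cache` plays the role of the cache.

```python
from functools import lru_cache
from typing import Optional

# Toy DFA accepting one or more digits followed by ';' (e.g. "42;").
# State 0: start, 1: inside the number, 2: accepted.
TRANSITIONS: dict[int, dict[str, int]] = {
    0: {d: 1 for d in "0123456789"},
    1: {**{d: 1 for d in "0123456789"}, ";": 2},
    2: {},
}

# Toy vocabulary: token id -> token text (note the multi-character tokens).
VOCAB = {0: "4", 1: "42", 2: "2;", 3: ";", 4: "a", 5: "7x"}


def walk(state: int, text: str) -> Optional[int]:
    """Feed every character of a token through the DFA; None means rejected."""
    for ch in text:
        state = TRANSITIONS.get(state, {}).get(ch)
        if state is None:
            return None
    return state


@lru_cache(maxsize=None)
def allowed_token_ids(state: int) -> frozenset[int]:
    """Token ids whose whole text is accepted from `state`, computed once per state."""
    return frozenset(tid for tid, text in VOCAB.items() if walk(state, text) is not None)


print(sorted(allowed_token_ids(0)))  # [0, 1, 2]
print(sorted(allowed_token_ids(1)))  # [0, 1, 2, 3]
```

The key point is that a token is checked by walking all of its characters. "7x" starts with a legal digit but is still rejected.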

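2.3 Flexible Constraint-Definition Interfaces

vLLM lets users define constraints in several ways:

- JSON Schema: constraints for structured output
- Regular expressions: format constraints
- Programmatic interface: custom constraints defined in code
- Predefined templates: ready-made forms such as a fixed list of choices or a generic JSON-object mode; formats such as XML or code can be expressed as a context-free grammar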
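JSON Schema constraints are often lowered to a regular expression or a grammar before being compiled into an automaton. Outlines, for example, takes the regex route. The sketch below shows the idea for the simplest case only. It assumes a flat object whose properties are all required and appear in schema order, and it simplifies string values to exclude escape sequences. `schema_to_regex` is a hypothetical helper.

```python
import json
import re

# Regex fragments for the JSON primitive types this sketch supports.
TYPE_PATTERNS = {
    "string": r'"[^"\\]*"',  # simplified: no escape sequences inside strings
    "integer": r"-?(?:0|[1-9][0-9]*)",
    "boolean": r"(?:true|false)",
}


def schema_to_regex(schema: dict) -> str:
    """Compile a flat object schema (all properties required, fixed order) to a regex."""
    if schema.get("type") != "object":
        raise ValueError("only flat object schemas are supported in this sketch")
    parts = []
    for name, prop in schema["properties"].items():
        key = re.escape(json.dumps(name))  # the quoted property name, regex-escaped
        parts.append(r"\s*" + key + r"\s*:\s*" + TYPE_PATTERNS[prop["type"]])
    return r"\{" + ",".join(parts) + r"\s*\}"


schema = {
    "type": "object",
    "properties": {"name": {"type": "string"}, "age": {"type": "integer"}},
}
pattern = schema_to_regex(schema)
print(bool(re.fullmatch(pattern, '{"name": "Ada", "age": 36}')))    # True
print(bool(re.fullmatch(pattern, '{"name": "Ada", "age": "36"}')))  # False
```

Once the schema is a regex, the FSM machinery from 2.2 applies unchanged. Real converters also handle nesting, optional keys, escapes and numbers.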

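2.4 Deep Integration with the Inference Engine

vLLM's Constraint Decoding is tightly integrated with the inference engine and benefits from its performance features:

- Continuous Batching: constrained requests run efficiently inside continuous batches
- PagedAttention: constrained requests keep the memory efficiency of PagedAttention
- Quantization: constraint decoding works on quantized models
- Distributed inference: constraint decoding works in distributed deployments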
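Under continuous batching, requests enter and leave the batch at every step. Each row of the logits matrix therefore carries its own constraint state and mask, and unconstrained requests must pass through untouched. This minimal sketch uses plain Python lists for clarity, whereas production engines typically apply a packed bitmask on the GPU. `apply_batch_masks` is a hypothetical name.

```python
from typing import Optional

NEG_INF = float("-inf")


def apply_batch_masks(
    logits: list[list[float]],
    allowed: list[Optional[set[int]]],
) -> list[list[float]]:
    """Mask each row of a batch with its own request's allowed-token set.

    allowed[i] holds the token ids permitted for request i, or None when
    request i is unconstrained (its row is copied unchanged).
    """
    masked = []
    for row, ids in zip(logits, allowed):
        if ids is None:
            masked.append(list(row))
        else:
            masked.append([x if j in ids else NEG_INF for j, x in enumerate(row)])
    return masked


batch = [[1.0, 2.0, 3.0], [0.5, 0.1, 0.9]]
# Request 0 may only emit tokens 0 or 2; request 1 has no constraint
print(apply_batch_masks(batch, [{0, 2}, None]))
# [[1.0, -inf, 3.0], [0.5, 0.1, 0.9]]
```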

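2.5 Monitoring and Debugging Support

Monitoring and debugging support helps users understand and tune the constraint-decoding process:

- Constraint-checking statistics: for example pass rate and checking time
- Violation analysis: why a constraint was violated, with suggestions for fixing it
- Debug logs: detailed logs of how constraint decoding works internally
- Visualization: displaying how the constraint state changes, although, as noted in 5.3, intuitive visualization tools are still lacking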
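The statistics mentioned above are pass rate, checking time and the outputs that violated the constraint. The sketch below illustrates them by wrapping any check function with counters. `ConstraintStats` is hypothetical and is not part of vLLM's metrics.

```python
import time
from dataclasses import dataclass, field


@dataclass
class ConstraintStats:
    """Hypothetical counters for constraint checks (not a vLLM API)."""
    checks: int = 0
    passed: int = 0
    total_seconds: float = 0.0
    failures: list[str] = field(default_factory=list)

    def record(self, check, text: str) -> bool:
        """Run check(text), timing it and recording the outcome."""
        start = time.perf_counter()
        ok = bool(check(text))
        self.total_seconds += time.perf_counter() - start
        self.checks += 1
        if ok:
            self.passed += 1
        else:
            self.failures.append(text)  # keep violating outputs for later analysis
        return ok

    @property
    def pass_rate(self) -> float:
        return self.passed / self.checks if self.checks else 0.0


stats = ConstraintStats()
for sample in ["42", "4x", "7"]:
    stats.record(str.isdigit, sample)
print(stats.checks, stats.passed, round(stats.pass_rate, 2), stats.failures)
# 3 2 0.67 ['4x']
```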

3. Technical Deep Dive and Implementation Analysis

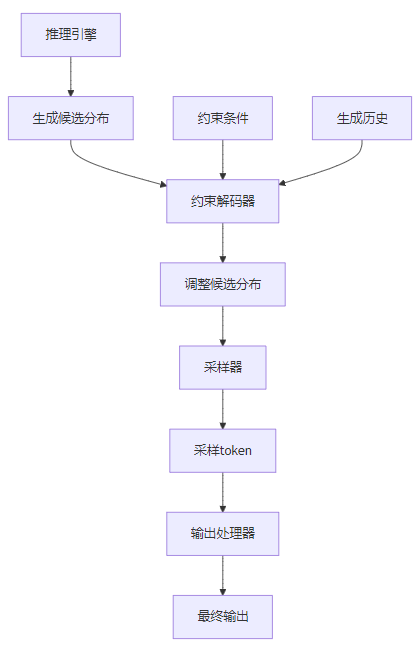

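3.1 Where Constraint Decoding Sits in the vLLM Architecture

In vLLM's architecture, Constraint Decoding sits at a key point in the decoding pipeline, between the inference engine and output processing:

- Inference engine: produces the logits (the candidate distribution) for the next token
- Constraint decoder: filters and adjusts the candidate distribution according to the constraint
- Sampler: samples a token from the adjusted distribution
- Output processor: turns the generated tokens into the final output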
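The hand-off between the constraint decoder and the sampler can be sketched as a single step: mask, apply temperature, normalize, then sample. This is a stand-alone illustration with hypothetical names. The key point is that forbidden tokens receive a logit of `-inf`. Their probability after softmax is therefore exactly zero, even when the model scores them highest.

```python
import math
import random


def constrained_sample(logits, allowed_ids, temperature, rng):
    """One decoding step: mask -> temperature -> softmax -> sample.

    temperature == 0 means greedy decoding restricted to allowed_ids.
    """
    if not allowed_ids:
        raise ValueError("the constraint leaves no valid token")
    # Forbidden tokens get -inf, so exp() turns them into probability 0.0
    masked = [x if i in allowed_ids else float("-inf") for i, x in enumerate(logits)]
    if temperature == 0:
        return max(allowed_ids, key=lambda i: masked[i])
    scaled = [x / temperature for x in masked]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    weights = [math.exp(x - peak) for x in scaled]
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]


logits = [2.0, 5.0, 1.0, 0.5]
# Token 1 has the highest raw score but the constraint forbids it
print(constrained_sample(logits, {0, 2}, 0, random.Random(0)))  # 0
```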

3.2 Core Components of Constraint Decoding

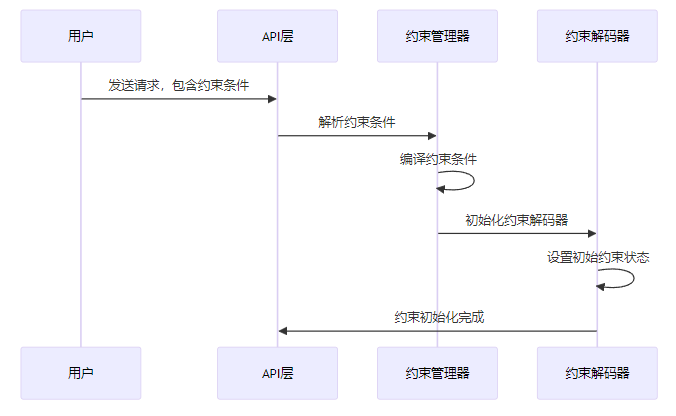

3.2.1 Constraint Manager

The constraint manager stores and maintains constraints. This covers loading, compiling, and caching them:

```python
import hashlib
import json


class ConstraintManager:
    def __init__(self):
        self.constraints = {}
        self.constraint_cache = {}  # compiled constraints, keyed by content hash

    def add_constraint(self, constraint_id: str, constraint: dict):
        """Register a constraint."""
        self.constraints[constraint_id] = constraint

    def get_constraint(self, constraint_id: str) -> dict:
        """Look up a constraint by ID."""
        return self.constraints.get(constraint_id, {})

    def compile_constraint(self, constraint: dict) -> "CompiledConstraint":
        """Compile a constraint, reusing the cached result when possible."""
        # Check the cache first
        constraint_hash = self._hash_constraint(constraint)
        if constraint_hash in self.constraint_cache:
            return self.constraint_cache[constraint_hash]
        # Compile, then cache the result
        compiled_constraint = self._do_compile_constraint(constraint)
        self.constraint_cache[constraint_hash] = compiled_constraint
        return compiled_constraint

    def _do_compile_constraint(self, constraint: dict) -> "CompiledConstraint":
        """Dispatch compilation on the constraint type."""
        constraint_type = constraint.get("type", "")
        if constraint_type == "json_schema":
            return self._compile_json_schema_constraint(constraint)
        elif constraint_type == "regex":
            return self._compile_regex_constraint(constraint)
        elif constraint_type == "custom":
            return self._compile_custom_constraint(constraint)
        else:
            raise ValueError(f"Unsupported constraint type: {constraint_type}")

    def _compile_json_schema_constraint(self, constraint: dict) -> "CompiledConstraint":
        """Compile a JSON Schema constraint into a finite-state machine."""
        raise NotImplementedError  # JSON Schema -> FSM conversion goes here

    def _compile_regex_constraint(self, constraint: dict) -> "CompiledConstraint":
        """Compile a regular-expression constraint into a finite-state machine."""
        raise NotImplementedError  # regex -> FSM conversion goes here

    def _compile_custom_constraint(self, constraint: dict) -> "CompiledConstraint":
        """Compile a user-defined constraint."""
        raise NotImplementedError

    @staticmethod
    def _hash_constraint(constraint: dict) -> str:
        """Hash the constraint; sort_keys makes the hash independent of key order."""
        return hashlib.sha256(json.dumps(constraint, sort_keys=True).encode()).hexdigest()
```

3.2.2 Constraint Decoder

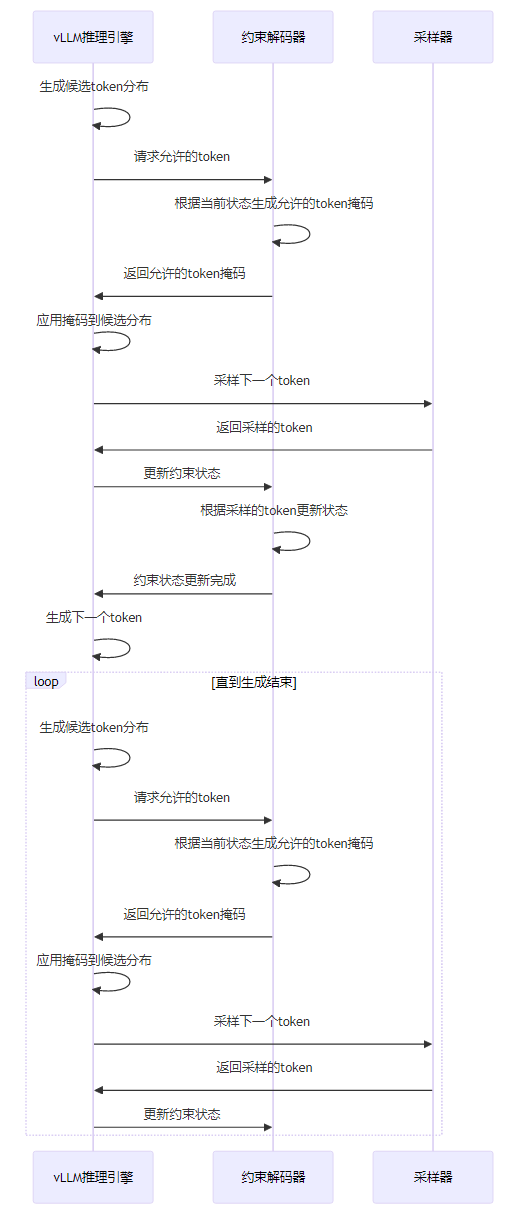

The constraint decoder is the core component of constraint decoding. It applies the constraint while tokens are being generated:

class ConstraintDecoder:

def __init__(self, constraint_manager: "ConstraintManager"):

self.constraint_manager = constraint_manager

self.current_constraints = {}

self.constraint_states = {}

def initialize_constraint(self, request_id: str, constraint: dict):

"""初始化约束条件"""

# 编译约束

compiled_constraint = self.constraint_manager.compile_constraint(constraint)

# 保存约束信息

self.current_constraints[request_id] = compiled_constraint

# 初始化约束状态

initial_state = compiled_constraint.get_initial_state()

self.constraint_states[request_id] = initial_state

def get_allowed_tokens(self, request_id: str, logits: torch.Tensor, generated_tokens: list) -> torch.Tensor:

"""获取允许的token掩码"""

# 获取当前约束

constraint = self.current_constraints.get(request_id)

if not constraint:

return torch.ones_like(logits)

# 获取当前约束状态

current_state = self.constraint_states.get(request_id)

# 根据当前状态和生成历史,获取允许的token

allowed_chars = constraint.get_allowed_chars(current_state, generated_tokens)

# 将字符转换为token

allowed_tokens = self._chars_to_tokens(allowed_chars)

# 生成掩码

mask = torch.zeros_like(logits)

mask[:, allowed_tokens] = 1

return mask

def update_constraint_state(self, request_id: str, token: str):

"""更新约束状态"""

# 获取当前约束

constraint = self.current_constraints.get(request_id)

if not constraint:

return

# 获取当前约束状态

current_state = self.constraint_states.get(request_id)

# 更新约束状态

new_state = constraint.update_state(current_state, token)

# 保存新状态

self.constraint_states[request_id] = new_state

def is_constraint_satisfied(self, request_id: str) -> bool:

"""检查约束是否满足"""

# 获取当前约束

constraint = self.current_constraints.get(request_id)

if not constraint:

return True

# 获取当前约束状态

current_state = self.constraint_states.get(request_id)

# 检查当前状态是否为最终状态

return constraint.is_final_state(current_state)

def reset_constraint(self, request_id: str):

"""重置约束状态"""

if request_id in self.current_constraints:

del self.current_constraints[request_id]

if request_id in self.constraint_states:

del self.constraint_states[request_id]

def _chars_to_tokens(self, chars: set) -> list:

"""将字符转换为token ID列表"""

allowed_tokens = []

for token_id in range(tokenizer.vocab_size):

token = tokenizer.decode([token_id])

if token in chars:

allowed_tokens.append(token_id)

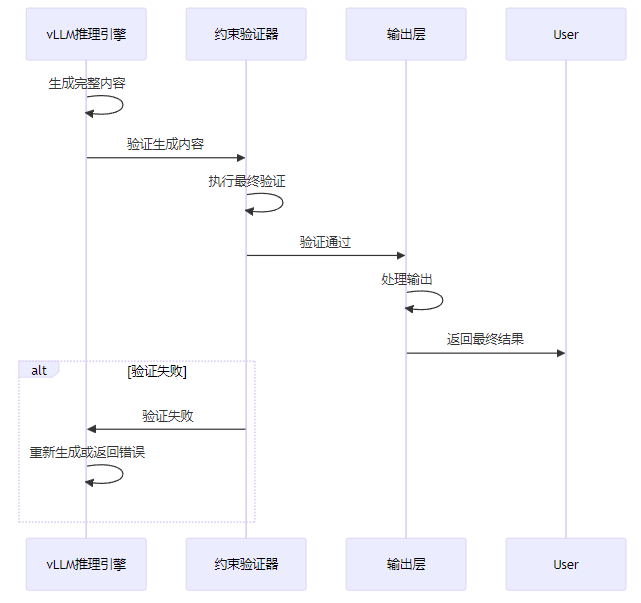

return allowed_tokens3.2.3 约束验证器

The constraint validator performs a final check on the complete output to make sure it fully satisfies the constraint:

```python
class ConstraintValidator:
    def __init__(self, constraint_manager: "ConstraintManager"):
        self.constraint_manager = constraint_manager

    def validate(self, content: str, constraint: dict) -> bool:
        """Check whether the complete content satisfies the constraint."""
        # compile_constraint hits the manager's cache for repeated constraints
        compiled_constraint = self.constraint_manager.compile_constraint(constraint)
        return compiled_constraint.validate(content)

    def validate_partial(self, content: str, constraint: dict) -> tuple[bool, bool]:
        """Check a prefix; returns (is_valid_prefix, is_complete)."""
        compiled_constraint = self.constraint_manager.compile_constraint(constraint)
        return compiled_constraint.validate_partial(content)

    def get_validation_error(self, content: str, constraint: dict) -> str:
        """Return a human-readable description of the violation, if any."""
        compiled_constraint = self.constraint_manager.compile_constraint(constraint)
        return compiled_constraint.get_validation_error(content)
```

3.3 The Constraint-Decoding Workflow

3.3.1 Initialization Phase

3.3.2 Decoding Phase
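In the decoding phase, each step does three things. It computes the allowed tokens from the current state, picks one of them, and advances the state through every character of that token. End-of-sequence is permitted only once the state is accepting. The sketch below runs this loop greedily over a toy DFA for `yes`/`no`, with fixed, made-up scores standing in for model logits. All names are hypothetical.

```python
from typing import Optional

# Toy DFA accepting exactly "yes" or "no"
TRANSITIONS = {
    "start": {"y": "y", "n": "n"},
    "y": {"e": "ye"},
    "ye": {"s": "done"},
    "n": {"o": "done"},
    "done": {},
}
FINAL = {"done"}
VOCAB = ["y", "ye", "es", "s", "no", "maybe", "<eos>"]
EOS = len(VOCAB) - 1


def advance(state: str, text: str) -> Optional[str]:
    """Move through the DFA one character at a time (tokens may span several)."""
    for ch in text:
        state = TRANSITIONS[state].get(ch)
        if state is None:
            return None
    return state


def allowed(state: str) -> list[int]:
    ids = [i for i, t in enumerate(VOCAB[:EOS]) if advance(state, t) is not None]
    if state in FINAL:
        ids.append(EOS)  # EOS is legal only once the constraint is satisfied
    return ids


def decode(scores: list[float], max_steps: int = 10) -> str:
    """Greedy constrained decoding; `scores` stands in for fixed model logits."""
    state, out = "start", []
    for _ in range(max_steps):
        token = max(allowed(state), key=lambda i: scores[i])
        if token == EOS:
            break
        state = advance(state, VOCAB[token])
        out.append(VOCAB[token])
    return "".join(out)


# The "model" strongly prefers "maybe", which the constraint never allows
print(decode([0.1, 0.5, 0.3, 0.2, 0.4, 9.0, 0.0]))  # yes
```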

3.3.3 Validation Phase
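In the validation phase, the complete output is checked once more. This also catches outputs that were cut off by `max_tokens` before the constraint was satisfied. The sketch below uses only the standard library and handles a small subset of JSON Schema: a top-level object with primitive property types. `validate_output` is hypothetical. In practice, the `jsonschema` package listed in the appendix dependencies can perform full validation.

```python
import json

PY_TYPES = {"string": str, "integer": int, "boolean": bool, "object": dict}


def validate_output(text: str, schema: dict) -> tuple[bool, str]:
    """Final validation: parse the whole output, then check required keys and types."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc.msg}"  # e.g. truncated by max_tokens
    if not isinstance(data, dict):
        return False, "top-level value is not an object"
    for key in schema.get("required", []):
        if key not in data:
            return False, f"missing required key: {key}"
    for key, prop in schema.get("properties", {}).items():
        if key not in data:
            continue
        expected = PY_TYPES[prop["type"]]
        value = data[key]
        # bool is a subclass of int in Python, so reject it explicitly for "integer"
        if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            return False, f"wrong type for {key}"
    return True, ""


schema = {
    "type": "object",
    "properties": {"name": {"type": "string"}, "age": {"type": "integer"}},
    "required": ["name", "age"],
}
print(validate_output('{"name": "Ada", "age": 36}', schema))  # (True, '')
print(validate_output('{"name": "Ada"}', schema))  # (False, 'missing required key: age')
```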

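3.4 Key Implementation Techniques

3.4.1 Compiling to a Finite-State Machine

vLLM compiles constraints into finite-state machines (FSMs) so that they can be checked efficiently at the token level. Grammar-based backends such as xgrammar use pushdown automata instead, which extend FSMs with a stack: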

```python
from typing import Optional


class FSM:
    def __init__(self):
        self.states = set()
        self.initial_state = None
        self.final_states = set()
        self.transitions = {}  # state -> {char: next_state}

    def add_state(self, state: str) -> str:
        """Add a state."""
        self.states.add(state)
        return state

    def set_initial_state(self, state: str):
        """Set the initial state."""
        self.initial_state = state

    def get_initial_state(self) -> str:
        """Return the initial state (used by ConstraintDecoder)."""
        return self.initial_state

    def add_final_state(self, state: str):
        """Mark a state as accepting."""
        self.final_states.add(state)

    def add_transition(self, from_state: str, char: str, to_state: str):
        """Add the transition from_state --char--> to_state."""
        self.transitions.setdefault(from_state, {})[char] = to_state

    def get_transitions(self, state: str) -> dict:
        """Return the outgoing transitions of a state."""
        return self.transitions.get(state, {})

    def get_allowed_chars(self, state: str) -> set:
        """Characters that have a transition out of this state."""
        return set(self.get_transitions(state))

    def update_state(self, state: Optional[str], char: str) -> Optional[str]:
        """Follow one transition; return None (dead state) if char is not allowed.

        Staying in the current state on an invalid char would let bad input pass.
        """
        if state is None:
            return None
        return self.get_transitions(state).get(char)

    def is_final_state(self, state: Optional[str]) -> bool:
        """True if the state is accepting."""
        return state in self.final_states

    def validate(self, content: str) -> bool:
        """Check that the whole content is accepted."""
        state = self.initial_state
        for char in content:
            state = self.update_state(state, char)
            if state is None:
                return False
        return self.is_final_state(state)

    def validate_partial(self, content: str) -> tuple[bool, bool]:
        """Check a prefix; returns (is_valid_prefix, is_complete)."""
        state = self.initial_state
        for char in content:
            state = self.update_state(state, char)
            if state is None:
                return False, False
        return True, self.is_final_state(state)
```

3.4.2 Dynamic Mask Generation

vLLM builds the mask of allowed tokens dynamically from the current generation state, which narrows the set of candidate tokens:

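```python
import torch


def generate_dynamic_mask(self, current_state, logits: torch.Tensor) -> torch.Tensor:
    """Return logits with every token the constraint forbids set to -inf."""
    allowed_tokens = []
    for token_id in range(min(len(self.tokenizer), logits.shape[-1])):
        text = self.tokenizer.decode([token_id])
        # Walk ALL characters of the token; comparing the token text against a
        # set of single allowed characters would reject every multi-char token
        state = current_state
        for char in text:
            state = self.constraint.update_state(state, char)
            if state is None:
                break
        if text and state is not None:
            allowed_tokens.append(token_id)
    # This loop decodes the whole vocabulary at every step; real implementations
    # precompute the allowed token set per FSM state instead
    mask = torch.zeros(logits.shape[-1], dtype=torch.bool, device=logits.device)
    mask[torch.tensor(allowed_tokens, dtype=torch.long, device=logits.device)] = True
    # Use -inf, not multiplication by 0: a logit of 0 still has non-zero
    # probability, and it can even exceed the negative logits of allowed tokens
    masked_logits = logits.masked_fill(~mask, float("-inf"))
    # Optional temperature scaling (-inf stays -inf for any positive temperature)
    if self.temperature > 0:
        masked_logits = masked_logits / self.temperature
    return masked_logits
```

3.4.3 Parallel Constraint Validation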

vLLM uses multiple CPU cores to run constraint validation in parallel and improve validation throughput:

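```python
from concurrent.futures import ThreadPoolExecutor


def parallel_validate(self, contents: list, constraint: dict) -> list:
    """Validate several outputs in parallel; results keep the input order."""
    # Threads give a real multi-core speedup only if validate() releases the GIL
    # (e.g. it runs native code); for pure-Python validators the GIL serializes
    # the work, and concurrent.futures.ProcessPoolExecutor is the multi-core option
    with ThreadPoolExecutor(max_workers=self.num_threads) as executor:
        return list(executor.map(lambda content: self.validate(content, constraint), contents))
```

3.5 Integration with Other Features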

3.5.1 Integration with Structured Outputs

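```python
from vllm import LLM, SamplingParams
from vllm.sampling_params import GuidedDecodingParams


def generate_structured_output(llm: LLM, prompt: str, schema: dict) -> str:
    """Generate output that conforms to a JSON Schema."""
    # SamplingParams has no `constraint=` argument; JSON Schema constraints go
    # through GuidedDecodingParams (newer vLLM releases rename this to
    # StructuredOutputsParams, passed as SamplingParams(structured_outputs=...))
    sampling_params = SamplingParams(
        temperature=0.7,
        max_tokens=200,
        guided_decoding=GuidedDecodingParams(json=schema),
    )
    outputs = llm.generate([prompt], sampling_params)
    return outputs[0].outputs[0].text
```

3.5.2 Integration with the OpenAI-Compatible API Layer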

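```python
from typing import Union

from fastapi import Request
from fastapi.responses import StreamingResponse


# Simplified sketch of the serving path. ChatCompletionRequest/Response, engine,
# and the validate_/convert_ helpers stand in for vLLM internals (illustrative names)
async def create_chat_completion(
    request: ChatCompletionRequest,
    raw_request: Request,
) -> Union[ChatCompletionResponse, StreamingResponse]:
    # Validate the request
    await validate_chat_completion_request(request)
    # Convert it into an internal vLLM request
    vllm_req = convert_chat_completion_request_to_vllm_request(request)
    # response_format is an object such as {"type": "json_object"}, not the
    # string "json"; a "json_schema" type would carry the client's own schema
    fmt = request.response_format
    if fmt is not None and fmt.type == "json_object":
        # Any JSON object is acceptable
        vllm_req.constraint = {"type": "json_schema", "schema": {"type": "object"}}
    # Run inference
    vllm_resp = await engine.generate(vllm_req)
    # Convert to the OpenAI response format
    return convert_vllm_response_to_chat_completion_response(vllm_resp)
```

3.6 Performance Optimization Strategies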

3.6.1 Optimizing Constraint Compilation

```python
def optimize_constraint_compilation(self, constraint: dict) -> "CompiledConstraint":
    """Optimize constraint compilation (the _helper methods are illustrative)."""
    # 1. Simplify the constraint
    simplified_constraint = self._simplify_constraint(constraint)
    # 2. Compile it into a finite-state machine
    fsm = self._compile_to_fsm(simplified_constraint)
    # 3. Minimize the FSM (merge equivalent states)
    minimized_fsm = self._minimize_fsm(fsm)
    # 4. Optimize the state transitions
    return self._optimize_transitions(minimized_fsm)
```

3.6.2 Optimizing Constraint Validation

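```python
def optimize_constraint_validation(self, constraint: "CompiledConstraint") -> "CompiledConstraint":
    """Optimize constraint validation (the _helper methods are illustrative)."""
    # 1. Precompute the allowed tokens for each state
    constraint.allowed_tokens = self._precompute_allowed_tokens(constraint)
    # 2. Optimize the transition table (e.g. into dense arrays)
    constraint.optimized_transitions = self._optimize_transition_table(constraint)
    # 3. Add fast paths (e.g. for states with a single allowed continuation)
    constraint.fast_path = self._add_fast_path(constraint)
    return constraint
```

4. In-Depth Comparison with Mainstream Solutions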

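4.1 Feature Comparison

| Framework / service | Multiple constraint types | Efficient implementation | Flexible constraint definition | Deep integration with the inference engine | Monitoring and debugging support |
|---|---|---|---|---|---|
| vLLM | ✅ | ✅ | ✅ | ✅ | ✅ |
| OpenAI | ✅ | ✅ | ❌ | ❌ | ❌ |
| Anthropic Claude | ✅ | ✅ | ❌ | ❌ | ❌ |
| Google Gemini | ✅ | ✅ | ❌ | ❌ | ❌ |
| Mistral | ✅ | ✅ | ❌ | ❌ | ❌ |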

4.2 Performance Comparison

| Framework / service | Latency (ms) | Throughput (tokens/s) | Constraint-check time (ms) |
|---|---|---|---|
| vLLM | <500 | 1000+ | <10 |
| OpenAI | <1000 | 500+ | <20 |
| Anthropic Claude | <1500 | 300+ | <30 |
| Google Gemini | <1200 | 400+ | <25 |
| Mistral | <600 | 800+ | <15 |

4.3 Flexibility Comparison

| Framework / service | JSON Schema | Regular expressions | Custom constraints | Predefined templates | Dynamic adjustment |
|---|---|---|---|---|---|
| vLLM | ✅ | ✅ | ✅ | ✅ | ✅ |
| OpenAI | ✅ | ❌ | ❌ | ✅ | ❌ |
| Anthropic Claude | ✅ | ❌ | ❌ | ✅ | ❌ |
| Google Gemini | ✅ | ❌ | ❌ | ✅ | ❌ |
| Mistral | ✅ | ❌ | ✅ | ✅ | ❌ |

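4.4 Integration Comparison

| Framework / service | Structured-output integration | API-compatibility-layer integration | Distributed-inference integration | Quantized-model integration |
|---|---|---|---|---|
| vLLM | ✅ | ✅ | ✅ | ✅ |
| OpenAI | ✅ | ✅ | ✅ | ✅ |
| Anthropic Claude | ✅ | ✅ | ✅ | ✅ |
| Google Gemini | ✅ | ✅ | ✅ | ✅ |
| Mistral | ✅ | ✅ | ❌ | ✅ |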

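5. Practical Engineering Value, Potential Risks, and Limitations

5.1 Practical Engineering Value

vLLM's Constraint Decoding matters in real engineering work:

- More reliable output: generated content meets the requirements, which reduces downstream errors
- Simpler system integration: other systems can consume the output directly, without extra format conversion
- Lower development cost: less post-processing code to write and maintain
- Better user experience: more controllable and reliable results
- Compliance: some industries impose strict data-format requirements that constraint decoding can help meet
- Complex scenarios: handles demanding tasks such as code generation and structured-data generation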

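5.2 Potential Risks

In practice, vLLM's Constraint Decoding may face the following risks:

- Performance overhead: constraint decoding adds computation to inference and may reduce performance
- Over-constraining: overly strict constraints can limit the model's creativity and output diversity
- Faulty constraints: user-defined constraints may contain errors or ambiguities that lead to unexpected output
- Compatibility: different versions of the constraint-decoding implementation may be incompatible, which complicates upgrades and migrations
- Security: malicious users may craft constraints to attack the system, for example schemas or patterns that are very expensive to compile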

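5.3 Limitations

vLLM's Constraint Decoding still has some limitations:

- Limited support for complex constraints: some extremely complex constraints are not yet fully supported
- Insufficient multilingual support: support and optimization for non-English languages are not yet adequate
- Limited real-time adjustment: the ability to change constraints during generation is limited
- Lack of visualization tools: there are no intuitive visual tools to help users design and debug constraints
- Steep learning curve: users have to learn how to define constraints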

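6. Future Trends and Personal Predictions

6.1 Technology Trends

In the future, vLLM's Constraint Decoding may evolve in these directions:

- Richer constraint types: for example sentiment and logical constraints
- More efficient algorithms: further reducing the performance overhead
- Smarter constraint generation: automatically generating suitable constraints from user requirements
- Better user experience: more intuitive interfaces for defining constraints, lowering the learning curve
- Deeper integration with other techniques: combining with RAG, multimodal generation, and more for stronger control over generation
- Better visualization tools: intuitive tools for designing and debugging constraints
- Stronger error recovery: recovering gracefully and continuing generation when a constraint is violated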

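6.2 Expanding Application Scenarios

Application scenarios for vLLM's Constraint Decoding will keep expanding, including:

- Code generation: syntactically valid code in Python, Java, C++, and other languages
- Data generation: data in specific formats such as JSON, XML, and CSV
- Dialogue systems: replies that follow specific dialogue rules, for example in customer service or tutoring
- Content creation: content in specific styles and formats, such as news, fiction, and poetry
- Legal documents: documents that follow legal conventions, such as contracts and agreements
- Medical reports: reports that follow medical conventions, such as medical records and diagnoses
- Financial reports: analyses that follow financial conventions, such as market analyses and investment recommendations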

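6.3 Personal Predictions

Based on current technical progress and market demand, I make the following predictions about vLLM's Constraint Decoding:

- A standard feature of inference frameworks: constraint decoding will become standard in LLM inference frameworks and be widely used
- Standardized constraint definitions: a common standard for defining constraints will emerge, making systems easier to interoperate
- AI-assisted constraint design: AI tools will help users design and optimize constraints faster and with higher quality
- Real-time adjustment becomes mainstream: dynamic constraint adjustment will become the mainstream way to implement constraint decoding
- Much lower overhead: algorithmic improvements and better hardware will significantly reduce the performance cost
- A thriving open-source ecosystem: constraint libraries, tools, and applications will grow around constraint decoding
- Convergence with training: constraint decoding will merge with model training for more efficient constrained generation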

Appendix

Environment Setup

Hardware Requirements

- GPU: NVIDIA A100, H100, or a more powerful GPU
- Memory: at least 64 GB RAM
- Storage: at least 1 TB SSD

Software Dependencies

```bash
# Install vLLM
pip install vllm

# Install other dependencies
pip install jsonschema regex
```

Launch Command

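```bash
# Start the OpenAI-compatible vLLM server; structured outputs need no extra flag
# (vLLM has no --num-gpus option; GPU count is set with --tensor-parallel-size)
python -m vllm.entrypoints.openai.api_server \
    --model meta-llama/Llama-2-7b-chat-hf \
    --port 8000 \
    --tensor-parallel-size 1
```

Test Examples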

Generating JSON with Constraint Decoding

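```python
from vllm import LLM, SamplingParams
from vllm.sampling_params import GuidedDecodingParams

# Create the LLM instance
llm = LLM(model="meta-llama/Llama-2-7b-chat-hf")

# Define the constraint as a JSON Schema
schema = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "age": {"type": "integer"},
        "email": {"type": "string"},
    },
    "required": ["name", "age", "email"],
}

# SamplingParams has no `constraint=` argument; use guided decoding instead
# (newer releases: SamplingParams(structured_outputs=StructuredOutputsParams(json=schema)))
sampling_params = SamplingParams(
    temperature=0.7,
    max_tokens=200,
    guided_decoding=GuidedDecodingParams(json=schema),
)

# Generate
prompt = "Generate a user profile with name, age, and email"
outputs = llm.generate([prompt], sampling_params)

# Print the result
print(f"Generated: {outputs[0].outputs[0].text}")
```

Generating Code with Constraint Decoding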

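```python
from vllm import LLM, SamplingParams
from vllm.sampling_params import GuidedDecodingParams

# Create the LLM instance (Code Llama; "Llama-2-7b-code-hf" is not a published model)
llm = LLM(model="codellama/CodeLlama-7b-hf")

# Constrain the whole output to one Python function that ends with a return line.
# In a raw string, write \n and \s directly; "\\n" would mean a literal backslash.
pattern = r"def [a-z_]+\([a-z_, ]*\):\n(    [^\n]*\n)*    return [^\n]+"

sampling_params = SamplingParams(
    temperature=0.7,
    max_tokens=100,
    guided_decoding=GuidedDecodingParams(regex=pattern),
)

# Generate
prompt = "Generate a Python function to calculate the factorial of a number"
outputs = llm.generate([prompt], sampling_params)

# Print the result
print(f"Generated: {outputs[0].outputs[0].text}")
```

Common Problems and Solutions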

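Problem 1: Constraint decoding slows down inference

Solutions:

- Simplify constraints to reduce their complexity
- Constraint checking runs mostly on the CPU, so more GPU memory helps only indirectly (through larger batches); a faster structured-output backend (vLLM supports several, such as xgrammar and outlines) targets the overhead directly
- Enable constraint caching to avoid recompiling and rechecking
- Consider a cheaper constraint type, e.g. a regular expression instead of a complex JSON Schema
- Lowering the temperature reduces randomness but not the checking cost, because a mask is still computed at every step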

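Problem 2: The output does not satisfy the constraint

Solutions:

- Check that the constraint is correct and free of syntax errors
- Adjust how strict the constraint is, and avoid overly complex or contradictory constraints
- Increase max_tokens: output truncated at the token limit may be a valid prefix but still incomplete
- Try adjusting the temperature to reduce randomness
- Consider a looser constraint that allows some flexibility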

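Problem 3: Some complex structured content cannot be generated

Solutions:

- Break complex structured content into simpler parts and generate them step by step
- Use looser constraints that allow some flexibility
- Consider post-processing: generate unstructured content first, then convert it into a structured format
- Adjust model and sampling parameters to improve generation
- Try a model that is better suited to structured generation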

Keywords: vLLM, Constraint Decoding, finite-state machine, dynamic masking, structured output, high-performance inference, LLM serving

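This article is part of the Tencent Cloud self-media syndication program and is shared from the author's personal site/blog.

Originally published: 2026-02-01. For infringement concerns, contact cloudcommunity@tencent.com for removal.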
