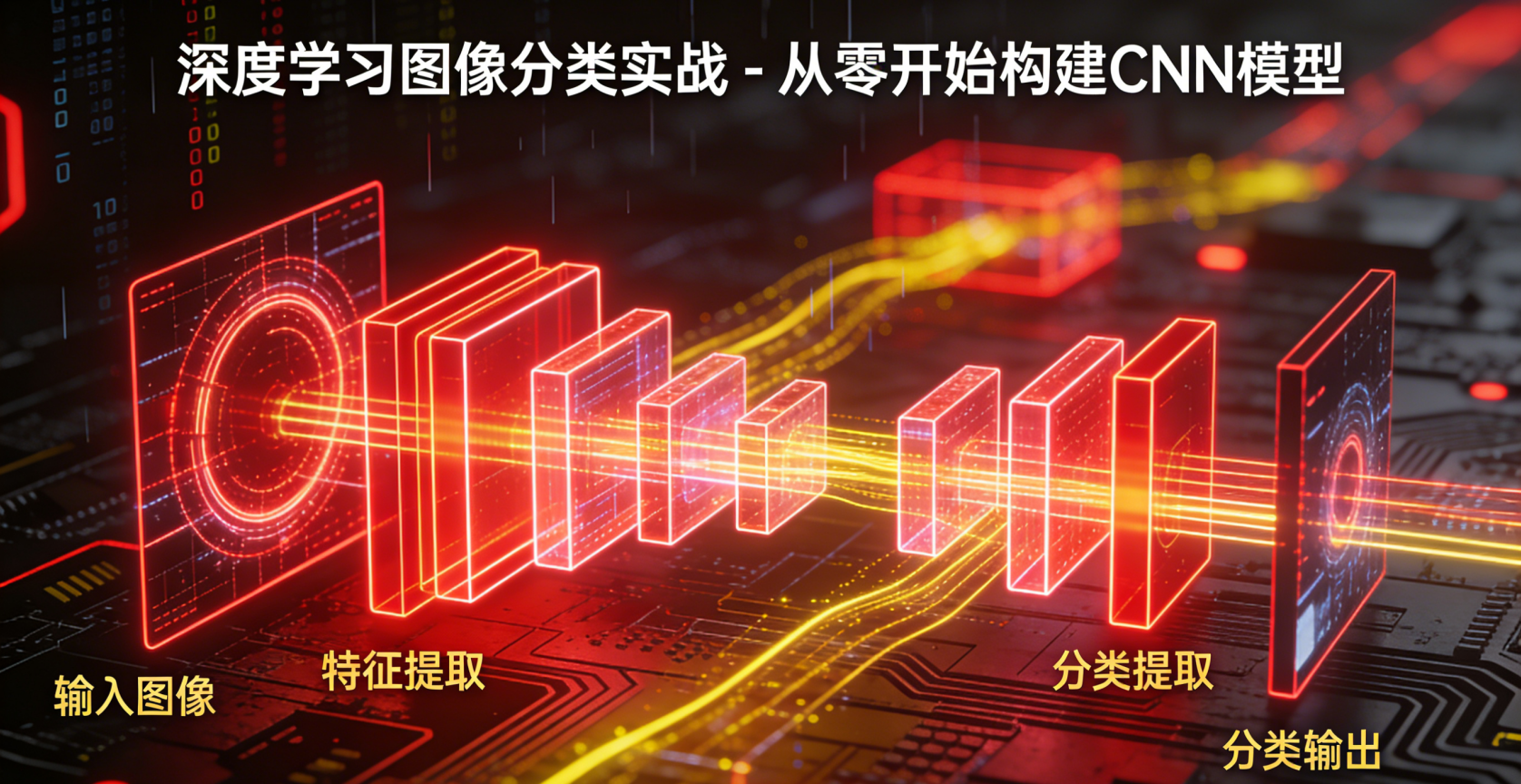

深度学习图像分类实战 - 从零开始构建CNN模型

引言

图像分类是计算机视觉领域最基础且重要的任务之一。它旨在将输入的图像分配到预定义的类别中。随着深度学习的发展,卷积神经网络(CNN)已成为图像分类的主流方法,在 ImageNet、CIFAR-10 等标准数据集上取得了超越传统方法的性能表现。

本文将带你从零开始构建一个CNN模型,用于图像分类任务。我们将深入理解CNN的原理、架构设计以及实现细节,并通过实际代码演示如何构建、训练和评估一个完整的图像分类系统。

在这里插入图片描述

CNN基础原理

卷积操作

CNN的核心是卷积操作。卷积层通过滤波器(或称为卷积核)在输入图像上滑动,执行逐元素乘法和求和运算,从而提取局部特征。这种局部连接的方式有两个主要优势:

- 参数共享:同一个滤波器在图像的不同位置共享参数,大大减少了模型参数量

- 平移不变性:特征检测不受特征在图像中位置的影响

池化操作

池化层用于降低特征图的空间维度,减少计算量,同时提供一定程度的平移不变性。最常见的池化操作是最大池化(Max Pooling),它选取感受野内的最大值作为输出。

典型CNN架构

一个典型的CNN架构通常包含以下组件:

- 输入层:接收原始图像数据

- 卷积层:提取局部特征

- 激活函数:引入非线性,常用ReLU

- 池化层:降维和增强平移不变性

- 全连接层:整合特征并输出分类结果

- 输出层:使用Softmax输出各类别概率

构建CNN图像分类器

环境准备

我们将使用PyTorch框架来实现CNN模型。首先确保安装必要的依赖:

import torch

import torch.nn as nn

import torch.optim as optim

import torchvision

import torchvision.transforms as transforms

import matplotlib.pyplot as plt

import numpy as np

from torch.utils.data import DataLoader数据加载与预处理

我们使用CIFAR-10数据集,这是一个包含10个类别的60,000张32x32彩色图像的数据集。

# 定义数据转换

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

])

# 加载训练集和测试集

train_dataset = torchvision.datasets.CIFAR10(

root='./data', train=True, download=True, transform=transform

)

test_dataset = torchvision.datasets.CIFAR10(

root='./data', train=False, download=True, transform=transform

)

# 创建数据加载器

train_loader = DataLoader(train_dataset, batch_size=32, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=32, shuffle=False)

# 类别标签

classes = ('plane', 'car', 'bird', 'cat', 'deer',

'dog', 'frog', 'horse', 'ship', 'truck')CNN模型定义

下面我们定义一个包含两个卷积层和三个全连接层的CNN模型:

class CNN(nn.Module):

def __init__(self):

super(CNN, self).__init__()

# 第一个卷积块

self.conv1 = nn.Conv2d(3, 32, kernel_size=3, padding=1)

self.relu1 = nn.ReLU()

self.pool1 = nn.MaxPool2d(2, 2)

# 第二个卷积块

self.conv2 = nn.Conv2d(32, 64, kernel_size=3, padding=1)

self.relu2 = nn.ReLU()

self.pool2 = nn.MaxPool2d(2, 2)

# 全连接层

self.fc1 = nn.Linear(64 * 8 * 8, 512)

self.relu3 = nn.ReLU()

self.fc2 = nn.Linear(512, 10)

def forward(self, x):

# 卷积层1

x = self.conv1(x)

x = self.relu1(x)

x = self.pool1(x)

# 卷积层2

x = self.conv2(x)

x = self.relu2(x)

x = self.pool2(x)

# 展平

x = x.view(-1, 64 * 8 * 8)

# 全连接层

x = self.fc1(x)

x = self.relu3(x)

x = self.fc2(x)

return x

# 实例化模型

model = CNN()模型训练

def train_model(model, train_loader, criterion, optimizer, epochs=10):

train_losses = []

train_accuracies = []

for epoch in range(epochs):

running_loss = 0.0

correct = 0

total = 0

model.train()

for i, (images, labels) in enumerate(train_loader):

# 前向传播

outputs = model(images)

loss = criterion(outputs, labels)

# 反向传播和优化

optimizer.zero_grad()

loss.backward()

optimizer.step()

# 统计

running_loss += loss.item()

_, predicted = torch.max(outputs.data, 1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

if (i + 1) % 1000 == 0:

print(f'Epoch [{epoch+1}/{epochs}], Step [{i+1}/{len(train_loader)}], Loss: {loss.item():.4f}')

epoch_loss = running_loss / len(train_loader)

epoch_acc = 100 * correct / total

train_losses.append(epoch_loss)

train_accuracies.append(epoch_acc)

print(f'Epoch [{epoch+1}/{epochs}], Loss: {epoch_loss:.4f}, Accuracy: {epoch_acc:.2f}%')

return train_losses, train_accuracies

# 设置损失函数和优化器

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=0.001)

# 训练模型

losses, accuracies = train_model(model, train_loader, criterion, optimizer, epochs=10)模型评估

def evaluate_model(model, test_loader):

model.eval()

correct = 0

total = 0

with torch.no_grad():

for images, labels in test_loader:

outputs = model(images)

_, predicted = torch.max(outputs.data, 1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

accuracy = 100 * correct / total

print(f'Test Accuracy: {accuracy:.2f}%')

return accuracy

# 评估模型

test_accuracy = evaluate_model(model, test_loader)结果可视化

def plot_results(losses, accuracies):

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(losses)

plt.title('Training Loss')

plt.xlabel('Epoch')

plt.ylabel('Loss')

plt.subplot(1, 2, 2)

plt.plot(accuracies)

plt.title('Training Accuracy')

plt.xlabel('Epoch')

plt.ylabel('Accuracy (%)')

plt.tight_layout()

plt.show()

# 绘制训练过程

plot_results(losses, accuracies)预测示例

def predict_image(model, image):

model.eval()

with torch.no_grad():

image = image.unsqueeze(0) # 添加batch维度

output = model(image)

_, predicted = torch.max(output.data, 1)

return classes[predicted.item()]

# 显示一些预测结果

def show_predictions(model, test_loader, num_samples=6):

model.eval()

images_shown = 0

plt.figure(figsize=(12, 8))

for images, labels in test_loader:

if images_shown >= num_samples:

break

for i in range(min(len(images), num_samples - images_shown)):

plt.subplot(2, 3, images_shown + 1)

# 反归一化图像

img = images[i] / 2 + 0.5

npimg = img.numpy()

plt.imshow(np.transpose(npimg, (1, 2, 0)))

# 预测

pred = predict_image(model, images[i])

true_label = classes[labels[i]]

plt.title(f'Predicted: {pred}\nTrue: {true_label}')

plt.axis('off')

images_shown += 1

plt.tight_layout()

plt.show()

# 显示预测结果

show_predictions(model, test_loader)模型优化技巧

数据增强

数据增强是提高模型泛化能力的有效方法。我们可以通过随机旋转、裁剪、翻转等操作生成更多训练样本:

# 定义包含数据增强的转换

transform_augmented = transforms.Compose([

transforms.RandomHorizontalFlip(),

transforms.RandomRotation(10),

transforms.RandomAffine(0, shear=10, scale=(0.8, 1.2)),

transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

])学习率调度

使用学习率调度器可以在训练过程中动态调整学习率:

# 定义学习率调度器

scheduler = optim.lr_scheduler.StepLR(optimizer, step_size=5, gamma=0.1)

# 在训练循环中更新学习率

for epoch in range(epochs):

train_one_epoch()

scheduler.step()正则化技术

添加Dropout层可以防止过拟合:

class CNNWithDropout(nn.Module):

def __init__(self):

super(CNNWithDropout, self).__init__()

# ... (前面的层保持不变)

self.fc1 = nn.Linear(64 * 8 * 8, 512)

self.dropout = nn.Dropout(0.5)

self.fc2 = nn.Linear(512, 10)

def forward(self, x):

# ... (前面的操作保持不变)

x = x.view(-1, 64 * 8 * 8)

x = self.fc1(x)

x = self.dropout(x) # 添加dropout

x = self.fc2(x)

return x总结与展望

本文从CNN的基本原理出发,详细介绍了如何从零构建一个图像分类模型。我们涵盖了数据预处理、模型设计、训练过程、模型评估以及优化技巧等关键环节。

通过这个实践项目,你应该能够:

- 理解CNN的基本工作原理

- 掌握使用PyTorch构建深度学习模型的流程

- 学会处理图像分类数据的技巧

- 了解模型训练和评估的方法

进一步探索

- 更深的网络架构:尝试构建更深、更复杂的网络,如ResNet、VGG等

- 迁移学习:使用预训练模型(如ResNet50)进行微调

- 目标检测:扩展到更复杂的计算机视觉任务

- 模型压缩:研究如何减小模型大小,提高推理速度

- 可解释性:探索如何理解CNN的决策过程

深度学习是一个快速发展的领域,保持学习和实践是掌握这一技术的关键。希望本文能够为你构建CNN图像分类器提供坚实的基础和实用的指导。

本文参与 腾讯云自媒体同步曝光计划,分享自作者个人站点/博客。

原始发表:2025-12-17,如有侵权请联系 cloudcommunity@tencent.com 删除

评论

登录后参与评论

推荐阅读

目录